Machine Learning with Operational Costs

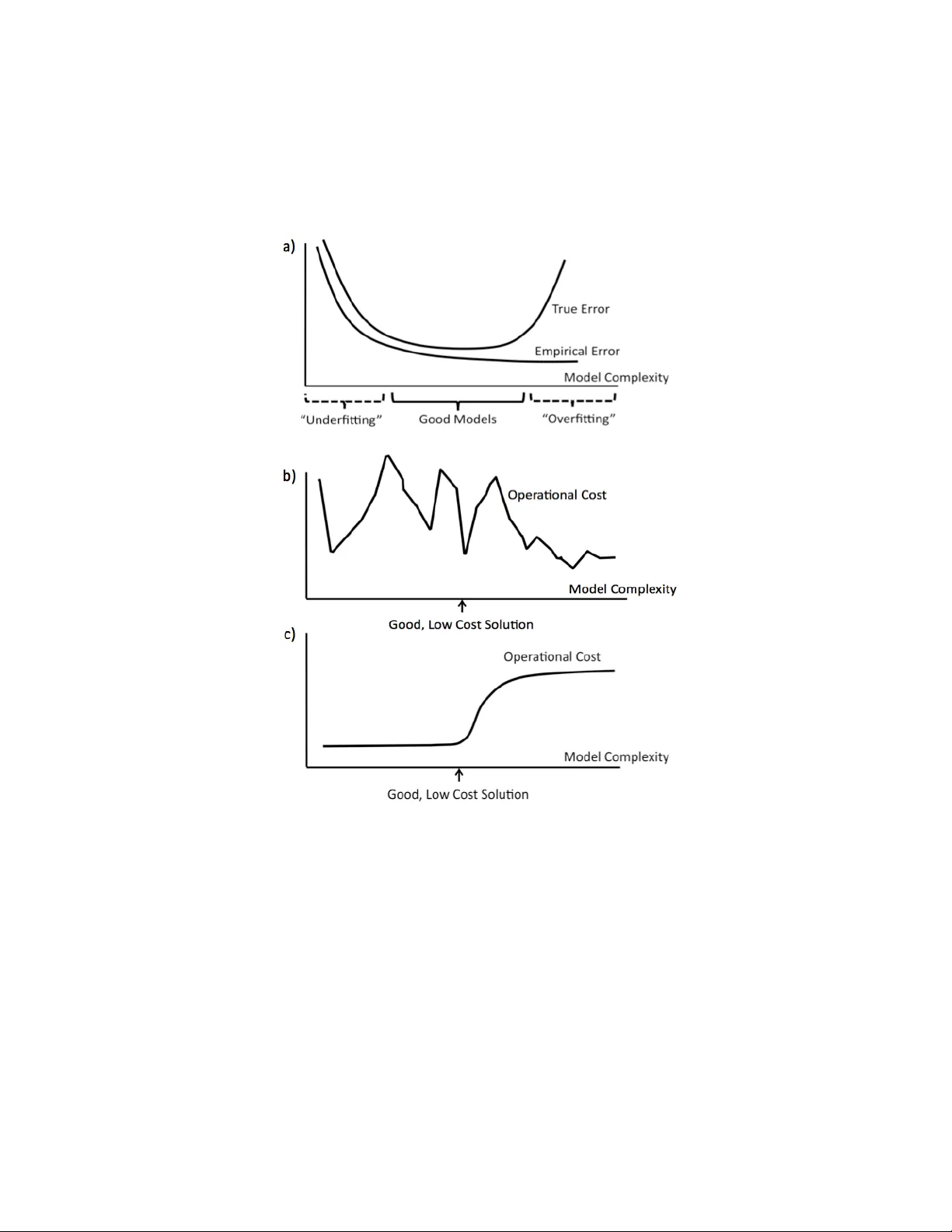

This work proposes a way to align statistical modeling with decision making. We provide a method that propagates the uncertainty in predictive modeling to the uncertainty in operational cost, where operational cost is the amount spent by the practiti…

Authors: Theja Tulabandhula, Cynthia Rudin