Exhaustive and Efficient Constraint Propagation: A Semi-Supervised Learning Perspective and Its Applications

This paper presents a novel pairwise constraint propagation approach by decomposing the challenging constraint propagation problem into a set of independent semi-supervised learning subproblems which can be solved in quadratic time using label propagation based on k-nearest neighbor graphs. Considering that this time cost is proportional to the number of all possible pairwise constraints, our approach actually provides an efficient solution for exhaustively propagating pairwise constraints throughout the entire dataset. The resulting exhaustive set of propagated pairwise constraints is further used to adjust the similarity matrix for constrained spectral clustering. Beyond traditional constraint propagation on single-source data, our approach is also extended to the more challenging problem of constraint propagation on multi-source data, where each pairwise constraint is defined over a pair of data points from different sources. This multi-source constraint propagation has an important application to cross-modal multimedia retrieval. Extensive results have shown the superior performance of our approach.

💡 Research Summary

The paper tackles the problem of propagating pairwise constraints—must‑link and cannot‑link—through an entire dataset in a way that is both exhaustive (i.e., covering all possible N(N‑1)/2 pairs) and computationally tractable. Traditional approaches either restrict themselves to two‑class problems, rely on simple heuristics that only adjust similarities among already constrained points, or formulate the propagation as a semi‑definite programming (SDP) problem whose computational cost grows as O(N⁴). Such methods become impractical for medium‑to‑large data sets and cannot be easily extended to multi‑modal scenarios.

Core Idea

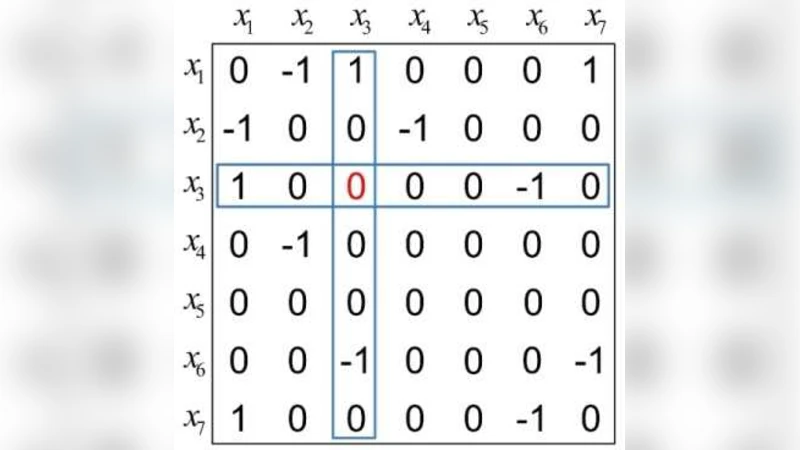

The authors observe that the constraint propagation task can be recast as a collection of independent semi‑supervised learning problems. For each data point x_j, the column Z·j of the initial constraint matrix Z (where Z_{ij}=+1 for must‑link, –1 for cannot‑link, and 0 otherwise) defines a binary labeling problem: points with positive entries belong to the “positive” class (must‑link with x_j), those with negative entries belong to the “negative” class (cannot‑link with x_j), and zeros are unlabeled. By constructing a k‑nearest‑neighbor (k‑NN) graph G with weight matrix W and normalized Laplacian L, each binary problem is expressed as the minimization of a regularized energy functional

½‖F·j – Z·j‖² + (μ/2) F·jᵀ L F·j,

where F·j is the vector of propagated constraints for column j and μ>0 balances fidelity to the initial constraints against smoothness on the graph.
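As a minimal sketch of one such subproblem, the snippet below builds a symmetric k-NN graph on toy data, forms the normalized Laplacian L = I - D^{-1/2} W D^{-1/2}, and solves the stationarity condition (I + μL) F·j = Z·j of the energy above for a single column. The helper names (`knn_graph`, `normalized_laplacian`, `solve_column`), the Gaussian edge weights, and the toy data are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def knn_graph(X, k):
    """Symmetric k-NN weight matrix with Gaussian edge weights (an assumed choice)."""
    n = X.shape[0]
    d2 = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=2)  # squared distances
    sigma2 = d2.mean() + 1e-12                                # assumed bandwidth
    W = np.zeros((n, n))
    for i in range(n):
        # Position 0 of the sort is the point itself (assumes no duplicate points).
        nbrs = np.argsort(d2[i])[1:k + 1]
        W[i, nbrs] = np.exp(-d2[i, nbrs] / sigma2)
    return np.maximum(W, W.T)  # symmetrize

def normalized_laplacian(W):
    """L = I - D^{-1/2} W D^{-1/2}; assumes every node has at least one edge."""
    d_inv_sqrt = 1.0 / np.sqrt(W.sum(axis=1))
    return np.eye(W.shape[0]) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]

def solve_column(L, z, mu):
    """Minimizer of 0.5*||f - z||^2 + (mu/2) * f^T L f, i.e. (I + mu*L) f = z."""
    return np.linalg.solve(np.eye(L.shape[0]) + mu * L, z)

# Toy data: two well-separated clusters of 10 points each.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 0.3, (10, 2)), rng.normal(3.0, 0.3, (10, 2))])
W = knn_graph(X, k=4)
L = normalized_laplacian(W)

# Constraints relative to x_0: must-link with x_1, cannot-link with x_10.
z = np.zeros(20)
z[1], z[10] = 1.0, -1.0
f = solve_column(L, z, mu=10.0)
print(np.round(f, 3))
```

Each column of Z gives one such linear system with the same matrix I + μL, which is why the subproblems are independent and can be handled in parallel.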

Summing these objectives over all columns, and adding the symmetric term for propagation along the rows, yields the global objective

‖F – Z‖_F² + (μ/2) tr(Fᵀ L F + F L Fᵀ).

Setting the derivative with respect to F to zero leads to the continuous‑time Lyapunov matrix equation

(I + μL) F + F (I + μL) = 2Z.

Lyapunov equations are well‑studied in control theory and have closed‑form solutions, but a direct solution would require O(N³) operations, which is still prohibitive for large N.
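For small N the equation can be solved directly, which gives a reference solution for checking faster approximations. The sketch below assumes L is symmetric, so that A = I + μL has an orthogonal eigendecomposition A = U diag(λ) Uᵀ; in that eigenbasis the equation decouples into scalar equations (λ_i + λ_j) F̃_ij = 2 Z̃_ij. The function name `solve_lyapunov_sym` and the toy ring graph are illustrative assumptions. The eigendecomposition is the O(N³) step.

```python
import numpy as np

def solve_lyapunov_sym(L, Z, mu):
    """Solve (I + mu*L) F + F (I + mu*L) = 2 Z for symmetric L (illustrative helper)."""
    n = L.shape[0]
    lam, U = np.linalg.eigh(np.eye(n) + mu * L)   # A = U diag(lam) U^T, O(N^3)
    Z_tilde = U.T @ (2.0 * Z) @ U                 # right-hand side in the eigenbasis
    # lam >= 1 because L is positive semidefinite, so the denominators are >= 2.
    F_tilde = Z_tilde / (lam[:, None] + lam[None, :])
    return U @ F_tilde @ U.T

# Toy example: ring graph on 6 nodes, every node has degree 2.
n = 6
W = np.zeros((n, n))
for i in range(n):
    W[i, (i + 1) % n] = W[(i + 1) % n, i] = 1.0
L = np.eye(n) - W / 2.0            # I - D^{-1/2} W D^{-1/2} with D = 2I

Z = np.zeros((n, n))
Z[0, 1] = Z[1, 0] = 1.0            # must-link (x_0, x_1)
Z[0, 3] = Z[3, 0] = -1.0           # cannot-link (x_0, x_3)

mu = 5.0
F = solve_lyapunov_sym(L, Z, mu)
A = np.eye(n) + mu * L
residual = np.abs(A @ F + F @ A - 2.0 * Z).max()
print(residual)
```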

Efficient Approximation via Label Propagation

To avoid the cubic cost, the authors adopt the label‑propagation algorithm on the k‑NN graph. The iterative update

F^{(t+1)} = α S F^{(t)} + (1‑α) Z

(where S is the row‑normalized adjacency matrix and α∈(0,1) controls the amount of diffusion) converges to the fixed point F* = (1‑α)(I – αS)⁻¹ Z, which serves as an efficient approximation to the solution of the Lyapunov equation. With a sparse k‑NN graph, each iteration costs O(kN) per column of F, or O(kN²) over all N columns. Since k is a small constant and only a few dozen iterations are needed in practice, the overall time complexity is O(N²), proportional to the number of possible pairwise constraints.
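The update can be sketched in a few lines. The snippet below is a minimal illustration on a toy ring graph (an assumption, as are the function name `propagate` and the iteration count); it runs the iteration for all columns of Z at once and compares the result with the fixed point (1 - α)(I - αS)⁻¹ Z that the iteration approaches for α in (0,1).

```python
import numpy as np

def propagate(S, Z, alpha, n_iter=200):
    """Iterate F <- alpha * S @ F + (1 - alpha) * Z, starting from F = Z."""
    F = Z.copy()
    for _ in range(n_iter):
        F = alpha * (S @ F) + (1.0 - alpha) * Z
    return F

# Toy graph: ring on 8 nodes (stands in for a k-NN graph with k = 2).
n = 8
W = np.zeros((n, n))
for i in range(n):
    W[i, (i + 1) % n] = W[(i + 1) % n, i] = 1.0
S = W / W.sum(axis=1, keepdims=True)   # row-normalized adjacency

Z = np.zeros((n, n))
Z[0, 1] = Z[1, 0] = 1.0                # must-link (x_0, x_1)
Z[0, 4] = Z[4, 0] = -1.0               # cannot-link (x_0, x_4)

alpha = 0.8
F_iter = propagate(S, Z, alpha)
# Fixed point of the iteration, computed directly for comparison.
F_closed = (1.0 - alpha) * np.linalg.solve(np.eye(n) - alpha * S, Z)
print(np.abs(F_iter - F_closed).max())
```

The dense product `S @ F` is used here only for brevity; storing S as a sparse matrix is what keeps the per-iteration cost proportional to the number of graph edges.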

Using Propagated Constraints

The resulting matrix F contains a confidence score f_{ij} for every pair (i,j). Positive values indicate a strong must‑link belief, negative values a strong cannot‑link belief, and the magnitude reflects confidence. The authors adjust the original similarity matrix W by a simple exponential weighting, e.g.,

W′_{ij} = W_{ij} · exp(β f_{ij}),

thereby, for a scaling parameter β > 0, amplifying similarities for likely must‑links and suppressing those for likely cannot‑links. Spectral clustering is then performed on the Laplacian derived from the adjusted similarity matrix, leading to markedly improved clustering quality on several benchmark image sets.
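A minimal sketch of this re-weighting, taking the exponential form above at face value with β > 0. The function names `adjust_similarity` and `spectral_embedding`, the value of β, and the toy matrices are illustrative assumptions. The second helper computes the spectral embedding from the normalized Laplacian of the adjusted matrix, on whose rows a clustering step such as k-means would then be run.

```python
import numpy as np

def adjust_similarity(W, F, beta=1.0):
    """Elementwise W * exp(beta * F), symmetrized for use in spectral clustering."""
    W_adj = W * np.exp(beta * F)
    return 0.5 * (W_adj + W_adj.T)

def spectral_embedding(W, n_clusters):
    """Eigenvectors of I - D^{-1/2} W D^{-1/2} for the smallest eigenvalues."""
    d_inv_sqrt = 1.0 / np.sqrt(W.sum(axis=1))   # assumes positive degrees
    L = np.eye(W.shape[0]) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]
    _, vecs = np.linalg.eigh(L)                 # eigenvalues in ascending order
    return vecs[:, :n_clusters]

# Toy similarities: three points that are initially equally similar.
W = np.array([[0.0, 0.5, 0.5],
              [0.5, 0.0, 0.5],
              [0.5, 0.5, 0.0]])
# Propagated scores: must-link belief for (0, 1), cannot-link belief for (0, 2).
F = np.array([[0.0, 0.8, -0.8],
              [0.8, 0.0, 0.0],
              [-0.8, 0.0, 0.0]])

W_adj = adjust_similarity(W, F, beta=1.0)
E = spectral_embedding(W_adj, n_clusters=2)
print(np.round(W_adj, 3))
```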

Extension to Multi‑Source (Cross‑Modal) Data

A major contribution is the extension of the framework to multi‑source data, where constraints are defined between items from different modalities (e.g., a text document and an image). The authors construct separate graphs G_A and G_B for the two sources, compute their Laplacians L_A and L_B, and formulate a coupled equation of Sylvester type, which generalizes the Lyapunov equation to two different coefficient matrices:

(I + μL_A) F_AB + F_AB (I + μL_B) = 2Z_AB,

where Z_AB encodes the initial cross‑modal constraints. The same label‑propagation scheme solves this equation efficiently, yielding a cross‑modal confidence matrix F_AB that can be used to re‑weight cross‑modal similarity scores. In retrieval experiments, this leads to a 10–15% boost in mean average precision over state‑of‑the‑art cross‑modal methods.
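For small problems the coupled equation can be solved directly, which is useful as a reference when checking an iterative solver. The sketch below assumes both Laplacians are symmetric and diagonalizes I + μL_A and I + μL_B separately, so the equation decouples entrywise. The function names `path_laplacian` and `solve_cross_modal` and the toy path graphs are illustrative assumptions.

```python
import numpy as np

def path_laplacian(n):
    """Normalized Laplacian of a path graph on n nodes (toy stand-in for a k-NN graph)."""
    W = np.zeros((n, n))
    for i in range(n - 1):
        W[i, i + 1] = W[i + 1, i] = 1.0
    d_inv_sqrt = 1.0 / np.sqrt(W.sum(axis=1))
    return np.eye(n) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]

def solve_cross_modal(L_A, L_B, Z_AB, mu):
    """Solve (I + mu*L_A) F + F (I + mu*L_B) = 2 Z_AB for symmetric L_A, L_B."""
    n_a, n_b = Z_AB.shape
    lam_a, U_a = np.linalg.eigh(np.eye(n_a) + mu * L_A)
    lam_b, U_b = np.linalg.eigh(np.eye(n_b) + mu * L_B)
    C = U_a.T @ (2.0 * Z_AB) @ U_b                     # right-hand side in both eigenbases
    F_tilde = C / (lam_a[:, None] + lam_b[None, :])    # (lam_a_i + lam_b_j) F_ij = C_ij
    return U_a @ F_tilde @ U_b.T

# Source A has 5 items, source B has 4 items.
L_A, L_B = path_laplacian(5), path_laplacian(4)
Z_AB = np.zeros((5, 4))
Z_AB[0, 0] = 1.0     # must-link between item 0 of A and item 0 of B
Z_AB[4, 0] = -1.0    # cannot-link between item 4 of A and item 0 of B

mu = 2.0
F_AB = solve_cross_modal(L_A, L_B, Z_AB, mu)
lhs = (np.eye(5) + mu * L_A) @ F_AB + F_AB @ (np.eye(4) + mu * L_B)
residual = np.abs(lhs - 2.0 * Z_AB).max()
print(residual)
```

Note that F_AB is rectangular (items of A by items of B), so the two sources may have different sizes.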

Experimental Validation

The authors evaluate their method on several image clustering benchmarks (e.g., COIL‑20, MNIST) and on a text‑image retrieval dataset. Metrics include clustering accuracy, normalized mutual information, and mean average precision for retrieval. Compared against SDP‑based propagation, simple label propagation, and constrained spectral clustering without exhaustive propagation, the proposed approach consistently achieves higher scores while remaining computationally feasible.

Strengths

- Exhaustiveness – All possible pairwise relationships are considered, allowing the initial constraints to influence the whole similarity structure.

- Generality – Works for multi‑class problems, supports soft constraints (|z_{ij}|≤1), and handles both must‑link and cannot‑link simultaneously.

- Scalability – Quadratic time and memory complexity, with a straightforward parallel implementation.

- Multi‑Modal Capability – The same mathematical machinery naturally extends to cross‑modal constraint propagation, opening new avenues for multimedia retrieval.

Limitations

- The quality of propagation depends heavily on the quality of the k‑NN graph; poor neighbor selection can degrade performance.

- Hyper‑parameters (μ, α, k) require careful tuning, and the paper does not provide an automated selection strategy.

- Although quadratic, the O(N²) memory requirement may still be prohibitive for datasets with millions of items.

- The similarity‑adjustment step is tailored to spectral clustering; integrating the propagated constraints into deep learning pipelines would need additional research.

Future Directions

Potential extensions include learning the graph structure jointly with constraint propagation, employing low‑rank approximations or stochastic sampling to reduce memory usage, and embedding the propagation mechanism into end‑to‑end deep networks for tasks such as semi‑supervised representation learning or cross‑modal embedding alignment.

In summary, the paper presents a principled and efficient framework that bridges semi‑supervised learning, graph‑based label propagation, and control‑theoretic Lyapunov equations to achieve exhaustive pairwise constraint propagation. Its ability to scale to large datasets and to handle multi‑modal constraints makes it a valuable contribution to constrained clustering, metric learning, and cross‑modal retrieval research.
