Oblivious RAM Simulation with Efficient Worst-Case Access Overhead

Oblivious RAM simulation is a method for achieving confidentiality and privacy in cloud computing environments. It involves obscuring the access patterns to a remote storage so that the manager of that storage cannot infer information about its contents. Existing solutions typically involve small amortized overheads for achieving this goal, but nevertheless involve potentially huge variations in access times, depending on when they occur. In this paper, we show how to de-amortize oblivious RAM simulations, so that each access takes a worst-case bounded amount of time.

💡 Research Summary

The paper addresses a critical limitation of existing Oblivious RAM (ORAM) constructions: while many schemes achieve low amortized overhead, their worst‑case access cost can be as large as Θ(n). This variability makes ORAM unsuitable for latency‑sensitive applications such as real‑time systems or multi‑user cloud services where a single request must not incur a long, unpredictable delay.

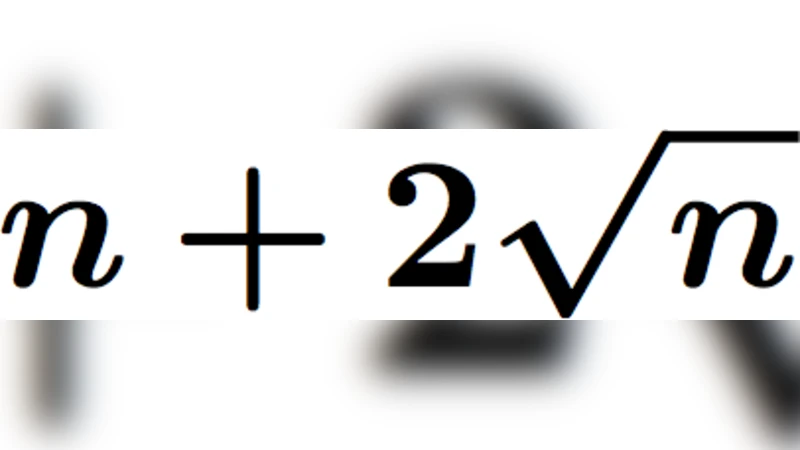

The authors present two de‑amortized ORAM simulations that guarantee a sub‑linear bound on every individual access. The first builds on the classic square‑root ORAM of Goldreich and Ostrovsky. In the original design, after every √n requests the entire table is rebuilt using a fresh random permutation, an operation that costs O(n·log²n) server accesses. The authors split this costly rebuild into √n incremental batches, each performed immediately after a client request. To orchestrate this, they maintain two buffers (B_cur and B_prev) of size √n, two tables (T_cur and T_next) each of size n+√n, and a temporary workspace W of size n+2√n. During an epoch of √n requests, T_cur serves reads/writes while W incrementally constructs T_next. At the epoch’s end the roles of T_cur and T_next are swapped, and the buffers are rotated. Consequently, every request incurs only O(√n·log²n) accesses, matching the amortized cost of the original scheme but now as a worst‑case guarantee. The client’s private memory requirement remains O(1).
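The batching argument above can be illustrated with a small cost model. This is a minimal sketch, not the authors' implementation: the names `IncrementalRebuild` and `simulate_epoch` are hypothetical, the hidden constant in O(n·log²n) is taken to be 1, and only work units are counted (no actual oblivious sorting or table swapping is performed).

```python
import math


class IncrementalRebuild:
    """Spreads a fixed amount of rebuild work evenly over one epoch."""

    def __init__(self, total_steps, epoch_len):
        self.total_steps = total_steps
        # Ceiling division: the smallest per-request quota that is
        # guaranteed to finish the rebuild before the epoch ends.
        self.quota = -(-total_steps // epoch_len)
        self.done = 0

    def step(self):
        """Run one batch after a client request; return work performed."""
        work = min(self.quota, self.total_steps - self.done)
        self.done += work
        return work

    @property
    def finished(self):
        return self.done >= self.total_steps


def simulate_epoch(n):
    """Return (per-request rebuild costs, whether the rebuild finished)."""
    epoch_len = math.isqrt(n)                # sqrt(n) requests per epoch
    log_n = max(1, math.ceil(math.log2(n)))
    total = n * log_n * log_n                # stand-in for O(n log^2 n)
    rebuild = IncrementalRebuild(total, epoch_len)
    costs = [rebuild.step() for _ in range(epoch_len)]
    return costs, rebuild.finished
```

For n = 1024 the model charges 3200 work units to each of the 32 requests in an epoch, which equals √n·log²n, and the rebuild completes exactly when the epoch ends, so the next table is ready at the swap.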

The second construction de‑amortizes the more efficient “log‑n hierarchical” ORAM introduced by Goodrich et al. That scheme uses a small cache C (size O(log n)), a stash S (size O(log n)), and a hierarchy of cuckoo hash tables T₁,…,T_L where |T_i| = 2^i·q and L = O(log n). In the original version, when a level becomes full the entire level (and possibly lower levels) is rebuilt, leading to occasional large spikes in server accesses. The authors propose to perform each level’s rebuild in small, evenly spaced chunks. Specifically, the O(|T_i|) work required to rebuild T_i is divided into |T_i|/√n (or another suitable granularity) sub‑operations, each executed after a client request. By interleaving rebuild work across all levels, the algorithm ensures that at any moment only O(log n) server accesses are performed for the request itself, while the background work proceeds in lockstep. The client now needs a workspace of size O(n^τ) for a constant τ>0, which is realistic for modern clients. The worst‑case overhead per request becomes O(log n), and the amortized overhead remains O(log n) as in the original scheme.
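To see why chunked rebuilding can keep the per-request cost logarithmic, consider the following rough cost model. It is a sketch under stated assumptions that do not come from the paper: level i holds 2^i·q items, rebuilding it costs c units per item, and each rebuild must complete within as many requests as the level has items. The function names and the parameter `c` are hypothetical.

```python
def per_request_background_work(num_levels, q, c=1):
    """Rebuild work charged to each request, summed over all levels.

    Assumed model: level i has size 2**i * q, its rebuild costs
    c * size units, and the rebuild is spread over `size` requests.
    """
    total = 0
    for i in range(1, num_levels + 1):
        size = (2 ** i) * q
        work = c * size
        period = size
        total += -(-work // period)  # ceiling division: chunk per request
    return total


def spike_without_deamortization(num_levels, q, c=1):
    """Worst single-request cost if every level is rebuilt at once."""
    return sum(c * (2 ** i) * q for i in range(1, num_levels + 1))
```

Under this model each level contributes a constant chunk per request, so L = O(log n) levels add O(log n) background work, whereas rebuilding all levels in one go costs work proportional to the total table size.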

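Both constructions preserve obliviousness: every server access either targets a truly random location (dummy accesses) or follows a pseudo‑random hash function that is refreshed each epoch or rebuild phase, so the sequence of locations touched reveals nothing about which items are requested or how often. In addition, every block is re-encrypted with probabilistic encryption whenever it is written back, so the server cannot distinguish reads from writes or recognize unchanged contents.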
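The idea of a location function that is refreshed each epoch can be sketched as follows. This is an illustration only: HMAC-SHA256 is used here as a stand-in pseudo-random function, which is an assumption and not necessarily the paper's choice, and the helper names are hypothetical.

```python
import hashlib
import hmac
import secrets


def new_epoch_key():
    """Draw a fresh secret key at the start of each epoch or rebuild."""
    return secrets.token_bytes(32)


def position(key, address, table_size):
    """Map a logical address to a table slot under the current key.

    The modulo step introduces a slight bias that a real scheme
    would need to handle; it is ignored in this sketch.
    """
    digest = hmac.new(key, address.to_bytes(8, "big"), hashlib.sha256).digest()
    return int.from_bytes(digest[:8], "big") % table_size


def dummy_position(table_size):
    """Pick a uniformly random slot for a dummy access."""
    return secrets.randbelow(table_size)
```

Within one epoch the same address always maps to the same slot, so the client can find its data. Once the key is replaced, the mapping is recomputed from scratch, which is what prevents the server from linking accesses across epochs.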

The paper’s contributions are threefold: (1) a concrete method to de‑amortize the square‑root ORAM, achieving O(√n·log²n) worst‑case cost with O(1) client memory; (2) a de‑amortized version of the log‑n hierarchical ORAM with O(log n) worst‑case cost and O(n^τ) client memory; (3) a thorough analysis showing that these bounds hold with high probability and that the schemes remain secure under standard ORAM assumptions.

These results close the gap between theoretical ORAM efficiency and practical deployment requirements, making oblivious storage viable for applications where predictable latency is essential. The techniques of incremental rebuilding and careful workspace management may also inspire de‑amortization in other cryptographic or data‑structure contexts.
