Unleashing the Power of Mobile Cloud Computing using ThinkAir

Smartphones have exploded in popularity in recent years, becoming ever more sophisticated and capable. As a result, developers worldwide are building increasingly complex applications that require ever-increasing amounts of computational power and energy. In this paper we propose ThinkAir, a framework that makes it simple for developers to migrate their smartphone applications to the cloud. ThinkAir exploits the concept of smartphone virtualization in the cloud and provides method-level computation offloading. Advancing on previous works, it focuses on the elasticity and scalability of the server side and enhances the power of mobile cloud computing by parallelizing method execution using multiple Virtual Machine (VM) images. We evaluate the system using a range of benchmarks, from simple micro-benchmarks to more complex applications. First, we show that with cloud offloading, execution time and energy consumption decrease by two orders of magnitude for the N-queens puzzle and by one order of magnitude for face detection and virus scan applications. We then show that if a task is parallelizable, the user can request more than one VM to execute it, and these VMs will be provided dynamically. In fact, by exploiting parallelization, we achieve a greater reduction in execution time and energy consumption for the previous applications. Finally, we use a memory-hungry image combiner tool to demonstrate that applications can dynamically request VMs with more computational power in order to meet their computational requirements.

💡 Research Summary

The paper introduces ThinkAir, a novel mobile cloud computing framework that enables fine‑grained, method‑level offloading of smartphone applications to the cloud while supporting dynamic, elastic allocation of virtual machines (VMs) for parallel execution. The authors begin by highlighting the growing computational and energy demands of modern mobile apps and the limitations of existing solutions such as MAUI (which provides method‑level offloading but assigns a dedicated server per app, limiting scalability) and CloneCloud (which clones the whole device OS to the cloud but relies on offline static analysis and lacks dynamic resource management).

ThinkAir’s design rests on three key assumptions: continued improvements in mobile broadband (low RTT, high bandwidth), increasing app complexity, and the availability of low‑cost, on‑demand cloud resources. Guided by these assumptions, the framework emphasizes (1) rapid adaptation to changing network and device conditions, (2) a developer‑friendly API that requires only minimal code changes, (3) performance and energy gains through cloud execution, and (4) elastic scaling of computational power, allowing users to request multiple VMs for parallelism.

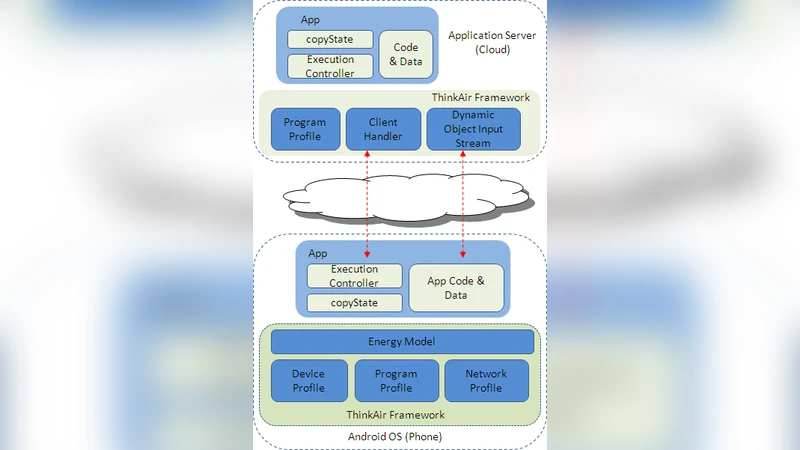

The architecture comprises three major components: the Execution Environment (including a compiler and an ExecutionController), the Application Server (hosted in a virtualized cloud), and a set of Profilers. The compiler automatically processes Java classes that extend the abstract Remoteable class and whose methods are annotated with @Remote, inserting stub code that routes those method calls through the ExecutionController. At runtime, the ExecutionController consults the Profilers, which continuously collect device status (battery level, CPU load, Wi‑Fi/cellular connectivity), network metrics, method execution history, and estimated energy costs. Based on this multi‑dimensional profile, the controller decides whether to execute a method locally or offload it and, if offloading, how many VMs to allocate.
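The annotation-driven call path can be sketched in plain Java. This is a minimal sketch under stated assumptions, not ThinkAir's generated code: only the names `@Remote`, `Remoteable`, and `ExecutionController` come from the text, while `DeviceProfile`, the thresholds in `shouldOffload`, and the `remoteCountSolutions` helper are hypothetical. The "remote" path is simulated locally so the example runs standalone.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.util.function.IntSupplier;

public class OffloadSketch {

    // Marks a method as an offloading candidate (annotation name taken from the text).
    @Retention(RetentionPolicy.RUNTIME)
    @Target(ElementType.METHOD)
    @interface Remote {}

    // Hypothetical snapshot of what the Profilers might report about the device.
    record DeviceProfile(int batteryPercent, boolean onWifi, double rttMillis) {}

    // Hypothetical controller that picks local or remote execution for each call.
    static final class ExecutionController {
        private final DeviceProfile profile;

        ExecutionController(DeviceProfile profile) {
            this.profile = profile;
        }

        // Illustrative policy only: offload on a low-latency Wi-Fi link, or when the battery is low.
        boolean shouldOffload() {
            return (profile.onWifi() && profile.rttMillis() < 50) || profile.batteryPercent() < 20;
        }

        int execute(IntSupplier local, IntSupplier remote) {
            return shouldOffload() ? remote.getAsInt() : local.getAsInt();
        }
    }

    // Base class (name taken from the text); here it only carries the controller.
    abstract static class Remoteable {
        protected final ExecutionController controller;

        Remoteable(ExecutionController controller) {
            this.controller = controller;
        }
    }

    static final class Queens extends Remoteable {
        String lastPath = "none"; // records which path ran, for demonstration only

        Queens(ExecutionController controller) {
            super(controller);
        }

        // The developer writes an ordinary method marked with @Remote; this body mimics
        // what an inserted stub might do: route the call through the controller.
        @Remote
        public int countSolutions(int n) {
            return controller.execute(
                () -> { lastPath = "local"; return place(n, 0, new int[n]); },
                () -> { lastPath = "remote"; return remoteCountSolutions(n); });
        }

        // Stand-in for shipping the call to a cloud clone; simulated locally here.
        private int remoteCountSolutions(int n) {
            return place(n, 0, new int[n]);
        }

        // Backtracking N-Queens: cols[r] is the column of the queen in row r.
        private static int place(int n, int row, int[] cols) {
            if (row == n) return 1;
            int count = 0;
            for (int c = 0; c < n; c++) {
                boolean safe = true;
                for (int r = 0; r < row; r++) {
                    if (cols[r] == c || Math.abs(cols[r] - c) == row - r) {
                        safe = false;
                        break;
                    }
                }
                if (safe) {
                    cols[row] = c;
                    count += place(n, row + 1, cols);
                }
            }
            return count;
        }
    }

    public static void main(String[] args) {
        Queens onWifi = new Queens(new ExecutionController(new DeviceProfile(80, true, 20)));
        Queens onCellular = new Queens(new ExecutionController(new DeviceProfile(80, false, 120)));
        System.out.println(onWifi.countSolutions(8) + " solutions via " + onWifi.lastPath);
        System.out.println(onCellular.countSolutions(8) + " solutions via " + onCellular.lastPath);
    }
}
```

The point of the pattern is that the method body the developer writes stays unchanged; only the routing wrapper decides where it runs, so the decision policy can evolve without touching application code.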

On the cloud side, each application is served by a ClientHandler that can resume existing VM clones, instantiate additional VMs, or distribute work across several VMs when parallelism is requested. The implementation uses Oracle VirtualBox but is designed to be portable to other hypervisors such as Xen or QEMU. The framework thus supports on‑demand scaling similar to commercial IaaS platforms, enabling users to select VM configurations (CPU cores, memory) that match their workload’s requirements.
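Selecting a VM configuration that matches a workload can be illustrated with a simple rule: choose the smallest configuration whose core count and memory satisfy the request. The catalogue entries, their sizes, and the `pickConfig` helper below are hypothetical and do not reflect ThinkAir's actual VM types or API.

```java
import java.util.Comparator;
import java.util.List;
import java.util.Optional;

public class VmSelectionSketch {

    // Hypothetical VM configuration offered by the cloud side.
    record VmConfig(String name, int cpuCores, int memoryMb) {}

    // Hypothetical catalogue of available configurations.
    static final List<VmConfig> CATALOGUE = List.of(
        new VmConfig("small", 1, 512),
        new VmConfig("medium", 1, 1024),
        new VmConfig("large", 2, 2048),
        new VmConfig("xlarge", 4, 4096));

    // Returns the smallest configuration (by memory, then cores) that meets both minimums,
    // or an empty Optional if nothing in the catalogue is large enough.
    static Optional<VmConfig> pickConfig(int minCores, int minMemoryMb) {
        return CATALOGUE.stream()
            .filter(vm -> vm.cpuCores() >= minCores && vm.memoryMb() >= minMemoryMb)
            .min(Comparator.comparingInt(VmConfig::memoryMb).thenComparingInt(VmConfig::cpuCores));
    }

    public static void main(String[] args) {
        // A memory-hungry job asking for 1.5 GB selects the "large" configuration.
        System.out.println(pickConfig(1, 1536));
        // A request no configuration can satisfy yields Optional.empty.
        System.out.println(pickConfig(8, 1024));
    }
}
```

Picking the smallest sufficient configuration keeps cloud cost down; a real policy would likely also weigh price and current availability.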

The evaluation covers micro‑benchmarks and four real‑world workloads: the N‑Queens puzzle, face detection, virus scanning, and a memory‑intensive image combiner. Results show that single‑VM offloading reduces execution time and energy consumption by up to two orders of magnitude for N‑Queens and by about one order of magnitude for face detection and virus scanning. When parallelism is exploited (2–4 VMs), further speedups of 2–3× are observed, especially for the compute‑heavy N‑Queens puzzle, with energy consumption decreasing accordingly. The image combiner, in turn, shows that a memory‑hungry application can dynamically request a VM with more computational power to meet its requirements. These findings demonstrate that dynamic VM provisioning and parallel execution can substantially amplify the benefits of mobile cloud offloading.
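Parallelizing N‑Queens across several VMs can be illustrated by splitting the search on the first queen's column: each worker explores a disjoint subset of first‑row positions, and the partial counts are summed. In this sketch a local thread pool stands in for the VM clones; the round‑robin split and the `countParallel` name are assumptions for illustration, not the paper's implementation.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

public class ParallelQueensSketch {

    // Counts completions of a board whose first `row` queens are already placed in cols[0..row-1].
    static int place(int n, int row, int[] cols) {
        if (row == n) return 1;
        int count = 0;
        for (int c = 0; c < n; c++) {
            boolean safe = true;
            for (int r = 0; r < row; r++) {
                if (cols[r] == c || Math.abs(cols[r] - c) == row - r) {
                    safe = false;
                    break;
                }
            }
            if (safe) {
                cols[row] = c;
                count += place(n, row + 1, cols);
            }
        }
        return count;
    }

    // Counts solutions whose first-row queen sits in one of the given columns.
    static int countForFirstColumns(int n, List<Integer> firstColumns) {
        int total = 0;
        for (int c : firstColumns) {
            int[] cols = new int[n];
            cols[0] = c;
            total += place(n, 1, cols);
        }
        return total;
    }

    // Splits first-row columns round-robin across `workers`, each standing in for one VM clone.
    static int countParallel(int n, int workers) throws InterruptedException, ExecutionException {
        List<List<Integer>> shares = new ArrayList<>();
        for (int w = 0; w < workers; w++) shares.add(new ArrayList<>());
        for (int c = 0; c < n; c++) shares.get(c % workers).add(c);

        ExecutorService pool = Executors.newFixedThreadPool(workers);
        try {
            List<Future<Integer>> parts = new ArrayList<>();
            for (List<Integer> share : shares) {
                parts.add(pool.submit(() -> countForFirstColumns(n, share)));
            }
            int total = 0;
            for (Future<Integer> part : parts) total += part.get(); // merge partial results
            return total;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) throws Exception {
        for (int workers = 1; workers <= 4; workers++) {
            System.out.println(workers + " worker(s): " + countParallel(10, workers) + " solutions");
        }
    }
}
```

The split is embarrassingly parallel because first‑row placements never interact, so the only coordination cost is distributing the shares and summing the results; with real VMs, that cost would include network round trips.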

The authors discuss several practical considerations. Network latency remains a critical factor; in high‑latency scenarios the offloading advantage diminishes, particularly for fine‑grained methods with small computational loads. Data transfer costs can offset gains for bandwidth‑intensive tasks. Managing multiple VMs incurs cloud‑service costs and raises security and privacy concerns that must be addressed before large‑scale deployment.
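The latency and data‑transfer trade‑off can be made concrete with a simple break‑even model. This model is our own illustration, not ThinkAir's cost model: offloading saves time only when local execution takes longer than remote execution plus one round trip plus the time to send the payload. The `shouldOffload` signature and all numbers below are hypothetical.

```java
public class OffloadCostSketch {

    // Estimated end-to-end time (ms) of a remote call: cloud execution, one round trip,
    // and payload upload at the given bandwidth. Result transfer is ignored for simplicity.
    static double remoteTimeMs(double cloudExecMs, double rttMs,
                               double payloadBytes, double bandwidthBytesPerMs) {
        return cloudExecMs + rttMs + payloadBytes / bandwidthBytesPerMs;
    }

    // Offload only if the estimated remote time beats local execution.
    static boolean shouldOffload(double localExecMs, double cloudExecMs, double rttMs,
                                 double payloadBytes, double bandwidthBytesPerMs) {
        return remoteTimeMs(cloudExecMs, rttMs, payloadBytes, bandwidthBytesPerMs) < localExecMs;
    }

    public static void main(String[] args) {
        double bandwidth = 100.0; // hypothetical link: 100 KB/s = 100 bytes per ms
        // Fine-grained method: 30 ms locally vs. 3 + 80 + 10 = 93 ms remotely, so stay local.
        System.out.println(shouldOffload(30, 3, 80, 1_000, bandwidth));
        // Heavy method: 20 s locally vs. 590 ms remotely, so offload.
        System.out.println(shouldOffload(20_000, 500, 80, 1_000, bandwidth));
        // Heavy method with a 50 MB payload: transfer alone takes 500 s, so stay local.
        System.out.println(shouldOffload(20_000, 500, 80, 50_000_000, bandwidth));
    }
}
```

An analogous comparison can be written for energy by replacing the time terms with estimated energy costs of local computation versus radio transmission.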

In conclusion, ThinkAir represents a significant step forward by combining fine‑grained offloading, real‑time profiling, and elastic, parallel cloud resources within a single framework. It offers developers a simple annotation‑based API, requires minimal code changes, and delivers measurable performance and energy improvements across a variety of applications. Future work outlined includes automated detection of parallelizable code regions, richer cost‑benefit models, support for additional mobile operating systems, and stronger security mechanisms to protect user data during offload.
