Metamodel-based importance sampling for the simulation of rare events

In the field of structural reliability, the Monte-Carlo estimator is considered as the reference probability estimator. However, it is still untractable for real engineering cases since it requires a high number of runs of the model. In order to redu…

Authors: V. Dubourg, F. Deheeger, B. Sudret

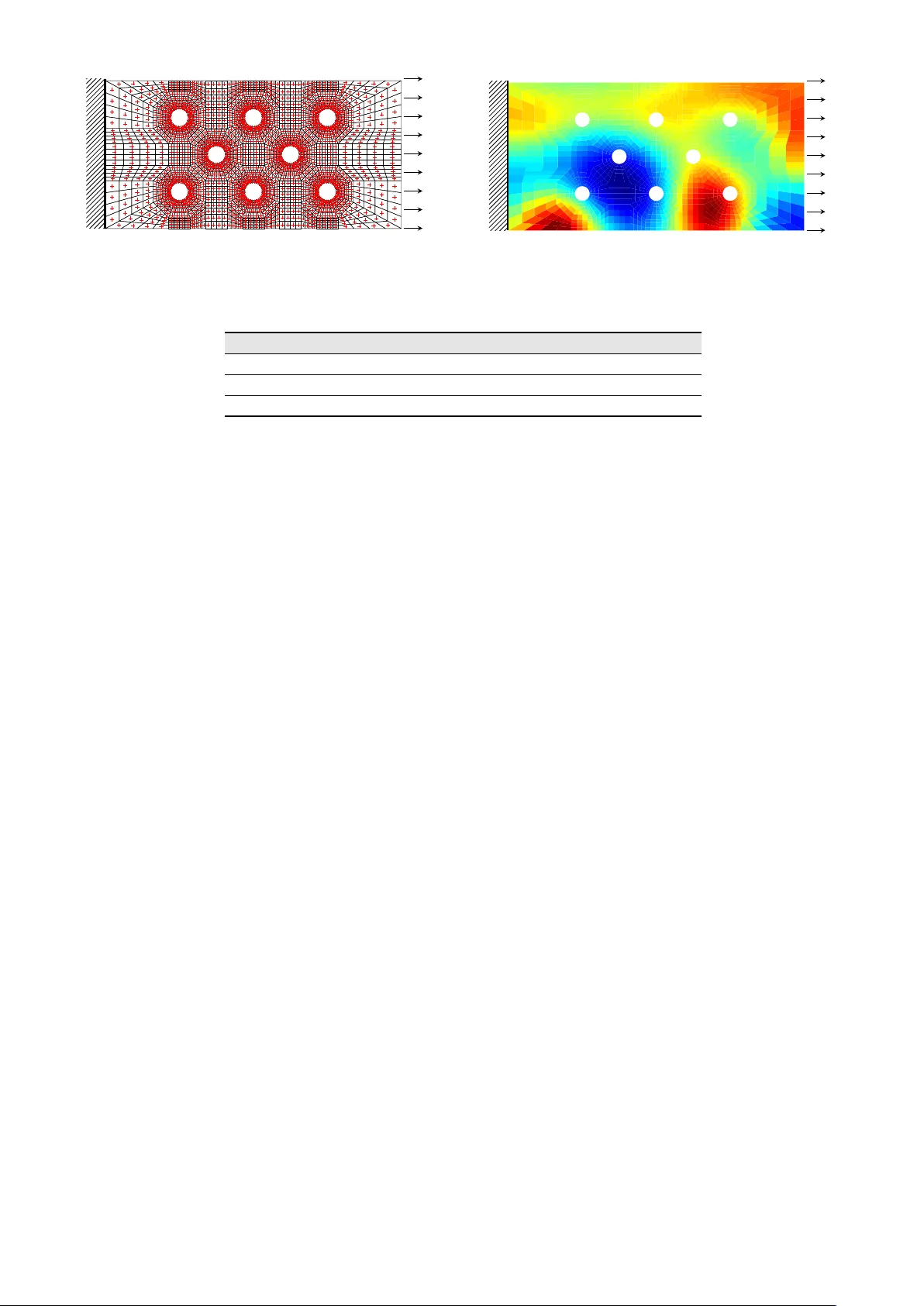

Metamodel-based importance sampling for the simulation of rare e v ents V . Dubour g 1 , 2 , F . Deheeger 2 , B. Sudret 1 , 2 1 Clermont Universit ´ e, IFMA, EA 3867, Laboratoir e de M ´ ecanique et Ing ´ enieries, BP 10448 F-63000 Clermont-F errand 2 Phimeca Engineering, Centr e d’Af fair es du Z ´ enith, 34 rue de Sarli ` eve, F-63800 Cournon d’A uver gne ABSTRA CT : In the field of structural reliability , the Monte-Carlo estimator is considered as the reference probability estimator . Howe ver , it is still untractable for real engineering cases since it requires a high number of runs of the model. In order to reduce the number of computer experiments, many other approaches known as reliability methods have been proposed. A certain approach consists in replacing the original experiment by a surrogate which is much faster to ev aluate. Nev ertheless, it is often difficult (or ev en impossible) to quantify the error made by this substitution. In this paper an alternativ e approach is dev eloped. It takes advantage of the kriging meta-modeling and importance sampling techniques. The proposed alternati ve estimator is finally applied to a finite element based structural reliability analysis. 1 INTR ODUCTION Reliability analysis consists in the assessment of the le vel of safety of a system. Giv en a probabilistic model (a random vector X with pr obability density function (PDF) f ) and a performance model (a func- tion g ), it makes use of mathematical techniques in order to estimate the system’ s le vel of safety in the form of a failure probability . A basic approach, which makes reference, is the Monte-Carlo simulation tech- nique that resorts to numerical simulation of the performance model through the probabilistic model. Failure is usually defined as the e vent F = { g ( X ) ≤ 0 } , so that the failure probability is defined as follo ws: p f = P ( F ) = P ( { g ( X ) ≤ 0 } ) = Z g ( x ) ≤ 0 f ( x ) d x (1) Introducing the failure indicator function 1 g ≤ 0 being equal to one if g ( x ) ≤ 0 and zero otherwise, the f ailure probability turns out to be the mathematical expecta- tion of this indicator function with respect to the joint probability density function f of the random vector X . The Monte-Carlo estimator is then deri ved from this con venient definition. It reads: b p f MC = b E f 1 g ≤ 0 ( X ) = 1 N N X k =1 1 g ≤ 0 x ( k ) (2) where n x (1) , . . . , x ( N ) o , N ∈ N ∗ is a set of samples of the random vector X . According to the central limit theorem, this estimator is asymptotically unbi- ased and normally distributed with v ariance: σ 2 b p f MC = V b p f MC = p f 1 − p f N (3) In practice, this variance is compared to the unbiased estimate of the failure probability in order to decide whether it is accurate enough or not. The coefficient of variation is defined as δ b p f MC = σ b p f MC / b p f MC . Giv en N , this coefficient dramatically increases as soon as the failure ev ent is too rare ( p f → 0 ) and prov es that the Monte-Carlo estimation technique intractable for real world engineering problems for which the perfor - mance function in volv es the output of an expensi ve- to-e valuate black box function – e.g . a finite element code. Note that this remark is also true for too fre- quent e vents ( p f → 1 ) as the coef ficient of v ariation of 1 − b p f MC exhibits the same property . In order to reduce the number of simulation runs, a lar ge set of other approaches known as reliability methods ha ve been proposed. They might be classi- fied as follo ws. A first approach consists in replacing the orig- inal experiment by a surr ogate which is much faster to ev aluate. Among such approaches, there are the well-kno wn first and second order re- liability methods ( e.g . Ditle vsen and Madsen, 1996; Lemaire, 2009), quadratic response surfaces (Bucher and Bourgund, 1990) and the more re- cent meta-models such as support vector machines (Hurtado, 2004; Deheeger and Lemaire, 2007), neu- ral networks (Papadrakakis and Lagaros, 2002) and kriging (Kaymaz, 2005; Bichon et al., 2008). Nev er- theless, it is often difficult or e ven impossible to quan- tify the error made by such a substitution. The other approaches are the so-called variance r e- duction techniques . In essence, these techniques aims at fa voring the Monte-Carlo simulation of the fail- ure e vent F in order to reduce the estimation v ari- ance. These approaches are more rob ust because they do not rely on any assumption re garding the func- tional relationship g , though they are still too compu- tationally demanding to be implemented for industrial cases. For an extended re vie w of these techniques, the reader is referred to the book by Rubinstein and Kroese (2008). In this paper an hybrid approach is de veloped. It is based on both mar gin meta-models (defined here- after) and the importance sampling technique. It is then applied to an academic structural reliability prob- lem in volving a linear finite-element model and a two- dimensional random field. 2 AD APTIVE PR OB ABILISTIC CLASSIFICA TION USING MARGIN MET A-MODELS A meta-model means to a model what the model it- self means to the real-world. Loosely speaking, it is the model of the model . As opposed to the model, its construction does not rely on any physical assump- tion about the phenomenon of interest but rather on statistical considerations about the coherence of some scattered observ ations that result from a set of e xperi- ments. This set is usually referred to as a design of ex- periments (DOE): X = { x 1 , . . . , x m } . It should be care- fully selected in order to retriev e the largest amount of statistical information about the underlying functional relationship ov er the input space D x . Here, we attempt to build a meta-model for the failure indicator func- tion 1 g ≤ 0 . In the statistical learning theory (V apnik, 1995) this is referred to as a classification problem. Hereafter , we define a mar gin meta-model as a meta-model that is able to gi ve a pr obabilistic pr edic- tion of the response quantity of interest whose spread ( i.e. variance) depends on the lack of information brought by the DOE. It is thus reducible by bring- ing more observ ations into the DOE. In other words, this is an epistemic (reducible) source of uncertainty . T o the authors’ kno wledge, there exist only two fam- ilies of such margin meta-models: the probabilistic support vector machines P -SVM by Platt (1999) and Gaussian-Process- (or kriging-) based classification (Santner et al., 2003). The present paper mak es use of the kriging meta-model, but the ov erall concept could easily be extended to P -SVM. The theoretical aspects of the kriging prediction are briefly introduced in the follo wing subsection before it is applied to the classi- fication problem of interest. 2.1 Gaussian-pr ocess based pr ediction The Gaussian-Process based prediction (also known as kriging ) theory is detailed in the book by Santner et al. (2003). In essence, kriging assumes that the per- formance function g is a sample path of an underlying Gaussian stochastic process G that would read as fol- lo ws: G ( x ) = f ( x ) T β + Z ( x ) (4) where: • f ( x ) T β denotes the mean of the GP which cor- responds to a classical linear regression model on a given functional basis { f i , i = 1 , . . . , p } ∈ L 2 ( D x , R ) ; • Z ( x ) denotes the stochastic part of the GP which is modelled as a zero mean, constant variance σ 2 G , stationary Gaussian process with a gi ven sym- metric positiv e definite autocorrelation model. It is fully defined by its autocov ariance function which reads ( x , x 0 ∈ D x × D x ): C GG x , x 0 = σ 2 G R x − x 0 , ` (5) where ` is a vector of parameters defining R . The most widely used class of autocorrelation func- tions is the anisotropic squared exponential model: R x − x 0 , ` = exp n X k =1 − x k − x 0 k ` k 2 ! (6) The best linear unbiased estimation of G at point x is shown (Santner et al., 2003; Se verini, 2005, Chap. 8) to be the follo wing Gaussian random variate: b G ( x ) = G ( x ) |{ g ( x 1 ) , . . . , g ( x m ) } ∼ N µ b G ( x ) , σ b G ( x ) (7) with moments: µ b G ( x ) = f ( x ) T b β + r ( x ) T R − 1 Y − F b β (8) σ 2 b G ( x ) = σ 2 G 1 − f ( x ) r ( x ) T 0 F T F R − 1 f ( x ) r ( x ) ! (9) where we hav e introduced r , R and F such that: r i ( x ) = R ( | x − x i | , ` ) , i = 1 , . . . , m (10) R ij = R x i − x j , ` , i = 1 , . . . , m, j = 1 , . . . , m (11) F ij = f i x j , i = 1 , . . . , p, j = 1 , . . . , m (12) At this stage it can easily be prov en that µ b G ( x i ) = g ( x i ) and σ b G ( x i ) = 0 for i = 1 , . . . , m , thus meaning the kriging surrogate is an e xact interpolator . Gi ven a choice for the regression and correlation models, the optimal set of parameters β ∗ , ` ∗ and σ 2 ∗ G can then be inferred using the maximum lik eli- hood principle applied to the unique sparse obser- v ation of the GP sample path grouped in the vector y = h g ( x i ) , i = 1 , . . . , m i . This inference problem turns into an optimization problem that can be solved an- alytically for both β ∗ and σ 2 ∗ G assuming ` ∗ is kno wn. Thus, the problem is solved in two steps: the max- imum likelihood estimation of ` ∗ is first solved by a global optimization algorithm which in turns gi ves the optimal v alues of β ∗ and σ 2 ∗ G . 2.2 Pr obabilistic classification function A surrogate-based reliability analysis simply consists in replacing the performance function g by its meta- model µ b G . Again, this meta-model may be a first- or second-order T aylor expansion of the limit-state surface g = 0 at a so-called design point (Ditle vsen and Madsen, 1996, FORM/SORM), a polynomial re- sponse surface (Bucher and Bourgund, 1990), a neu- ral networks based prediction (Papadrakakis and La- garos, 2002), or a kriging based prediction (Bichon et al., 2008). This surrog ate may not be fully accurate and it is difficult or e ven impossible to quantify the error made by substitution on the final failure proba- bility of interest. In this paper as in the work by Picheny (2009), it is proposed to use the complete probabilistic pre- diction provided by the kriging meta-model instead of the sole mean prediction ( e.g . as in Bichon et al., 2008). Indeed, since the probabilistic distribution of the prediction is fully characterized, the probability that the prediction is neg ativ e may be e xpressed in closed-form and reads as follo ws: P h b G ( x ) ≤ 0 i = Φ 0 − µ b G ( x ) σ b G ( x ) ! (13) Note that this latter quantity is not the sought failure probability p f , this is simply the probability that the prediction b G at some deterministic vector x is nega- ti ve. Picheny (2009) proposes then to use this proba- bilistic classification function as a surrogate for the real failure indicator function, and uses crude Monte- Carlo simulation to estimate the f ailure probability . A dif ferent use is proposed in the next section. Figure 1 illustrates the concepts introduced in this section on a basic structural reliability example from Der Kiureghian and Dakessian (1998). This exam- ple in volv es two independent standard Gaussian ran- dom v ariates X 1 and X 2 , and the performance function reads: g ( x 1 , x 2 ) = b − x 2 − κ ( x 1 − e ) 2 (14) where b = 5 , κ = 0 . 5 and e = 0 . 1 . In subfigure 1(a), the limit-state function g ( x ) = 0 is represented by the black dash-dot line. The red minusses ( g ≤ 0 ) and blue plusses ( g > 0 ) represent the initial DOE from which the kriging meta-model is built. The mean prediction’ s limit-state µ b G ( x ) = 0 is represented by the dashed black line. It can be seen that the meta- model is not fully accurate since the green triangle x 0 (among others) is misclassified. Indeed, x 0 is safe ac- cording to the real performance function g , but it f ails according to the mean prediction of the meta-model µ b G . The probabilistic classification function makes a smoother decision possible: x 0 fails with a 60% prob- ability w .r .t. the epistemic uncertainty in the random prediction b G ( x 0 ) ∼ N ( µ b G ( x 0 ) , σ b G ( x 0 )) . Note also that the red and blue points in the DOE fails with prob- abilities 100% and 0% (safe) respectiv ely due to the interpolating property of the kriging metamodel. Sub- figure 1(a) is the one-dimensional illustration of the three classification strate gies for the v ector x 0 . The deterministic decision function is an heaviside func- tion centered in zero, and the probabilistic classifica- tion is a smoother Gaussian cumulativ e density func- tion. 2.3 Refinement of the pr obabilistic classification function In this subsection, a strategy is proposed in order to refine the probabilistic classification function so that it tends to wards the real indicator function 1 g ≤ 0 . First, let the mar gin of uncertainty M be defined as follo ws: M = n x : − k σ b G ( x ) ≤ b G ( x ) ≤ + k σ b G ( x ) o (15) where k might be chosen as k = Φ − 1 (97 . 5%) = 1 . 96 meaning a 95% confidence interval onto the predic- tion of the limit-state surface is chosen. Such a 95% confidence margin is illustrated in Subfigure 1(a) as the area bounded belo w by the blue line (2.5% confi- dence le vel) and abov e by the red line (97.5% confi- dence le vel). The points that are located in this margin hav e an uncertain sign, the others being either failed or safe with a confidence le vel greater than 97.5%. W e also define the probability that a point x ∈ D x belongs to this margin of uncertainty . Due to the Gaussian nature of the prediction, this probability may also be expressed in closed-form and reads as follo ws: P [ x ∈ M ] = Φ k σ b G ( x ) − µ b G ( x ) σ b G ( x ) ! − Φ − k σ b G ( x ) − µ b G ( x ) σ b G ( x ) ! (16) Then, finding the point that maximizes this quan- tity on the support of the PDF of X will finally bring the best improvement point in the DOE. Starting with this statement, many authors in the kriging literature 8 0 8 x 1 8 0 8 x 2 x 0 g ( x ) = 0 [ c G ( x ) 0 ] = 2 . 5 % µ c G ( x ) = 0 [ c G ( x ) 0 ] = 9 7 . 5 % [ c G ( x ) 0 ] = 9 7 . 5 % 0.0 0.2 0.4 0.6 0.8 1.0 [ c G ( x ) 0 ] (a) Limit-states and probability contours. − 1 . 9 6 σ c G ( x 0 ) g ( x 0 ) µ c G ( x 0 ) + 1 . 9 6 σ c G ( x 0 ) g 0 ( x 0 ) = 0 . 0 0 [ c G ( x 0 ) 0 ] = 0 . 6 0 µ c G 0 ( x 0 ) = 1 . 0 0 0 . 9 7 5 0 . 0 2 5 0 Deterministic Probabilistic (b) Classification giv en x = x 0 . Figure 1: Comparison of the three classification strategies on a two-dimensional example from Der Kiureghian and Dakessian (1998). decide to use global optimization algorithms in or - der to find the best improvement point. For instance, Bichon et al. (2008) use a dif ferent criterion named the e xpected feasibility function , and Lee and Jung (2008) use the constraint boundary sampling crite- rion. Note also that an equiv alent concept is used by Hurtado (2004); Deheeger and Lemaire (2007); De- heeger (2008); Bourinet et al. (2010) for SVM. In this paper , as in Dubourg et al. (2011), a slightly dif ferent strategy is proposed in order to add se veral points in the DOE. The proposed criterion P [ x ∈ M ] is multiplied by a weighting density function w so that C ( x ) = P ( x ∈ M ) w ( x ) (17) can itself be reg arded as a PDF up to an unknown b ut finite normalizing constant. The weighting density w can either be chosen as the original PDF of X , or , as it is proposed here, the uniform PDF on a sufficiently large confidence region of the original PDF . Such a confidence region might be difficult to define for any gi ven PDF , but as it is usually done in structural relia- bility (Ditle vsen and Madsen, 1996), the original ran- dom v ector X can be transformed into a probabilisti- cally equiv alent standard Gaussian random vector U for which the confidence region is simply an hyper- sphere with radius β 0 . The reader is referred to Lebrun and Dutfoy (2009) for a recent discussion on such mappings U = T ( X ) . In that gi ven space, β 0 can be easily selected as e.g. β 0 = 8 which corresponds to the maximal generalized reliability index (Ditlevsen and Madsen, 1996) that can be justified numerically , and the sought uniform PDF is simply defined in terms of the follo wing indicator function: w ( u ) ∝ 1 √ u T u ≤ β 0 ( u ) (18) Marko v-chain Monte-Carlo simulation techniques ( e.g . the slice sampling technique proposed by Neal, 2003) might be used in order to generate N (say N = 10 4 ) samples from the pseudo-PDF C . These sam- ples are expected to be highly concentrated around the maxima of the criterion P ( x ∈ M ) , and thus in the vicinity of the predicted limit-state µ b G ( x ) = 0 where the sign of b G is the most uncertain. This large candi- date population can then be reduced to a smaller one that condensate its statistical properties by means of a K -means clustering algorithm (MacQueen, 1967). The K ( K being gi ven) cluster centers uniformly span the margin M and may be added to the DOE in order to enrich the prediction of the performance function in the vicinity of the limit-state and thus reduce the margin of uncertainty . 3 MET A-MODEL-BASED IMPOR T ANCE SAMPLING Picheny (2009) proposes to use the probabilistic clas- sification function as a surrogate for the real indica- tor function, so that the failure probability is rewritten from its definition in Eq. (1) as follo ws: p f ε = Z P h b G ( x ) ≤ 0 i f ( x ) d x ≡ E f h P h b G ( X ) ≤ 0 ii (19) It is argued here that this latter quantity does not equal the failure probability of interest because it sums the aleatory uncertainty in the random vector X and the epistemic uncertainty in the prediction b G . This is the reason why p f ε will hereafter be referred to as the augmented failur e pr obability . As a matter of fact, e ven if the epistemic uncertainty in the prediction can be reduced ( e.g . by enriching the DOE as proposed in section 2.3), it is impossible to quantify the contrib u- tion of each source of uncertainty a posteriori . This remark motiv ates the approach introduced in this section where the probabilistic classification function is used in conjunction with the importance sampling technique in order to build a ne w estimator of the failure probability . 3.1 Importance sampling According to Rubinstein and Kroese (2008), impor - tance sampling (IS) is the most ef ficient variance re- duction technique. This technique consists in comput- ing the mathematical expectation of the failure indica- tor function according to a biased PDF which fav ors the failure e vent of interest. This PDF is called the instrumental density . Gi ven an instrumental density h , such that h dom- inates 1 g ≤ 0 f , the definition of the failure probability of Eq. (1) may be re written as follows: p f = Z g ( x ) ≤ 0 f ( x ) h ( x ) h ( x ) d x ≡ E h 1 g ≤ 0 ( X ) f ( X ) h ( X ) (20) where the subscript h on the expectation operator is added to recall that X is therefore distributed accord- ing to h . Note that the domination requirement of h ov er 1 g ≤ 0 f simply means that: ∀ x ∈ D x , h ( x ) = 0 ⇒ 1 g ≤ 0 ( x ) f ( x ) = 0 (21) so that the so-called likelihood ratio ` ( x ) = f ( x ) /h ( x ) is finite for any gi ven x ∈ D x . The latter definition of the f ailure probability easily leads to the establishment of the importance sampling estimator which reads as follo ws: b p f IS = b E f 1 g ≤ 0 ( X ) = 1 N N X k =1 1 g ≤ 0 x ( k ) f ( x ( k ) ) h ( x ( k ) ) (22) where n x (1) , . . . , x ( N ) o , N ∈ N ∗ is a set of samples from h . According to the central limit theorem, this estima- tion is unbiased and its quality may be measured by means of its v ariance of estimation which reads: V ar b p f IS = 1 N − 1 1 N N X k =1 1 g ≤ 0 ( x ( k ) ) f ( x ( k ) ) h ( x ( k ) ) ! 2 − b p 2 f IS (23) Rubinstein and Kroese (2008) sho w that this vari- ance is zero (optimality of the IS estimator) when the instrumental PDF is chosen as: h ∗ ( x ) = 1 g ≤ 0 ( x ) f ( x ) R 1 g ≤ 0 ( x ) f ( x ) d x = 1 g ≤ 0 ( x ) f ( x ) p f (24) Ho wev er this instrumental PDF is not implementable in practice because it in volves the sought failure prob- ability in its denominator . There e xists infinitely many PDF h that allows to significantly reduce the v ariance of estimation though. 3.2 A meta-model-based appr oximation of the optimal instrumental PDF Dif ferent strategies have been proposed in order to build quasi-optimal instrumental PDF suited for spe- cific estimation problems. For instance, Melchers (1989) uses a standard normal PDF centered onto the most pr obable failur e point (MPFP) in the space of the independent standard Gaussian random v ariables U = T ( X ) in order to estimate a failure probability . Although this approach may lose accuracy as soon as the MPFP is not unique. Cannamela et al. (2008) use a kriging prediction of the performance function g in order to build an instrumental PDF suited for the es- timation of extreme quantiles of the random v ariate G = g ( X ) . Here, it is proposed to use the probabilistic classi- fication function in Eq. (13) as a surrogate for the real indicator function in the optimal instrumental PDF in Eq. (24). The proposed quasi-optimal PDF thus reads as follo ws: c h ∗ ( x ) = P h b G ( x ) ≤ 0 i f ( x ) R P h b G ( x ) ≤ 0 i f ( x ) d x ≡ 1 b G ≤ 0 ( x ) f ( x ) p f ε (25) where p f ε is the augmented failure probability which has been already defined in Eq. (19). This quasi- optimal instrumental PDF is compared to its impracti- cal optimal counterpart in Figure 2 using the e xample of Section 2.2. 3.3 The meta-model-based importance sampling estimator Choosing the proposed quasi-optimal instrumental PDF in Eq. (25) in the importance sampling defini- tion of the failure probability in Eq. (20) leads to the follo wing new definition: p f = Z 1 g ≤ 0 ( x ) f ( x ) c h ∗ ( x ) c h ∗ ( x ) d x (26) = p f ε Z 1 g ≤ 0 ( x ) P h b G ( x ) ≤ 0 i c h ∗ ( x ) d x (27) ≡ p f ε α corr (28) where we hav e introduced: α corr ≡ E c h ∗ 1 g ≤ 0 ( X ) P h b G ( X ) ≤ 0 i (29) This means that the failure probability is no w defined as the product between the augmented failure proba- bility p f ε and a correction factor α corr . This correction factor is defined as the e xpected ratio between the real x 1 x 2 h ∗ ( x ) g ( x ) = 0 0.00 0.02 0.04 0.06 0.08 0.10 0.12 0.14 f ( x ) (a) The optimal instrumental PDF . x 1 x 2 d h ∗ ( x ) g ( x ) = 0 0.00 0.02 0.04 0.06 0.08 0.10 0.12 0.14 f ( x ) (b) A quasi-optimal PDF . Figure 2: Comparison of the instrumental PDF on the two-dimensional example from Der Kiure ghian and Dakessian (1998). indicator function 1 g ≤ 0 and the probabilistic classifi- cation function P [ b G ( • ) ≤ 0] . Thus, if the kriging pre- diction is fully accurate, the correction factor is equal to unity and the failure probability is identical to the augmented failure probability (optimality of the pro- posed estimator). On the other hand, in the more gen- eral case where the kriging prediction is not fully ac- curate, the correction factor modifies the augmented failure probability accounting for the epistemic uncer- tainty in the prediction. The two terms of the latter definition of the failure probability may now be estimated using Monte-Carlo simulation: b p f ε = 1 N ε N ε X k =1 P h b G ( x ( k ) ) ≤ 0 i (30) b α corr = 1 N corr N corr X k =1 1 g ≤ 0 ( x ( k ) ) P h b G ( x ( k ) ) ≤ 0 i (31) where the first N ε -sample is generated from the orig- inal PDF f , and the second N corr -sample is generated from the quasi-optimal instrumental PDF c h ∗ . Accord- ing to the central limit theorem, these two estimates are unbiased and normally distrib uted. Their respec- ti ve v ariance of estimation denoted by σ 2 ε and σ 2 corr are not gi ven here b ut they might be easily deri ved. T o generate samples from c h ∗ , it is proposed to use a Markov chain Monte-Carlo simulation tech- nique which is applicable to a broad class of impr oper PDF for which the normalizing constant is not known and thus for the instrumental PDF of interest c h ∗ ( x ) ∝ P [ b G ( x ) ≤ 0] f ( x ) . The work presented here makes use of the slice sampling technique (Neal, 2003). Finally , the final estimator of the f ailure probability simply reads as follo ws: b p f metaIS = b p f ε b α corr (32) The calculation of the coef ficient of variation of the fi- nal estimator b p f metaIS will be detailed in a forthcoming paper . 4 RELIABILITY ANAL YSIS OF AN 8-HOLE PLA TE This structural reliability example is inspired from Deheeger and Lemaire (2007). It concerns the relia- bility analysis of a 200 × 100 mm 8-hole plate illus- trated in Figure 3. The diameter of the holes ∅ is set equal to 10 mm. Its left end is clamped both horizon- tally and vertically while its right end is subjected to a distributed line load with magnitude q = 100 MP a. Plain stress is assumed and the material is supposed to hav e a linear elastic behavior . The Poisson coeffi- cient ν is set equal to 0.3. Due to the boundary con- ditions the Poisson ef fect is not the same on all the plate though. The Y oung’ s modulus is modeled by an homogeneous lognormal random field with a mean µ E = 200 000 MPa, a coef ficient of v ariation δ E = 25% and assuming an isotropic squared exponential auto- correlation function with a 20 mm correlation length ` . The two-dimensional random field is represented by a translated Karhunen-Loe ve expansion discretized by means of a wa velet-Galerkin strategy proposed by Phoon et al. (2002). The stochastic model in volves 20 independent standard Gaussian random v ariates grouped in the vector X to simulate the random field. The mechanical model is solved with Code Aster (eDF , R&D Division, 2006) in order to retrie ve the maximal V on Mises stress in the plate P . The perfor- mance function is then defined as follo ws: g ( x ) = σ 0 − max p ∈P { σ V on Mises ( p ) } (33) with respect to an arbitrary threshold σ 0 = 450 MPa. Clamped q = 100 MPa (a) Mesh, boundary conditions and loads. Clamped q = 100 MPa (b) One sample path of the Y oung modulus random field. Figure 3: Illustration of the 8-hole plate example from Dehee ger and Lemaire (2007). DOE MPFP Simulations P f estimate C. o. V . Subset (ref .) - - 25 000 1.70 × 10 − 5 15% multi-FORM - 1 168 - 0.65 × 10 − 5 - meta-IS 1 000 - 250 1.41 × 10 − 5 ¡ 10% T able 1: Reliability analyses results for the 8-hole plate example from Dehee ger and Lemaire (2007). The proposed meta-model-based importance sam- pling procedure is applied to this structural reliability example. First an initial kriging predictor is built for the performance function g using a 100-point DOE. These 100 points are uniformally generated within the β 0 radius hypersphere. Based on this initial pre- diction, the DOE refinement procedure introduced in Section 2.3 is used. K = 100 new points are added at each refinement iteration. The refinement procedure is stopped after 1 000 estimations of the performance function. This may seem arbitrary but it is difficult to provide another stopping criterion for the refinement procedure – this needs further in vestigation. Then, the probabilistic classification function is defined with re- spect to the latest (finest) kriging prediction and it is used to compute the proposed estimator of the failure probability . The results are provided in T able 1. They are com- pared to a reference solution obtained by subset sim- ulation (Au and Beck, 2001), and the multi-FORM estimator from Der Kiure ghian and Dakessian (1998) using FER UM v4.0 (Bourinet et al., 2009) implemen- tations of these algorithms. FER UM is a Matlab tool- box for reliability analysis published under the Gen- eral Public License. The estimate of the augmented failure probability is equal to b p f ε = 2 . 85 × 10 − 5 , and the correction f actor is equal to b α corr = 0 . 412 . It means that the kriging predictor is rather accurate in that case. The probabilistic classification function is very close to its deterministic counterpart – and so is the instru- mental importance sampling density b h . 5 CONCLUSION Starting from the double premise that a surrogate- based reliability analyses does not permit to quantify the substitution error, and that the existing v ariance reduction techniques remain time-consuming when the performance function in volv es the output of an expensi ve-to-e v aluate black box function, an hybrid strategy has been proposed. First, the probabilistic classification function was introduced, this function allo ws a smoother classification than its determinis- tic counterpart accounting for the epistemic uncer - tainty in the kriging prediction. Using this smoother classification function within an importance sampling frame work then allo wed to deriv e a meta-model- based importance sampling estimator . This estimator con ver ges to wards the theoretically impractical opti- mal importance sampling estimator and may provide a significant reduction of the estimation variance as illustrated in the example. In the present paper , the refinement procedure that leads to the probabilistic classification function is stopped arbitrarily . W ork is in progress in order to es- tablish the best trade-of f between the size of the DOE and the number of simulations required to estimate the correction factor α corr . A CKNO WLEDGEMENTS The first author is funded by a CIFRE grant from Phimeca Engineering S.A. subsidized by the ANR T (con vention number 706/2008). The financial support from the ANR through the KidPocket project is also gratefully ackno wledged. REFERENCES Au, S. & J. Beck (2001). Estimation of small failure probabilities in high dimensions by subset simula- tion. Pr ob . Eng. Mec h. 16 (4), 263–277. Bichon, B., M. Eldred, L. Swiler , S. Mahade v an, & J. McFarland (2008). Ef ficient global reliability analysis for nonlinear implicit performance func- tions. AIAA Journal 46 (10), 2459–2468. Bourinet, J.-M., F . Deheeger , & M. Lemaire (2010). Assessing small failure probabilities by combined subset simulation and support vector machines. Submitted to Structural Safety . Bourinet, J.-M., C. Mattrand, & V . Dubourg (2009). A re view of recent features and improvements added to FER UM software. In Pr oc. ICOSSAR’09, Int Conf. on Structural Safety And Reliability , Osaka, J apan . Bucher , C. & U. Bourgund (1990). A fast and efficient response surface approach for structural reliability problems. Structural Safety 7 (1), 57–66. Cannamela, C., J. Garnier , & B. Iooss (2008). Con- trolled stratification for quantile estimation. Annals of Applied Statistics 2 (4), 1554–1580. Deheeger , F . (2008). Couplage m ´ ecano-fiabiliste, 2 SMART m ´ ethodologie d’appr entissage stochas- tique en fiabilit ´ e . Ph. D. thesis, Univ ersit ´ e Blaise Pascal - Clermont II. Deheeger , F . & M. Lemaire (2007). Support vector machine for efficient subset simulations: 2SMAR T method. In Pr oc. 10th Int. Conf. on Applications of Stat. and Pr ob . in Civil Engineering (ICASP10), T okyo, J apan . Der Kiureghian, A. & T . Dakessian (1998). Multiple design points in first and second-order reliability. Structural Safety 20 (1), 37–49. Ditle vsen, O. & H. Madsen (1996). Structural r eli- ability methods (Internet (v2.3.7, June-Sept 2007) ed.). John W iley & Sons Ltd, Chichester . Dubourg, V ., B. Sudret, & J.-M. Bourinet (2011). Reliability-based design optimization using krig- ing and subset simulation. Struct. Multidisc. Op- tim. Accepted . eDF , R&D Di vision (2006). Code Aster : Analyse des structur es et thermo-m ´ ecanique pour des ´ etudes et des r echer ches, V .7 . http://www.co de-aster.o rg . Hurtado, J. (2004). Structural r eliability – Statistical learning perspectives , V olume 17 of Lectur e notes in applied and computational mechanics . Springer . Kaymaz, I. (2005). Application of kriging method to structural reliability problems. Structural Safety 27 (2), 133–151. Lebrun, R. & A. Dutfoy (2009). An innov ating anal- ysis of the Nataf transformation from the copula vie wpoint. Pr ob . Eng. Mec h. 24 (3), 312–320. Lee, T . & J. Jung (2008). A sampling technique enhancing accuracy and efficienc y of metamodel- based RBDO: Constraint boundary sampling. Computers & Structur es 86 (13-14), 1463–1476. Lemaire, M. (2009). Structural Reliability . John W i- ley & Sons Inc. MacQueen, J. (1967). Some methods for classifi- cation and analysis of multiv ariate observations. In J. Le Cam, L.M. & Neyman (Ed.), Pr oc. 5 th Berkele y Symp. on Math. Stat. & Pr ob . , V olume 1, Berkele y , CA, pp. 281–297. Uni versity of Califor- nia Press. Melchers, R. (1989). Importance sampling in struc- tural systems. Structural Safety 6 (1), 3–10. Neal, R. (2003). Slice sampling. Annals Stat. 31 , 705–767. Papadrakakis, M. & N. Lagaros (2002). Reliability- based structural optimization using neural net- works and Monte Carlo simulation. Comput. Meth- ods Appl. Mech. Engr g. 191 (32), 3491–3507. Phoon, K., S. Huang, & S. Quek (2002). Simulation of second-order processes using Karhunen-Lo ` eve expansion. Computers & Structur es 80 (12), 1049– 1060. Picheny , V . (2009). Impr oving accur acy and compen- sating for uncertainty in surr ogate modeling . Ph. D. thesis, Uni versity of Florida. Platt, J. (1999). Probabilistic outputs for support vec- tor machines and comparisons to regularized like- lihood methods. In Advances in lar ge mar gin clas- sifiers , pp. 61–74. MIT Press. Rubinstein, R. & D. Kroese (2008). Simulation and the Monte Carlo method . W iley Series in Probabil- ity and Statistics. W iley . Santner , T ., B. W illiams, & W . Notz (2003). The design and analysis of computer experiments . Springer series in Statistics. Springer . Se verini, T . (2005). Elements of distribution the- ory . Cambridge series in Statistical and Probabilis- tic mathematics. Cambridge Uni versity Press. V apnik, V . (1995). The natur e of statistical learning theory . Springer .

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment