Lack of confidence in ABC model choice

Approximate Bayesian computation (ABC) has become an essential tool for the analysis of complex stochastic models. Earlier, Grelaud et al. (2009) advocated the use of ABC for Bayesian model choice in the specific case of Gibbs random fields, relying on an inter-model sufficiency property to show that the approximation was legitimate. Having implemented ABC-based model choice in a wide range of phylogenetic models in the DIY-ABC software (Cornuet et al., 2008), we now present theoretical background as to why a generic use of ABC for model choice is ungrounded, since it depends on an unknown amount of information loss induced by the use of insufficient summary statistics. The approximation error of the posterior probabilities of the models under comparison may thus be unrelated to the computational effort spent in running an ABC algorithm. We then conclude that additional empirical verifications of the performance of the ABC procedure, such as those available in DIY-ABC, are necessary to conduct model choice.

💡 Research Summary

The paper critically examines the use of Approximate Bayesian Computation (ABC) for Bayesian model choice, focusing on the often‑overlooked problem of information loss caused by insufficient summary statistics. After a brief historical overview of ABC’s success in complex stochastic settings, particularly population genetics and phylogenetics, the authors recall the basic rejection‑sampling ABC algorithm: one draws parameters from the prior, simulates data, and accepts the draw only if the distance between the simulated and observed summary statistics is at most a tolerance ε. Recovering the exact posterior this way requires the chosen summary statistic η(·) to be sufficient for the parameters of interest: only then does the ABC posterior π^ε(θ|y) converge to the true posterior π(θ|y) as ε→0. In practice η is almost never sufficient, so the limiting ABC posterior is instead π(θ|η(y)), a coarser distribution that discards information present in the full data y.
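The rejection step described above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the function names, the scalar summary with an absolute-difference distance, and the toy normal-mean model (N(0, 3²) prior, unit variance, 50 observations) are all assumptions made for the example.

```python
import random
import statistics


def abc_rejection(y_obs, draw_prior, simulate, summary, eps, n_draws, rng):
    """Plain ABC rejection sampler (hypothetical helper, scalar summary).

    Keeps a prior draw theta whenever the summary of the data simulated
    from theta lies within eps of the observed summary.
    """
    s_obs = summary(y_obs)
    accepted = []
    for _ in range(n_draws):
        theta = draw_prior(rng)                 # theta ~ prior
        z = simulate(theta, len(y_obs), rng)    # z ~ f(. | theta)
        if abs(summary(z) - s_obs) <= eps:      # distance on summaries
            accepted.append(theta)
    return accepted


def normal_mean_example(seed=0):
    """Toy model (assumed): y_i ~ N(theta, 1), theta ~ N(0, 3^2)."""
    rng = random.Random(seed)
    y_obs = [rng.gauss(1.0, 1.0) for _ in range(50)]
    return abc_rejection(
        y_obs,
        draw_prior=lambda r: r.gauss(0.0, 3.0),
        simulate=lambda theta, n, r: [r.gauss(theta, 1.0) for _ in range(n)],
        summary=statistics.fmean,   # sample mean, sufficient in this toy model
        eps=0.1,
        n_draws=5000,
        rng=rng,
    )
```

In this toy model the sample mean is sufficient for θ, so shrinking `eps` moves the accepted draws toward the exact posterior. With an insufficient summary the same code would target π(θ|η(y)) instead.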

For model comparison, the Bayesian framework introduces a model index M with prior π(M=m) and model‑specific priors π_m(θ_m). The posterior model probabilities are proportional to π(M=m)·w_m(y), where w_m(y)=∫π_m(θ_m)f_m(y|θ_m)dθ_m is the marginal likelihood of model m. When these marginal likelihoods cannot be evaluated analytically, ABC‑MC (ABC for model choice) is employed. The standard ABC‑MC procedure concatenates the summary statistics of all competing models into a single η, draws a model index from π(M=m) and parameters from the corresponding prior, simulates a dataset z, and accepts the draw if the distance between η(z) and η(y) is ≤ε. The proportion of accepted draws coming from each model is taken as an estimate of its posterior model probability.
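The ABC‑MC procedure described above can be sketched as follows. This is a hedged illustration, not the DIY‑ABC implementation: the `models` dictionary layout, the scalar summary with an absolute-difference distance, and the function name are assumptions introduced for the example.

```python
import random
from collections import Counter


def abc_model_choice(y_obs, models, summary, eps, n_draws, rng):
    """Estimate posterior model probabilities by ABC acceptance frequencies.

    models maps a model name to a tuple
        (prior_weight, draw_prior(rng), simulate(theta, n, rng)).
    This layout is an assumption made for the sketch.
    """
    names = list(models)
    weights = [models[name][0] for name in names]
    s_obs = summary(y_obs)
    accepted = Counter()
    for _ in range(n_draws):
        m = rng.choices(names, weights=weights)[0]   # M ~ pi(M = m)
        _, draw_prior, simulate = models[m]
        theta = draw_prior(rng)                      # theta_m ~ pi_m
        z = simulate(theta, len(y_obs), rng)         # z ~ f_m(. | theta_m)
        if abs(summary(z) - s_obs) <= eps:           # compare summaries only
            accepted[m] += 1
    total = sum(accepted.values())
    if total == 0:
        raise ValueError("no draw accepted; increase eps or n_draws")
    # Frequency of each model among the accepted draws
    return {name: accepted[name] / total for name in names}
```

The returned frequencies depend on the data only through `summary(y_obs)`, so what they estimate is the posterior model probability given η(y), not given y.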

The authors derive the asymptotic behavior of this estimator. As the number of simulations T→∞ and ε→0, the ABC‑MC Bayes factor converges to

B₁₂^ε(y)=P(M=1,ρ≤ε)/P(M=2,ρ≤ε) → B₁₂^η(y)=∫π₁(θ₁)f_{η,1}(η(y)|θ₁)dθ₁ / ∫π₂(θ₂)f_{η,2}(η(y)|θ₂)dθ₂,

which is precisely the Bayes factor computed on the basis of the summary statistic alone. The true Bayes factor, however, can be expressed as

B₁₂(y)=g₁(y)·B₁₂^η(y)/g₂(y),

where g_i(y) is the factor of the model‑i likelihood that does not depend on θ_i, arising from the factorization f_i(y|θ_i)=g_i(y)·f_{η,i}(η(y)|θ_i) that holds when η is sufficient within each model. Unless g₁(y)=g₂(y) (a situation that essentially never occurs outside the special case of Gibbs random fields), the two Bayes factors differ by the ratio g₁(y)/g₂(y). This ratio can be arbitrarily large or small as the data dimension grows, because it corresponds to a density ratio for a sample of size O(n). Consequently, the ABC‑MC approximation may be arbitrarily far from the exact Bayes factor, even with infinite computational resources, whenever η is not sufficient across models.

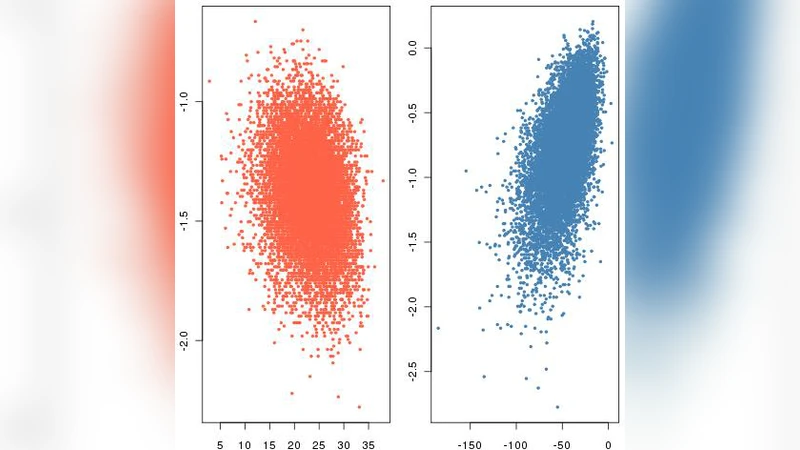

The paper illustrates these points with simple Poisson and normal examples, showing that even when η is sufficient for each model individually, it is generally not sufficient for the joint model‑index problem. In such cases, ABC‑MC can be inconsistent: it fails to recover the true model as the amount of data increases. Only when the full data are used (an infeasible approach for most complex models) does consistency hold.
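The gap between the two Bayes factors can be checked numerically on a Poisson-versus-geometric comparison with the sum S=Σy_i as summary statistic, which is sufficient within each model. The sketch below is an illustration under stated assumptions: an Exp(1) prior on the Poisson rate, a uniform prior on the geometric success probability, a geometric distribution on {0, 1, 2, ...}, and a hypothetical function name. Under these assumptions every marginal is available in closed form.

```python
from math import lgamma, log


def log_factorial(k):
    return lgamma(k + 1)


def log_binom(a, b):
    return lgamma(a + 1) - lgamma(b + 1) - lgamma(a - b + 1)


def log_bayes_factors(y):
    """Return (log B12(y), log B12^eta(y), log g1(y) - log g2(y)).

    Model 1 (assumed): y_i ~ Poisson(lam), lam ~ Exp(1).
    Model 2 (assumed): y_i ~ Geometric(p) on {0, 1, ...}, p ~ U(0, 1).
    Summary statistic: s = sum(y).
    """
    n, s = len(y), sum(y)
    log_prod_fact = sum(log_factorial(yi) for yi in y)

    # Marginal likelihoods of the full data
    log_m1 = log_factorial(s) - (s + 1) * log(n + 1) - log_prod_fact
    log_m2 = log_factorial(n) + log_factorial(s) - log_factorial(n + s + 1)

    # Marginal likelihoods of the summary s alone
    # (s is Poisson(n * lam) under model 1, negative binomial under model 2)
    log_m1_eta = s * log(n) - (s + 1) * log(n + 1)
    log_m2_eta = log_binom(n + s - 1, s) + log_m2

    # Densities of y given s, free of the parameters
    log_g1 = log_factorial(s) - s * log(n) - log_prod_fact   # multinomial
    log_g2 = -log_binom(n + s - 1, s)                        # uniform

    return log_m1 - log_m2, log_m1_eta - log_m2_eta, log_g1 - log_g2
```

For any sample, the first returned value equals the sum of the other two, which is the identity B₁₂(y)=g₁(y)·B₁₂^η(y)/g₂(y) on the log scale. The third value is the part of the evidence that an ABC‑MC run based on S can never see.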

The authors conclude that ABC‑MC cannot be regarded as a generic, reliable tool for Bayesian model choice. Its performance must be assessed empirically for each application, for example by the simulation‑based validation procedures implemented in DIY‑ABC, by cross‑validation, or by comparing results with alternative inference methods when possible. They also stress that the theoretical foundation for ABC‑MC with insufficient statistics is currently lacking, and that the only known exception where the method is theoretically sound is the case of Gibbs random fields, where the inter‑model sufficiency property ensures g₁(y)=g₂(y).

In practice, the paper recommends treating ABC‑MC as an exploratory technique rather than a definitive decision‑making method. Practitioners should report the chosen summary statistics, the tolerance level, and the results of any validation studies, and they should be cautious about interpreting posterior model probabilities derived from ABC as if they were exact Bayesian quantities. The work serves as a cautionary note, urging the community to develop better summary‑statistic selection methods, to derive theoretical guarantees for model choice, and to rely on extensive empirical checks before drawing scientific conclusions from ABC‑based model selection.
