Belief Propagation for Error Correcting Codes and Lossy Compression Using Multilayer Perceptrons

Belief propagation (BP) is investigated as a potential decoder for both error correcting codes and lossy compression based on non-monotonic tree-like multilayer perceptron encoders. We discuss whether BP can yield practical algorithms in these schemes. Unfortunately, BP implementations in this kind of fully connected network show strong limitations, although the theoretical results seem somewhat promising. Instead, the BP-based algorithms reveal that the solution space may have a rich and complex structure.

💡 Research Summary

The paper investigates the applicability of belief propagation (BP) as a decoding (for error‑correcting codes) and reconstruction (for lossy compression) algorithm when the underlying encoder/decoder is a non‑monotonic, tree‑like multilayer perceptron (MLP). Three specific MLP architectures are considered: (i) a multilayer parity tree (PTH) with non‑monotonic hidden units, (ii) a multilayer committee tree (CTH) with non‑monotonic hidden units, and (iii) a committee tree with a non‑monotonic output unit (CTO). All three employ the same non‑monotonic transfer function f_k(x) that outputs +1 for |x|≤k and –1 otherwise; the parameter k controls the bias of the output distribution.
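As a rough illustration of the three architectures, the sketch below implements the transfer function f_k and one output bit for each tree. Several details are assumptions not stated in this summary: the N inputs are split into K disjoint blocks (one per hidden unit), each block overlap is scaled by sqrt(K/N), the hidden-unit sum in the CTO case is scaled by 1/sqrt(K), and sgn(0) is taken as +1. All function names are hypothetical.

```python
import math

def f_k(x, k):
    """Non-monotonic transfer function: +1 if |x| <= k, otherwise -1."""
    return 1 if abs(x) <= k else -1

def sgn(x):
    # Tie-breaking at zero is an assumption of this sketch (returns +1).
    return 1 if x >= 0 else -1

def local_fields(s, x, K):
    """Scaled overlaps between s and x on K disjoint blocks (assumed tree layout)."""
    N = len(s)
    block = N // K                      # assumes K divides N
    scale = math.sqrt(K / N)            # assumed normalization
    return [
        scale * sum(s[i] * x[i] for i in range(l * block, (l + 1) * block))
        for l in range(K)
    ]

def parity_tree_hidden(s, x, K, k):
    """PTH: product of non-monotonic hidden units."""
    out = 1
    for h in local_fields(s, x, K):
        out *= f_k(h, k)
    return out

def committee_tree_hidden(s, x, K, k):
    """CTH: majority vote over non-monotonic hidden units."""
    return sgn(sum(f_k(h, k) for h in local_fields(s, x, K)))

def committee_tree_output(s, x, K, k):
    """CTO: sign hidden units followed by a non-monotonic output unit."""
    total = sum(sgn(h) for h in local_fields(s, x, K))
    return f_k(total / math.sqrt(K), k)

if __name__ == "__main__":
    s = [1, 1, 1, 1]
    x = [1, 1, 1, -1]
    for net in (parity_tree_hidden, committee_tree_hidden, committee_tree_output):
        print(net.__name__, net(s, x, K=2, k=1.0))
```

Because f_k is even in its argument, flipping the sign of a whole block of s leaves the PTH and CTH outputs unchanged, which is one source of the degenerate solutions a decoder has to cope with.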

In the error‑correcting setting, an original binary message s₀∈{±1}ᴺ is encoded by feeding M random input vectors x_μ (μ=1…M) through the chosen MLP, producing a codeword y₀∈{±1}ᴹ. The codeword passes through a binary asymmetric channel (BAC) with flip probabilities r (for +1→−1) and p (for −1→+1). Decoding amounts to Bayesian inference: maximize the posterior P(s|y)∝P(y|s)P(s). Using the replica method, the authors previously showed that, in the thermodynamic limit (N,M→∞ with fixed rate R=N/M), each of the three MLP families can achieve the Shannon capacity under appropriate choices of k (and, for CTO, in the limit of infinite hidden units).
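The channel model can be sketched in a few lines. The example below passes a ±1 codeword through a BAC with the flip probabilities r and p defined above, and evaluates the log-likelihood log P(y|y₀) that enters the posterior. The function names are hypothetical, and the sketch assumes independent flips per bit with 0 < r, p < 1 when the log-likelihood is computed.

```python
import math
import random

def bac_transmit(codeword, r, p, rng):
    """Send a +/-1 codeword through a binary asymmetric channel.

    r: probability that +1 is received as -1
    p: probability that -1 is received as +1
    """
    received = []
    for bit in codeword:
        flip_prob = r if bit == 1 else p
        received.append(-bit if rng.random() < flip_prob else bit)
    return received

def bac_log_likelihood(received, codeword, r, p):
    """log P(received | codeword) for independent per-bit flips (needs 0 < r, p < 1)."""
    total = 0.0
    for y, y0 in zip(received, codeword):
        flip_prob = r if y0 == 1 else p
        total += math.log(flip_prob if y != y0 else 1.0 - flip_prob)
    return total

if __name__ == "__main__":
    rng = random.Random(0)              # fixed seed for reproducibility
    y0 = [1, -1, 1, 1, -1, -1, 1, -1]
    y = bac_transmit(y0, r=0.1, p=0.2, rng=rng)
    print(y, bac_log_likelihood(y, y0, r=0.1, p=0.2))
```

A brute-force decoder would score every candidate message s by this log-likelihood of its codeword plus log P(s); BP is of interest precisely because that exhaustive search is infeasible for large N.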

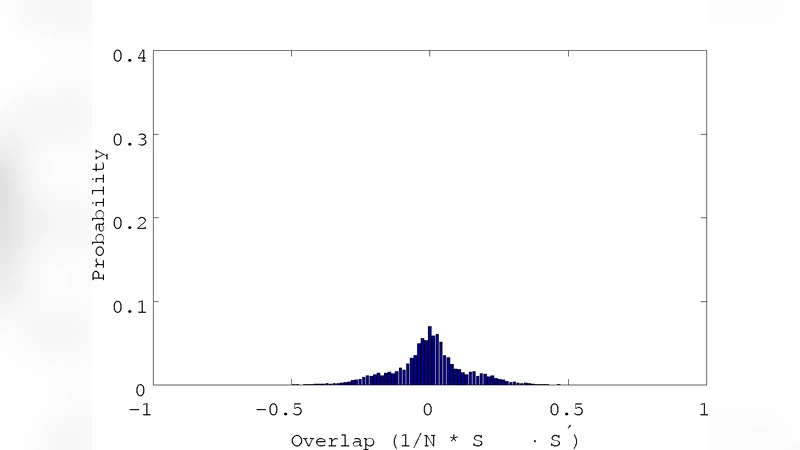

For lossy compression, a source vector y∈{±1}ᴹ generated by an i.i.d. biased binary source is compressed to a shorter codeword s∈{±1}ᴺ (N<M) by the same MLP encoder, s=F(y). Reconstruction uses the inverse mapping ȳ=G(s) implemented by the same type of MLP. The performance metric is the average Hamming distortion D=E
