Information Content in Data Sets for a Nucleated-Polymerization Model

We illustrate the use of tools (asymptotic theories of standard error quantification using appropriate statistical models, bootstrapping, model comparison techniques) in addition to sensitivity that may be employed to determine the information conten…

Authors: H. T. Banks (CRSC), M Doumic (INRIA-Paris-Rocquencourt, LJLL)

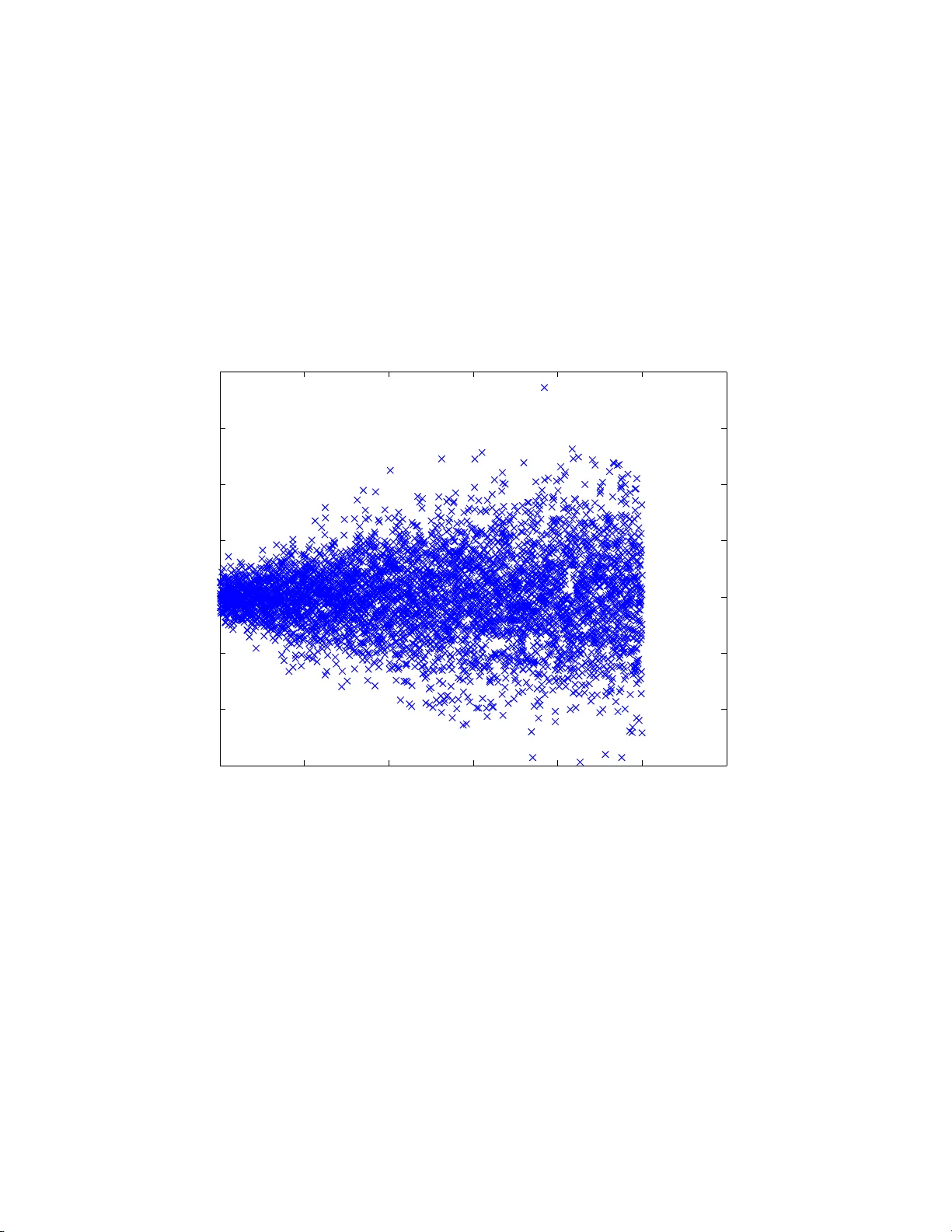

H.T. Banks$^{1}$, M. Doumic$^{2,3}$, C. Kruse$^{2,3}$, S. Prigent$^{2,3}$, H. Rezaei$^{4}$

1 Center for Research in Scientific Computation, North Carolina State University, Raleigh, NC 27695-8212
2 Institut National de Recherche en Informatique et Automatique, Paris-Rocquencourt, France
3 Pierre et Marie Curie University, Paris, France
4 Institut National de Recherche Agronomique, Jouy-en-Josas, France

September 18, 2018

Abstract

We illustrate the use of tools (asymptotic theories of standard error quantification using appropriate statistical models, bootstrapping, model comparison techniques) in addition to sensitivity that may be employed to determine the information content in data sets. We do this in the context of recent models [23] for nucleated polymerization in proteins, about which very little is known regarding the underlying mechanisms; thus the methodology we develop here may be of great help to experimentalists.

Key Words: Inverse problems, polyglutamine and aggregation modeling, nucleation, information content, sensitivity, Fisher matrix, uncertainty quantification.

Mathematics Subject Classification: 65M32, 62P10, 64B10, 49Q12

1 Introduction

As mathematical models become more complex with multiple states and many parameters to be estimated using experimental data, there is a need for critical analysis in model validation related to the reliability of parameter estimates obtained in model fitting. A recent concrete example involves previous HIV models [1, 6] with 15 or more parameters to be estimated. In [4], using recently developed parameter selectivity tools [5] based on parameter sensitivity based scores, it was shown that many parameters could not be estimated with any degree of reliability.
Moreover, we found that quantifiable uncertainty varies among patients depending upon the number of treatment interruptions (perturbations of therapy). This leads to a fundamental question: how much information with respect to model validation can be expected in a given data set or collection of data sets?

Here we illustrate the use of other tools (asymptotic theories of standard error quantification using appropriate statistical models, bootstrapping, and model comparison techniques) in addition to sensitivity theory that may be used to determine the information content in data sets. We do this in the context of recent models [23] for nucleated polymerization in proteins. After presenting the biological context of amyloid formation, we describe the model in Section 2. In Section 3, we investigate the statistical model to be used with our noisy data. This is a necessary step in order to use the correct error model in our generalized least squares (GLS) minimization. This also reveals information on our experimental observation process. Once we have found parameters which allow a reasonable fit, we determine the confidence we may have in our estimation procedures. We do this in Section 4, using both the condition number of the covariance matrix and a sensitivity analysis. This reveals a smaller number of parameters (than those estimated in [23]) to which the fits are reasonably sensitive, whereas the others do not really affect the quality of the fits to our data. To further support our sensitivity findings, we then apply a bootstrapping analysis in Section 5. We are led to four main parameters and compare their resulting errors with the asymptotic confidence intervals of Section 4.
Finally, in Section 6, we carry out model comparison tests [8, 9, 10] as used in [3], and these lead us to select three well-defined parameters that can be reliably estimated out of the nine original ones estimated in [23].

1.1 Protein Polymerization

It is now known that several neurodegenerative disorders, including Alzheimer's disease, Huntington's disease and prion diseases (e.g., mad cow disease), are related to aggregations of proteins presenting an abnormal folding. These protein aggregates are called amyloids and have become a focus of modeling efforts in recent years [11, 23, 27, 28, 29]. One of the main challenges in this field is to understand the key aggregation mechanisms, both qualitatively and quantitatively. In order to test our methodology on a relatively simple case, we focus here on polyglutamine (PolyQ) containing proteins. This was also the case study chosen to illustrate the fairly general ODE-PDE model proposed in [23]; the reason for our choice is that, as shown in [23], the polymerization mechanisms prove to be simpler for PolyQ aggregation than for other types of proteins, e.g., PrP [24]. To understand data sets from experiments carried out by Human Rezaei and his team at INRA (Virologie et Immunologie Moléculaires), see [23], we adapt the general model to this context. The data sets (DS1-DS4) of interest to us here are depicted in Figure 1 below.

Figure 1: The data sets of interest from [23, 7]. (Plot: adimensionalized total polymerized mass for $c_0 = 200$ µmol; horizontal axis: time (hour); vertical axis: % of the total polymerized mass; curves: data sets 1 to 4.)
In [23] and a subsequent effort in [7], the authors sought to investigate several questions including (i) understanding the key polymerization mechanisms, (ii) how to select parameters and calibrate the model, and (iii) how to numerically approximate the model. Here we briefly summarize results related to (iii) and focus primarily on (ii).

2 The Model

2.1 Original ODE Model

The model we use is the same as that of [23]. We briefly outline that model. Let $(V, V^*, c_i)$ be the concentrations of the normal monomeric proteins that we will call monomers, of the monomeric proteins presenting an abnormal configuration that we will call conformers, and of the $i$-polymers made of $i$ aggregated abnormal proteins, respectively. The following comprise the fundamental dynamics modeled in [23]:

• Monomer-conformer exchange: $V \underset{k_I^-}{\overset{k_I^+}{\rightleftharpoons}} V^*$
• Nucleation: $\underbrace{V^* + V^* + \dots + V^*}_{i_0} \underset{k_{off}^N}{\overset{k_{on}^N}{\rightleftharpoons}} c_{i_0}$
• Polymerization by conformer addition: $c_i + V^* \overset{k_{on}^i}{\rightleftharpoons} c_{i+1}$

Other reactions like fragmentation and coalescence are negligible for the case of polyglutamine containing proteins (see [23] for experimental justification). The law of mass action in the deterministic framework (see [10, 25] and the numerous references therein) translates

$$A + B \underset{k^-}{\overset{k^+}{\rightleftharpoons}} A' + B'$$

into the ordinary differential equation

$$\frac{d[A]}{dt} = -k^+ [A][B] + k^- [A'][B'].$$

Using these basic ideas we obtain the infinite system of ordinary differential equations (ODEs) studied in [23]

$$\frac{dV}{dt} = -k_I^+ V + k_I^- V^*, \tag{1}$$

$$\frac{dV^*}{dt} = k_I^+ V - k_I^- V^* + i_0 k_{off}^N c_{i_0} - V^* \sum_{i \ge i_0} k_{on}^i c_i, \tag{2}$$

$$\frac{dc_{i_0}}{dt} = k_{on}^N (V^*)^{i_0} - k_{off}^N c_{i_0} - k_{on}^{i_0} c_{i_0} V^*, \tag{3}$$

$$\frac{dc_i}{dt} = V^* \left( k_{on}^{i-1} c_{i-1} - k_{on}^i c_i \right), \quad i = i_0 + 1, \dots \tag{4}$$

with initial conditions $V(0) = c_0$, $V^*(0) = 0$, $c_{i_0}(0) = c_i(0) = 0$ and the mass balance equation

$$\frac{d}{dt} \left( V + V^* + \sum_{i = i_0}^{\infty} i c_i \right) = 0.$$
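As a rough illustration of how a system of the form (1)-(4) can be integrated, the following Python sketch truncates the polymer sizes at a hypothetical maximum size `n_max`, takes a size-independent `k_on`, and uses an explicit Euler step. None of these choices comes from [23], and the rate values are arbitrary placeholders rather than fitted values. The sketch also includes a nucleation sink $-i_0 k_{on}^N (V^*)^{i_0}$ in the conformer equation. This is an assumption made here so that the mass balance holds exactly in the discrete system; the term does not appear in equation (2) as printed above.

```python
def rhs(V, Vs, c, p):
    """Right-hand side of the truncated system; c[j] is the concentration of size i0 + j."""
    i0 = p["i0"]
    # Net nucleation rate of nuclei of size i0.
    nuc = p["k_on_N"] * Vs ** i0 - p["k_off_N"] * c[0]
    # Polymerization flux from size i to i + 1 (size-independent k_on is an assumption).
    # The largest size has no outflow, which closes the truncated system.
    flux = [p["k_on"] * Vs * ci for ci in c[:-1]] + [0.0]
    dV = -p["k_plus"] * V + p["k_minus"] * Vs
    # Assumed sink -i0 * nuc: it keeps V + V* + sum(i * c_i) constant.
    dVs = -dV - i0 * nuc - sum(flux)
    dc = [nuc - flux[0]] + [flux[j - 1] - flux[j] for j in range(1, len(c))]
    return dV, dVs, dc


def polymerized_mass(c, i0):
    """Total polymerized mass M = sum over sizes i of i * c_i."""
    return sum((i0 + j) * cj for j, cj in enumerate(c))


def simulate(p, c0, n_max, dt, n_steps):
    """Explicit Euler integration from V(0) = c0, V*(0) = 0, c_i(0) = 0."""
    V, Vs = c0, 0.0
    c = [0.0] * (n_max - p["i0"] + 1)
    for _ in range(n_steps):
        dV, dVs, dc = rhs(V, Vs, c, p)
        V += dt * dV
        Vs += dt * dVs
        c = [ci + dt * dci for ci, dci in zip(c, dc)]
    return V, Vs, c


# Placeholder rate constants, chosen only so that the explicit scheme is stable.
params = {"i0": 2, "k_plus": 2.0, "k_minus": 10.0,
          "k_on_N": 1.0, "k_off_N": 1.0, "k_on": 1.0}
V, Vs, c = simulate(params, c0=1.0, n_max=50, dt=1e-3, n_steps=2000)
total = V + Vs + polymerized_mass(c, params["i0"])
print("total mass:", total, "polymerized mass:", polymerized_mass(c, params["i0"]))
```

Because explicit Euler preserves linear invariants, the printed total mass should stay at the initial concentration up to rounding, which gives a cheap sanity check on any implementation of the right-hand side.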
The experiments of interest to us measure the total polymerized mass, i.e.,

$$M(t) = \sum_{i \ge i_0} i c_i(t).$$

2.2 An Approximate PDE System and the Associated Forward Problem

Since very long polymers (a fibril may contain up to $10^6$ monomer units) characterize amyloid formations, a PDE version of the standard model, where a continuous variable $x$ approximates the discrete sizes $i$, is a reasonable approximation for large amyloid polymers. However, for small polymer sizes this continuous approximation does not work very well. Thus we take a "hybrid approach" of keeping the ODEs for smaller sizes and using the PDE for larger ones, see [7]. We define a small parameter $\varepsilon = 1/i_M$, and let $x_i = i\varepsilon$, with $i_M \gg 1$ the average polymer size defined by

$$i_M = \frac{\sum_{i \ge i_0} i c_i}{\sum c_i}.$$

Then after definition of dimensionless quantities

$$c^{\varepsilon}(t, x) = \sum c_i \mathbb{1}_{[x_i, x_{i+1}]}$$

we may obtain a partial differential equation (PDE) to replace the infinite ODE system. Rigorous derivations of such continuous integro-PDE models may be found in [20] for coagulation-fragmentation equations, in [14] for the limit of the Becker-Döring system toward the Lifshitz-Slyozov model, and in [18] for the growth-fragmentation "Prion Model". A formal derivation for a full model, also including nucleation, is carried out in [23]. Let $N_0 \in \mathbb{N}$. We then use the approximation

$$\frac{dV}{dt} = -k_I^+ V + k_I^- V^*,$$

$$\frac{dV^*}{dt} = k_I^+ V - k_I^- V^* + i_0 k_{off}^N c_{i_0} - V^* \sum_{i \ge i_0} k_{on}^i c_i, \tag{5}$$

$$\frac{dc_{i_0}}{dt} = k_{on}^N (V^*)^{i_0} - k_{off}^N c_{i_0} - k_{on}^{i_0} c_{i_0} V^*, \tag{6}$$

$$\frac{dc_i}{dt} = V^* \left( k_{on}^{i-1} c_{i-1} - k_{on}^i c_i \right), \quad i \le N_0, \tag{7}$$

$$\partial_t c^{\varepsilon}(x, t) = -V^* \partial_x \left( k_{on} c^{\varepsilon}(x, t) \right), \quad x \ge N_0, \tag{8}$$

with initial conditions $V(0) = c_0$, $V^*(0) = 0$, $c_{i_0}(0) = c_i(0) = 0$, $c^{\varepsilon}(x, 0) = 0$, and the boundary condition $c^{\varepsilon}(x = N_0, t) = c_{N_0}(t)$.
Then an assumed mass balance equation becomes

$$\frac{d}{dt} \left( V + V^* + \sum_{i = i_0}^{N_0} i c_i + \int_{N_0}^{\infty} x c^{\varepsilon}(x)\, dx \right) = 0.$$

In [7] we considered requirements for a good discretization scheme including (i) it should conserve the total polymerized mass, (ii) it should be fast and, most importantly, (iii) it should be accurate. To ensure mass conservation, we replace the ODE for $V^*$ by the mass conservation equation and obtain

$$\frac{dV}{dt} = -k_I^+ V + k_I^- V^*,$$

$$V^* = c_0 - V - \sum_{i = i_0}^{N_0} i c_i - \int_{N_0}^{\infty} x c^{\varepsilon}\, dx,$$

$$\frac{dc_{i_0}}{dt} = k_{on}^N (V^*)^{i_0} - k_{off}^N c_{i_0} - k_{on}^{i_0} c_{i_0} V^*,$$

$$\frac{dc_i}{dt} = V^* \left( k_{on}^{i-1} c_{i-1} - k_{on}^i c_i \right), \quad i \le N_0,$$

$$\partial_t c^{\varepsilon}(x, t) = -V^* \partial_x \left( k_{on} c^{\varepsilon}(x, t) \right), \quad x \ge N_0,$$

with initial and boundary conditions as before.

We developed methodology for forward solutions in [7]. In considering these forward solutions we first observed that the desired spatial computational domain is very large, as determined by the maximum size of observed polymers, with range up to $10^6$, and that the peak in the distribution is at the left side of the domain of interest; for larger polymer sizes, the distribution is almost linearly decreasing.

Based on these and other considerations discussed in [7], the PDE was approximated by the Finite Volume Method (see [21] for discussions of Upwind, Lax-Wendroff and flux limiter methods) with an adaptive mesh, refined toward the smaller polymer sizes. Furthermore, we kept the ratio between the step size and the corresponding mesh element constant, i.e., we used $\Delta x_i / x_i = q < 1$ so that $x_i = \frac{1}{1 - q} x_{i-1}$. This mesh is quasi-linear in the sense of $\Delta x_{i-1} / \Delta x_i = 1 + O(q)$. The resulting Upwind and Lax-Wendroff schemes are then consistent on the progressive mesh (see [21]). For further details on these schemes, including examples demonstrating convergence properties, the interested reader may consult [7].
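The progressive mesh and a first-order upwind update can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the scheme of [7]: the function names are hypothetical, the cell `j` is taken to be `[nodes[j], nodes[j+1]]`, `k_on` is held constant, and the values of `q`, the domain bounds and the time step are placeholders.

```python
def geometric_mesh(x_start, x_end, q):
    """Nodes x_0 < x_1 < ... with (x_i - x_{i-1}) / x_i = q, i.e. x_i = x_{i-1} / (1 - q)."""
    if not 0.0 < q < 1.0:
        raise ValueError("q must lie strictly between 0 and 1")
    nodes = [x_start]
    while nodes[-1] < x_end:
        nodes.append(nodes[-1] / (1.0 - q))
    return nodes


def upwind_step(c, nodes, k_on, v_star, inflow, dt):
    """One explicit upwind step for d_t c = -V* d_x(k_on c), assuming V* k_on >= 0.

    c[j] is the cell average on [nodes[j], nodes[j + 1]] and `inflow` is the
    value of k_on * c at the left boundary. Stability needs
    dt <= min(dx) / (v_star * k_on).
    """
    new_c = []
    left_flux = v_star * inflow
    for j, cj in enumerate(c):
        dx = nodes[j + 1] - nodes[j]
        right_flux = v_star * k_on * cj
        new_c.append(cj - dt / dx * (right_flux - left_flux))
        left_flux = right_flux
    return new_c


# Placeholder values: the mesh starts at N0 = 500 and covers sizes up to 10^6.
q = 0.05
nodes = geometric_mesh(500.0, 1.0e6, q)
c = [0.0] * (len(nodes) - 1)
c = upwind_step(c, nodes, k_on=1.0, v_star=0.5, inflow=1.0, dt=0.1)
print(len(nodes), "nodes instead of roughly 10^6 unit-size cells")
```

With $q = 0.05$ the mesh needs on the order of 150 nodes to span sizes from 500 to $10^6$, which is the practical motivation for refining toward small sizes only.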
3 The Inverse Problem

A major question in formulating the model for use in inverse problem scenarios is how best to represent the function $k_{on}$ parametrically for our application. Following [23], we chose to approximate $k_{on}$ by a function as depicted in Figure 2. (According to discussions among S. Prigent, H. Rezaei and J. Torrent, other choices like a Gaussian bell curve are also possible, and we discuss this later.) Thus with this parametrization we have 5 more parameters $k_{on}^{min}, k_{on}^{max}, x_1, x_2, i_{max}$ in addition to the 4 basic parameters $k_I^+, k_I^-, k_{on}^N, k_{off}^N$ to be estimated using our data sets.

Figure 2: Parametric representation for $k_{on}$.

Thus we seek to estimate (with acceptable quantification of uncertainties) the nine parameters $k_I^+$, $k_I^-$, $k_{off}^N$, $k_{on}^N$, and $k_{on}$ (represented in the parametric form depicted above with the 5 additional unknowns $k_{on}^{min}, k_{on}^{max}, x_1, x_2, i_{max}$) that fit the data best. To do this we need an efficient discretization method as discussed above for the forward problem as well as a correct assumption on the measurement errors in the inverse problem.

3.1 Estimation of Parameters

We make some standard statistical assumptions (see [9, 10, 16, 26]) underlying our inverse problem formulations.

• Assume that there exists a true or nominal set of parameters $\theta_0 = (k_I^-, \dots, i_{max})$.
• Let $\mathcal{E}_i$ be i.i.d. with $E(\mathcal{E}_i) = 0$ and $\mathrm{cov}(\mathcal{E}_i, \mathcal{E}_j) = \sigma^2 \delta_{ij}$, where $\delta_{ij}$ is the Kronecker delta. Let $\epsilon_i$ denote a realization of $\mathcal{E}_i$.

Denote the estimated parameter for $\theta_0$ as $\hat{\theta}$. The inverse problem is based on statistical assumptions on the observation error in the data. If we assume an absolute error data model then data points are taken with equal importance. This is represented by observations

$$y_i = M(t_i, \theta_0) + \epsilon_i. \tag{9}$$

On the other hand, if one assumes some type of relative error data model then the error is proportional in some sense to the measured polymerized mass. This can be represented by observations of the form

$$y_i = M(t_i, \theta_0) + M(t_i, \theta_0)^{\gamma} \epsilon_i, \quad \gamma \in (0, 1]. \tag{10}$$

Absolute error model formulations dictate that we use the Ordinary Least Squares (OLS) inverse problem [9, 10] given by

$$\hat{\theta} = \arg\min \sum \left( y_i - M(t_i, \theta) \right)^2 \tag{11}$$

while for a relative error model one should use inverse problem formulations with the Generalized Least Squares (GLS) cost functional

$$\hat{\theta} = \arg\min \sum \left( \frac{y_i - M(t_i, \theta)}{M(t_i, \theta)^{\gamma}} \right)^2, \quad \gamma \in (0, 1]. \tag{12}$$

3.1.1 The Residual Plots

To obtain a correct statistical model, we used residual plots (see [9, 10] for more details) with residuals given by

$$r_i = \frac{y_i - M(t_i, \hat{\theta})}{M(t_i, \hat{\theta})^{\gamma}}, \quad \gamma \in [0, 1].$$

To illustrate what we are seeking for our data sets, we first used simulated relative error data (simulated data for $\gamma = 1$), then carried out the inverse problems for both a relative error cost functional (i.e., $\gamma = 1$) and an ordinary least squares cost functional (i.e., $\gamma = 0$). We then plotted the corresponding residuals vs time and also residuals vs the model values. The first plots are related to the correctness of our assumption that the data are independent and identically distributed (i.i.d.), whereas the second plots contain information as to the correctness of the form of our proposed statistical model.

Figure 3: Plots with simulated data (noise level 0.01): (a) Correct cost function vs. time ($\gamma = 1$), residuals using the GLS cost function; (b) Incorrect cost function vs. time ($\gamma = 0$), residuals using the OLS cost function.

Figure 4: Plots with simulated data (noise level 0.01): (a) Correct cost function vs. model ($\gamma = 1$), residuals using the GLS cost function; (b) Incorrect cost function vs. model ($\gamma = 0$), residuals using the OLS cost function.

3.2 Statistical Models of Noise

We next carried out similar inverse problems with data set (DS) 4 of our experimental data collection. We first used DS 4 on the interval $t \in [0, 8]$. Based on some earlier calculations we also chose the nucleation index $i_0 = 2$ for all our subsequent calculations. The residual plots given below in Figures 5 and 6 strongly suggest that neither of the first attempts at assumed statistical models and corresponding cost functionals (absolute error with OLS, or relative error with $\gamma = 1$ and simple GLS) is correct.

Figure 5: (a) $M(t_k)$ with OLS (data and fitted adimensionalized mass vs. time, data set 4); (b) Residuals vs Model: OLS ($N_0 = 500$, data set 4).

Based on these initial results and the speculation that early periods of the polymerization process may be somewhat stochastic in nature, we chose to subsequently use all the data sets on the intervals $[t_0, 8]$ where $t_0$ is the first time when $M(t_0) > 0.12$ (thus 12% of the total polymerized mass). Moreover, we decided to use other values of $\gamma$ between 0 and 1 to test data set 4. We thus carried out further investigations with inverse problems for data points $M(t_k) \ge 0.12$ and $i_0 = 2$ where we focused on the question of the most appropriate values of $\gamma$ to use in a generalized least squares approach (again see [9] for further motivation and details).
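The role of the weighted residuals can be illustrated with a small Python sketch on synthetic data. Everything specific in it is an assumption made for illustration: a logistic curve stands in for the fitted model $M(t, \hat{\theta})$, no inverse problem is solved (the known curve is used directly), and the noise level 0.01 and the seed are arbitrary. The data are generated with relative error ($\gamma = 1$), so the $\gamma = 0$ residuals should fan out as the model value grows while the $\gamma = 1$ residuals should keep a roughly constant spread.

```python
import math
import random
import statistics


def model(t):
    """Hypothetical logistic stand-in for the fitted curve M(t, theta_hat)."""
    return 1.0 / (1.0 + math.exp(-1.5 * (t - 3.3)))


def residuals(t, y, gamma):
    """Weighted residuals r_i = (y_i - M(t_i)) / M(t_i)^gamma."""
    return [(yi - model(ti)) / model(ti) ** gamma for ti, yi in zip(t, y)]


def spread_ratio(t, r):
    """Std of residuals where the model exceeds 0.5, divided by the std where it does not."""
    high = [ri for ti, ri in zip(t, r) if model(ti) > 0.5]
    low = [ri for ti, ri in zip(t, r) if model(ti) <= 0.5]
    return statistics.pstdev(high) / statistics.pstdev(low)


rng = random.Random(0)
t = [2.0 + 6.0 * k / 599 for k in range(600)]
# Relative error data: y_i = M(t_i) + M(t_i) * eps_i with eps_i ~ N(0, 0.01^2).
y = [model(ti) * (1.0 + rng.gauss(0.0, 0.01)) for ti in t]

ratio_ols = spread_ratio(t, residuals(t, y, 0.0))  # wrong weighting: spread grows with M
ratio_gls = spread_ratio(t, residuals(t, y, 1.0))  # matching weighting: spread stays flat
print("gamma = 0:", ratio_ols, " gamma = 1:", ratio_gls)
```

A ratio well above one signals the fan shape in a residuals-vs-model plot; scanning intermediate values of `gamma` in the same way mirrors the search for an appropriate exponent described above.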
We then obtained the results with data set 4 depicted in Figure 7. Analysis of these residuals suggests that either $\gamma = 0.6$ or $\gamma = 0.7$ might be satisfactory for use in a generalized least squares setting.

Figure 6: (a) $M(t_k)$ with GLS, $\gamma = 1$ (data and fitted adimensionalized mass, data set 4); (b) Residuals vs Model: GLS ($N_0 = 500$, data set 4).

Figure 7: Residuals for data set 4 using different values of $\gamma$ (one residuals-vs-model panel for each of $\gamma = 0.0, 0.1, \dots, 1.0$).

Motivated by these results, we next investigated the inverse problems for each of the four experimental data sets with initial concentration $c_0 = 200$ µmol and $i_0 = 2$. We carried out the optimization over all data points with $M(t_k) \ge 0.12$ and used the generalized least squares method with $\gamma = 0.6$. The resulting graphics depicted in Figure 8 again suggest that $\gamma = 0.6$ is a reasonable value to use in our subsequent analysis of the polyglutamine data with regard to its information content for inverse problem estimation and parameter uncertainty quantification.
Figure 8: Residuals for the 4 experimental data sets using $\gamma = 0.6$ (residuals vs. model, one panel per data set).

4 Standard Errors and Asymptotic Analysis

4.1 Standard Errors for Parameters Using GLS

We employed first the asymptotic theory for parameter uncertainty summarized in [9, 10, 16] and the references therein. In the case of generalized least squares, the associated standard errors for the estimated parameters $\hat{\theta} = (k_I^+, \dots, i_{max})$ (vector length $\kappa_\theta = 9$) are given by the following construction (for details see Chap. 3.2.5 and 3.2.6 of [9]): define the standard errors by the formula

$$SE_k = \sqrt{\Sigma_{kk}(\hat{\theta})}, \quad k = 1, \dots, 9,$$

where the covariance matrix is

$$\Sigma(\hat{\theta}) = \hat{\sigma}^2 \left( \chi^T(\hat{\theta}) W(\hat{\theta}) \chi(\hat{\theta}) \right)^{-1}.$$

Here $\chi$ is the sensitivity matrix of size $n \times \kappa_\theta$ ($n$ being the number of data points and $\kappa_\theta$ being the number of estimated parameters) and $W$ is defined by

$$W^{-1}(\hat{\theta}) = \mathrm{diag}\left( M(t_1; \hat{\theta})^{2\gamma}, \dots, M(t_n; \hat{\theta})^{2\gamma} \right).$$

We use the approximation of the variance

$$\sigma^2 \approx \hat{\sigma}(\hat{\theta})^2 = \frac{1}{n - \kappa_\theta} \sum_{i=1}^{n} \frac{1}{M(t_i; \hat{\theta})^{2\gamma}} \left( M(t_i, \hat{\theta}) - y_i \right)^2.$$

To obtain a finite standard error using asymptotic theory, the $9 \times 9$ matrix $F = \chi^T(\hat{\theta}) W(\hat{\theta}) \chi(\hat{\theta})$ thus must be invertible.

In the above problem we do indeed obtain a good fit of the curve and good residuals (for the sake of brevity, not depicted here). However, we also found that the condition number of the matrix $F = \chi^T(\hat{\theta}) W(\hat{\theta}) \chi(\hat{\theta})$ is $\kappa = 10^{24}$. Looking more closely at the matrix $F$ reveals a near linear dependence between certain rows, hence the large condition number. We thus quickly reach the following conclusions:

1. We obtain a set of parameters for which the model fits well, but we cannot have any reasonable confidence in them using the asymptotic theories from statistics (see, e.g., the references given above).
2. We suspect that it may not be possible to obtain sufficient information from our data set curves to estimate all 9 parameters with a high degree of confidence. This is based on our calculations with the corresponding Fisher matrices as well as our prior knowledge that the graphs depicted in Figure 1 are very similar to logistic or Gompertz curves, which can be fit quite well with parameterized models with only 2 or 3 carefully chosen parameters.

To assist in initial understanding of these issues, we consider the associated sensitivity matrices $\chi = \partial M / \partial \theta$.

4.2 Sensitivity Analysis

For the sensitivity analysis, we follow [9, 10]. Hereafter all our analysis will be carried out using data set 4 and the best estimate $\hat{\theta}$ obtained for the latter. We find that the model is sensitive mainly to four parameters: $k_I^+$, $k_I^-$, $k_{on}^N$, $k_{off}^N$. The sensitivities for the remaining parameters are on an order of magnitude of $10^{-6}$ or less. The model also shows some sensitivity with respect to $x_1$. However, the parameter $x_1$ appears in the model only as the factor $x_1 i_{max}$. The sensitivities depicted below use $\hat{\theta}$ for the nine best fit GLS parameters, i.e., $\hat{\theta}$ for $\kappa_\theta = 9$.

Figure 9: (a) Sensitivity w.r.t. $k_I^-$; (b) Sensitivity w.r.t. $k_I^+$ (sensitivity of the adimensionalized mass vs. time in hours).

Figure 10: (a) Sensitivity w.r.t. $k_{on}^N$; (b) Sensitivity w.r.t. $k_{off}^N$.

Figure 11: (a) Sensitivity w.r.t. $k_{on}^{min}$; (b) Sensitivity w.r.t. $k_{on}^{max}$.

Figure 12: (a) Sensitivity w.r.t. $x_1$; (b) Sensitivity w.r.t. $x_2$.

Figure 13: (a) Sensitivity w.r.t. $i_{max}$; (b) Sensitivity w.r.t. $x_{11} = i_{max} x_1$.

5 Sensitivity Motivated Inverse Problems

Based on the sensitivity findings depicted above, we investigated a series of inverse problems in which we attempted to estimate an increasing number of parameters, beginning with the fundamental parameters $k_I^+$ and $k_I^-$. In each of these inverse problems we attempted to ascertain uncertainty bounds for the estimated parameters using both the asymptotic theory described above and a generalized least squares version of bootstrapping [12, 13, 15, 17, 19]. A quick outline of the appropriate bootstrapping algorithm is given next.
5.1 Bootstrapping Algorithm: Nonconstant Variance Data

We suppose now that we are given experimental data $(t_1, y_1), \dots, (t_n, y_n)$ from the underlying observation process

$$Y_i = M(t_i; \theta_0) + M(t_i; \theta_0)^{\gamma} \tilde{\mathcal{E}}_i, \tag{13}$$

where $i = 1, \dots, n$ and the $\tilde{\mathcal{E}}_i$ are i.i.d. with mean zero and constant variance $\sigma_0^2$. Then we see that $E(Y_i) = M(t_i; \theta_0)$ and $\mathrm{Var}(Y_i) = \sigma_0^2 M^{2\gamma}(t_i, \theta_0)$, with associated corresponding realizations of $Y_i$ given by

$$y_i = M(t_i; \theta_0) + M(t_i; \theta_0)^{\gamma} \tilde{\epsilon}_i.$$

A standard algorithm can be used to compute the corresponding bootstrapping estimate $\hat{\theta}_{boot}$ of $\theta_0$ and its empirical distribution. We treat the general case for nonlinear dependence of the model output on the parameters $\theta$. The algorithm is given as follows.

1. First obtain the estimate $\hat{\theta}^0$ from the entire sample $\{y_i\}$ using the GLS given in (12) with $\gamma = 1$. An estimate $\hat{\theta}_{boot}$ can be solved for iteratively as follows.
2. Define the nonconstant variance standardized residuals
$$\bar{s}_i = \frac{y_i - M(t_i; \hat{\theta}^0)}{M(t_i; \hat{\theta}^0)^{\gamma}}, \quad i = 1, 2, \dots, n.$$
Set $m = 0$.
3. Create a bootstrapping sample of size $n$ using random sampling with replacement from the data (realizations) $\{\bar{s}_1, \dots, \bar{s}_n\}$ to form a bootstrapping sample $\{s_1^m, \dots, s_n^m\}$.
4. Create bootstrapping sample points
$$y_i^m = M(t_i; \hat{\theta}^0) + M(t_i; \hat{\theta}^0)^{\gamma} s_i^m,$$
where $i = 1, \dots, n$.
5. Obtain a new estimate $\hat{\theta}^{m+1}$ from the bootstrapping sample $\{y_i^m\}$ using GLS.
6. Set $m = m + 1$ and repeat steps 3-5 until $m \ge M$, where $M$ is large (e.g., $M = 1000$). Here $M$ denotes the number of bootstrap samples, not the model output.

We then calculate the mean, standard error, and confidence intervals using the formulae

$$\hat{\theta}_{boot} = \frac{1}{M} \sum_{m=1}^{M} \hat{\theta}^m,$$

$$\mathrm{Var}(\theta_{boot}) = \frac{1}{M - 1} \sum_{m=1}^{M} (\hat{\theta}^m - \hat{\theta}_{boot})^T (\hat{\theta}^m - \hat{\theta}_{boot}), \tag{14}$$

$$SE_k(\hat{\theta}_{boot}) = \sqrt{\mathrm{Var}(\theta_{boot})_{kk}},$$

where $\theta_{boot}$ denotes the bootstrapping estimator.
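The six steps above can be sketched in Python for a scalar parameter. This is a minimal illustration under stated assumptions and not the implementation used for the results reported here: the one-parameter curve `model` is a hypothetical stand-in for the polymerized mass, the GLS cost (12) is minimized by a golden-section search that assumes the cost is unimodal on the bracket, the same $\gamma$ is used for the initial fit and for the resampled fits, and the synthetic data, seed and number of bootstrap samples are arbitrary.

```python
import math
import random


def model(t, theta):
    """Hypothetical one-parameter stand-in for the polymerized mass M(t; theta)."""
    return 1.0 - math.exp(-theta * t)


def gls_cost(theta, t, y, gamma):
    """GLS cost: sum of squared residuals weighted by M(t_i; theta)^gamma."""
    total = 0.0
    for ti, yi in zip(t, y):
        mi = model(ti, theta)
        total += ((yi - mi) / mi ** gamma) ** 2
    return total


def fit(t, y, gamma, lo=0.2, hi=3.0, tol=1e-6):
    """Golden-section search for the GLS minimizer (assumes a unimodal cost on [lo, hi])."""
    r = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    x1, x2 = b - r * (b - a), a + r * (b - a)
    f1, f2 = gls_cost(x1, t, y, gamma), gls_cost(x2, t, y, gamma)
    while b - a > tol:
        if f1 < f2:
            b, x2, f2 = x2, x1, f1
            x1 = b - r * (b - a)
            f1 = gls_cost(x1, t, y, gamma)
        else:
            a, x1, f1 = x1, x2, f2
            x2 = a + r * (b - a)
            f2 = gls_cost(x2, t, y, gamma)
    return 0.5 * (a + b)


def bootstrap(t, y, gamma, n_boot, rng):
    """Residual bootstrap for nonconstant variance data; returns (mean, standard error)."""
    theta0 = fit(t, y, gamma)                                        # step 1
    m0 = [model(ti, theta0) for ti in t]
    s = [(yi - mi) / mi ** gamma for yi, mi in zip(y, m0)]          # step 2
    estimates = []
    for _ in range(n_boot):                                          # step 6
        resampled = [rng.choice(s) for _ in t]                       # step 3
        y_boot = [mi + mi ** gamma * si for mi, si in zip(m0, resampled)]  # step 4
        estimates.append(fit(t, y_boot, gamma))                      # step 5
    mean = sum(estimates) / n_boot
    var = sum((e - mean) ** 2 for e in estimates) / (n_boot - 1)
    return mean, math.sqrt(var)


# Synthetic data with gamma = 0.6 and true theta = 0.8 (illustrative values only).
rng = random.Random(1)
t = [0.2 * (i + 1) for i in range(40)]
y = [model(ti, 0.8) + model(ti, 0.8) ** 0.6 * rng.gauss(0.0, 0.02) for ti in t]
theta_boot, se_boot = bootstrap(t, y, gamma=0.6, n_boot=200, rng=rng)
print("bootstrap mean:", theta_boot, "bootstrap SE:", se_boot)
```

For a vector parameter the scalar search would be replaced by a multivariate optimizer and the variance by the covariance matrix in (14); the resampling steps themselves are unchanged.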
5.2 Estimation of two parameters

We first carried out estimation for the 2 parameters $k_I^+$ and $k_I^-$. We use the GLS formulation with $\gamma = 0.6$. We fix globally (based on previous estimations with DS 4) the parameter values

| $k_{on}^N$ | $k_{off}^N$ | $k_{on}^{min}$ | $k_{on}^{max}$ | $x_1$ | $x_2$ | $i_{max}$ |
|---|---|---|---|---|---|---|
| 4616.962 | 93.332 | 1684.381 | $1.5152 \cdot 10^{9}$ | 0.0626 | 0.859 | $3.542 \cdot 10^{5}$ |

and used the initial guesses for the parameters given by

| | $k_I^+$ | $k_I^-$ |
|---|---|---|
| $q_0$ | 2.1600 | 10.9270 |

We then used the bootstrapping algorithm and obtained the following means and standard errors for $M = 1000$ which, as reported below, compare quite well with the asymptotic theory estimates. The corresponding distributions are shown in Figures 14 and 15.

| | $k_I^+$ (boot, GLS) | $k_I^-$ (boot, GLS) | $k_I^+$ (asymp, GLS) | $k_I^-$ (asymp, GLS) |
|---|---|---|---|---|
| mean | 2.158 | 10.911 | 2.157 | 10.911 |
| SE | 0.0044 | 0.0247 | 0.00396 | 0.0225 |

Figure 14: Two parameters estimation ($k_I^+$, $k_I^-$). Bootstrapping distribution for $k_I^+$. We use GLS and M = 1000 runs.

Figure 15: Two parameters estimation ($k_I^+$, $k_I^-$). Bootstrapping distribution for $k_I^-$. We use GLS and M = 1000 runs.

5.3 GLS Estimation of 3 Parameters

We tried next to estimate 3 parameters. We again used the GLS formulation with $\gamma = 0.6$. Once again we fixed all the parameters describing the domain and the polymerization function $k_{on}$, and we also fixed either $k_{off}^N$ or $k_{on}^N$ in the corresponding inverse problems.

5.4 GLS Estimation for $k_I^+$, $k_I^-$ and $k_{on}^N$

We fixed values as follows:

| $k_{off}^N$ | $k_{on}^{min}$ | $k_{on}^{max}$ | $x_1$ | $x_2$ | $i_{max}$ |
|---|---|---|---|---|---|
| 93.33 | 1684.38 | $1.5 \cdot 10^{9}$ | 0.062 | 0.859 | $3.5 \cdot 10^{5}$ |

We used as initial parameter values:

| | $k_I^+$ | $k_I^-$ | $k_{on}^N$ |
|---|---|---|---|
| $q_0$ | 2.1600 | 10.9270 | 4616.962 |

We obtained the estimated parameters together with the corresponding standard errors, variances and the condition numbers $\kappa$ of the corresponding sensitivity matrices for the four data sets as reported below. The 95% confidence results based on the asymptotic theory are also depicted for DS 4 in Figure 16.

| | $k_I^+$ | $k_I^-$ | $k_{on}^N$ | SE | $\sigma^2$ | $\kappa$ |
|---|---|---|---|---|---|---|
| DS 1 | 2.26 | 13.49 | 4616.96 | (0.012, 0.099, 53.925) | $8.52 \cdot 10^{-6}$ | $8.89 \cdot 10^{10}$ |
| DS 2 | 2.99 | 16.20 | 4616.96 | (0.021, 0.151, 56.691) | $9.67 \cdot 10^{-6}$ | $4.37 \cdot 10^{10}$ |
| DS 3 | 2.18 | 15.76 | 9840.31 | (0.011, 0.103, 90.466) | $6.45 \cdot 10^{-6}$ | $3.94 \cdot 10^{11}$ |
| DS 4 | 2.16 | 10.91 | 4616.96 | (0.0089, 0.0649, 45.262) | $6.36 \cdot 10^{-6}$ | $7.14 \cdot 10^{10}$ |

Figure 16: Confidence Intervals (95% confidence intervals for $k_I^+$, $k_I^-$ and $k_{on}^N$, plotted by data set).

To compare these asymptotic results with bootstrapping, we carried out bootstrapping with Data Set (DS) 4 for the estimation of $k_I^+$, $k_I^-$ and $k_{on}^N$ with the same initial values as above. We then obtained the following means and standard errors for a run with $M = 1000$, in comparison to the asymptotic theory.

| | $k_I^+$ (boot) | $k_I^-$ (boot) | $k_{on}^N$ (boot) | $k_I^+$ (asymp) | $k_I^-$ (asymp) | $k_{on}^N$ (asymp) |
|---|---|---|---|---|---|---|
| mean | 2.153 | 10.887 | 4616.962 | 2.157 | 10.910 | 4616.962 |
| SE | 0.0039 | 0.0219 | 0.00003 | 0.0089 | 0.0649 | 45.262 |

Of particular interest are the values obtained for $k_{on}^N$ and the bootstrapping standard errors for $k_{on}^N$, which are extremely small. It should be noted that the sensitivity of the model output on $k_{on}^N$ is also very small.
Thus one might conjecture that the iterations in the bootstrapping algorithm do not change the values of $k_{on}^N$ very much and hence one observes the extremely small SE that are produced for the bootstrapping estimates.

Figure 17: Estimation for $k_I^+$, $k_I^-$ and $k_{on}^N$: Bootstrapping distribution for $k_I^-$ for GLS and 1000 runs.

Figure 18: Estimation for $k_I^+$, $k_I^-$ and $k_{on}^N$: Bootstrapping distribution for $k_I^+$ for GLS and 1000 runs.

Figure 19: Estimation for $k_I^+$, $k_I^-$ and $k_{on}^N$: Bootstrapping distribution for $k_{on}^N$ for GLS and 1000 runs.

5.5 GLS estimation for $k_I^+$, $k_I^-$ and $k_{off}^N$

In another test, we fixed $k_{on}^N$ and instead estimated $k_{off}^N$ (along with $k_I^+$ and $k_I^-$). We use the fixed values:

| $k_{on}^N$ | $k_{on}^{min}$ | $k_{on}^{max}$ | $x_1$ | $x_2$ | $i_{max}$ |
|---|---|---|---|---|---|
| 4616.962 | 1684.381 | $1.5152 \cdot 10^{9}$ | 0.0626 | 0.859 | $3.542 \cdot 10^{5}$ |

and the initial guesses for the parameters to be estimated given by:

| | $k_I^+$ | $k_I^-$ | $k_{off}^N$ |
|---|---|---|---|
| $q_0$ | 2.1600 | 10.9270 | 108.256 |

We obtained the estimated parameters and corresponding SE.

| | $k_I^+$ | $k_I^-$ | $k_{off}^N$ | SE | $\sigma^2$ | $\kappa$ |
|---|---|---|---|---|---|---|
| DS 1 | 2.203 | 12.997 | 99.861 | (0.011, 0.091, 1.208) | $8.165 \cdot 10^{-6}$ | $4.912 \cdot 10^{7}$ |
| DS 2 | 2.893 | 15.474 | 100.019 | (0.019, 0.137, 1.279) | $9.323 \cdot 10^{-6}$ | $2.486 \cdot 10^{7}$ |
| DS 3 | 2.168 | 15.631 | 41.935 | (0.011, 0.102, 0.424) | $6.435 \cdot 10^{-6}$ | $9.125 \cdot 10^{6}$ |
| DS 4 | 2.181 | 11.090 | 90.536 | (0.009, 0.066, 0.936) | $6.289 \cdot 10^{-6}$ | $3.043 \cdot 10^{7}$ |

Also in this case, we carried out bootstrapping for DS 4. The bootstrapping distributions for $k_I^+$, $k_I^-$ and $k_{off}^N$ are found in Figures 20-22. We then obtained the following means and standard errors for a run with $M = 1000$ in comparison to the asymptotic theory.
| | $k_I^+$ (boot) | $k_I^-$ (boot) | $k_{off}^N$ (boot) | $k_I^+$ (asymp) | $k_I^-$ (asymp) | $k_{off}^N$ (asymp) |
|---|---|---|---|---|---|---|
| mean | 2.169 | 11.013 | 91.254 | 2.181 | 11.090 | 90.536 |
| SE | 0.0094 | 0.0699 | 1.0392 | 0.009 | 0.066 | 0.936 |

Figure 20: Three-parameter estimation ($k_I^+$, $k_I^-$ and $k_{off}^N$): Bootstrapping distribution for $k_I^+$. We used GLS and M=1000 runs.

Figure 21: Three-parameter estimation ($k_I^+$, $k_I^-$ and $k_{off}^N$): Bootstrapping distribution for $k_I^-$. We used GLS and M=1000 runs.

Figure 22: Three-parameter estimation ($k_I^+$, $k_I^-$ and $k_{off}^N$): Bootstrapping distribution for $k_{off}^N$. We used GLS and M=1000 runs.

5.6 Estimation of 4 main parameters

Following the sensitivity analysis detailed above, we tried to estimate a combination of the parameters $k_I^+$, $k_I^-$, $k_{on}^N$, $k_{off}^N$ for the parameter set with $\kappa_\theta = 4$. The following parameters were fixed from the original 9-parameter fit:

| $k_{on}^{min}$ | $k_{on}^{max}$ | $x_1$ | $x_2$ | $i_{max}$ |
|---|---|---|---|---|
| 1684 | $1.5 \cdot 10^{9}$ | 0.062 | 0.859 | $3.5 \cdot 10^{5}$ |

We obtained the following results for the estimation of the four parameters using data sets 1 to 4. In all of them, the condition number $\kappa$ of the Fisher information matrix is too large for the matrix to be inverted reliably. This, along with the sensitivity results above, strongly suggests that the data sets do not contain sufficient information to estimate 4 or more parameters with any degree of certainty attached to the estimates.

| | $k_I^+$ | $k_I^-$ | $k_{on}^N$ | $k_{off}^N$ | $\sigma^2$ | $\kappa$ |
|---|---|---|---|---|---|---|
| DS 1 | 2.1431 | 12.4751 | 4616.962 | 108.259 | $8.7219 \cdot 10^{-6}$ | $6.1226 \cdot 10^{19}$ |
| DS 2 | 2.7995 | 14.7630 | 4616.957 | 108.4308 | $9.8694 \cdot 10^{-6}$ | $1.4442 \cdot 10^{19}$ |
| DS 3 | 2.180 | 15.757 | 4618.599 | 41.369 | $6.4622 \cdot 10^{-6}$ | $1.881 \cdot 10^{17}$ |
| DS 4 | 2.161 | 10.9278 | 4617.3316 | 93.3265 | $6.374 \cdot 10^{-6}$ | $2.144 \cdot 10^{18}$ |

6 Model Comparison Tests

A type of Residual Sum of Squares (RSS) based model selection criterion [8, 9, 10] can be used as a tool for model comparison for certain classes of models. In particular this is true for models such as those given in [3], in which potentially extraneous mechanisms can be eliminated from the model by a simple restriction on the underlying parameter space while the form of the mathematical model remains unchanged. In other words, this methodology can be used to compare two nested mathematical models where the parameter set $\Omega_\theta^H$ (this notation will be defined explicitly in Section 6.1 below) for the restricted model can be identified as a linearly restricted subset of the admissible parameter set $\Omega_\theta$ of the unrestricted model. Indeed, the RSS based model selection criterion is a useful tool to determine whether or not certain terms in the mathematical models are important in describing the given experimental data.

6.1 Ordinary Least Squares

We now turn to the statistical model (9), where the measurement errors are assumed to be independent and identically distributed with zero mean and constant variance $\sigma^2$. In addition, we assume that there exists $\theta_0$ such that the statistical model

$$Y_j = M(t_j; \theta_0) + \mathcal{E}_j, \quad j = 1, 2, \ldots, n, \tag{15}$$

correctly describes the observation process. In other words, (15) is the true model, and $\theta_0$ is the true value of the mathematical model parameter $\theta$.

With our assumption on measurement errors, the mathematical model parameter $\theta$ can be estimated by using the ordinary least squares method; that is, the ordinary least squares estimator of $\theta$ is obtained by solving

$$\theta_n = \arg\min_{\theta \in \Omega_\theta} J_n(\theta; Y).$$

Here $Y = (Y_1, Y_2, \ldots, Y_n)^T$, and the cost function $J_n$ is defined as

$$J_n(\theta; Y) = \frac{1}{n} \sum_{k=1}^{n} \left( Y_k - M(t_k; \theta) \right)^2.$$
The corresponding realization $\hat{\theta}_n$ of $\theta_n$ is obtained by solving

$$\hat{\theta}_n = \arg\min_{\theta \in \Omega_\theta} J_n(\theta; y),$$

where $y$ is a realization of $Y$ (that is, $y = (y_1, y_2, \ldots, y_n)^T$).

As alluded to in the introduction, we might also consider a restricted version of the mathematical model in which the unknown true parameter is assumed to lie in a subset $\Omega_\theta^H \subset \Omega_\theta$ of the admissible parameter space. We assume this restriction can be written as a linear constraint, $H\theta_0 = h$, where $H \in \mathbb{R}^{\kappa_r \times \kappa_q}$ is a matrix having rank $\kappa_r$ (that is, $\kappa_r$ is the number of constraints imposed), and $h$ is a known vector. Thus the restricted parameter space is

$$\Omega_\theta^H = \{ \theta \in \Omega_\theta : H\theta = h \}.$$

Then the null and alternative hypotheses are

$$H_0 : \theta_0 \in \Omega_\theta^H, \qquad H_A : \theta_0 \notin \Omega_\theta^H.$$

We may define the restricted parameter estimator as

$$\theta_{n,H} = \arg\min_{\theta \in \Omega_\theta^H} J_n(\theta; Y),$$

and the corresponding realization is denoted by $\hat{\theta}_{n,H}$. Since $\Omega_\theta^H \subset \Omega_\theta$, it is clear that

$$J_n(\hat{\theta}_n; y) \le J_n(\hat{\theta}_{n,H}; y).$$

This fact forms the basis for a model selection criterion based upon the residual sum of squares.

Using the standard assumptions (given in detail in [9]), one can establish an asymptotic convergence result for the test statistic (which is a function of the observations and is used to determine whether or not the null hypothesis is rejected)

$$U_n = \frac{n \left( J_n(\theta_{n,H}; Y) - J_n(\theta_n; Y) \right)}{J_n(\theta_n; Y)},$$

where the corresponding realization $\hat{U}_n$ is defined as

$$\hat{U}_n = \frac{n \left( J_n(\hat{\theta}_{n,H}; y) - J_n(\hat{\theta}_n; y) \right)}{J_n(\hat{\theta}_n; y)}. \tag{16}$$

This asymptotic convergence result is summarized in the following theorem.

Theorem 6.1. Under assumptions detailed in [9, 10] and assuming the null hypothesis $H_0$ is true, then $U_n$ converges in distribution (as $n \to \infty$) to a random variable $U$ having a chi-square distribution with $\kappa_r$ degrees of freedom.
The above theorem suggests that if the sample size $n$ is sufficiently large, then $U_n$ is approximately chi-square distributed with $\kappa_r$ degrees of freedom. We use this fact to determine whether or not the null hypothesis $H_0$ is rejected. To do that, we choose a significance level $\alpha$ (usually chosen to be 0.05) and use $\chi^2$ tables to obtain the corresponding threshold value $\tau$ so that $\mathrm{Prob}(U > \tau) = \alpha$. We next compute $\hat{U}_n$ and compare it to $\tau$. If $\hat{U}_n > \tau$, then we reject the null hypothesis $H_0$ with confidence level $(1 - \alpha)100\%$; otherwise, we do not reject. We emphasize that care should be taken in stating conclusions: we either reject or do not reject $H_0$ at the specified level of confidence. The table below illustrates the threshold values for $\chi^2(1)$ with the given significance level.

| $\alpha$ | $\tau$ | confidence level |
|---|---|---|
| 0.25 | 1.32 | 75% |
| 0.1 | 2.71 | 90% |
| 0.05 | 3.84 | 95% |
| 0.01 | 6.63 | 99% |
| 0.001 | 10.83 | 99.9% |

Similar tables can be found in any elementary statistics text or online, or can be calculated with a software package such as Matlab; the table is given here for illustrative purposes and also for use in the examples demonstrated below.

6.2 Generalized Least Squares

The model comparison results outlined above can be extended to deal with generalized least squares problems in which measurement errors are independent with $E(\mathcal{E}_k) = 0$ and $\mathrm{Var}(\mathcal{E}_k) = \sigma^2 w^2(t_k, \hat{\theta})$, $k = 1, 2, \ldots, n$, where $w$ is some known real-valued function with $w(t, \hat{\theta}) \neq 0$ for any $t$. This is achieved through rescaling the observations in accordance with their variance (as discussed in [9]) so that the resulting (transformed) observations are identically distributed as well as independent.
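As a rough illustration of the test procedure of Section 6.1, the following Python sketch computes the cost $J_n$, the realized statistic $\hat{U}_n$ of (16), and the $\chi^2(1)$ threshold $\tau$. It is only a sketch under stated assumptions: the function names are hypothetical and not taken from the original computations, only the case $\kappa_r = 1$ is handled (using the fact that the square of a standard normal variable is $\chi^2(1)$ distributed), and the numerical inputs are the cost values quoted for DS 4 in the first comparison of Section 6.3.

```python
import math
from statistics import NormalDist


def ols_cost(observations, model_values):
    """Cost J_n = (1/n) * sum_k (y_k - M(t_k; theta))^2 for given model outputs."""
    n = len(observations)
    return sum((y - m) ** 2 for y, m in zip(observations, model_values)) / n


def rss_test_statistic(n, cost_restricted, cost_unrestricted):
    """Realized statistic U_n = n * (J_restricted - J_unrestricted) / J_unrestricted."""
    return n * (cost_restricted - cost_unrestricted) / cost_unrestricted


def chi2_1_threshold(alpha):
    """Threshold tau with P(U > tau) = alpha for U ~ chi-square with 1 degree of freedom.

    Assumption: one constraint only. If Z ~ N(0, 1) then Z^2 ~ chi2(1),
    so tau is the square of the (1 - alpha/2) standard normal quantile.
    """
    z = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    return z * z


def chi2_1_pvalue(u):
    """P(U > u) for U ~ chi2(1), i.e. 2 * (1 - Phi(sqrt(u))) = erfc(sqrt(u / 2))."""
    return math.erfc(math.sqrt(u / 2.0))


# Cost values quoted for DS 4: {k_I^+, k_I^-} vs. {k_I^+, k_I^-, k_off^N}.
n = 699
cost_restricted = 0.0044192109
cost_unrestricted = 0.0043709501

u_hat = rss_test_statistic(n, cost_restricted, cost_unrestricted)
tau = chi2_1_threshold(0.01)

print(f"U_n = {u_hat:.4f}, tau(alpha=0.01) = {tau:.2f}, p-value = {chi2_1_pvalue(u_hat):.4f}")
print("reject H_0" if u_hat > tau else "do not reject H_0")
```

For more than one constraint the threshold would have to come from a general chi-square quantile routine instead of the normal quantile used here. In the generalized least squares setting of Section 6.2, the same statistic would be computed from costs evaluated on the rescaled observations.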
6.3 Results for PolyQ Aggregation Models

We then carried out a series of model comparison tests (we again used DS 4) for nested models to determine if an added parameter yields a statistically significantly improved model fit. Our null hypothesis in each case was:

$H_0$: The restricted model is adequate (i.e., the fit-to-data is not significantly improved with the model containing the additional parameter as a parameter to be estimated).

We obtained the following results.

1. Model with estimation of $\{k_I^+, k_I^-\}$ vs. the model with estimation of $\{k_I^+, k_I^-, k_{off}^N\}$: We find with $n = 699$, $J_n(\hat{\theta}_{n,H}; Y) = .0044192109$, $J_n(\hat{\theta}_n; Y) = .0043709501$ and $\hat{U}_n = 7.7178$. Thus we reject $H_0$ at a 99% confidence level.

2. Model with estimation of $\{k_I^+, k_I^-\}$ vs. the model with estimation of $\{k_I^+, k_I^-, k_{on}^N\}$: We find $J_n(\hat{\theta}_n; Y) = .0044192108$ with $\hat{U}_n = 7.49 \times 10^{-6}$. Thus we do not reject $H_0$ at a 99% confidence level.

3. Model with estimation of $\{k_I^+, k_I^-, k_{off}^N\}$ vs. the model with estimation of $\{k_I^+, k_I^-, k_{off}^N, k_{on}^N\}$: To the order of computation we find no difference in the cost functions in this case and therefore we do not reject $H_0$ at a confidence level of 99%.

4. Model with estimation of $\{k_I^+, k_I^-, k_{on}^N\}$ vs. the model with estimation of $\{k_I^+, k_I^-, k_{on}^N, k_{off}^N\}$: We find $J_n(\hat{\theta}_n; Y) = .0043709780$ with $\hat{U}_n = 7.7133$ and hence we reject $H_0$ with a confidence level of 99%.

From these and the preceding results we conclude that the information content of the typical data set for the dynamics considered here will support at most 3 parameters estimated with reasonable confidence levels, and these are the parameters $\{k_I^+, k_I^-, k_{off}^N\}$.

7 Conclusions and Suggested Further Efforts

From the efforts reported above we draw several conclusions.
For the majority of data sets, the GLS residual plots with $\gamma = 0.6$ are random when fitted for data points $M(t_k) \ge 0.12$. As conjectured earlier, this may be because the early formation of aggregates is somewhat stochastic in nature, which is not well described by the mathematical and/or statistical models. It appears that one needs special consideration of smaller polymer sizes. Indeed we suspect from additional discussions with our colleagues that perhaps the nucleation step might be dominated by a stochastic rather than deterministic process in the early stages (i.e., for small polymer sizes). This is a possible direction of further investigation.

Based on several different mathematical/statistical methodologies (sensitivities, asymptotic analysis, bootstrapping, model comparison tests), the data sets we considered do not contain sufficient information for the reliable estimation of all 9 parameters of interest. Indeed our findings suggest that at most 3 parameters can be reliably estimated with the data sets typical of those presented here, and that these parameters are $\{k_I^+, k_I^-, k_{off}^N\}$. Recent related efforts [2] suggest that perhaps there are experimental design questions that could be addressed to collect data that might support the more sophisticated models derived in [23], especially in order to investigate information coming from different initial concentrations. Indeed, we have considered here data sets related to experiments carried out with the same initial concentration. Adapting the previously used techniques to simultaneously or successively use all the information content in data sets from experiments carried out at different initial concentrations is a challenging problem (see [22] for a discussion of the effect of initial concentration on nucleated polymerization).
Here we conclude that at most 3 parameters $\{k_I^+, k_I^-, k_{off}^N\}$ can be reliably estimated with the data sets investigated. The first two parameters determine the balance between the normal and abnormal protein concentrations and the third represents the stability of the nucleus against the degradation into monomeric entities. These three parameters are related to the early steps of the aggregation process, and thus we conclude that the model applied to these data sets does not provide any insight into the polymerization of larger polymers. Since this is the case, there is little motivation to modify the polymerization function depicted in Figure 2 until further data collection procedures are pursued.

Acknowledgements

This research was supported in part (MD, CK) by the ERC Starting Grant SKIPPERAD, in part (HTB) by Grant Number NIAID R01AI071915-10 from the National Institute of Allergy and Infectious Diseases, and in part (HTB) by the Air Force Office of Scientific Research under grant number AFOSR FA9550-12-1-0188.

References

[1] B.M. Adams, H.T. Banks, M. Davidian, and E.S. Rosenberg, Model fitting and prediction with HIV treatment interruption data, Center for Research in Scientific Computation Technical Report CRSC-TR05-40, NC State Univ., October, 2005; Bulletin of Math. Biology, 69 (2007), 563–584.

[2] Kaska Adoteye, H.T. Banks and Kevin B. Flores, Optimal design of non-equilibrium experiments for genetic network interrogation, CRSC-TR14-12, N. C. State University, Raleigh, NC, September, 2014; Applied Mathematics Letters, 40 (2015), 84–89; DOI: 10.1016/j.aml.2014.09.013.

[3] H.T. Banks, J.E. Banks, K. Link, J.A. Rosenheim, Chelsea Ross, and K.A. Tillman, Model comparison tests to determine data information content, CRSC-TR14-13, N. C. State University, Raleigh, NC, October, 2014; Applied Math Letters, to appear.

[4] H.T. Banks, R. Baraldi, K. Cross, K. Flores, C. McChesney, L. Poag, and E. Thorpe, Uncertainty quantification in modeling HIV viral mechanics, CRSC-TR13-16, N. C. State University, Raleigh, NC, December, 2013; Math. Biosciences and Engr., submitted.

[5] H.T. Banks, A. Cintron-Arias and F. Kappel, Parameter selection methods in inverse problem formulation, CRSC-TR10-03, N.C. State University, February, 2010, Revised, November, 2010; in Mathematical Modeling and Validation in Physiology: Application to the Cardiovascular and Respiratory Systems, (J. J. Batzel, M. Bachar, and F. Kappel, eds.), pp. 43–73, Lecture Notes in Mathematics Vol. 2064, Springer-Verlag, Berlin 2013.

[6] H. T. Banks, M. Davidian, S. Hu, G. M. Kepler, and E. S. Rosenberg, Modeling HIV immune response and validation with clinical data, Journal of Biological Dynamics, 2 (2008), 357–385.

[7] H.T. Banks, M. Doumic and C. Kruse, Efficient numerical schemes for Nucleation-Aggregation models: Early steps, CRSC-TR14-01, N. C. State University, Raleigh, NC, March, 2014.

[8] H.T. Banks and B.G. Fitzpatrick, Statistical methods for model comparison in parameter estimation problems for distributed systems, Journal of Mathematical Biology, 28 (1990), 501–527.

[9] H.T. Banks, S. Hu and W.C. Thompson, Modeling and Inverse Problems in the Presence of Uncertainty, Taylor/Francis-Chapman/Hall-CRC Press, Boca Raton, FL, 2014.

[10] H.T. Banks and H.T. Tran, Mathematical and Experimental Modeling of Physical and Biological Processes, CRC Press, Boca Raton, FL, 2009.

[11] V. Calvez, N. Lenuzza, M. Doumic, J.-P. Deslys, F. Mouthon and B. Perthame, Prion dynamic with size dependency - strain phenomena, J. of Biol. Dyn., 4 (1), 28–42.

[12] R.J. Carroll and D. Ruppert, Transformation and Weighting in Regression, Chapman & Hall, New York, 1988.

[13] R.J. Carroll, C.F.J. Wu and D. Ruppert, The effect of estimating weights in Weighted Least Squares, J. Amer. Statistical Assoc., 83 (1988), 1045–1054.

[14] J.F. Collet, T. Goudon, F. Poupaud and A. Vasseur, The Becker-Döring system and its Lifshitz-Slyozov limit, SIAM J. Appl. Math., 62 (2002), 1488–1500.

[15] M. Davidian, Nonlinear Models for Univariate and Multivariate Response, ST 762 Lecture Notes, Chapters 2, 3, 9 and 11, 2007; http://www4.stat.ncsu.edu/~davidian/courses.html

[16] M. Davidian and D.M. Giltinan, Nonlinear Models for Repeated Measurement Data, Chapman and Hall, London, 2000.

[17] T.J. DiCiccio and B. Efron, Bootstrap confidence intervals, Statistical Science, 11 (1995), 189–228.

[18] M. Doumic, T. Goudon and T. Lepoutre, Scaling limit of a discrete prion dynamics model, Commun. Math. Sci., 7 (2009), 839–865.

[19] B. Efron, The Jackknife, the Bootstrap and Other Resampling Plans, CBMS 38, SIAM Publishing, Philadelphia, PA, 1982.

[20] P. Laurençot and S. Mischler, From the discrete to the continuous coagulation-fragmentation equations, Proc. Royal Society of Edinburgh: Section A Mathematics, 132 (2002), 1219–1248.

[21] R.J. LeVeque, Finite-Volume Methods for Hyperbolic Problems, Cambridge University Press, 2002.

[22] E.T. Powers and D.L. Powers, The kinetics of nucleated polymerizations at high concentrations: Amyloid fibril formation near and above the "supercritical concentration", Biophysical J., 91 (2006), 122–132.

[23] S. Prigent, A. Ballesta, F. Charles, N. Lenuzza, P. Gabriel, L.M. Tine, H. Rezaei and M. Doumic, An efficient kinetic model for assemblies of amyloid fibrils and its application to polyglutamine aggregation, PLoS ONE, 7 (2012), e43273; DOI: 10.1371/journal.pone.0043273.

[24] F. Eghiaian, T. Daubenfeld, Y. Quenet, M. van Audenhaege, A.P. Bouin, G. van der Rest, J. Grosclaude and H. Rezaei, Diversity in prion protein oligomerization pathways results from domain expansion as revealed by hydrogen/deuterium exchange and disulfide linkage, PNAS, 104 (18), 2007, 7414–7419.

[25] S.I. Rubinow, Introduction to Mathematical Biology, John Wiley & Sons, New York, 1975.

[26] G.A.F. Seber and C.J. Wild, Nonlinear Regression, J. Wiley & Sons, Hoboken, NJ, 2003.

[27] Wei-Feng Xue, S.W. Homans and S.E. Radford, Systematic analysis of nucleation-dependent polymerization reveals new insights into the mechanism of amyloid self-assembly, Proc Natl Acad Sci U S A, 105 (2008), 8926–8931.

[28] W.-F. Xue, S. W. Homans, and S. E. Radford, Amyloid fibril length distribution quantified by atomic force microscopy single-particle image analysis, Protein Engineering, Design & Selection: PEDS, 22 (2009), 489–496.

[29] W.-F. Xue and S. E. Radford, An imaging and systems modeling approach to fibril breakage enables prediction of amyloid behavior, Biophysical Journal, 105 (2013), 2811–2819.
[Appended figure panels, captions not recoverable: Polymer distribution c(x, t=12), q=1/32, N_0=100 (schemes: Upwind, LW, Van-Leer, BWLW); Bootstrapping for kiplus, kiminus and koffN with M=300; Fit of M(t) against time (h) for initial concentration c_0 = 100 µM (data sets 1 to 3); Fit of M(t) against time (h) for initial concentration c_0 = 382 µM (data sets 1 to 4); Residuals against t for noise level 0.01, GLS.]
