Bayesian Nonparametric Covariance Regression

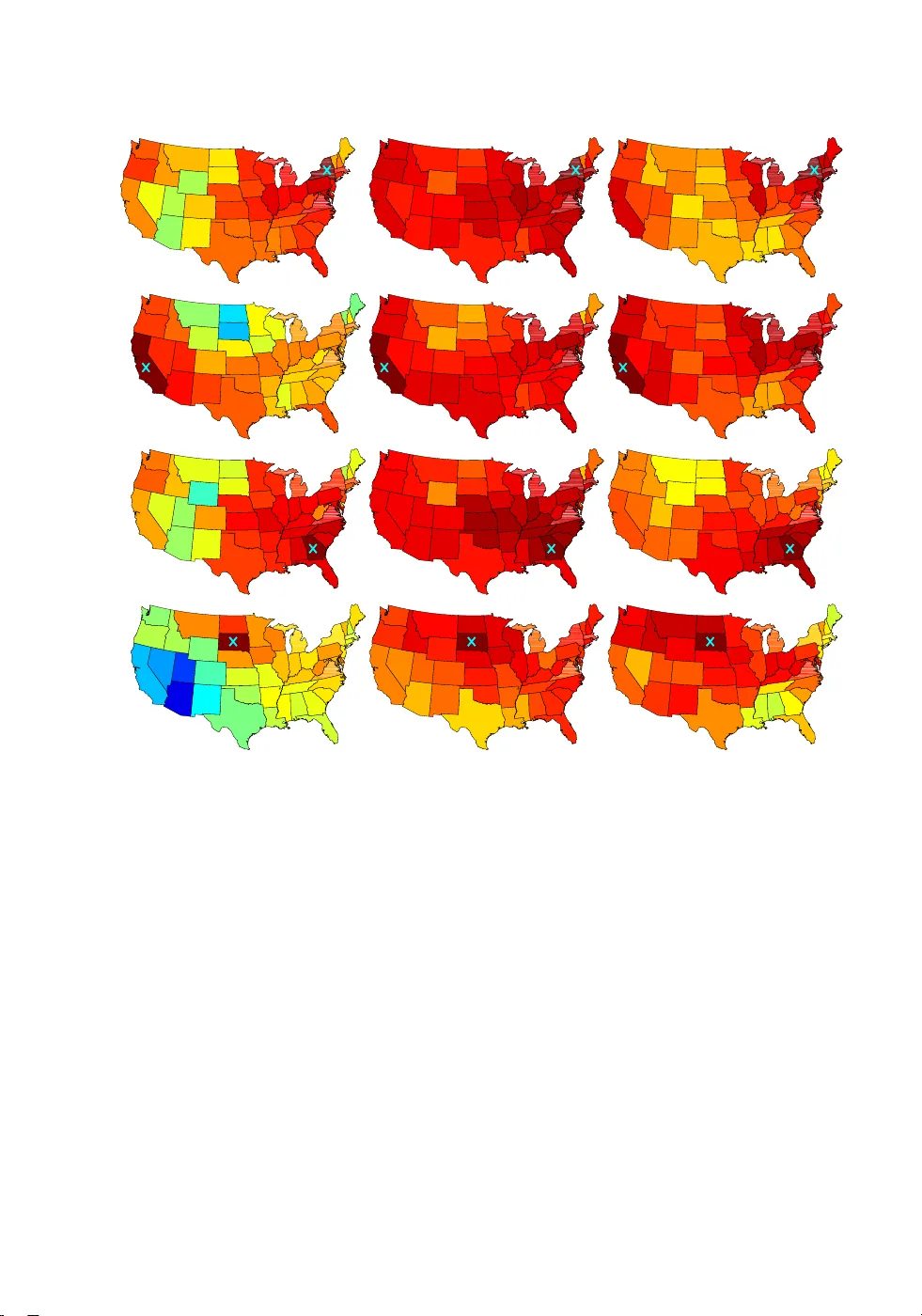

Although there is a rich literature on methods for allowing the variance in a univariate regression model to vary with predictors, time and other factors, relatively little has been done in the multivariate case. Our focus is on developing a class of…

Authors: Emily Fox, David Dunson