Arithmetic Operations Beyond Floating Point Number Precision

In basic computational physics classes, students often ask how to compute a number that exceeds the numerical limit of the machine. While the technique of avoiding overflow and underflow has practical applications in the electrical and electronics engineering industries, it is not commonly used in scientific computing, because scientific notation is adequate in most cases. We present an undergraduate project that deals with such calculations beyond a machine’s numerical limit, known as arbitrary precision arithmetic. The assignment asks students to investigate how to calculate the exact value of a large number beyond floating point precision, using the basic scientific programming language Fortran. The basic concept is to use arrays to decompose the number and allocate finite memory. Examples of the successive multiplication of even numbers and of the multiplication and division of two overflowing floats are presented. The multiple precision scheme has been applied to hardware and firmware design for digital signal processing (DSP) systems, and is gaining importance in scientific computing. Such basic arithmetic operations can be integrated to solve advanced mathematical problems to almost arbitrarily high precision, limited only by the memory of the host machine.

💡 Research Summary

The paper addresses a pedagogical gap in introductory computational physics and engineering courses: how to perform arithmetic on numbers that exceed the native floating‑point limits of a computer. Scientific notation is adequate for most scientific computing, and explicit overflow and underflow avoidance is practiced mainly in electrical and electronics engineering, but the authors argue that a deeper understanding of arbitrary‑precision arithmetic is valuable for students and for certain scientific applications. The core contribution is an undergraduate project that implements high‑precision integer arithmetic using only standard Fortran constructs and arrays, without relying on external libraries such as GMP or MPFR.

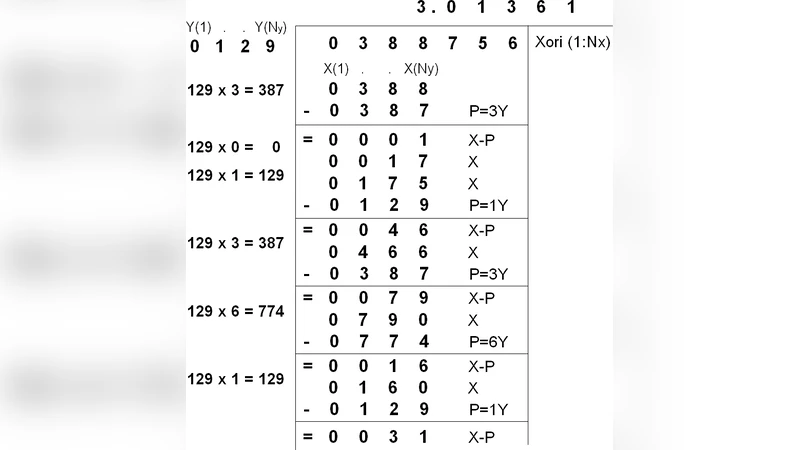

The methodology begins by representing a large integer as a one‑dimensional array of “digits” in a chosen base (e.g., 10⁴ or 10⁶). Each array element aᵢ stores a value between 0 and BASE‑1, and the number equals the sum of aᵢ × BASEⁱ over all indices i. The basic operations (addition, subtraction, multiplication, and division) are coded directly on this representation. Addition and subtraction propagate carries or borrows across the array. Multiplication follows the schoolbook vertical algorithm, accumulating partial products and handling carries with 64‑bit temporaries to avoid intermediate overflow. Division implements a long‑division routine that can be extended to an arbitrary number of fractional digits. The authors emphasize that the algorithmic steps mirror textbook procedures, making the code transparent for educational purposes.
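The representation and two of the operations described above can be sketched as follows. This is a minimal illustration, not the authors' Fortran code: it is written in Python for brevity, the base 10⁴ and the little‑endian limb order are assumptions, and the helper names (`from_int`, `to_int`, `add`, `multiply`) are hypothetical. Python integers are already arbitrary precision, so `to_int` is used only to check results; every value handled inside `add` and `multiply` stays small enough for a fixed‑width machine integer.

```python
BASE = 10**4  # assumed base: each array element ("limb") holds four decimal digits


def from_int(n):
    """Split a non-negative integer into little-endian base-BASE limbs."""
    limbs = []
    while True:
        n, r = divmod(n, BASE)
        limbs.append(r)
        if n == 0:
            return limbs


def to_int(limbs):
    """Recombine limbs as sum(limbs[i] * BASE**i); used here only for checking."""
    return sum(d * BASE**i for i, d in enumerate(limbs))


def add(a, b):
    """Add two limb arrays, propagating the carry from low to high index."""
    result, carry = [], 0
    for i in range(max(len(a), len(b))):
        s = carry
        if i < len(a):
            s += a[i]
        if i < len(b):
            s += b[i]
        carry, digit = divmod(s, BASE)
        result.append(digit)
    if carry:
        result.append(carry)
    return result


def multiply(a, b):
    """Schoolbook multiplication: one row of partial products per limb of a."""
    result = [0] * (len(a) + len(b))
    for i, ai in enumerate(a):
        carry = 0
        for j, bj in enumerate(b):
            # t is always below BASE**2, so with BASE = 10**4 or 10**6
            # it would fit in a 64-bit temporary.
            t = result[i + j] + ai * bj + carry
            carry, result[i + j] = divmod(t, BASE)
        result[i + len(b)] += carry
    while len(result) > 1 and result[-1] == 0:  # strip leading zero limbs
        result.pop()
    return result
```

For example, `from_int(123456789)` yields `[6789, 2345, 1]`, the limbs of 1 × BASE² + 2345 × BASE + 6789.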

Two illustrative assignments are presented. The first computes the product of the first k even numbers (2 × 4 × 6 × … × 2k). As k grows, the result quickly exceeds the range of double‑precision floating point, causing overflow in standard arithmetic. Using the array‑based scheme, students can observe the growth of the number, monitor memory consumption, and measure execution time, thereby gaining intuition about the trade‑off between precision and resources. The second assignment deals with the multiplication and subsequent division of two floating‑point numbers that have already overflowed. The approach separates each operand into mantissa and exponent, converts the mantissas into the arbitrary‑precision array format, performs the multiplication (or division) on the mantissas, and finally recombines the result with the appropriate exponent adjustment. This demonstrates that the technique can recover accurate results even when the native floating‑point representation has lost significance.
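The first assignment can be sketched as below, again in Python rather than the Fortran used in the project. The base 10⁴, the little‑endian limb layout, and the function names (`mul_small`, `even_product`, `to_decimal`, `float_even_product`) are assumptions made for illustration. Because each factor 2n is a small integer, a single pass over the array with a running carry is enough, and the full array‑by‑array multiplication is not needed.

```python
import math

BASE = 10**4  # assumed base: four decimal digits per array element


def mul_small(limbs, m):
    """Multiply a little-endian limb array by a small non-negative integer m."""
    result, carry = [], 0
    for d in limbs:
        carry, digit = divmod(d * m + carry, BASE)
        result.append(digit)
    while carry:  # the final carry may span more than one limb
        carry, digit = divmod(carry, BASE)
        result.append(digit)
    return result


def even_product(k):
    """Return 2 * 4 * 6 * ... * 2k as a limb array."""
    limbs = [1]
    for n in range(1, k + 1):
        limbs = mul_small(limbs, 2 * n)
    return limbs


def to_decimal(limbs):
    """Format limbs as a decimal string; lower limbs are zero-padded to 4 digits."""
    return str(limbs[-1]) + "".join(f"{d:04d}" for d in reversed(limbs[:-1]))


def float_even_product(k):
    """Same product in double precision; becomes inf once the range is exceeded."""
    x = 1.0
    for n in range(1, k + 1):
        x *= 2.0 * n
    return x
```

With these definitions, `to_decimal(even_product(5))` gives `"3840"`, while `float_even_product(200)` overflows to infinity even though `even_product(200)` still holds every digit of the result.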

Performance comparisons show that the pure Fortran implementation is slower by one to two orders of magnitude relative to highly optimized libraries, but the slowdown is acceptable for classroom experiments. Memory usage scales linearly with the number of digits, and modern workstations with several gigabytes of RAM can comfortably handle numbers with thousands of decimal digits. The authors also discuss real‑world relevance: in digital signal processing (DSP) firmware, fixed‑point arithmetic with limited word length is common, and software‑based multi‑precision routines are employed to extend effective precision without redesigning hardware. Similar needs arise in astrophysics, high‑energy physics, and cryptography, where exact integer results are required.
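Because memory grows linearly with the digit count, it can help to estimate the array size before running a calculation. The sketch below does this for the even‑number product, using the identity 2 × 4 × … × 2k = 2ᵏ · k! and the log‑gamma function. The function names and the default of four decimal digits per array element are assumptions, and the estimate relies on floating‑point logarithms, so it could be off by one when the product lies extremely close to a power of ten.

```python
import math


def even_product_digits(k):
    """Estimate the number of decimal digits of 2 * 4 * ... * 2k = 2**k * k!."""
    # log10(2**k * k!) = k*log10(2) + ln(k!)/ln(10), with ln(k!) = lgamma(k + 1)
    log10_value = k * math.log10(2) + math.lgamma(k + 1) / math.log(10)
    return math.floor(log10_value) + 1


def limbs_needed(k, digits_per_limb=4):
    """Number of array elements needed at the assumed digits-per-element."""
    return math.ceil(even_product_digits(k) / digits_per_limb)
```

For k = 10 the product is 3715891200, and `even_product_digits(10)` returns 10, so three elements suffice in base 10⁴.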

In conclusion, the project serves a dual purpose. It provides a concrete, hands‑on experience with arbitrary‑precision arithmetic, reinforcing concepts of overflow, underflow, and numerical stability, while also illustrating how such techniques can be applied in practical engineering contexts. The paper suggests future extensions, including parallelization with OpenMP, implementation of faster multiplication algorithms (Karatsuba, FFT‑based), and integration with existing scientific libraries to bridge the gap between educational simplicity and production‑level performance.