A Distributed Newton Method for Network Utility Maximization

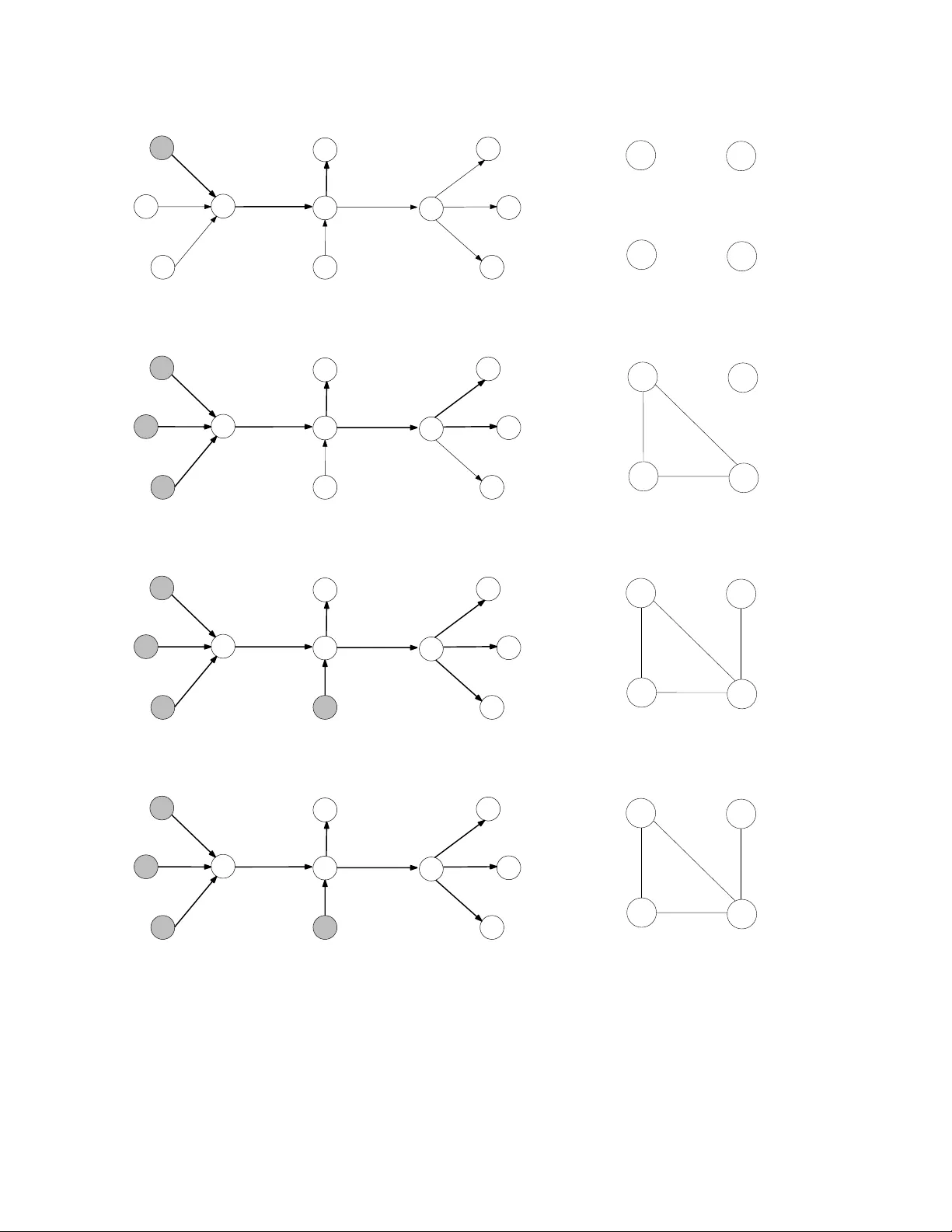

Most existing work solves Network Utility Maximization (NUM) problems in a distributed manner using dual decomposition and subgradient methods, which suffer from slow convergence. This work develops an alternative distributed Newt…

Authors: Ermin Wei, Asuman Ozdaglar, Ali Jadbabaie