In this paper, we describe efficient MapReduce simulations of parallel algorithms specified in the BSP and PRAM models. We also provide some applications of these simulation results to problems in parallel computational geometry for the MapReduce framework, which result in efficient MapReduce algorithms for sorting, 1-dimensional all nearest-neighbors, 2-dimensional convex hulls, 3-dimensional convex hulls, and fixed-dimensional linear programming. For the case when reducers can have a buffer size of $B=O(n^\epsilon)$, for a small constant $\epsilon>0$, all of our MapReduce algorithms for these applications run in a constant number of rounds and have a linear-sized message complexity, with high probability, while guaranteeing with high probability that all reducer lists are of size $O(B)$.

Deep Dive into Simulating Parallel Algorithms in the MapReduce Framework with Applications to Parallel Computational Geometry.

The MapReduce framework [12,13] is a programming paradigm for designing parallel and distributed algorithms, which can be easily implemented in cloud computing environments and server clusters (e.g., see [24]). It provides a simple programming interface designed to make it easy for a programmer to write a parallel program that efficiently performs a data-intensive computation. This framework is gaining widespread interest in both theory and systems domains, in that it is motivating new models for parallel computing [15,21] while also being used in Google data centers and as a part of the open-source Hadoop system [29] for server clusters. In this framework, a computation is specified as a sequence of map, shuffle, and reduce steps that operate on a set $X = \{x_1, x_2, \ldots, x_n\}$ of values:

• A map step applies a function, $\mu$, to each value, $x_i$, to produce a key-value pair, $(k_i, v_i)$. To allow for parallel execution, the computation of the function, $\mu(x_i) \to (k_i, v_i)$, must depend only on $x_i$.

• A shuffle step collects all the key-value pairs produced in the previous map step, and produces a set of lists, $L_k = (k; v_{i_1}, v_{i_2}, \ldots)$, where each such list consists of all the values, $v_{i_j}$, such that $k_{i_j} = k$ for a key $k$ assigned in the map step.

• A reduce step applies a function, $\rho$, to each list, $L_k = (k; v_{i_1}, v_{i_2}, \ldots)$, formed in the shuffle step, to produce a set of values, $y_{j_1}, y_{j_2}, \ldots$. The reduction function, $\rho$, is allowed to be defined sequentially on $L_k$, but should be independent of other lists $L_{k'}$ where $k' \neq k$.
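The three steps above can be sketched as a small sequential simulation. This is only an illustrative sketch, not the paper's implementation: the function names (`map_step`, `shuffle_step`, `reduce_step`, `run_round`) and the toy input are assumptions introduced here.

```python
from collections import defaultdict

def map_step(values, mu):
    # mu sees one value at a time, so each call could run in parallel.
    return [mu(x) for x in values]

def shuffle_step(pairs):
    # Group values by key: one list L_k per distinct key k.
    lists = defaultdict(list)
    for k, v in pairs:
        lists[k].append(v)
    return dict(lists)

def reduce_step(lists, rho):
    # rho processes each list on its own, independent of all other lists.
    outputs = []
    for k, vs in lists.items():
        outputs.extend(rho(k, vs))
    return outputs

def run_round(values, mu, rho):
    # One full round: map, then shuffle, then reduce.
    return reduce_step(shuffle_step(map_step(values, mu)), rho)

# Toy instance (hypothetical): sum the integers 1..10 grouped by residue mod 3.
result = run_round(range(1, 11),
                   lambda x: (x % 3, x),
                   lambda k, vs: [(k, sum(vs))])
print(sorted(result))  # [(0, 18), (1, 22), (2, 15)]
```

Keeping `mu` a function of a single value and `rho` a function of a single list mirrors the independence requirements stated in the bullets.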

Outputs from a reduce step can, in general, be used as inputs to another round of map-shuffle-reduce steps. So we allow the output values from a reduce step to be either final values, which are included in the final output of the algorithm, or intermediate values, which are used as input for another round of map-shuffle-reduce steps. Typically, only values output in the last round of the algorithm are labeled as final. Thus, a typical MapReduce computation is described as a sequence of map-shuffle-reduce steps that perform a desired action and produce the output after the last reduce step.
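To illustrate how intermediate values feed later rounds, here is a minimal multi-round sketch that sums a list by repeatedly grouping values into blocks. The example, the names `run_round` and `tree_sum`, and the `fan_in` parameter are assumptions made for illustration, not a construction taken from the paper.

```python
from collections import defaultdict

def run_round(values, mu, rho):
    # One map-shuffle-reduce round, simulated sequentially.
    lists = defaultdict(list)
    for x in values:
        k, v = mu(x)
        lists[k].append(v)
    outputs = []
    for k, vs in lists.items():
        outputs.extend(rho(k, vs))
    return outputs

def tree_sum(numbers, fan_in):
    # Hypothetical example: each reducer adds up at most fan_in values.
    if fan_in < 2:
        raise ValueError("fan_in must be at least 2")
    values = list(enumerate(numbers))  # (index, number) pairs
    if not values:
        return 0, 0
    rounds = 0
    while len(values) > 1:
        # Outputs of this round are intermediate values for the next one.
        values = run_round(values,
                           lambda x: (x[0] // fan_in, x[1]),
                           lambda k, vs: [(k, sum(vs))])
        rounds += 1
    # The single remaining value is the final output.
    return values[0][1], rounds

print(tree_sum(list(range(1, 17)), 4))  # (136, 2)
```

With 16 inputs and a fan-in of 4, the first round leaves 4 intermediate partial sums and the second round produces the single final value.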

For example, consider an often-cited MapReduce algorithm to count all the instances of words in a document. Given a document, $D$, we define the set of input values $X$ to be all the words in the document and we then proceed as follows:

1. Map: for each word, $w$, in the document, map $w$ to $(w, 1)$.

2. Shuffle: collect all the $(w, 1)$ pairs for each word, producing a list $(w; 1, 1, \ldots, 1)$, noting that the number of 1's in each such list is equal to the number of times $w$ appears in the document.

3. Reduce: scan each list $(w; 1, 1, \ldots, 1)$, summing up the number of 1's in each such list, and output a pair $(w, n_w)$ as a final output value, where $n_w$ is the number of 1's in the list for $w$.

This single-round computation clearly computes the number of times each word appears in D.
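The word-counting round can be written out directly as a short sketch. Splitting the document on whitespace and the function name `word_count` are simplifying assumptions made here.

```python
from collections import defaultdict

def word_count(document):
    # Assumption: words are separated by whitespace.
    words = document.split()

    # Map: each word w becomes the pair (w, 1).
    pairs = [(w, 1) for w in words]

    # Shuffle: gather the 1's for each word into a list (w; 1, 1, ..., 1).
    lists = defaultdict(list)
    for w, one in pairs:
        lists[w].append(one)

    # Reduce: sum the 1's in each list to get (w, n_w).
    return {w: sum(ones) for w, ones in lists.items()}

print(word_count("the cat and the hat"))
# {'the': 2, 'cat': 1, 'and': 1, 'hat': 1}
```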

There are several metrics that one can use to measure the efficiency of a MapReduce algorithm over the course of its execution, including the following:

• $t$: the number of rounds of map-shuffle-reduce that the algorithm uses.

• $n_{i,1}, n_{i,2}, \ldots$: the reducer I/O sizes for round $i$, so that $n_{i,j}$ is the size of the inputs and outputs for reducer $j$ in round $i$.

• $M_i$: the message complexity of round $i$ of the algorithm, that is, the total size of the inputs and outputs for reducers in round $i$, that is, $M_i = \sum_j n_{i,j}$. We can also define a message complexity, $M = \sum_{i=1}^{t} M_i$, for the entire algorithm.

• $r_i$: the internal running time for round $i$, which is the maximum internal running time taken by a reducer in round $i$, where we assume $r_i \ge \max_j \{n_{i,j}\}$, since a reducer must have a running time that is at least the size of its inputs and outputs. We can also define an internal running time, $r = \sum_{i=1}^{t} r_i$, for the entire algorithm, as well.

• $B$: the buffer size for reducers, that is, the maximum size of the working memory needed by a reducer to process its inputs and outputs (in addition to the storage used for the input itself), taken across all $t$ rounds of the algorithm.
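As a rough illustration of these metrics, the sketch below computes the reducer I/O sizes $n_{1,j}$, the message complexity $M_1$, and the lower bound $\max_j \{n_{1,j}\}$ on $r_1$ for the single word-counting round. It assumes, purely for illustration, that every value in a reducer's list and every output pair has size 1; the function name `word_count_metrics` is hypothetical.

```python
from collections import defaultdict

def word_count_metrics(document):
    # Shuffle result for word counting: one list of 1's per distinct word.
    lists = defaultdict(list)
    for w in document.split():
        lists[w].append(1)

    # n_{1,j}: input size plus output size for each reducer j.
    # Assumption: each list entry and each output pair counts as size 1.
    sizes = {}
    for w, ones in lists.items():
        outputs = [(w, sum(ones))]  # the reducer emits one pair (w, n_w)
        sizes[w] = len(ones) + len(outputs)

    message_complexity = sum(sizes.values())        # M_1 = sum_j n_{1,j}
    r_lower_bound = max(sizes.values(), default=0)  # r_1 >= max_j n_{1,j}
    return sizes, message_complexity, r_lower_bound

sizes, M1, r1_bound = word_count_metrics("the cat and the hat")
print(sizes)     # {'the': 3, 'cat': 2, 'and': 2, 'hat': 2}
print(M1)        # 9
print(r1_bound)  # 3
```

Under this size convention, a document of $n$ words with $d$ distinct words gives $M_1 = n + d$, which is consistent with the $O(n)$ message complexity of the word-counting algorithm.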

We can make a crude calibration of a MapReduce implementation using the following additional parameters:

• $L$: the latency of the shuffle network, which is the number of steps that a mapper or reducer has to wait until it receives its first input in a given round.

• $b$: the bandwidth of the shuffle network, which is the number of elements in a MapReduce computation that can be delivered by the shuffle network in any time unit.

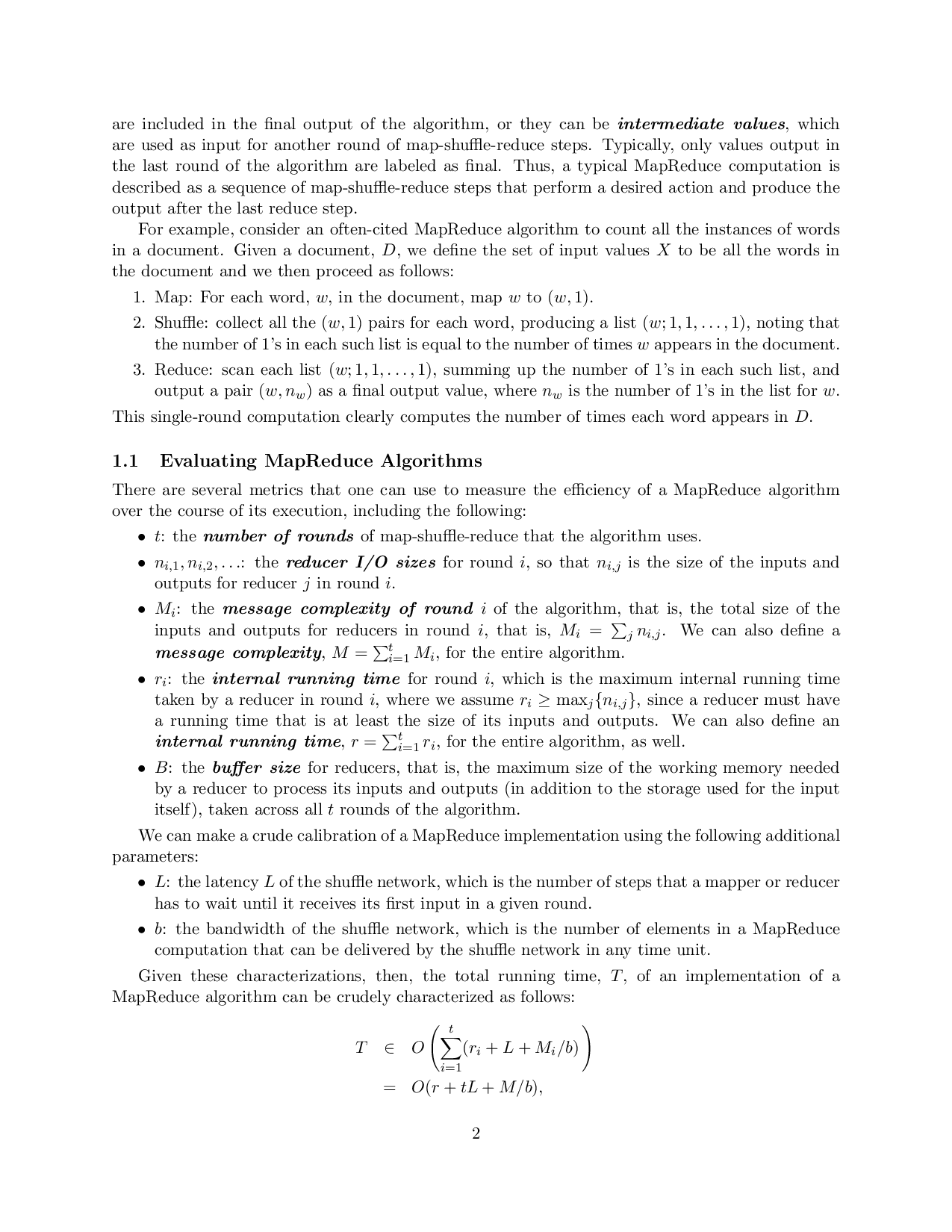

Given these parameters, the total running time, $T$, of an implementation of a MapReduce algorithm can be crudely characterized as follows:

which we call the MapReduce running time. For example, given a document $D$ of $n$ words, the simple word-counting MapReduce algorithm given above has a worst-case performance of $t$ being 1, $M$ being $O(n)$, and

…(Full text truncated)…
