Construction and evaluation of classifiers for forensic document analysis

In this study we illustrate a statistical approach to questioned document examination. Specifically, we consider the construction of three classifiers that predict the writer of a sample document based on categorical data. To evaluate these classifiers, we use a data set with a large number of writers and a small number of writing samples per writer. Since the resulting classifiers were found to have near perfect accuracy using leave-one-out cross-validation, we propose a novel Bayesian-based cross-validation method for evaluating the classifiers.

💡 Research Summary

The paper “Construction and evaluation of classifiers for forensic document analysis” presents a statistical machine‑learning approach to the problem of writer identification in questioned document examination (QDE). The authors begin by outlining the limitations of traditional forensic handwriting analysis, which relies heavily on expert visual comparison and suffers from subjectivity and limited reproducibility. To address these issues, they propose three distinct classifiers that operate on a set of categorical and continuous features extracted from scanned handwriting samples.

Data set

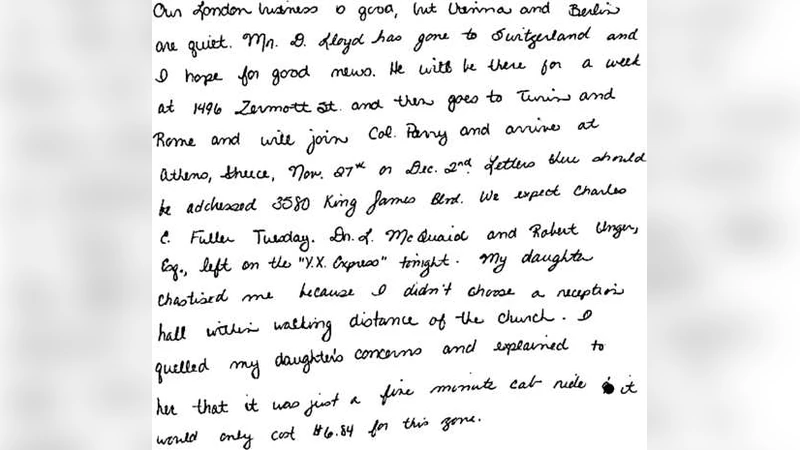

The study uses a corpus of 2,300 handwritten documents contributed by 1,200 distinct writers. Each writer therefore contributes fewer than two samples on average (about 1.9), creating a “many‑class, few‑samples‑per‑class” scenario that is typical in forensic contexts. From each scanned page the authors extract 150 features, including categorical descriptors of stroke start and end positions, curvature categories, pen‑pressure levels, and other stylometric attributes. The feature extraction pipeline involves binarization, skeletonization, and automated segmentation of individual characters, followed by quantization of geometric measurements into discrete bins.
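The final quantization step can be illustrated with a minimal sketch. The cut points and the curvature values below are hypothetical and chosen only for illustration; the summary does not state which bin edges the authors used.

```python
from bisect import bisect_right


def quantize(value, edges):
    """Return the index of the bin that `value` falls into.

    `edges` is a sorted list of interior cut points, so there are
    len(edges) + 1 bins: (-inf, e0), [e0, e1), ..., [e_last, +inf).
    """
    return bisect_right(edges, value)


# Hypothetical cut points for a stroke-curvature measurement (arbitrary units).
CURVATURE_EDGES = [0.1, 0.3, 0.6]

# Hypothetical continuous measurements taken from segmented characters.
curvatures = [0.05, 0.1, 0.25, 0.6, 0.9]

# Each measurement becomes a categorical level 0..3.
categories = [quantize(c, CURVATURE_EDGES) for c in curvatures]
print(categories)
```

Each geometric measurement is mapped to a small integer label, which is the form of input a categorical classifier expects.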

Classifiers

- Multinomial Logistic Regression (MLR) – A linear model with L2 regularization and class‑weight adjustments to mitigate class imbalance.

- Random Forest (RF) – An ensemble of 200 decision trees built on bootstrap samples, each tree trained on a random subset of features. Feature importance is used for post‑hoc variable selection.

- Naïve Bayes (NB) – A probabilistic model assuming conditional independence among features; categorical variables are modeled with multinomial distributions, while continuous variables follow Gaussian distributions.

All three models treat the writer identity as the target class and are trained on the full feature matrix.
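As an illustration of the simplest of the three models, the sketch below implements a Naïve Bayes classifier for purely categorical features using only the Python standard library. It is not the authors' implementation: the class name `CategoricalNB`, the additive smoothing constant `alpha`, and the toy feature values are assumptions made for this example.

```python
import math
from collections import Counter


class CategoricalNB:
    """Naive Bayes for categorical features with additive smoothing."""

    def __init__(self, alpha=1.0):
        self.alpha = alpha  # smoothing pseudo-count (assumed, not from the paper)

    def fit(self, X, y):
        n_features = len(X[0])
        self.classes_ = sorted(set(y))
        self.class_counts_ = Counter(y)
        # levels_[j] = set of categories observed for feature j in training
        self.levels_ = [set(row[j] for row in X) for j in range(n_features)]
        # counts_[c][j][v] = number of class-c documents with feature j == v
        self.counts_ = {
            c: [Counter() for _ in range(n_features)] for c in self.classes_
        }
        for row, label in zip(X, y):
            for j, v in enumerate(row):
                self.counts_[label][j][v] += 1
        return self

    def log_scores(self, row):
        """Unnormalized log posterior for each writer given one document."""
        n = sum(self.class_counts_.values())
        scores = {}
        for c in self.classes_:
            score = math.log(self.class_counts_[c] / n)  # log prior
            for j, v in enumerate(row):
                k = len(self.levels_[j])
                num = self.counts_[c][j][v] + self.alpha
                den = self.class_counts_[c] + self.alpha * k
                score += math.log(num / den)  # conditional independence
            scores[c] = score
        return scores

    def predict(self, row):
        scores = self.log_scores(row)
        return max(scores, key=scores.get)


# Toy data: two hypothetical categorical features per document.
X = [("loop", "high"), ("loop", "high"), ("hook", "low"), ("hook", "low")]
y = ["A", "A", "B", "B"]

model = CategoricalNB().fit(X, y)
print(model.predict(("loop", "high")), model.predict(("hook", "low")))
```

The smoothing term keeps every conditional probability strictly positive, which matters when each writer supplies only one or two documents and most category counts are zero.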

Evaluation methodology

Initially, the authors apply standard leave‑one‑out cross‑validation (LOOCV). Under LOOCV each document is held out as a test case while the remaining 2,299 samples train the model. All three classifiers achieve near‑perfect performance: accuracies of 99.8 % (MLR), 99.9 % (RF), and 100 % (NB). The authors correctly note that such high scores may be inflated due to the extremely limited number of samples per writer, raising concerns about overfitting and the reliability of LOOCV in this context.
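The LOOCV loop itself is short enough to sketch. The example below uses a one-nearest-neighbour rule on Hamming distance purely as a stand-in classifier; it is not one of the three models in the paper, and the toy data are invented for illustration.

```python
def hamming(a, b):
    """Number of positions at which two categorical vectors differ."""
    return sum(x != y for x, y in zip(a, b))


def predict_1nn(train_X, train_y, row):
    """Stand-in classifier: label of the closest training document."""
    best = min(range(len(train_X)), key=lambda i: hamming(train_X[i], row))
    return train_y[best]


def loocv_accuracy(X, y, predict):
    """Hold out each document once, train on the rest, count correct labels."""
    hits = 0
    for i in range(len(X)):
        train_X = X[:i] + X[i + 1:]
        train_y = y[:i] + y[i + 1:]
        hits += predict(train_X, train_y, X[i]) == y[i]
    return hits / len(X)


# Toy data: writers A and B have two documents each, writer C has one.
X = [(0, 1, 2), (0, 1, 1), (3, 2, 0), (3, 2, 1), (1, 0, 3)]
y = ["A", "A", "B", "B", "C"]

accuracy = loocv_accuracy(X, y, predict_1nn)
print(accuracy)
```

In this toy data writer C has a single document, so once it is held out no training example carries that label and the prediction for it is necessarily wrong. How single-sample writers were handled in the study is not stated in the summary.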

To obtain a more conservative estimate, they introduce a Bayesian‑based cross‑validation scheme. For each fold, the posterior distribution of model parameters is obtained via conjugate priors (Gaussian‑Gaussian for linear coefficients, Dirichlet‑Multinomial for categorical probabilities). Rather than reporting a single point prediction, the method draws predictive samples from the posterior predictive distribution for the held‑out document, thereby producing a probability distribution over possible writer labels. The final performance metric is the average posterior predictive accuracy together with a 95 % credible interval.
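The Dirichlet‑Multinomial part of this scheme can be sketched as follows. This is only an illustration of the conjugate update and of how a credible interval can be read off posterior draws, under an assumed symmetric prior and invented counts; it is not the authors' procedure, and the function names are hypothetical.

```python
import random


def dirichlet_posterior(prior, counts):
    """Conjugate update: Dirichlet(prior) + multinomial counts."""
    return [a + n for a, n in zip(prior, counts)]


def posterior_predictive(alpha):
    """Probability of each category for the next observation."""
    total = sum(alpha)
    return [a / total for a in alpha]


def sample_dirichlet(alpha, rng):
    """Draw one probability vector from Dirichlet(alpha) via gamma variates."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    s = sum(draws)
    return [d / s for d in draws]


def credible_interval(samples, level=0.95):
    """Equal-tailed interval from sorted posterior draws."""
    s = sorted(samples)
    lo = int((1 - level) / 2 * len(s))
    hi = int((1 + level) / 2 * len(s)) - 1
    return s[lo], s[hi]


# Assumed symmetric prior and invented counts for one 3-level feature.
prior = [1.0, 1.0, 1.0]
counts = [7, 2, 1]

posterior = dirichlet_posterior(prior, counts)
predictive = posterior_predictive(posterior)

rng = random.Random(0)  # fixed seed so the sketch is reproducible
theta0 = [sample_dirichlet(posterior, rng)[0] for _ in range(4000)]
low, high = credible_interval(theta0)
print(predictive, (low, high))
```

Reporting the interval alongside the mean shows how much uncertainty remains when only a handful of observations per category are available.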

Results of Bayesian CV

- Random Forest: mean accuracy 99.6 % with a 95 % credible interval of
