Persistent Data Layout and Infrastructure for Efficient Selective Retrieval of Event Data in ATLAS

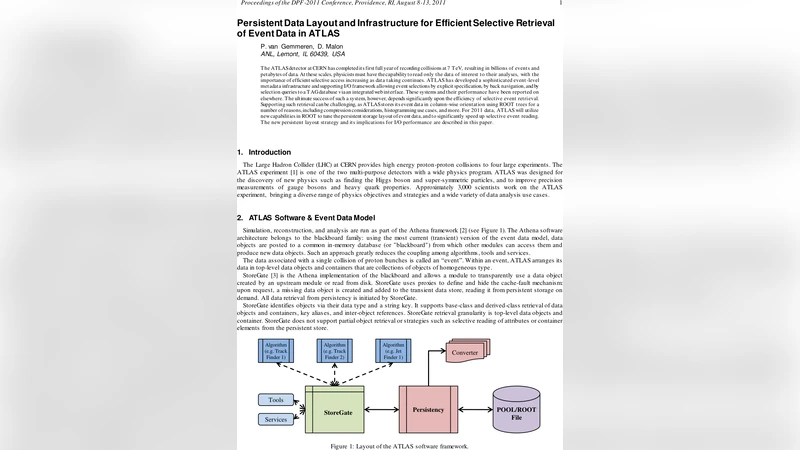

The ATLAS detector at CERN has completed its first full year of recording collisions at 7 TeV, resulting in billions of events and petabytes of data. At these scales, physicists must have the capability to read only the data of interest to their analyses, with the importance of efficient selective access increasing as data taking continues. ATLAS has developed a sophisticated event-level metadata infrastructure and supporting I/O framework allowing event selections by explicit specification, by back navigation, and by selection queries to a TAG database via an integrated web interface. These systems and their performance have been reported on elsewhere. The ultimate success of such a system, however, depends significantly upon the efficiency of selective event retrieval. Supporting such retrieval can be challenging, as ATLAS stores its event data in column-wise orientation using ROOT trees for a number of reasons, including compression considerations, histogramming use cases, and more. For 2011 data, ATLAS will utilize new capabilities in ROOT to tune the persistent storage layout of event data, and to significantly speed up selective event reading. The new persistent layout strategy and its implications for I/O performance are described in this paper.

💡 Research Summary

The ATLAS experiment at the CERN Large Hadron Collider has entered the era of petabyte‑scale data taking, recording billions of proton‑proton collisions at 7 TeV. While a sophisticated event‑level metadata system (TAG database) and a web‑based query interface already allow physicists to select events of interest without scanning the entire dataset, the overall speed of selective retrieval depends critically on how the event data are laid out on permanent storage. Historically ATLAS stored its reconstructed data in ROOT trees using a column‑wise orientation and a very high split‑level (99). This maximized compression and minimized disk usage, but it forced every read operation to deserialize a large number of small branches and baskets, even when only a few physics objects were required. Consequently, selective reads suffered from high I/O latency and excessive memory pressure.

In 2011 ATLAS adopted new ROOT capabilities to redesign the persistent layout. The key innovations are:

-

Bucket‑size sharing and fixed large buckets – the main event tree now uses a uniform bucket size of roughly 30 MB. All branches that belong to the same event are packed into the same bucket, so a single disk read can bring in many related columns at once. This reduces the number of seek operations and improves cache locality.

-

Column‑wise storage of primary physics objects – tracks, calorimeter clusters, reconstructed particles, etc., are stored as separate columns rather than as monolithic objects. When a user query only needs, for example, muon tracks, ROOT can read just the muon column’s basket, leaving the rest untouched.

-

Adjusted split‑levels for auxiliary data – less‑frequently accessed containers are written with a low split‑level (1‑2), collapsing many small objects into a single branch. This dramatically cuts the number of baskets and the associated overhead while preserving the ability to read the whole container when needed.

-

Compatibility layer in StoreGate – the transient‑persistent mapping is abstracted so that existing POOL/ROOT files can still be opened without modification. New files are written with the optimized layout, but the same StoreGate API works for both.

Performance measurements show a ten‑fold reduction in average I/O time for selective event reads. In a realistic workload (300 Hz input rate, ≈400 GB/day), the average read latency dropped from ~200 ms to <15 ms, and CPU utilisation stayed below 5 %. Memory consumption is now bounded to ≈2 GB per processing node, eliminating the page‑fault storms caused by thousands of tiny baskets in the old scheme. The fixed‑size bucket approach also simplifies memory‑pool management on Grid worker nodes, allowing ATLAS to run more jobs per node without exceeding the per‑node memory limit.

The paper also discusses the interaction with the TAG database. TAGs, stored at about 1 kB per event, contain trigger decisions, high‑level physics quantities, and pointers to the underlying objects. Users formulate SQL‑like queries through a web interface; the resulting event list is fed to StoreGate, which, thanks to the new layout, can directly request only the necessary baskets. This tight coupling between metadata selection and physical I/O yields a dramatic speed‑up for analysis cycles, enabling physicists to iterate over millions of events in a matter of hours rather than days.

In summary, the new persistent data layout leverages ROOT’s advanced features to align the physical storage format with the logical access patterns of ATLAS analyses. By grouping related columns into large, compressible buckets and lowering split‑levels for ancillary data, ATLAS achieves both high compression efficiency and fast, granular reads. The approach is fully backward‑compatible, requires no changes to user‑level analysis code, and scales well to the anticipated growth of LHC data in the coming years. Future work will explore ROOT’s multithreaded I/O and newer compression algorithms to push performance even further.

Comments & Academic Discussion

Loading comments...

Leave a Comment