Experience-driven formation of parts-based representations in a model of layered visual memory

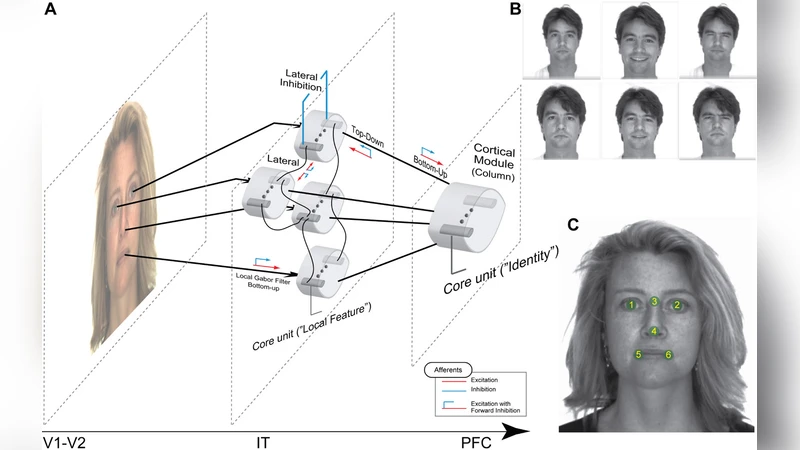

Growing neuropsychological and neurophysiological evidence suggests that the visual cortex uses parts-based representations to encode, store and retrieve relevant objects. In such a scheme, objects are represented as a set of spatially distributed local features, or parts, arranged in a stereotypical fashion. To encode the local appearance and to represent the relations between the constituent parts, there has to be an appropriate memory structure formed by previous experience with visual objects. Here, we propose a model of how a hierarchical memory structure supporting efficient storage and rapid recall of parts-based representations can be established by an experience-driven process of self-organization. The process is based on the collaboration of slow bidirectional synaptic plasticity and homeostatic unit activity regulation, both running on top of fast activity dynamics with winner-take-all character modulated by an oscillatory rhythm. These neural mechanisms lay the basis for cooperation and competition between the distributed units and their synaptic connections. Choosing human face recognition as a test task, we show that, under the condition of open-ended, unsupervised incremental learning, the system is able to form memory traces for individual faces in a parts-based fashion. On a lower memory layer, the synaptic structure develops to represent local facial features and their interrelations, while the identities of different persons are captured explicitly on a higher layer. An additional property of the resulting representations is the sparseness of both the activity during recall and the synaptic patterns comprising the memory traces.

💡 Research Summary

The paper addresses a long‑standing hypothesis in visual neuroscience: that the cortex encodes objects not as holistic images but as collections of spatially distributed parts arranged in stereotyped configurations. To turn this conceptual idea into a concrete computational model, the authors propose a hierarchical memory architecture that self‑organizes through experience. The core of the learning process combines two slow regulatory mechanisms, bidirectional synaptic plasticity (both potentiation and depression) and homeostatic regulation of neuronal activity, which operate on top of fast activity dynamics shaped by winner‑take‑all (WTA) competition and modulated by an oscillatory rhythm (for example, gamma‑like cycles).
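The two slow mechanisms can be written down in a generic rate-based form. The equations below are a textbook-style sketch under assumed notation, not the paper's own update rules, and they omit the oscillatory modulation of the competition: $x_j$ is a presynaptic input, $y_i \in \{0,1\}$ marks the WTA winner, $w_{ij}$ is a synaptic weight, $\bar{y}_i$ is a running average of unit activity, $\theta_i$ is an adaptive threshold, and $\eta$, $\beta$, $\epsilon$, $\rho$ are hypothetical rate and target parameters.

$$
\begin{aligned}
y_i &= \begin{cases} 1 & \text{if } i = \arg\max_k \Big(\sum_j w_{kj}\, x_j - \theta_k\Big) \\ 0 & \text{otherwise} \end{cases} \\
\Delta w_{ij} &= \eta\, y_i\, (x_j - w_{ij}) \\
\bar{y}_i &\leftarrow (1-\beta)\, \bar{y}_i + \beta\, y_i \\
\theta_i &\leftarrow \theta_i + \epsilon\, (\bar{y}_i - \rho)
\end{aligned}
$$

The weight rule is bidirectional in the sense used here: for a winning unit, a synapse grows when its input $x_j$ exceeds the current weight and shrinks when the input falls below it. The threshold rule is homeostatic: a unit that wins more often than the target rate $\rho$ becomes harder to activate, which spreads learning across units.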

During each presentation of an input image, the oscillatory rhythm imposes a temporal window in which a subset of neurons can become active. Global inhibition enforces a WTA constraint, allowing only the most strongly driven neuronal ensemble to win the competition. The winning ensemble then undergoes synaptic modification according to a bidirectional Hebbian‑type rule: connections among the active units are gradually strengthened (potentiation), while connections to inactive units are weakened (depression). Simultaneously, all neurons receive a homeostatic drive that nudges their average firing rate toward a target value, preventing runaway excitation and keeping the overall activity sparse.
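A minimal Python sketch of one learning step follows. It assumes binary WTA output, a half-cosine fall of global inhibition as a stand-in for one oscillation cycle, the instar form of a bidirectional Hebbian rule, and a threshold-based homeostatic controller. The function names `oscillatory_wta` and `learning_step` and all parameter values are hypothetical illustrations, not taken from the paper.

```python
import math


def oscillatory_wta(net_input, inh_max=1.0, inh_min=0.0, steps=50):
    """One hypothetical oscillation cycle: global inhibition falls from
    inh_max to inh_min along a half-cosine. Units whose net input exceeds
    the current inhibition may fire; only the strongest of them wins (WTA).
    Returns the winner's index, or None if no unit ever crosses."""
    for t in range(steps):
        phase = math.pi * t / (steps - 1)  # 0 .. pi over the cycle
        inh = inh_min + (inh_max - inh_min) * 0.5 * (1 + math.cos(phase))
        above = [i for i, h in enumerate(net_input) if h > inh]
        if above:
            return max(above, key=lambda i: net_input[i])
    return None


def learning_step(weights, thresholds, avg_rates, x,
                  eta=0.1, eps=0.05, beta=0.1, target=0.25):
    """Hypothetical slow dynamics applied after one fast WTA cycle.
    Mutates weights, thresholds and avg_rates in place."""
    net = [sum(w * xj for w, xj in zip(row, x)) - th
           for row, th in zip(weights, thresholds)]
    winner = oscillatory_wta(net)
    for i, row in enumerate(weights):
        y = 1.0 if i == winner else 0.0
        if y:
            # Bidirectional (instar) rule: weights move toward x, so synapses
            # from active inputs are potentiated and those from silent inputs
            # are depressed.
            for j, xj in enumerate(x):
                row[j] += eta * (xj - row[j])
        # Homeostasis: a running average of activity raises the threshold when
        # the unit fires above its target rate and lowers it when below.
        avg_rates[i] = (1 - beta) * avg_rates[i] + beta * y
        thresholds[i] += eps * (avg_rates[i] - target)
    return winner


# Usage: a single unit shown the same input [1, 0] on every cycle.
w, th, avg = [[0.5, 0.5]], [0.0], [0.0]
wins = [learning_step(w, th, avg, [1.0, 0.0]) == 0 for _ in range(200)]
print("wins in first 30 cycles:", sum(wins[:30]))
print("wins in last 100 cycles:", sum(wins[100:]))
```

In this usage the unit wins every cycle at first; homeostasis then raises its threshold until it is silenced part of the time, pushing its long-run win rate toward the target. With several units, the same mechanism hands unclaimed inputs to units that have been winning less often.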

Because the plasticity and homeostatic adjustments are slow and incremental, the network can be exposed to a continuous stream of novel images, and this gradualness is intended to limit interference with previously stored traces. Over time, frequently co‑occurring local features (e.g., an eye, a nose, a mouth in a face) become encoded as stable, sparse patterns in the lower memory layer. The relational information, that is, how these features are positioned relative to each other, is captured implicitly in the pattern of strengthened inter‑unit synapses. A second, higher layer receives the activation pattern of the lower layer as its input. When a particular combination of lower‑layer features recurs, a dedicated higher‑layer neuron (or small population) is recruited and its synapses are reinforced, thereby forming a distinct “identity” trace for that object. In this way, the lower layer stores the parts, while the upper layer stores the part configurations that correspond to whole objects.
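The division of labor between the two layers can be illustrated with a deliberately simplified Python sketch. It swaps in a novelty-threshold recruitment scheme (in the spirit of adaptive-resonance models) for the paper's self-organizing dynamics: the lower layer keeps part prototypes per facial region and recruits a new unit only when no stored prototype is close enough, while the upper layer recruits one identity unit per distinct combination of parts. The class `TwoLayerMemory`, the region names, the feature vectors, and the `novelty` and `eta` parameters are all hypothetical.

```python
import math


class TwoLayerMemory:
    """Hypothetical sketch: lower layer = part prototypes per region,
    upper layer = one identity unit per distinct combination of parts."""

    def __init__(self, regions, novelty=0.5, eta=0.2):
        self.parts = {r: [] for r in regions}  # lower layer
        self.identities = []                   # upper layer: frozensets of (region, part)
        self.novelty = novelty                 # max distance to count as "familiar"
        self.eta = eta                         # prototype learning rate

    def _encode_region(self, region, patch):
        protos = self.parts[region]
        if protos:
            k = min(range(len(protos)), key=lambda i: math.dist(protos[i], patch))
            if math.dist(protos[k], patch) <= self.novelty:
                # Familiar part: nudge the winning prototype toward the input.
                protos[k] = [p + self.eta * (x - p) for p, x in zip(protos[k], patch)]
                return k
        protos.append(list(patch))  # novel part: recruit a new lower-layer unit
        return len(protos) - 1

    def store(self, face):
        """face: dict region -> feature vector. Returns the identity unit index."""
        code = frozenset((r, self._encode_region(r, v)) for r, v in face.items())
        if code not in self.identities:
            self.identities.append(code)  # recruit a new identity unit
        return self.identities.index(code)


# Usage with toy two-dimensional "part features".
mem = TwoLayerMemory(["eyes", "nose", "mouth"])
alice = {"eyes": [1.0, 0.0], "nose": [0.0, 1.0], "mouth": [1.0, 1.0]}
bob = {"eyes": [1.0, 0.0], "nose": [1.0, 0.0], "mouth": [0.0, 0.0]}
a1 = mem.store(alice)
b1 = mem.store(bob)
a2 = mem.store({"eyes": [0.9, 0.1], "nose": [0.1, 0.9], "mouth": [1.0, 0.9]})  # noisy Alice
print(a1, b1, a2, {r: len(p) for r, p in mem.parts.items()}, len(mem.identities))
```

In the example, the two toy faces share the same eye prototype, so the lower layer stores a single eye unit that both identities reuse, while the upper layer keeps two separate identity units; the noisy second view maps onto the first identity unit.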

The authors validate the model on a human‑face recognition task using the Labeled Faces in the Wild (LFW) dataset. Images are presented in an open‑ended, unsupervised incremental fashion, mimicking natural visual experience. After training, the lower layer exhibits clusters of synaptic weights that correspond to canonical facial parts; visualizing these weights reveals localized receptive fields resembling eyes, eyebrows, nose, and mouth. The higher layer shows a one‑to‑one mapping between individual faces and specific high‑level units, confirming that identities are captured explicitly.

Crucially, the system demonstrates robust recall under partial occlusion. When only a subset of facial features is visible (e.g., the eyes are masked), the lower layer still activates the learned part patterns for the visible regions, and the higher layer can recover the correct identity from this incomplete pattern, illustrating the fault‑tolerant nature of parts‑based coding. Moreover, both the activity during recall and the synaptic footprints of each memory trace are highly sparse (typically only 5–10% of neurons fire for any given stimulus), which indicates efficient use of neural resources and is consistent with rapid retrieval.
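Partial-cue recall and the sparseness measure can be sketched in a few lines of Python. The sketch assumes that each stored identity is represented by the set of lower-layer units in its memory trace and that recall picks the identity whose trace best overlaps the visible parts, then reactivates the whole trace (pattern completion). The unit indices, `N_UNITS`, and the functions `recall` and `sparseness` are hypothetical; with 6 active units out of 100 the example happens to fall inside the 5–10% range quoted above, but the numbers are illustrative, not data from the paper.

```python
def recall(identities, cue):
    """Return (index, score) of the stored trace that best overlaps the cue.
    score = fraction of cue units that belong to the chosen trace."""
    if not cue:
        return None, 0.0
    scores = [len(cue & trace) / len(cue) for trace in identities]
    best = max(range(len(identities)), key=scores.__getitem__)
    return best, scores[best]


def sparseness(activity):
    """Fraction of units that are active (nonzero)."""
    return sum(1 for a in activity if a) / len(activity)


N_UNITS = 100
stored = [
    {3, 17, 42, 58, 71, 90},  # identity 0: hypothetical part-unit indices
    {3, 21, 44, 60, 77, 95},  # identity 1 shares part unit 3 with identity 0
]
occluded = {42, 58, 71}       # identity 0 with units 3, 17 and 90 masked out
idx, score = recall(stored, occluded)
# Pattern completion: reactivate the full trace of the recalled identity.
activity = [1 if u in stored[idx] else 0 for u in range(N_UNITS)]
print(idx, score, sparseness(activity))  # prints: 0 1.0 0.06
```

Scoring by the fraction of visible units explained, rather than by the fraction of the stored trace that is visible, is what makes the masked units irrelevant to the decision: missing parts lower neither candidate's score.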

Compared with conventional deep‑learning face recognizers, this model offers several distinctive advantages: (1) it learns without explicit labels, (2) it incrementally incorporates new exemplars without retraining the whole network, and (3) its memory traces are interpretable, as the synaptic patterns directly map onto recognizable facial parts. However, the current implementation is limited to a modest network size and a single visual modality. Extending the framework to handle more complex objects, multimodal integration, and large‑scale real‑time applications will require scaling up the architecture and possibly incorporating additional mechanisms such as attention or hierarchical temporal processing.

In summary, the paper provides a biologically plausible account of how experience‑driven self‑organization can give rise to hierarchical, parts‑based visual memory. By coupling slow synaptic and homeostatic dynamics with fast winner‑take‑all competition modulated by an oscillatory rhythm, the model achieves sparse, efficient storage and rapid, robust recall of complex visual objects, as demonstrated on the challenging task of unsupervised face learning.
