Modified-CS: Modifying Compressive Sensing for Problems with Partially Known Support

We study the problem of reconstructing a sparse signal from a limited number of its linear projections when a part of its support is known, although the known part may contain some errors. The ``known" part of the support, denoted T, may be available…

Authors: Namrata Vaswani, Wei Lu

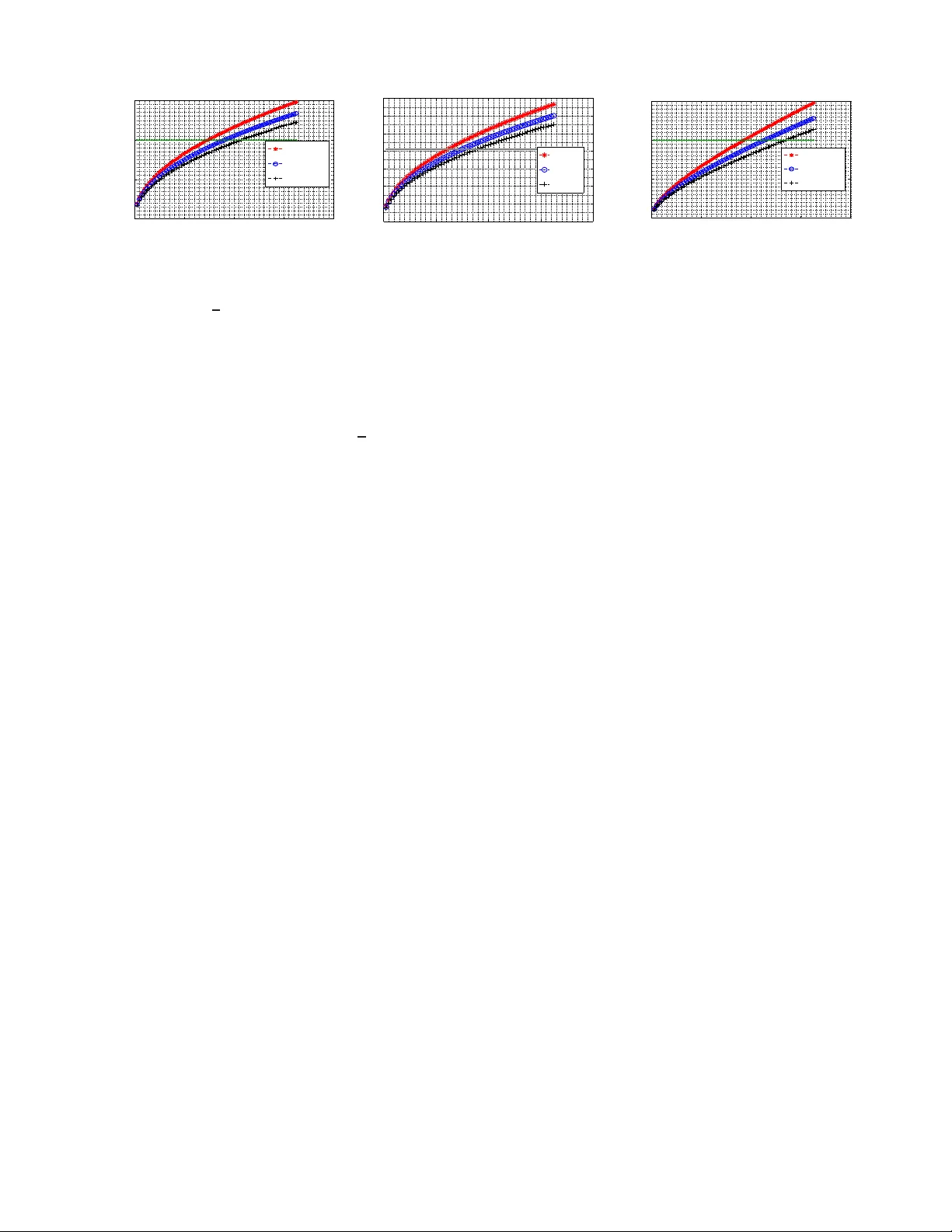

1 Modified-CS: Modifying Compressi v e Sensing for Problems with P artially Kno wn Supp ort Namrata V aswani and W ei Lu Abstract —W e study the problem of re constructing a sparse signal from a limited number of its linear pro jections when a part of its support is known, although the known part may contain some errors . The “known” part of the support, denoted T , may be av ailabl e from prior k nowledge. Alternativ ely , in a problem of recursi vely re constructing time sequences of sparse spatial signals, one may use the support estimate from the prev ious time instant as the “known” part. Th e id ea of our p roposed solu t ion (modified-CS) is to solve a con vex relaxation of the following problem: fin d the signal that satisfies the data constraint and is sparsest outsid e of T . W e obtain suffi ci en t conditions for exact reconstruction using modified-CS. These ar e much w eaker than those n eeded for compressiv e sensing (CS) when the sizes of the unknown part of the sup p ort and of errors in the known part are small compared to the support size. An imp ortant extension called Regularized Modified- CS (RegModCS) is developed wh i ch also u ses prior signal estimate kn owledge. Simulation compar - isons for both sparse and compressible signals are shown. I . I N T R O D U C T I O N In th is work, we study the spar se reco nstruction problem from noiseless measurements wh en a par t of the suppor t is known, althoug h th e known part may contain some errors. The “known” part o f the suppo rt may be available fro m pr ior knowledge. For example, conside r M R im age reco nstruction using th e 2D discrete wa velet transfo rm (DW T) as th e sparsi- fying basis. If it is known th at an image has no (or very little) black backgroun d, all (or most) a pprox imation coefficients will be no nzero. In this case, the “kn own suppo rt” is the set o f ind ices o f the appr oximation coe fficients. Altern ativ ely , in a pro blem of re cursively r econstructin g time sequences of sparse sp atial signals, on e may use the support estimate from the previous tim e instan t as the “ known suppo rt”. This latter pr oblem occ urs in various pr actical application s such as real-time d ynamic MRI recon struction, real-time single-pixel camera vid eo imaging o r video compression/d ecompr ession. There are also numero us o ther potential applica tions where sparse reconstru ction for time sequ ences of signals/imag es may b e need ed, e.g. see [3 ], [4]. Sparse r econstructio n has bee n well studied for a wh ile, e.g . see [5], [ 6]. Recen t work on Com pressed Sen sing (CS) gives condition s fo r its exact reconstru ction [7], [8], [9 ] and boun ds the error when this is not po ssible [ 10], [1 1]. Our recen t work on Least Squares CS-residua l (LS-CS) [12], [13] can be interpreted as a solution to the pro blem of sparse recon struction with partly known support. L S-CS N. V asw ani and W . Lu are with the ECE dept . at Iow a State Unive rsity (email: { namrata,luwe i } @iasta te.edu). A part of this work appeared in [1], [2]. This research wa s support ed by NSF grants ECCS-0725849 and CCF- 0917015. Copy right (c) 2010 IEEE. P ersonal use of this material is permitte d. Ho wev er, permission to use this m ateria l for any other purposes must be obtaine d from the IEEE by sending a request to pubs-permission s@ieee.or g. Original sequence (a) T op: larynx image sequenc e, Bottom: cardiac sequence 5 10 15 20 0 0.01 0.02 0.03 Time → | N t \ N t − 1 | | N t | Cardiac, 99% Larynx, 99% 5 10 15 20 0 0.01 0.02 0.03 Time → | N t − 1 \ N t | | N t | Cardiac, 99% Larynx, 99% (b) Slo w support change plots. Left: additi ons, Right: remova ls Fig. 1. In Fig. 1(a), we show two medical image sequences. In Fig. 1(b), N t refers to the 99% energy support of the two- lev el Daubechies-4 2D discrete wav elet transform (DWT) of t hese sequences. | N t | varied between 4121-41 83 ( ≈ 0 . 07 m ) for larynx and between 1108-1127 ( ≈ 0 . 06 m ) for cardiac. W e plot the number of additions (left) and the number of remov als (right) as a fraction of | N t | . Notice that all chang es ar e less than 2% of t he support size. replaces CS o n the o bservation b y CS o n the LS ob servation residual, comp uted using the “known” part of the sup port. Since the observation resid ual measures th e signal residual which has m uch fewer la r ge n onzer o co mpone nts, LS-CS greatly improves reco nstruction error when fewer measure- ments are a vailable. But the exact sparsity size (total number of nonzer o components) of the signal residual is equal to or lar ger than that of the sig nal. Since the numb er of measu rements required for exact reconstru ction is g overned by the exact sparsity size, LS-CS is n ot able to achieve exact reconstruction using fewer no iseless me asurements than those needed by CS. Exact r eco nstruction using fewer noiseless measu rements than tho se needed for CS is the f ocus of the curren t work. Denote the “known” part of the support by T . Our propo sed solution (modified- CS) solves an ℓ 1 relaxation of the following problem : find the sign al that satisfies the data constraint an d is sparsest ou tside of T . W e d eriv e sufficient condition s for exact reconstruc tion using mod ified-CS. When T is a fairly accur ate estimate of the true supp ort, th ese are much weaker than the sufficient conditions fo r CS. For a recursiv e time sequence reconstruc tion p roblem, th is holds if the reconstru ction at t = 0 is exact and the support change s slowly over time. The former can be ensur ed by using more measur ements at t = 0 , while the latter is ofte n tr ue in prac tice, e.g. see Fig. 1. W e also develop an important extension c alled Regularized Modified-CS wh ich also u ses p rior signal estimate knowledge. It improves the error when exact reconstructio n is not possible. 2 A shorter versio n of this work first appeared in ISIT’ 09 [1]. I n p arallel an d indep endent work in [1 4], Kh ajehnejad et al hav e also studied a similar p roblem to ours but they assume a prob abilistic prior on the supp ort. Other r elated work includes [1 5]. V ery recen t work o n causal reconstruction o f time sequen ces inc ludes [16] (fo cusses on the tim e-in variant support case) and [ 17] (u se past estimates to only sp eed up the current o ptimization but no t to improve reco nstruction erro r). Except [14], no n e of these p r ove exact reconstruction using fewer measur ements and except [15], [14], none o f these even demonstrate it. Other recent work, e.g. [18], applies CS on observation differences to reconstru ct the difference signal. While their goal is to o nly estimate the difference signal, the appr oach could be easily modified to also rec onstruct the actual signal sequence (we ref er to this as CS-d iff ). But, since all nonz ero coefficients of a s parse signal in any sparsity basis will typically c hange over time, thoug h grad ually , and some new elements will bec ome n onzero , thus th e exact spar sity size of the signal difference will also be eq ual to/larger than th at o f the sign al itself. As a r esult CS-diff will a lso not achieve exact reconstruc tion u sing fewer mea surements, e.g . see Fig.3. In this work, whenever we use the term CS, we a r e ac tually r eferring to b asis pursuit (BP) [ 5]. As pointed ou t by a n anonymou s revie wer, mo dified-CS is a misnom er and a mor e approp riate n ame fo r ou r app roach should b e modified-BP . As poin ted o ut by an anonymou s reviewer , m odified-CS can be used in co njunctio n with multiscale CS fo r vide o compression [19] to improve their compression ratio s. The pa per is o rganized as f ollows. W e g iv e the nota tion and problem definition below . Mo dified-CS is developed in Sec. II. W e obtain sufficient cond itions for exact r econstructio n using it in Sec . II I. In Sec. IV, we comp are these with the correspo nding condition s for CS a nd we also do a Monte Carlo comp arison of modified-CS and CS. W e discuss Dy- namic Modified-CS and Regularized Modified CS in Sec. V. Comparison s for actua l image s and imag e sequ ences are given in Sec. VI and con clusions and future work in Sec. VII. A. Notation W e use ′ for tran spose. Th e notation k c k k denotes th e ℓ k norm of the vector c . The ℓ 0 pseudo- norm, k c k 0 , coun ts the number of n onzero elements in c . For a m atrix, M , k M k denotes its induced ℓ 2 norm, i.e. k M k := max c : k c k 2 =1 k M c k 2 . W e use th e no tation A T to d enote the sub -matrix co ntaining the columns of A with indices belongin g to T . For a v ector, the notation ( β ) T (or β T ) refe rs to a sub-vector that co ntains the elements with indice s in T . Th e notation , [1 , n ] := [1 , 2 , . . . n ] . W e use T c to denote the comp lement of the set T w . r .t. [1 , n ] , i.e. T c := [1 , n ] \ T . The set operations, ∪ , ∩ stand for s et union and intersectio n respectiv ely . Also T 1 \ T 2 := T 1 ∩ T c 2 denotes set difference. For a set T , | T | denotes its size (car dinality). But f or a scalar , b , | b | d enotes th e mag nitude of b . The S -restricted isometr y co nstant [9], δ S , for a matrix, A , is de fined as the smallest real number satisfying (1 − δ S ) k c k 2 2 ≤ k A T c k 2 2 ≤ (1 + δ S ) k c k 2 2 (1) for all subsets T ⊂ [1 , n ] of car dinality | T | ≤ S an d all r eal vectors c of le ngth | T | . The re stricted orthog onality constant [9], θ S 1 ,S 2 , is defined as the smallest real numb er satisfying | c 1 ′ A T 1 ′ A T 2 c 2 | ≤ θ S 1 ,S 2 k c 1 k 2 k c 2 k 2 (2) for a ll disjoint sets T 1 , T 2 ⊂ [1 , n ] with | T 1 | ≤ S 1 , | T 2 | ≤ S 2 and S 1 + S 2 ≤ n , and for all vectors c 1 , c 2 of length | T 1 | , | T 2 | r espectiv ely . By setting c 1 ≡ A T 1 ′ A T 2 c 2 in (2 ), k A T 1 ′ A T 2 k ≤ θ S 1 ,S 2 (3) The notation X ∼ N ( µ, Σ) means that X is Gaussian distributed with mean µ and covariance Σ while N ( x ; µ, Σ) denotes the value of th e Gaussian PDF c omputed at poin t x . B. Pr ob lem Defin ition W e m easure a n m -length vector y where y := Ax (4) W e need to estimate x w hich is a sparse n -leng th vector with n > m . The sup port of x , denoted N , can be split as N = T ∪ ∆ \ ∆ e where T is the “known” par t of the support, ∆ e := T \ N is the error in the the k nown p art and ∆ := N \ T is th e unkn own part. Thu s, ∆ e ⊆ T , ∆ , T are disjoint and | N | = | T | + | ∆ | − | ∆ e | . W e use s := | N | to d enote the size of the (s)uppo rt, k := | T | to den o te the size of the (k)n own part o f the support, e = | ∆ e | to denote the size of the (e)rr or in the known part and u = | ∆ | to den ote the size o f th e (u) nknown part of the support. W e assume that A satisfies the S -restricted isometry prop- erty ( RIP) [9] f or S = ( s + e + u ) = ( k + 2 u ) . S -RIP means that δ S < 1 where δ S is the RIP co nstant fo r A defined in (1). In a static p roblem, T is a vailable from prior kn owledge. For example, in the MRI problem d escribed in the in troductio n, let N be th e (u nknown) set of all DWT coefficients with magnitud e ab ove a certain zero ing thresho ld. Assume that th e smaller coefficients are set to zer o. Prior knowledge tells us that most imag e intensities are nonzero and so the approx ima- tion coe fficients are m ostly nonzero . Thus we can let T be th e (known) set of ind ices of all the app roximatio n coefficients. The (unkn own) set of ind ices of the approx imation c oefficients which are zero form ∆ e . The ( unknown) set of indices of the nonzer o detail coefficients f orm ∆ . For the time series pr oblem, y ≡ y t and x ≡ x t with support, N t = T ∪ ∆ \ ∆ e , and T = ˆ N t − 1 is the support estimate from the pr evious time instant. If exact reco nstruction occurs at t − 1 , T = N t − 1 . In this c ase, ∆ e = N t − 1 \ N t is th e set o f indice s of elements tha t were nonzero at t − 1 , b ut are now zero (deletion s) while ∆ = N t \ N t − 1 is the newly ad ded coefficients at t ( additions). Slow sparsity pattern chan ge over time, e.g . see Fig. 1, then implies that u ≡ | ∆ | and e ≡ | ∆ e | ar e much smaller than s ≡ | N | . When exact reconstru ction does not occur, ∆ e includes both the cur rent d eletions and th e extras from t − 1 , ˆ N t − 1 \ N t − 1 . Similarly , ∆ in cludes both the curren t additions and the misses from t − 1 , N t − 1 \ ˆ N t − 1 . I n this case, slow supp ort chang e, along with ˆ N t − 1 ≈ N t − 1 , still implies th at u ≪ s and e ≪ s . 3 I I . M O D I FI E D C O M P R E S S I V E S E N S I N G ( M O D I FI E D - C S ) Our g oal is to find a signal that satisfies the data constrain t giv en in (4) and wh ose support contain s the smallest number of new additions to T , altho ugh it may or may n ot contain all elements o f T . In other words, we would like to solve min β k ( β ) T c k 0 subject to y = Aβ (5) If ∆ e is emp ty , i.e. if N = T ∪ ∆ , then the solution of (5) is also the sparsest solution who se suppo rt contains T . As is well kn own, minimizing the ℓ 0 norm is a co mbina- torial o ptimization p roblem [ 20]. W e pr opose to u se the same trick that r esulted in CS [5], [ 7], [ 8], [ 10]. W e rep lace the ℓ 0 norm b y th e ℓ 1 norm, which is the closest no rm to ℓ 0 that makes the optimization p roblem conve x, i. e. we solve min β k ( β ) T c k 1 subject to y = Aβ (6) Denote its ou tput by ˆ x . If need ed, the supp ort can be estimated as ˆ N := { i ∈ [1 , n ] : ( ˆ x ) 2 i > α } (7) where α ≥ 0 is a zero ing threshold . If exact recon struction occurs, α can be zero. W e discu ss thre shold setting for cases where exact reconstruction do es not occur in Sec. V -A. I I I . E X AC T R E C O N S T RU C T I O N R E S U LT W e first an alyze th e ℓ 0 version of modified- CS in Sec. III-A. W e then give the exact reconstru ction result for th e actual ℓ 1 problem in Sec. III- B. In Sec. III-C, we give the two key lemmas that lead to its proof and we explain how they lead to the pro of. The co mplete pr oof is given in the Append ix. Th e pro of of the lem mas is given in Sec. III -D. Recall th at k = | T | , u = | ∆ | , e = | ∆ e | an d s = | N | . A. Exact Reconstruction Result: ℓ 0 version of modified-CS Consider the ℓ 0 problem , (5). Using a ran k argumen t similar to [ 9, Lemma 1.2] we can show the fo llowing. The pr oof is giv en in the App endix. Pr opo sition 1: Giv en a sparse vector, x , with su pport, N = T ∪ ∆ \ ∆ e , where ∆ and T a re d isjoint and ∆ e ⊆ T . Consider reconstruc ting it f rom y := Ax by solving (5). x is the u nique minimizer of (5) if δ k +2 u < 1 ( A satisfies the ( k + 2 u ) -RIP). Using k = s + e − u , th is is eq uiv alen t to δ s + e + u < 1 . Compare this with [ 9, Lemma 1.2] fo r the ℓ 0 version of CS. It requires δ 2 s < 1 which is much stro nger when u ≪ s and e ≪ s , as is true for time series p roblems. B. Exact Reconstruction Result: modified- CS Of cour se we do not so lve (5) b ut its ℓ 1 relaxation, (6). Just like in CS, the sufficient conditio ns for this to give exact recon struction will be slightly strong er . In the n ext few subsections, we prove th e following result. Theor em 1 (Exact Rec o nstruction): Given a spar se vector, x , who se suppor t, N = T ∪ ∆ \ ∆ e , where ∆ and T are disjoint and ∆ e ⊆ T . Consider recon structing it f rom y := Ax by solv ing (6) . x is the unique minimizer of (6) if 1) δ k + u < 1 and δ 2 u + δ k + θ 2 k, 2 u < 1 and 2) a k (2 u, u ) + a k ( u, u ) < 1 wh ere a k ( S, ˇ S ) := θ ˇ S ,S + θ ˇ S,k θ S,k 1 − δ k 1 − δ S − θ 2 S,k 1 − δ k (8) The ab ove con ditions can be rewritten using k = s + e − u . T o under stand the secon d cond ition better and relate it to the co rrespon ding CS resu lt, let us simplify it. a k (2 u, u ) + a k ( u, u ) ≤ θ u, 2 u + θ u,u + θ 2 2 u,k + θ 2 u,k 1 − δ k 1 − δ 2 u − θ 2 2 u,k 1 − δ k . Simplifying f urther, a suffi- cient co ndition for a k (2 u, u ) + a k ( u, u ) < 1 is θ u, 2 u + θ u,u + 2 θ 2 2 u,k + θ 2 u,k 1 − δ k + δ 2 u < 1 . Fu rther, a sufficient cond ition for this is θ u,u + δ 2 u + θ u, 2 u + δ k + θ 2 u,k + 2 θ 2 2 u,k < 1 . T o g et a con dition only in ter ms of δ S ’ s, use the fact that θ S, ˇ S ≤ δ S + ˇ S [9]. A sufficient co ndition is 2 δ 2 u + δ 3 u + δ k + δ 2 k + u + 2 δ 2 k +2 u < 1 . Fur ther , n otice that if u ≤ k and if δ k +2 u < 1 / 5 , th en 2 δ 2 u + δ 3 u + δ k + δ 2 k + u + 2 δ 2 k +2 u < 4 δ k +2 u + δ k +2 u (3 δ k +2 u ) ≤ (4 + 3 / 5) δ k +2 u < 23 / 25 < 1 . Cor ollary 1 (Exa ct Reco n struction): Gi ven a spar se vector, x , who se suppor t, N = T ∪ ∆ \ ∆ e , wh ere ∆ a nd T are disjoint and ∆ e ⊆ T . Consider recon structing it f rom y := Ax by solv ing (6) . • x is the uniq ue minimizer o f (6) if δ k + u < 1 and ( δ 2 u + θ u,u + θ u, 2 u ) + ( δ k + θ 2 k,u + 2 θ 2 k, 2 u ) < 1 (9) • T his, in turn, ho lds if 2 δ 2 u + δ 3 u + δ k + δ 2 k + u + 2 δ 2 k +2 u < 1 . • T his, in turn, ho lds if u ≤ k and δ k +2 u < 1 / 5 . These condition s can be rewritten by substituting k = s + e − u . Compare (9) to the sufficient condition for CS given in [9]: δ 2 s + θ s,s + θ s, 2 s < 1 (10) As shown in Fig. 1 , usually u ≪ s , e ≪ s and u ≈ e (which m eans that k ≈ s ). Co nsider the case when the num ber of m easurements, m , is smaller than wh at is need ed for exact reconstruc tion for a given suppor t size, s , but is large en ough to ensur e that θ k, 2 u < 1 / 2 . Und er th ese assump tions, compa re (9) with (1 0). No tice that (a) the first bracket o f the left h and side (LHS) of (9) will b e small com pared to th e LHS of (10). The same will hold for the second and third terms of it s second bracket comp ared with the secon d and third ter ms of (10). Th e first term of its secon d bracket, δ k , will be smaller than th e first term o f (10), δ 2 s . Thu s, for a c ertain range o f values of m , th e LHS of (9) will be smaller than that of (1 0) and it m ay happen that (9) holds, but (10) does n ot h old. For example, if m < 2 s , (10) will not hold , but if s + u + e < m < 2 s , (9) can ho ld if u , e ar e small enoug h. A detailed com parison is done in Sec. IV. 4 C. Pr o of of Theorem 1: Main Lemmas and Pr oof Ou tline The idea o f the proof is motiv a ted by that of [9, Theo rem 1.3]. Supp ose that we want to m inimize a co n vex functio n J ( β ) subject to Aβ = y an d that J is differentiable. The Lagrang e multiplier op timality con dition req uires that there exists a Lagrange multiplier, w , s.t. ∇ J ( β ) − A ′ w = 0 . Thu s for x to be a solu tion we n eed A ′ w = ∇ J ( x ) . In our case, J ( x ) = k x T c k 1 = P j ∈ T c | x j | . Thu s ( ∇ J ( x )) j = 0 for j ∈ T and ( ∇ J ( x )) j = sgn ( x j ) for j ∈ ∆ . For j / ∈ T ∪ ∆ , x j = 0 . Since J is not differentiable at 0, we req uire that ( A ′ w ) j = A j ′ w = w ′ A j lie in the subgrad ient set of J ( x j ) at 0, which is the set [ − 1 , 1] [21]. In summ ary , we need a w that satisfies w ′ A j = 0 if j ∈ T , w ′ A j = sgn ( x j ) if j ∈ ∆ , and | w ′ A j | ≤ 1 , if j / ∈ T ∪ ∆ (11) Lemma 1 below shows that by using (11) but with | w ′ A j | ≤ 1 replaced by | w ′ A j | < 1 for all j / ∈ T ∪ ∆ , we get a set of sufficient c ondition s fo r x to b e the uniqu e so lution o f (6). Lemma 1: The sp arse signal, x , with suppo rt as defined in Theorem 1, and with y := Ax , is the uniq ue minimizer of (6) if δ k + u < 1 and if we can find a vector w satisfying 1) w ′ A j = 0 if j ∈ T 2) w ′ A j = sgn ( x j ) if j ∈ ∆ 3) | w ′ A j | < 1 , if j / ∈ T ∪ ∆ Recall th at k = | T | an d u = | ∆ | . The p roof is giv en in the next su bsection. Next we give L emma 2 which con structs a ˜ w which satisfies A T ′ ˜ w = 0 an d A T d ′ ˜ w = c for any set T d disjoint with T of size | T d | ≤ S and for any gi ven vector c of size | T d | . It also bound s | A j ′ ˜ w | f or all j / ∈ T ∪ T d ∪ E where E is called an “exceptional set”. W e prove Th eorem 1 by apply ing Lem ma 2 iter ativ ely to constru ct a w that satisfies the cond itions of Lemma 1 under the assump tions of Theorem 1. Lemma 2: Giv en the kn own part of the sup port, T , of size k . Let S , ˇ S be suc h tha t k + S + ˇ S ≤ n and δ S + δ k + θ 2 k,S < 1 . Let c be a vector suppo rted o n a set T d , that is disjoin t with T , of size | T d | ≤ S . Then there exists a vector ˜ w and an exceptional set, E , disjoin t with T ∪ T d , s.t. A j ′ ˜ w = 0 , ∀ j ∈ T A j ′ ˜ w = c j , ∀ j ∈ T d (12) | E | < ˇ S k A E ′ ˜ w k 2 ≤ a k ( S, ˇ S ) k c k 2 | A j ′ ˜ w | ≤ a k ( S, ˇ S ) √ ˇ S k c k 2 ∀ j / ∈ T ∪ T d ∪ E and k ˜ w k 2 ≤ K k ( S ) k c k 2 (13) where a k ( S, ˇ S ) is defined in (8) and K k ( S ) := √ 1 + δ S 1 − δ S − θ 2 S,k 1 − δ k (14) The p roof is giv en in the next su bsection. Pr oof Ou tline of Th eor em 1. T o prove Th eorem 1, a pply Lemma 2 iterativ ely , in a fashion similar to that of the proof of [9, Lem ma 2.2 ] (this proo f had some importan t typ os). The main idea is as follows. At iteration zero, app ly Lemma 2 with T d ≡ ∆ (so that S ≡ u ), c j ≡ sgn ( x j ) ∀ j ∈ ∆ , and ˇ S ≡ u , to get a w 1 and an excep tional set T d, 1 , o f size less than u , th at satisfy the above cond itions. At iter ation r > 0 , apply Lemm a 2 with T d ≡ ∆ ∪ T d,r (so that S ≡ 2 u ), c j ≡ 0 ∀ j ∈ ∆ , c j ≡ A j ′ w r ∀ j ∈ T d,r and ˇ S ≡ u to g et a w r +1 and an exceptional set T d,r +1 , of size less than u . Lemma 2 is applicable in the above fashion b ecause con dition 1 of Theorem 1 ho lds. Define w := P ∞ r =1 ( − 1) r − 1 w r . W e th en argue that if condition 2 of Theor em 1 holds, w satisfies th e condition s of Lemma 1. Applying Lemm a 1 , the result f ollows. W e g iv e the entire proof in the App endix. D. Pr oo fs of Lemmas 1 and 2 W e prove the lemmas fr om the previous subsection h ere. Recall th at k = | T | and u = | ∆ | . 1) Pr oof of Lemma 1: T he proof is motiv ated by [9, Section II-A]. Th ere is c learly at least one e lement in the feasible set of (6) - x - and h ence there will be at least on e minimizer of (6). Let β be a m inimizer o f (6). W e need to prove that if the con ditions of the lemma ho ld, it is equal to x . For any minimizer, β , k ( β ) T c k 1 ≤ k ( x ) T c k 1 := X j ∈ ∆ | x j | (15) Recall that x is zer o outside of T ∪ ∆ , T and ∆ are disjoint, and x is always no nzero on the set ∆ . T ake a w that satisfies the thr ee con ditions of the lemm a. Th en, k ( β ) T c k 1 = X j ∈ ∆ | x j + ( β j − x j ) | + X j / ∈ T ∪ ∆ | β j | ≥ X j ∈ ∆ | x j + ( β j − x j ) | + X j / ∈ T ∪ ∆ w ′ A j β j ≥ X j ∈ ∆ sgn ( x j )( x j + ( β j − x j )) + X j / ∈ T ∪ ∆ w ′ A j β j = X j ∈ ∆ | x j | + X j ∈ ∆ w ′ A j ( β j − x j ) + X j / ∈ T ∪ ∆ w ′ A j β j + X j ∈ T w ′ A j ( β j − x j ) = k x T c k 1 + w ′ ( Aβ − Ax ) = k x T c k 1 (16) Now , the o nly way ( 16) and (15) c an ho ld simultaneously is if all in equalities in ( 16) ar e actu ally equalities. Consider the first in equality . Since | w ′ A j | is stric tly le ss than 1 for all j / ∈ T ∪ ∆ , th e o nly way P j / ∈ T ∪ ∆ | β j | = P j / ∈ T ∪ ∆ w ′ A j β j is if β j = 0 for all j / ∈ T ∪ ∆ . Since bo th β and x solve (6), y = Ax = Aβ . Since β j = 0 = x j for all j / ∈ T ∪ ∆ , this means that y = A T ∪ ∆ ( β ) T ∪ ∆ = A T ∪ ∆ ( x ) T ∪ ∆ or that A T ∪ ∆ (( β ) T ∪ ∆ − ( x ) T ∪ ∆ ) = 0 . Sin ce δ k + u < 1 , A T ∪ ∆ is f ull rank and so the on ly way this can happen is if ( β ) T ∪ ∆ = ( x ) T ∪ ∆ . Thus any minimizer, β = x , i.e. x is th e unique minimize r of ( 6). 2) Pr oof of Lemma 2: The p roof of this lemma is signifi- cantly d ifferent from that of the correspon ding lemma in [9 ], ev en thou gh the form of the final result is similar . Any ˜ w th at satisfies A T ′ ˜ w = 0 will be of the form ˜ w = [ I − A T ( A T ′ A T ) − 1 A T ′ ] γ := M γ (17) 5 W e need to find a γ s.t. A T d ′ ˜ w = c , i.e. A T d ′ M γ = c . Let γ = M ′ A T d η . Th en η = ( A T d ′ M M ′ A T d ) − 1 c = ( A T d ′ M A T d ) − 1 c . This follows be cause M M ′ = M 2 = M since M is a projection matrix. Thus, ˜ w = M M ′ A T d ( A T d ′ M A T d ) − 1 c = M A T d ( A T d ′ M A T d ) − 1 c (18) Consider any set ˇ T d with | ˇ T d | ≤ ˇ S disjoint with T ∪ T d . The n k A ˇ T d ′ ˜ w k 2 ≤ k A ˇ T d ′ M A T d k k ( A T d ′ M A T d ) − 1 k k c k 2 (19) Consider the first te rm fro m the rig ht hand side ( RHS) of (1 9). k A ˇ T d ′ M A T d k ≤ k A ˇ T d ′ A T d k + k A ˇ T d ′ A T ( A T ′ A T ) − 1 A T ′ A T d k ≤ θ ˇ S ,S + θ ˇ S ,k θ S,k 1 − δ k (20) Consider the second term fro m the RHS o f (19). Since A T d ′ M A T d is no n-negative defin ite, k ( A T d ′ M A T d ) − 1 k = 1 λ min ( A T d ′ M A T d ) (21) Now , A T d ′ M A T d = A T d ′ A T d − A T d ′ A T ( A T ′ A T ) − 1 A T ′ A T d which is the difference of two symmetr ic non-negative definite matrices. Let B 1 denote the first m atrix and B 2 the second one. Use the fact that λ min ( B 1 − B 2 ) ≥ λ min ( B 1 ) + λ min ( − B 2 ) = λ min ( B 1 ) − λ max ( B 2 ) whe re λ min ( . ) , λ min ( . ) denote th e min- imum, maximu m eigenv alu e. Sin ce λ min ( B 1 ) ≥ (1 − δ S ) an d λ max ( B 2 ) = k B 2 k ≤ k ( A T d ′ A T ) k 2 1 − δ k ≤ θ 2 S,k 1 − δ k , thus k ( A T d ′ M A T d ) − 1 k ≤ 1 1 − δ S − θ 2 S,k 1 − δ k (22) as lon g as the deno minator is po siti ve. It is p ositiv e because we h av e assumed th at δ S + δ k + θ 2 k,S < 1 . Using (20) and (2 2) to bound (19), w e g et th at for any set ˇ T d with | ˇ T d | ≤ ˇ S , k A ˇ T d ′ ˜ w k 2 ≤ θ ˇ S ,S + θ ˇ S ,k θ S,k 1 − δ k 1 − δ S − θ 2 S,k 1 − δ k k c k 2 = a k ( S, ˇ S ) k c k 2 (23) where a k ( S, ˇ S ) is defined in (8). No tice that a k ( S, ˇ S ) is n on- decreasing in k , S , ˇ S . Defin e an exceptional set, E , as E := { j ∈ ( T ∪ T d ) c : | A j ′ ˜ w | > a k ( S, ˇ S ) √ ˇ S k c k 2 } (24) Notice that | E | must obey | E | < ˇ S since other wise we c an contradict ( 23) by taking ˇ T d ⊆ E . Since | E | < ˇ S and E is disjoin t with T ∪ T d , ( 23) holds f or ˇ T d ≡ E , i.e. k A E ′ ˜ w k 2 ≤ a k ( S, ˇ S ) k c k 2 . Also, by d efinition of E , | A j ′ ˜ w | ≤ a k ( S, ˇ S ) √ ˇ S k c k 2 , for all j / ∈ T ∪ T d ∪ E . Fin ally , k ˜ w k 2 ≤ k M A T d ( A T d ′ M A T d ) − 1 k k c k 2 ≤ k M k k A T d k k ( A T d ′ M A T d ) − 1 k k c k 2 ≤ √ 1 + δ S 1 − δ S − θ 2 S,k 1 − δ k k c k 2 = K k ( S ) k c k 2 (25) since k M k = 1 (holds becau se M is a pro jection matrix). Thus, a ll eq uations of (13) h old. Using (18), (12) holds. I V . C O M PA R I S O N O F C S A N D M O D I FI E D - C S In Theo rem 1 and Corollary 1, we derived sufficient condi- tions for exact rec onstruction using modified-CS. In Sec. IV -A, we compare the sufficient cond itions f or modified- CS with those for CS. In Sec. IV -B, we u se Monte Carlo to co mpare the pr obabilities of exact reco nstruction for both metho ds. A. Comparing sufficient con ditions W e co mpare the sufficient condition s fo r modified -CS and for CS, expre ssed only in terms of δ S ’ s. Sufficient c ondition s for an algorith m ser ve as a d esigner’ s tool to decide the nu mber of measur ements need ed for it a nd in that sense c omparin g the two sufficient con ditions is meaningful. For modified-CS, fr om Corollar y 1, the sufficient cond ition in terms of only δ S ’ s is 2 δ 2 u + δ 3 u + δ k + δ 2 k + u + 2 δ 2 k +2 u < 1 . Using k = s + e − u , this becomes 2 δ 2 u + δ 3 u + δ s + e − u + δ 2 s + e + 2 δ 2 s + e + u < 1 . (26) For CS, two of the best ( weakest) sufficient conditio ns tha t use on ly δ S ’ s ar e g iv en in [22], [23] and [11]. Between these two, it is not obviou s which one is weaker . U sing [ 22] and [11], CS achieves exact re construction if either δ 2 s < √ 2 − 1 or δ 2 s + δ 3 s < 1 . (27) T o compar e ( 26) an d (27), we use u = e = 0 . 02 s which is ty pical fo r time series application s (see Fig. 1 ). One way to c ompare them is to u se δ cr ≤ cδ 2 r [24, Corollary 3.4] to get the LHS’ s of b oth in ter ms of a scalar multiple of δ 2 u . Thus, (26) ho lds if δ s + e + u < 1 / 2 and δ 2 u < 1 / 132 . 5 . Since δ s + e + u = δ 52 u < 52 δ 2 u , the second con dition implies the first, and so only δ 2 u < 1 / 1 3 2 . 5 is sufficient. On the other hand, (2 7) hold s if δ 2 u < 1 / 241 . 5 which is clea rly str o n ger . Alternatively , we can compar e ( 26) and (2 7) using th e high probab ility upper bo unds on δ S as in [9]. Using [9, Eq 3.22], for a n m × n rand om Gaussian matrix, with hig h probability (w .h.p.) , δ S < g n/m ( S n ) , wh ere g n/m S n := − 1 + 1 + f S n , n m 2 , wher e f S n , n m := r n m r S n + s 2 H S n ! , and bin ary entropy H ( r ) := − r log r − (1 − r ) lo g(1 − r ) for 0 ≤ r ≤ 1 . Thus, w .h.p ., m odified-CS achieves exact reconstruc tion f rom rand om-Gau ssian measur ements if ρ modC S := 2 g n/m 2 u n + g n/m 3 u n + g n/m s + e − u n + g n/m s + e n 2 + 2 g n/m s + e + u n 2 < 1 . (28) Similarly , f rom (27), w .h.p ., CS achieves exact re construction from ran dom-Ga ussian measu rements if either ρ C S := g n/m 2 s n + g n/m 3 s n < 1 or ρ C S, 2 := g n/m 2 s n < √ 2 − 1 . (29) 6 0 2 4 6 8 x 10 −4 0 0.5 1 1.5 s/n → ρ CS → CS m/n=1/2 m/n=2/3 m/n=3/4 (a) Plots of ρ C S defined in (29 ) 0 2 4 6 8 x 10 −4 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 s/n → ρ CS,2 → CS m/n=1/2 m/n=2/3 m/n=3/4 (b) Plots of ρ C S, 2 defined in (29 ) 0 2 4 6 8 x 10 −4 0 0.5 1 1.5 s/n → ρ modCS → Modified−CS (u=e=s/50) m/n=1/2 m/n=2/3 m/n=3/4 (c) Plots of ρ modC S defined in (28) Fig. 2. Plots of ρ C S and ρ C S, 2 (in (a) and (b)) and ρ modC S (in (c)) against s/n for 3 differen t values of m/n . For ρ modC S , we used u = e = s/ 50 . Notice that, for any giv en m/n , the maximum allo w ed sparsity , s/n , for ρ modC S < 1 is larger t han t hat for which either ρ C S < 1 or ρ C S, 2 < √ 2 − 1 . A lso, both are much smaller than what is observed in simulations. In Fig. 2, we plo t ρ C S , ρ C S, 2 and ρ modC S against s/n for three different choices of m/n . For ρ modC S , we use u = e = 0 . 0 2 s (from Fig. 1). As can be seen, the maxim um allowed sparsity , i.e. the maximu m allowed value o f s/n , for which eith er ρ C S < 1 or ρ C S, 2 < √ 2 − 1 is smaller than that for which ρ modC S < 1 . T hus, fo r a given nu mber of measuremen ts, m , w .h.p., modifie d -CS will g ive exact r e- construction fr om rando m-Gaussian measur ements, for lar ger sparsity sizes, s/n , than CS would . As also noted in [9], in all cases, the maxim um allowed s/ n is m uch smaller than wh at is obser ved in simu lations, bec ause of the lo oseness of th e bound s. For the same reason, the difference between CS and modified- CS is also not as significant. B. Comparison using Mo n te Carlo So far we o nly co mpared sufficient con ditions. The actual allowed s for CS may be much larger . T o actu ally comp are exact reconstru ction ab ility o f mod ified-CS with that of CS, we thu s need Monte Carlo. W e use the f ollowing proced ure to o btain a Monte Carlo estimate of the pro bability of exact reconstruc tion u sing CS an d mo dified-CS, for a gi ven A (i.e. we average over the joint distribution of x an d y given A ). 1) Fix signa l len gth, n = 256 and its sup port size, s = 0 . 1 n = 2 6 . Select m , u and e . 2) Gen erate the m × n r andom -Gaussian matrix, A (g en- erate an m × n matrix with indepen dent identically distributed (i.i.d.) ze ro mean Ga ussian e ntries and nor- malize each colum n to u nit ℓ 2 norm) 1 . 3) Repe at th e following tot = 5 00 times a) Gen erate the support, N , o f size s , uniform ly at random from [1 , n ] . b) Gen erate ( x ) N ∼ N (0 , 10 0 I ) . Set ( x ) N c = 0 . c) Set y := Ax . d) Gen erate ∆ of size u uniformly at ra ndom fro m the elements of N . e) Gen erate ∆ e of size e , un iformly a t random from the elements of [1 , n ] \ N . 1 As pointed out by an anonymous re viewe r , we actua lly do not need to normaliz e each column to unit norm. As prove d in [25], a matrix with i.i.d. zero mean Gaussian entries with varianc e 1 /n will itself satisfy the RIP . If the varian ce is not 1 /n , there will just be a s calin g f actor in the RIP . This does not affect reconstructi on performance in any way . f) Let T = N ∪ ∆ e \ ∆ . Run modified -CS, i.e. solve (6)). Call the output ˆ x modC S . g) Run CS, i. e. solve (6) with T bein g the emp ty set. Call the outpu t ˆ x C S . 4) Estima te the probability of exact reconstru ction using modified- CS by coun ting the number of times ˆ x modC S was equal to x (“equa l” was defin ed as k ˆ x modC S − x k 2 / k x k 2 < 10 − 5 ) and dividing by tot = 500 . 5) Do the same for CS using ˆ x C S . 6) Repe at for various values o f m , u an d e . W e set n = 2 5 6 and s = 0 . 1 n and we varied m b etween 0 . 16 n = 1 . 6 s and 0 . 4 n = 4 s . For each m , we varied u = | ∆ | between 0 . 04 s to s and e = | ∆ e | between 0 to 0 . 4 s . W e tabulate ou r results in T able I . Th e ca se u = s and e = 0 corr espond s to CS. Notice that wh en m is just 0 . 19 n = 1 . 9 s < 2 s , modified-CS achieves exact re construction more than 99.8 % o f the times if u ≤ 0 . 08 s an d e ≤ 0 . 08 s . In this case, CS has zer o p robab ility of exact recon struction. W ith m = 0 . 3 n = 3 s , CS has a very small (14%) chance of exact reconstruc tion. On the other han d, m odified-CS work s alm ost all the time for u ≤ 0 . 2 s a nd e ≤ 0 . 4 s . CS n eeds at least m = 0 . 4 n = 4 s to work r eliably . The above simulatio n was do ne in a fashion similar to tha t of [9]. I t d oes n ot compute the m req uired for Theo rem 1 to hold. Th eorem 1 say s th at if m is large en ough f or a g iv en s , u , e , so tha t the two condition s given th ere hold , m odified-CS will always work. But all we show above is that (a) for certain lar ge en ough values of m , the Monte Carlo estimate of the pr oba bility of exact r econstruction using mo dified-CS is on e (pr ob ability com p uted b y averaging over the joint distribution of x and y ); and (b ) when u , e ≪ s , this happ ens for much smaller va lues of m with modifi ed-CS than with CS. As poin ted out by an anonymous revie wer, Monte Carlo only compu tes expected values (h ere, expe ctation of the in di- cator function o f th e event th at exact recon struction o ccurs) and thus, it ignores the p athologic al cases which occur with zero p robab ility [26], [27]. In [26], the autho rs give a greedy pursuit algorithm to find these patholo gical cases for CS, i.e. to find the spar sest vector x for which CS d oes not give exact reconstruc tion. The supp ort size of th is vector then gives an upper bo und on th e sparsity that CS can han dle. Developing a similar appro ach for m odified-CS is a useful open p roblem . 7 T ABLE I P R O B A B I L I T Y O F E X A C T R E C O N S T R U C T I O N F O R M O D I FI E D - C S . R E C A L L T H A T u = | ∆ | , e = | ∆ e | A N D s = | N | . N O T I C E T H A T u = s A N D e = 0 C O R R E S P O N D S T O C S . (a) m = 0 . 16 n ❍ ❍ ❍ ❍ u e 0 0 . 08 s 0 . 24 s 0 . 40 s 0 . 04 s 0.9980 0.9900 0.8680 0.4100 0 . 08 s 0.8880 0.8040 0.3820 0.0580 s (CS) 0.0000 (b) m = 0 . 19 n ❍ ❍ ❍ ❍ u e 0 0 . 08 s 0 . 24 s 0 . 40 s 0 . 08 s 0.9980 0.9980 0.9540 0 .7700 0 . 12 s 0.9700 0.9540 0.7800 0 .4360 s (CS) 0.0000 (c) m = 0 . 25 n ❍ ❍ ❍ ❍ u e 0 0 . 08 s 0 . 24 s 0 . 40 s 0 . 04 s 1 1 1 1 0 . 20 s 1 1 0.9900 0.9520 0 . 35 s 0.9180 0.8220 0.6320 0.3780 0 . 50 s 0.4340 0.3300 0.1720 0.0600 s (CS) 0.0020 (d) m = 0 . 30 n ❍ ❍ ❍ ❍ u e 0 0 . 08 s 0 . 24 s 0 . 40 s 0 . 04 s 1 1 1 1 0 . 20 s 1 1 1 1 0 . 35 s 1 1 0.9940 0.9700 0 . 50 s 0.9620 0.9440 0.8740 0.6920 s (CS) 0.1400 (e) m = 0 . 40 n ❍ ❍ ❍ ❍ u e 0 0 . 40 s 0 . 04 s 1 1 0 . 20 s 1 1 0 . 35 s 1 1 0 . 50 s 1 1 s (CS) 0.9820 C. Robustness to noise Using an ano nymous reviewer’ s su ggestion, we stud ied the robustness of modified-CS to me asurement noise. Of cour se notice that in this case the true sign al, x , does no t satisfy the data con straint. Thus it is no t clear if (6) will e ven b e feasible. A correct way to appr oach noisy m easurements is to relax the data constraint as is do ne fo r CS in [5] or [22]. This is d one for m odified-CS in our recent work [2 8] an d also in [2 9]. In practice thou gh, a t least with rand om Gau ssian measure- ments an d small en ough noise, ( 6) did tu rn out to b e feasible, i.e. we wer e able find a solutio n, in all our simulation s. W e used n = 256 , s = 0 . 1 n , u = e = 0 . 08 s and m = 0 . 1 9 n . W e r an the simu lation as in step 3 of the previous subsection with the following ch ange. The measurem ents wer e g enerated as y := Ax + w where w ∼ N (0 , σ 2 w I ) . W e varied σ 2 w and compared the nor malized ro ot m ean squared err or (N- RMSE) of mod ified-CS with th at of CS in T a ble II. N-RMSE is comp uted as p E [ k x − ˆ x k 2 2 ] / E [ k x k 2 2 ] wher e E [ . ] denotes the expected value compu ted using Mon te Carlo. Recall that x N ∼ N (0 , 10 0 I ) . When the noise is small en ough, mo dified- CS has small error . CS has large error in all cases since m is too small for it. T ABLE II R E C O N S T R U C T I O N E R R O R ( N - R M S E ) F RO M N O I S Y M E A S U R E M E N T S . σ 2 w 0.001 0.01 0.1 1 10 CS 0.7059 0.7011 0.7243 0.8065 1.1531 Modified-CS 0.0366 0.0635 0.1958 0.5179 1.3794 V . E X T E N S I O N S O F M O D I FI E D - C S W e now d iscuss some key extensions - dyn amic mo dified- CS, regu larized modified- CS ( RegModCS) and dynam ic Reg- ModCS. RegModCS is useful when exact reconstru ction do es not occur - eithe r m is too small fo r exact reconstruction or the signal is compressible. Th e dyn amic versions a re for r ecursive reconstruc tion o f a time sequence of sparse signa ls. Before g oing fu rther we define th e b % -en ergy sup port. Definition 1 ( b % -en er g y support or b % -suppo rt): For sparse signals, clearly the su pport is N := { i ∈ [1 , n ] : x 2 i > 0 } . For compr essible signals, we misuse notation slightly and let N be the b % -ene rgy support , i.e. N := { i ∈ [1 , n ] : x 2 i > ζ } , wher e ζ is the largest real number for which N co ntains at least b % of th e signal energy , e.g. b = 99 in Fig . 1. A. Dynamic Modified -CS: Mo dified-CS for Recu rsive Rec on- struction o f Signa l Sequen ces The most importan t app lication of mo dified-CS is fo r re- cursive reconstru ction of time sequ ences of sp arse or co m- pressible signals. T o apply it to tim e sequences, at eac h time t , we solve ( 6) with T = ˆ N t − 1 where ˆ N t − 1 is the support estimate from t − 1 and is compu ted using (7). At t = 0 we can either initialize with CS, i.e. set T to be the emp ty set, or with mod ified-CS with T being the support av ailab le from prior kn owledge, e.g. fo r wav elet sp arse images, T co uld b e the set of indices of the app roxima tion coefficients. The prio r knowledge is usually not very accur ate and th us at t = 0 one will usu ally n eed mo re m easurements i.e. one will need to use y 0 = A 0 x 0 where A 0 is an m 0 × n measuremen t matrix with m 0 > m . The full algorithm is summarized in Algo rithm 1. Threshold Selection. If m is large enough for exact reconstruc tion, th e support estima tion thresho ld, α , ca n be set to zero. In case o f very accurate reconstru ction, if we set α to be e qual/slightly smaller th an the magn itude of the smallest element o f the support, it will ensure zero misses and fewest false addition s. As m is reduced fu rther (err or increases), α should be increased furthe r to prevent too many false add itions. For com pressible signals, one shou ld do the a bove but with “suppor t” replaced by the b % -supp ort. For a giv en m , b sho uld be ch osen to be just large en ough so that the elements of the b % -suppo rt can be exactly reconstruc ted. Alternatively , o ne can use the approach prop osed in [13, Section II]. First, only d etect add itions to the suppo rt using a small thresho ld (or keep adding largest elements into T and stop w hen the con dition num ber of A T becomes to o la rge); then compu te an LS estimate on that suppor t and th en use this LS estimate to perform sup port de letion, typ ically , using a larger threshold. If there are few misses in th e suppo rt addition step, the LS estimate will ha ve lower erro r than the ou tput of modified- CS, thu s makin g deletio n more accurate. 8 Algorithm 1 Dynamic Modified-CS At t = 0 , compu te ˆ x 0 as the solution of min β k ( β ) T c k 1 , s.t. y 0 = A 0 β , where T is either empty or is av ailable fr om prior knowledge. Compu te ˆ N 0 = { i ∈ [1 , n ] : ( ˆ x 0 ) 2 i > α } . For t > 0 , d o 1) Mo d ified-CS. L et T = ˆ N t − 1 . Comp ute ˆ x t as the solution of min β k ( β ) T c k 1 , s.t. y t = Aβ . 2) Estima te the Support. ˆ N t = { i ∈ [1 , n ] : ( ˆ x t ) 2 i > α } . 3) Ou tput th e recon struction ˆ x t . Feedback ˆ N t , increment t , and go to step 1. B. RegModCS: R e g ularized Modifie d-CS So far we on ly used prior knowledge about the suppor t to reduce the m r equired for exact recon struction or to reduce the erro r in cases where exact recon struction is no t possible. If we also know som ething ab out how the sign al along T was gen erated, e.g . we know that the elements o f x T were generated fro m some distribution with mean µ T , we can use this knowledge 2 to red uce th e recon struction er ror by solv ing min β k ( β ) T c k 1 + γ k ( β ) T − µ T k 2 2 s.t. y = Aβ (30) W e call the ab ove Regularized Modified- CS or RegModCS. Denote its outpu t by ˆ x r eg . W e ran a Monte Carlo simulation to compar e Mod ified-CS with RegModCS for spa rse signals. W e fixed n = 256 , s = 26 ≈ 0 . 1 n , u = e = 0 . 0 8 s . W e used m = 0 . 1 6 n, 0 . 12 n, 0 . 11 n in th ree sets of simulations done in a fashion similar to that of Sec. IV -B, but with th e following change. In eac h ru n of a simulation, we gen erated each elem ent of µ N \ ∆ to be i.i.d . ± 1 with prob ability (w . p.) 1/2 an d each element of µ ∆ and of µ ∆ e to be i.i.d . ± 0 . 25 w .p. 1 /2. W e gen erated x N ∼ N ( µ N , 0 . 01 I ) and we set x N c = 0 . W e set y := Ax . W e tested RegModCS with various values of γ ( γ = 0 correspo nds to m odified- CS). W e used tot = 50 . The r esults are tabulated in T able III. W e com puted the exact rec onstruction probab ility as in Sec. IV -B by cou nting th e numb er of time s ˆ x r eg equals x an d normalizin g. As can be seen, RegModCS does not improve the exact reconstru ction probability , in fact it can reduc e it. Th is is primarily b ecause th e elements of ( ˆ x r eg ) ∆ e are of ten n onzero, though small 3 . But, it sign ificantly redu ces th e reco nstruction error, particu larly when m is small. C. Setting γ using an MAP interpr etation of Re gModCS One way to select γ is to interpret the solutio n of (30) as a maximum a posteriori ( MAP) estimate under th e f ollowing prior mo del and un der the obser vation model of (4). Gi ven the prior sup port and signa l estimates, T an d µ T , assume that x T and x T c are mu tually in depend ent and p ( x T | T , µ T ) = N ( x T ; µ T , σ 2 p I ) , p ( x T c | T , µ T ) = 1 2 b p | T c | e − k x T c k 1 b p , (31) 2 Because of error in T , this knowl edge is also not complet ely correct . 3 But if w e use ˆ x r eg to first estimate the support using a small threshold, α , and then estimate the signal as A ˆ N † y , this probabili ty does not decrease as much and in fact it e ven increa ses when m is smaller . T ABLE III C O M PA R I N G P R O B A B I L I T Y O F E X A C T R E C O N S T R U C T I O N ( P R O B ) A N D R E C O N S T R U C T I O N E R R O R ( E R R O R ) O F R E G M O D C S W I T H D I FF E R E N T γ ’ S . γ = 0 C O R R E S P O N D S T O M O D I FI E D - C S . (a) m = 0 . 16 n γ 0 (modCS) 0.001 0.05 0.1 0.5 1 prob 0.76 0.76 0.74 0.74 0.70 0.34 error 0.0484 0 .0469 0.0421 0.0350 0.0273 0.0286 (b) m = 0 . 12 n γ 0 (modCS) 1 prob 0.04 0 error 0.2027 0.0791 (c) m = 0 . 11 n γ 0 (modCS) 1 prob 0 0 error 0.378 3 0.0965 i.e. all elements of x are mutu ally ind epende nt; each e lement of T c is zero m ean Lap lace distributed with p arameter b p ; an d the i th element of T is Gaussian with mean µ i and variance σ 2 p . Under the above mod el, if γ = b p / 2 σ 2 p in (30), then, clearly , its solution, ˆ x r eg , will be an MAP solution. Giv en i.i.d . train ing d ata, th e m aximum likeliho od estimate (MLE) of b p , σ 2 p can b e ea sily co mputed in closed fo rm. D. Dynamic Re gu larized Modified -CS (R e g ModCS) T o apply RegModCS to time sequences, we solve ( 30) with T = ˆ N t − 1 and µ T = ( ˆ x t − 1 ) T . Th us, we use Algorith m 1 with step 1 replaced b y min β k ( β ) ˆ N c t − 1 k 1 + γ k ( β ) ˆ N t − 1 − ( ˆ x t − 1 ) ˆ N t − 1 k 2 2 s.t. y t = Aβ (32) and in the last step of Algo rithm 1, we feed bac k ˆ x t and ˆ N t . In Appendix C, we gi ve the conditio ns under which the solution of (32) becomes a causal MAP estimate. T o su mma- rize th at d iscussion, if we set γ = b p / 2 σ 2 p where b p , σ 2 p are the paramete rs o f the signal model given in Appendix C, and if we assum e that the previous signa l is p erfectly estimated from y 0 , . . . y t − 1 with th e estimate bein g zero ou tside ˆ N t − 1 and equal to ( ˆ x t − 1 ) ˆ N t − 1 on it, then th e solution of (32) will be the causal M AP solu tion u nder that model. In prac tice, the model para meters are usually not known. But, if we have a training time seq uence of signals, we can compute th eir MLE s using (42), also given in Appendix C. V I . R E C O N S T R U C T I N G S PA R S I FI E D / T R U E I M AG E S F R O M S I M U L A T E D M E A S U R E M E N T S W e simulated two applicatio ns: CS-based image/vid eo com- pression (or single-p ixel camera imag ing) a nd static/dynam ic MRI. The m easurement m atrix was A = H Φ where Φ is the sparsity basis of the image and H m odels th e m easurement acquisition. All operation s are explained by rewriting the image as a 1 D vector . W e u sed Φ = W ′ where W is an orthon ormal m atrix corr espondin g to a 2D-DWT for a 2-level Daubechies-4 wa velet. For video compr ession (or single-pixel imaging) , H is a random Ga ussian matrix, denoted G r , (i.i.d. zero mean Gau ssian m × n matrix with column s no rmalized to un it ℓ 2 norm) . For MRI, H is a partial F o u rier ma trix, i. e. H = M F wher e M is an m × n mask wh ich con tains a single 1 at a different rand omly selected location in each row and all 9 other entries are zero and F is the matrix co rrespon ding to the 2D discrete F ourier tr ansform ( DFT). N-RMSE, defined here as k x t − ˆ x t k 2 / k x t k 2 , is used to compare the recon struction perf ormance . W e first used the sparsified and then th e true image and the n did the same for image sequ ences. In all cases, the im age was sparsified by computin g its 2 D-DWT , retain ing the coefficients fr om the 99%-energy suppo rt while setting o thers to zero and taking the in verse DWT . W e used the 2 -level Daubechie s-4 2D- DW T as the sp arsifying basis. W e compa re mo dified-CS and RegModCS with simple CS, CS-diff [1 8] an d LS-CS [13]. For solving the minimization prob lems giv en in (6) a nd (30), we used CVX, http://www .stanfor d.edu/ ∼ boyd/cvx/, for smaller sized p roblems ( n < 4096 ). All simu lations of Sec. IV and all results of T able IV and Figs. 3, 4 used CVX. For bigger signals/images, (i) the size of th e matrix A b ecomes too large to sto re on a PC (need ed by m ost existing solvers inc luding the o nes in CVX) and (ii) dire ct matrix m ultiplications take too muc h time. For bigger images and structu red matrices like DFT times D WT , we wrote our own solver for (6) by using a modification of the code in L1Ma gic [30]. W e show results using this code on a 256 × 256 larynx image sequen ce ( n = 6553 6 ) in Fig. 5. This code used the o perator form of primal-du al interior point metho d. W ith this, one only nee ds to store the samp ling mask which takes O ( n ) bits of storag e and one uses FFT and fast D WT to perfor m m atrix-vector multipli- cations in O ( n log n ) time instead of O ( n 2 ) time. In fact for a b × b imag e the cost difference is O ( b 2 log b ) versus O ( b 4 ) . All our code, for both small and large p roblems, is posted online at http://www .ece.iastate.ed u/ ∼ namrata/Sequ entialCS.html . This page also links to mo re experimental r esults. A. Spa rsified an d T rue (Compr essible) Sin gle Image W e first evaluated the single im age recon struction prob lem for a spar sified image. T he image used was a 32 × 3 2 cardiac imag e (obtain ed by decimating the full 128 × 128 cardiac image sh own in Fig. 1), i.e. n = 10 24 . Its sup port size s = 10 7 ≈ 0 . 1 n . W e used th e set of indice s of the approx imation coefficients as the kn own part of the suppor t, T . Thu s, k = | T | = 64 and so u = | ∆ | ≥ 43 wh ich is a significantly large fr action of s . W e compa re the N-RMSE in T able IV. Even with such a large un known suppo rt size, modified- CS a chieved exact recon struction from 2 9% ra ndom Gaussian and 19% partial Fourier me asurements. CS err or in these cases was 34 % and 13% respecti vely . W e also d id a compar ison for actua l cardiac and larynx images (which are only ap proxim ately sp arse). The results are tabulated in T able IV. Modified-CS works better than CS, though no t by much since | ∆ | is a large fraction o f | N | . Here N refers to the b % sup port fo r any large b , e.g. b = 99 . B. Spa rsified Image S equence s W e com pared modified-CS with simple CS (CS at each time instant), CS-diff an d LS-CS [13] for the spar sified 32 × 32 cardiac seque nce in Fig. 3. Mo dified-CS was implemen ted as in Algorithm 1. At t = 0 , the set T was empty and we used 50% measuremen ts. For this seq uence, | N t | ≈ 0 . 1 n = 107 , 5 10 15 20 10 −15 10 −10 10 −5 10 0 time → N−RMSE Simple CS Modified−CS CS−diff LS−CS (a) H = G r , m 0 = 0 . 5 n , m = 0 . 16 n 5 10 15 20 10 −15 10 −10 10 −5 10 0 time → N−RMSE Simple CS Modified−CS CS−diff LS−CS (b) H = M F , m 0 = 0 . 5 n , m = 0 . 16 n Fig. 3. Reconstructing the spar si fied 32 × 32 cardiac image sequence. s ≈ 0 . 1 n , u ≈ 0 . 01 n , e ≈ 0 . 005 n . (a) H = G r , (b) H = M F . Similar results were also obtained for the larynx sequen ce. T hese are sho wn in [2, Fig. 3] (not repeated here due to lack of space). T ABLE IV R E C O N S T R U C T I O N E R R O R ( N - R M S E ) Sparsified True T rue Cardiac Cardiac Larynx CS ( H = G r , m = 0 . 29 n ) 0.34 0.36 0.090 Mod-CS ( H = G r , m = 0 . 29 n ) 0 0.14 0.033 CS ( H = M F , m = 0 . 19 n ) 0.13 0.12 0.097 Mod-CS ( H = M F , m = 0 . 19 n ) 0 0.11 0.025 u = | ∆ | ≤ 10 ≈ 0 . 0 1 n and e = | ∆ e | ≤ 5 ≈ 0 . 005 n . Since u ≪ | N t | and e ≪ | N t | , mo dified-CS achieves exact reconstruc tion with a s few a s 16% measuremen ts at t > 0 . Fig. 3(a) u sed H = G r (compr ession/single-pixel imag ing) and Fig. 3(b) used H = M F ( MRI). As can b e seen, simple CS has very large erro r . CS-diff and LS-CS also have significantly nonzer o error since the exact sparsity size of b oth the sign al difference and the signal residua l is e qual to/larger than the signal’ s sparsity size. Modified -CS err or is 10 − 8 or less (exact for numerical implemen tation) . Similar con clusions were also obtained for th e sparsified lary nx sequence, see [2, Fig. 3]. This is not repeated here due to lack o f space. C. T rue (Compr e ssible) Image Seque n ces Finally we did the co mparison for actual im age sequences which are only co mpressible. W e show results o n the larynx (vocal tract) im age seq uence of Fig. 1. For Fig. 4, we used a 32 × 32 b lock of it with r andom Ga ussian measu rements. For Fig. 5 we used the entire 25 6 × 256 image sequence with p artial Fourier m easurements. At t = 0 , modified-CS , RegModCS and LS-CS used T to be the set of indices of the appr o ximation coefficients. For th e subfigures in Fig. 4, we u sed H = G r (rando m Gaussian) and m 0 = 0 . 19 n . Fig. 4(a) and 4 (b) used m = 0 . 19 n, 0 . 06 n respectiv ely . At each t , RegModCS-MAP solved (32) with b p , σ 2 p estimated u sing (42) from a few frames of the sequence treated as training d ata. The resulting γ = ˆ b p / 2 ˆ σ 2 p was 0. 007. RegModCS-exp-o pt solved (30) with T = ˆ N t − 1 , µ T = ( ˆ x r eg,t − 1 ) T and we experimen ted with many values o f γ and chose th e one which ga ve the smallest err or . Notice from Fig. 4(a) that RegModCS-MA P gives MSEs which are very close to those of RegModCS-exp- opt. Fig. 5 shows r econstructio n of the full larynx s equen ce u sing H = M F , m = 0 . 1 9 n and three choices of m 0 . In 5( a), we compare th e re constructed image sequen ce u sing modified-CS 10 2 4 6 8 10 10 −1 10 0 time → N−RMSE Simple CS Modified−CS CS−diff RegModCS−exp−opt RegModCS−MAP LS−CS (a) H = G r , m 0 = 0 . 19 n , m = 0 . 19 n 2 4 6 8 10 10 −1 10 0 time → N−RMSE Simple CS Modified−CS CS−diff RegModCS−MAP LS−CS (b) H = G r , m 0 = 0 . 19 n , m = 0 . 06 n Fig. 4. Reconstructing a 32 × 32 block of the actual ( compress ible) larynx sequence from random Gaussian measurements. n = 1024 , 99%-ener gy support size, s ≈ 0 . 07 n , u ≈ 0 . 001 n and e ≈ 0 . 002 n . Modified-CS used α = 50 2 when m = 0 . 19 n and i ncreased it to α = 80 2 when m = 0 . 06 n . with th at using simple CS. The error (N-RMSE) was 8-11% for CS, while it was stab le at 2% o r lesser f or mo dified-CS. Since m 0 is large eno ugh for CS to work, the N-RMSE of CS- diff (n ot shown) also started at a small value of 2% for the first few fr ames, but kept increasing slo wly over time. In 5(b), 5(c), we show N- RMSE co mparisons with simple CS, CS-diff and LS-CS. I n the plot shown, the LS-CS err or is c lose to that o f mod ified-CS b ecause we impleme nted LS estimation using conjug ate grad ient and did not allow the solu tion to conv erge (for cibly ran it with a redu ced n umber of iterations). W itho ut th is tweekin g, LS-CS err or was much higher, since the computed initial LS estimate itself was inaccurate. Original sequence CS−reconstructed sequence Modified CS reconstructed sequence (a) Reconstructed sequence. H = M F . m = 0 . 19 n , m 0 = 0 . 5 n . 5 10 15 20 10 −2 10 −1 Time → N−RMSE CS−diff Mod−CS CS LS−CS (b) H = M F , m 0 = 0 . 2 n , m = 0 . 19 n 5 10 15 20 10 −2 10 −1 Time → N−RMSE CS−diff Mod−CS CS (c) H = M F , m 0 = 0 . 19 n , m = 0 . 19 n Fig. 5. R econstructing the 256x 256 actual (compre ssible) vocal t ract (larynx) image sequenc e from simulated MRI measurements, i.e. H = M F . All three figures used m = 0 . 19 n for t > 0 but used different v alues of m 0 . Image size, n = 256 2 = 6553 6 . 99% energy support, | N t | ≈ 0 . 07 n ; u ≈ 0 . 001 n . In F ig. 5(a), modified-CS used α = 10 2 which is the smallest magnitude element in the 99% support. Notice from both Figs. 4 and 5, that mo d ifiedCS and Re g- ModCS significa ntly outperform CS a nd CS-diff. In most ca ses, both also outperform LS- CS. RegModCS a lways outperf orms all the others, with the difference being largest wh en m is smallest, i.e. in Fig. 4 (b). In Figs. 4 a nd 5(c ), CS-diff perfor ms so poorly , in part, because the initial error at t = 0 is very large (since we use on ly m 0 = 0 . 19 n ). As a result th e d ifference signal at t = 1 is not compr essible enough, makin g its error large an d so o n. But even when m 0 is larger a nd the initial error is small, e.g. in Fig. 5( b), CS-diff is still the worst and its err or still increases over time, th ough m ore slowly . V I I . C O N C L U S I O N S A N D F U T U R E D I R E C T I O N S W e studied th e problem of reconstru cting a sparse signal from a limited num ber o f its line ar pro jections wh en the support is p artly known (althou gh the known part may contain some error s). Denote the kn own sup port by T . Mo dified- CS solves an ℓ 1 relaxation of the following pro blem: find the signal th at is sparsest ou tside of T a nd that satisfies the data con straint. W e derived sufficient cond itions fo r exact reconstruc tion u sing mod ified-CS. These are much wea ker than th ose for CS when the sizes of the unknown part of the support an d of erro rs in the known part are sm all compared to the supp ort size. An imp ortant extension, called RegModCS, was developed that also uses pr ior signal estimate knowledge. Simulation results showing greatly improved perfor mance of modified- CS and Re gModCS u sing both ran dom Gaussian and partial Fourier measurements were shown. The curren t work does not bou nd the error either under noisy measu rements or for co mpressible signals or for the TV norm. The former is don e in [28], [3 1] fo r mo dified- CS and Re gMod CS respe cti vely , an d, in p arallel, also in [29] for modified-CS. A more impor tant question for recur si ve reconstruc tion o f signal sequenc es from noisy m easurements, is the stability of the err or over tim e (i.e. h ow to obtain a time-inv arian t and small bound on the er ror over time). This is studied in ongoin g work [32]. The stability of RegModCS over tim e is a much more difficult an d currently open question. This is d ue to its dependen ce on both th e pr evious support and the pr evious signal estimates. A key a pplication of our w ork is for recursiv e reconstruction of time sequ ences of (ap proxim ately) spa rse sign als, e. g. fo r real-time dy namic MRI. As p ointed out by a n anonym ous revie wer, many MRI pro blems minimize th e total variation (TV) no rm. Th e mod ified-CS idea can b e ap plied easily for the TV norm as fo llows. Let T conta in the set of pixel in dices whose spatial gradien t m agnitud e was nonzer o at the previous time (or should be nonzero based on some other a vailable prior knowledge). Min imize the TV n orm of the image alon g all pixels not in T sub ject to the d ata constrain t. Also, by designing ho motopy method s, similar to those in [17] for CS, one can efficiently hand le sequentially arriving measur ements and th is can be very useful fo r MRI application s. A P P E N D I X Recall th at k = | T | , u = | ∆ | , e = | ∆ e | an d s = | N | . 11 A. Pr oo f of Pr opo sition 1 The proof follows by contradictio n. Sup pose that we can find two different so lutions β 1 and β 2 that satisfy y = Aβ 1 = Aβ 2 and have the same ℓ 0 norm, u , along T c . Thus β 1 is nonzer o along T (or a sub set of it) and some set ∆ 1 of size u while β 2 is nonzero along T (or a subset of it) and some set ∆ 2 also of size u . The sets ∆ 1 and ∆ 2 may o r may not overlap. Thus A ( β 1 − β 2 ) = 0 . Since ( β 1 − β 2 ) is sup ported on T ∪ ∆ 1 ∪ ∆ 2 , this is equ iv alent to A T ∪ ∆ 1 ∪ ∆ 2 ( β 1 − β 2 ) T ∪ ∆ 1 ∪ ∆ 2 = 0 . But if δ k +2 u < 1 , A T ∪ ∆ 1 ∪ ∆ 2 is full r ank an d so the only way this ca n hap pen is if β 1 − β 2 = 0 , i.e β 1 = β 2 . Therefo re there can be on ly one solution with ℓ 0 norm u along T c that satisfies th at data constra int. Sinc e x is on e such solution, any other solutio n has to b e equa l to x . B. Pr oo f of Theor em 1 W e construc t a w that satisfies the con ditions of Lemma 1 by applying Lemma 2 iteratively as follows and de fining w using (37) below . At iteration z ero, we apply Le mma 2 with T d ≡ ∆ (so that S ≡ u ), c j ≡ sgn ( x j ) ∀ j ∈ ∆ (so that k c k 2 = √ u ), and with ˇ S ≡ u . Lemm a 2 can be ap plied because δ u + δ k + θ 2 k,u < 1 (follows f rom condition 1 of the theorem) . Fro m Lemma 2, there exists a w 1 and an exceptional set T d, 1 , disjoint with T ∪ ∆ , of size less than ˇ S = u , s.t. A j ′ w 1 = 0 , ∀ j ∈ T A j ′ w 1 = sgn ( x j ) , ∀ j ∈ ∆ | T d, 1 | < u k A T d, 1 ′ w 1 k 2 ≤ a k ( u, u ) √ u | A j ′ w 1 | ≤ a k ( u, u ) , ∀ j / ∈ T ∪ ∆ ∪ T d, 1 k w 1 k 2 ≤ K k ( u ) √ u (33) At iteration r , apply L emma 2 with T d ≡ ∆ ∪ T d,r (so that S ≡ 2 u ), c j ≡ 0 ∀ j ∈ ∆ , c j ≡ A j ′ w r ∀ j ∈ T d,r and ˇ S ≡ u . Call the exceptional set T d,r +1 . Lemma 2 can be app lied because δ 2 u + δ k + θ 2 k, 2 u < 1 (con dition 1 o f the theorem ). From Lemma 2, th ere exists a w r +1 and an exception al set T d,r +1 , disjoint with T ∪ ∆ ∪ T d,r , of size less than ˇ S = u , s.t. A j ′ w r +1 = 0 ∀ j ∈ T A j ′ w r +1 = 0 , ∀ j ∈ ∆ A j ′ w r +1 = A j ′ w r , ∀ j ∈ T d,r | T d,r +1 | < u k A T d,r +1 ′ w r +1 k 2 ≤ a k (2 u, u ) k A T d,r ′ w r k 2 | A j ′ w r +1 | ≤ a k (2 u, u ) √ u k A T d,r ′ w r k 2 ∀ j / ∈ T ∪ ∆ ∪ T d,r ∪ T d,r +1 k w r +1 k 2 ≤ K k (2 u ) k A T d,r ′ w r k 2 (34) Notice that | T d, 1 | < u (at iteration zero) and | T d,r +1 | < u (at iteration r ) ensures that | ∆ ∪ T d,r | < S = 2 u f or all r ≥ 1 . The last three equations of (34), combined with the fourth equation o f (3 3), simplify to k A T d,r +1 ′ w r +1 k 2 ≤ a k (2 u, u ) r a k ( u, u ) √ u | A j ′ w r +1 | ≤ a k (2 u, u ) r a k ( u, u ) , ∀ j / ∈ T ∪ ∆ ∪ T d,r ∪ T d,r +1 (35) k w r +1 k 2 ≤ K k (2 u ) a k (2 u, u ) r − 1 a k ( u, u ) √ u (36) W e c an define w := ∞ X r =1 ( − 1) r − 1 w r (37) Since a k (2 u, u ) < 1 , k w r k 2 approa ches zero with r , and so the above summation is absolutely conver gent, i.e. w is well- defined. From th e first two equa tions of (33) and (34), A j ′ w = 0 , ∀ j ∈ T A j ′ w = A j ′ w 1 = sgn ( x j ) , ∀ j ∈ ∆ (38) Consider A j ′ w = A j ′ P ∞ r =1 ( − 1) r − 1 w r for some j / ∈ T ∪ ∆ . If fo r a given r , j ∈ T d,r , th en A j ′ w r = A j ′ w r +1 (gets canceled by the r + 1 th term). I f j ∈ T d,r − 1 , th en A j ′ w r = A j ′ w r − 1 (gets canceled by the r − 1 th term). Since T d,r and T d,r − 1 are disjoint, j cannot b elong to both of them. Thus, A j ′ w = X r : j / ∈ T d,r ∪ T d,r − 1 ( − 1) r − 1 A j ′ w r , ∀ j / ∈ T ∪ ∆ (39 ) Consider a gi ven r in th e above summation. Since j / ∈ T d,r ∪ T d,r − 1 ∪ T ∪ ∆ , we can use (3 5) to get | A j ′ w r | ≤ a k (2 u, u ) r − 1 a k ( u, u ) . Thus, fo r all j / ∈ T ∪ ∆ , | A j ′ w | ≤ X r : j / ∈ T d,r ∪ T d,r − 1 a k (2 u, u ) r − 1 a k ( u, u ) ≤ a k ( u, u ) 1 − a k (2 u, u ) (40) Since a k (2 u, u ) + a k ( u, u ) < 1 ( condition 2 of the theo rem), | A j ′ w | < 1 , ∀ j / ∈ T ∪ ∆ (41) Thus, fr om (38) and (41), we h av e foun d a w that satisfies the cond itions o f Lem ma 1. Fro m conditio n 1 of the theore m, δ k + u < 1 . Applying Lemma 1, the claim follows. C. Causal MAP Interpretation o f Dyn amic RegModCS The solution o f (32) becomes a causal M AP estimate under the f ollowing assumptions. L et p ( X | Y ) denote th e co nditional PDF of X of given Y a nd let δ ( X ) den ote th e Dirac delta function . Assume that 1) th e random pr ocesses { x t } , { y t } satisfy the h idden Markov model pro perty; p ( y t | x t ) = δ ( y t − Ax t ) (re- statement o f the observation m odel); and p ( x t | x t − 1 ) = p (( x t ) N t − 1 | x t − 1 ) p (( x t ) N c t − 1 | x t − 1 ) , where p (( x t ) N t − 1 | x t − 1 ) = N (( x t ) N t − 1 ; ( x t − 1 ) N t − 1 , σ 2 p I ) p (( x t ) N c t − 1 | x t − 1 ) = 1 2 b p | N c t − 1 | exp − k ( x t ) N c t − 1 k 1 b p ! 12 i.e. g iv en x t − 1 (and h ence given N t − 1 ), ( x t ) N t − 1 and ( x t ) N c t − 1 are cond itionally ind ependen t; ( x t ) N t − 1 is Gaussian with mean ( x t − 1 ) N t − 1 while ( x t ) N c t − 1 is zero mean L aplace. 2) x t − 1 is pe rfectly estimated from y 0 , y 1 , . . . y t − 1 , and p ( x t − 1 | y 0 , . . . y t − 1 ) = δ x t − 1 − " ( ˆ x t − 1 ) ˆ N t − 1 0 ˆ N c t − 1 #! 3) ˆ x t is the solution o f (32) with γ = b p 2 σ 2 p . If the first two a ssumptions above hold , it is easy to see th at the “causal posterio r” at time t , p ( x t | y 1 , . . . y t ) , satisfies p ( x t | y 1 , . . . y t ) = C δ ( y t − Ax t ) e − k ( x t ) T − ( ˆ x t − 1 ) T k 2 2 2 σ 2 p e − k ( x t ) T c k 1 b p where T := ˆ N t − 1 and C is th e nor malizing constant. Clearly , the secon d assum ption is only an app roximatio n since it assumes that the posterior estimate of x t − 1 is exactly sparse. If th e last assum ption also holds, then the solutio n of (32) is a maximizer o f p ( x t | y 1 , . . . y t ) , i.e. it is a c ausal MAP solutio n. The MLE o f b p , σ 2 p can be co mputed from a train ing time sequence of signals, ˜ x 0 , ˜ x 1 , ˜ x 2 , . . . ˜ x t max as follows. Den ote their supports ( b % -en ergy su pports in case of co mpressible signal sequ ences) b y ˜ N 0 , ˜ N 1 , . . . ˜ N t max . Then the MLE is ˆ b p = P t max t =1 k ( ˜ x t ) ˜ N c t − 1 k 1 P t max t =1 | ˜ N c t − 1 | , ˆ σ 2 p = P t max t =1 k ( ˜ x t − ˜ x t − 1 ) ˜ N t − 1 k 2 2 P t max t =1 | ˜ N t − 1 | (42) R E F E R E N C E S [1] N. V aswani and W . L u, “Modified-cs: Modifying compressi ve sensing for problems with partiall y kno wn support, ” in IEEE Intl. Symp. Info. Theory (ISIT) , June 2009. [2] W . Lu and N. V aswan i, “Modified compressi ve sensing for real-ti me dynamic mr imaging, ” in IEE E Intl. Conf. Image Pr oc. (ICIP) , 2009. [3] I. Carron, “Nuit blanche, ” in http:/ / nuit- blanc he.blogspot.com/ . [4] “Rice compressi ve sensing resources, ” in http:// www- dsp.rice.edu / cs . [5] S. Chen, D. Donoho, and M. Saunders, “ Atomic decomposition by basis pursuit, ” SIAM Journa l of Scientific Computati on , vol . 20, pp. 33–61, 1998. [6] D. Wipf and B. Rao, “Sparse bayesian learning for basis selectio n, ” IEEE T rans. Sig. Proc. , vol. 52, pp. 2153–2164, Aug 2004. [7] E. Candes, J. Ro mberg, an d T . T ao, “Robust uncertai nty principl es: E xact signal reconstruct ion from highly incomple te frequenc y information, ” IEEE T rans. Info. Th. , vol. 52(2), pp. 489–509, February 2006. [8] D. Donoho, “Compressed sensing, ” IEEE T rans. on Information Theory , vol. 52(4), pp. 1289–1306, April 2006. [9] E. Cande s and T . T ao, “Dec oding by linear programming, ” IEEE Tr ans. Info. Th. , vol . 51(12), pp. 4203 – 4215, Dec. 2005. [10] J. A. Tropp, “Just relax: Con vex programming methods for identifying sparse signals, ” IEEE T rans. Info. Th. , pp. 1030–1051, March 2006. [11] E. Candes and T . T ao, “The dantzig selec tor: statist ical estimati on when p is much larg er than n, ” Annals of Statistic s , 2006. [12] N. V aswani, “Kalman filtered compressed sensing, ” in IE EE Intl. Conf . Imag e Pr oc. (ICIP) , 2008. [13] ——, “Ls-cs-residual (ls-cs): Compressiv e s ensing on the least squares residual , ” IEEE T rans. Sig. Proc. , vol. 58 (8), pp. 4108–4120, August 2010. [14] A. Khajehnejad, W . Xu, A. A vestimehr , and B. Hassibi, “W eighted l1 minimizat ion for sparse recov ery with prior information , ” in IE EE Intl. Symp. Info. Theory (ISIT) , June 2009. [15] C. J. Miosso, R. v on Borries, M. Argez, L. V alazquez, C. Quintero, and C. Potes, “Compressi ve sensing recon struction with prior information by ite rati vely re weighted least-squa res, ” IEEE T rans. Sig . Pro c. , vol. 57 (6), pp. 2424–243 1, June 2009. [16] D. Angelosante and G. Giannakis, “Rls-weighted lasso for adapt iv e estimati on of sparse signals, ” in IE EE Intl. Conf. Acoustics, Speec h, Sig. Pr oc. (ICASSP) , 2009. [17] M. Asif and J. Romberg, “Dynamic updating for s parse time v arying signals, ” in CISS , 2009. [18] V . Cevhe r , A. Sankaranara yanan, M. Duarte, D. Redd y , R. Baraniuk, and R. Chellappa, “Compressi ve sensing for background subtraction, ” in Eur . Conf. on Comp. V is. (ECCV) , 2008. [19] J. Park and M. W akin, “ A m ultisca le framew ork for compressiv e sensing of video, ” in Pictur e Coding Symposium (PCS) , May 2009. [20] B. K. Natarajan, “Sparse approximate solutions to linea r systems, ” SIAM J . Comput. , vol . 24, pp. 227–234, 1995. [21] S. Boyd and L. V andenber ghe, Con vex Optimization . Cambridge Uni versi ty Press, 2004. [22] E. Candes, “The restri cted isometry property and its implications for compressed sensing, ” Compte R endus de l’Academi e des Sci ences, P aris, Serie I , pp. 589–592, 2008. [23] S. Foucart and M. J. Lai, “Sparsest solutions of underde termined linea r systems via ell-q-min imizati on for 0 ¡= q ¡= 1, ” A pplied and Computati onal Harmonic Analysis , vol . 26, pp. 395–407, 2009. [24] D. Needell and J. Tropp., “Cosamp: Iterati ve signa l recov ery from incomple te and inaccu rate samples, ” Appl. Comp. Harmonic Anal. , T o Appear . [25] R. Baraniuk, M. Da venport , R. DeV ore, and M. W akin, “ A simple proof of the restricted isometry property for random m atrice s, ” Constructive Appr oximation , vol . 28(3), pp. 253–263, D ec 2008. [26] C. Dossal, G. Peyre , and J. Fadili, “ A numeri cal ex ploratio n of com- pressed sampling recove ry , ” in Signal Pr ocessing with Adaptive Sparse Structur ed Repre sentation s (SP ARS) , 2009. [27] C. Dossal, “ A necessary and suf ficient conditio n for exac t recovery by l1 minimizatio n, ” in Prepri nt , 2007. [28] W . Lu and N. V aswani, “Modified bpdn for noisy compressiv e sensing with partially kno wn support, ” in IEEE Intl. Conf. Acoustics, Speec h, Sig. Pr oc. (ICASSP) , 2010. [29] L. J acques, “ A short note on compressed sensing with partially known signal support, ” A rXiv pre print 0908.0660 , 2009. [30] E. Candes and J. Romberg, “L1 Magic Users Guide, ” Octobe r 2005. [31] W . L u and N. V aswani, “Regular ized modified bpdn for compressiv e sensing with partially kno wn support, ” in ArXiv preprint , 2010. [32] N. V aswani, “Stabilit y (ove r time ) of m odified-cs and ls-cs for recursi ve causal sparse reconstruc tion, ” in ArXiv pr eprint arXiv:1006.4818 , 2010. B I O G R A P H Y Namrata V aswani re ceiv ed a B.T ech . from the Indian Institute of T echn ology (IIT ), Delhi, in Aug ust 19 99 an d a Ph.D. from the University o f Mary land, College Park, in August 2004, both in electrical engineer ing. From 200 4 to 2005, she was a research scientist at Georgia T ech. Since Fall 2 005, she has been an Assistant Profe ssor in the ECE departmen t at Iowa State University . She is currently serving as an Associate Editor for the IEEE Transactions o n Signal Processing (2009-pr esent). Her research interests are in estimatio n and detection problem s in sequential signal pro cessing and in biomed ical imaging. Her curren t focus is on recursive sparse reconstruc tion problem s, sequen tial com pressiv e sensing and large d imensional trac king pro blems. W ei Lu r eceived the B.E. degree fro m the Departmen t of Electrical En gineering f rom N anjing Un iv ersity of Posts and T eleco mmunic ations, China,in 20 03. He received M.E. degree in Department of Electr onic Engineering an d In formation Science f rom University of Science a nd T echnolog y of China in 20 06. He is curren tly a Ph.D student in the Dep artment of Electrical and Comp uter Enginee ring in Iowa State Un iv ersity . His current research is fo cused o n Modified Com pressiv e Sensing for reconstru ction of spar se sign als. His research interests includes signal processing and image p rocessing.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment