Finite State Machine Based Evaluation Model for Web Service Reliability Analysis

Nowadays, organizations increasingly need to implement changes in shorter time frames, because acceptable reaction times keep decreasing. The Business Logic Evaluation Model (BLEM) is a proposed solution that targets business logic automation and enables business experts to write sophisticated business rules and complex calculations without costly custom programming. BLEM can handle service manageability issues by analyzing and evaluating the computability, traceability, and other criteria of modified business logic at run time. The value of a web service and its quality of service (QoS) depend heavily on the service's reliability. Today's service providers therefore consider reliability the major factor, and any reliability problem must be resolved immediately to achieve the expected level of reliability. In this paper we propose a business logic evaluation model for web service reliability analysis using a Finite State Machine (FSM). The FSM is extended to analyze the reliability of a composed set of services (i.e., services under composition) by analyzing the reliability of each participating service together with its functional workflow process. The FSM is also used to measure quality parameters, and whenever the business logic changes, it automatically re-measures the reliability.

💡 Research Summary

The paper addresses the growing need for rapid, reliable web‑based business processes by proposing a Business Logic Evaluation Model (BLEM) that incorporates a Finite State Machine (FSM) to assess and maintain the reliability of composed web services. After a thorough literature review covering service discovery, QoS‑based selection, dynamic composition, and various formal models (Petri Nets, colored timed nets, etc.), the authors argue that existing solutions either focus on static QoS attributes or lack mechanisms for real‑time re‑evaluation when business logic changes.

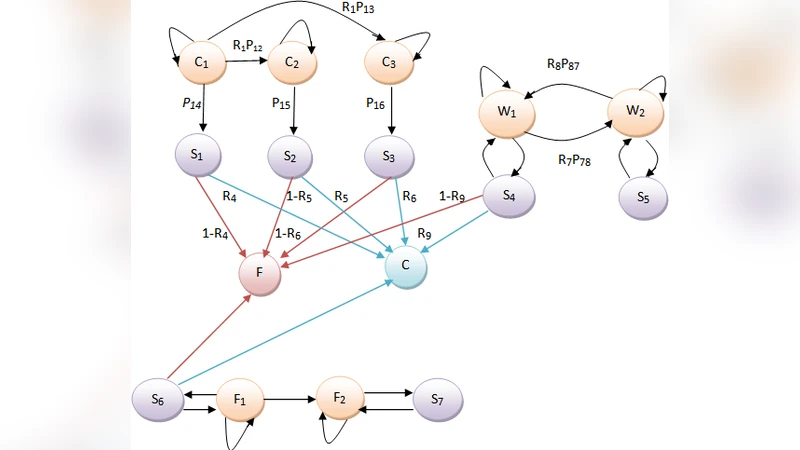

The core contribution is a layered architecture that first separates business logic into a rule source and a function source. These are analyzed by a Rule Bound Analyzer and a Function Bound Analyzer, respectively. The FSM Simulator then evaluates four “interoperability” goals—computability, traceability, decidability, and manageability—by modeling each service’s execution steps as states and transitions. A Primitive Business Function (PBF) Evaluator measures non‑functional goals such as configurability, customizability, and serviceability. A Change Monitor watches for any modifications in the business logic; when a change is detected, the FSM is re‑run, updating reliability estimates instantly. Reliability is quantified by assigning success probabilities to each transition (derived from historical success/failure data) and computing the expected success rate of the entire workflow.
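The paper gives no formula for this computation, so the following Python sketch shows one plausible reading under stated assumptions: each transition invokes one component service whose success probability is its historical success frequency; where a state has several outgoing transitions, a hypothetical `weight` gives the routing probability of each branch; and a failed invocation moves the workflow to an implicit failure state. Workflow reliability is then the probability of reaching the final state. The names `Transition`, `workflow_reliability`, and `ChangeMonitor` and the order-processing example are illustrative only, not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Transition:
    """One FSM transition that invokes a (hypothetical) component service."""
    target: str          # state reached if the service call succeeds
    service: str         # name of the invoked service
    successes: int       # historical successful invocations
    failures: int        # historical failed invocations
    weight: float = 1.0  # assumed routing probability among sibling transitions

    def success_probability(self) -> float:
        total = self.successes + self.failures
        if total == 0:
            raise ValueError(f"no history for service {self.service!r}")
        # Plain relative frequency; smoothing is a design choice left open here.
        return self.successes / total


def workflow_reliability(fsm: dict[str, list[Transition]], start: str, final: str) -> float:
    """Probability of reaching `final` from `start` when every invoked service must succeed.

    A failed invocation is treated as a move to an implicit absorbing failure state.
    This sketch supports acyclic workflows only.
    """
    memo: dict[str, float] = {}
    on_path: set[str] = set()

    def reach(state: str) -> float:
        if state == final:
            return 1.0
        if state in memo:
            return memo[state]
        if state in on_path:
            raise ValueError(f"cycle through {state!r}; acyclic workflows only")
        on_path.add(state)
        outgoing = fsm.get(state, [])
        total_weight = sum(t.weight for t in outgoing)
        # Sum over branches: take the branch, the service succeeds, the rest succeeds.
        r = sum((t.weight / total_weight) * t.success_probability() * reach(t.target)
                for t in outgoing)
        on_path.discard(state)
        memo[state] = r
        return r

    return reach(start)


class ChangeMonitor:
    """Hypothetical change monitor: re-evaluates the FSM whenever the business
    logic at some state (its outgoing transitions) is replaced."""

    def __init__(self, fsm: dict[str, list[Transition]], start: str, final: str):
        self.fsm, self.start, self.final = fsm, start, final
        self.reliability = workflow_reliability(fsm, start, final)

    def apply_change(self, state: str, transitions: list[Transition]) -> float:
        self.fsm[state] = transitions
        self.reliability = workflow_reliability(self.fsm, self.start, self.final)
        return self.reliability


# Illustrative order workflow with made-up history counts.
fsm = {
    "start": [Transition("validated", "validate", 98, 2)],
    "validated": [Transition("paid", "payment", 95, 5, weight=0.7),
                  Transition("paid", "invoice", 90, 10, weight=0.3)],
    "paid": [Transition("done", "shipping", 99, 1)],
}
monitor = ChangeMonitor(fsm, "start", "done")
before = monitor.reliability
after = monitor.apply_change("paid", [Transition("done", "courier", 80, 20)])
print(f"{before:.4f} -> {after:.4f}")  # prints "0.9071 -> 0.7330"
```

The recursion is valid only for acyclic workflows; a workflow with retry loops would instead be solved as an absorbing Markov chain (a linear system over reach probabilities). The monitor here simply recomputes everything on each change, which is the simplest way to obtain immediate updates but would be wasteful for large compositions.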

The authors detail definitions for computability (whether a requested change can be expressed as a rule), traceability (re‑using solutions to similar past errors), and manageability (monitoring impact of changes). They claim that the FSM‑based approach enables automatic, fine‑grained reliability measurement for each component service and for the overall composition, something not offered by prior static QoS models or Petri‑Net verification techniques.
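Traceability, as described above, amounts to looking up how a similar past error was resolved. A minimal Python sketch, assuming errors are recorded as message strings and similarity is measured with the standard library's `difflib.SequenceMatcher`, might look like this; the function name `find_past_solution`, the 0.6 similarity threshold, and the sample history are hypothetical.

```python
from difflib import SequenceMatcher
from typing import Optional


def find_past_solution(error: str, history: dict[str, str],
                       threshold: float = 0.6) -> Optional[str]:
    """Return the recorded fix for the most similar past error, or None if no
    past error reaches the similarity threshold (hypothetical helper)."""
    best_fix: Optional[str] = None
    best_score = threshold
    for past_error, fix in history.items():
        # ratio() is 1.0 for identical strings and 0.0 when nothing matches
        score = SequenceMatcher(None, error.lower(), past_error.lower()).ratio()
        if score >= best_score:
            best_fix, best_score = fix, score
    return best_fix


# Made-up history of past errors and the fixes that resolved them.
history = {
    "payment service timeout after 30s": "retry with the backup payment provider",
    "invoice rule references unknown field 'vat'": "add 'vat' to the rule vocabulary",
}
print(find_past_solution("payment service timeout after 45s", history))
# prints: retry with the backup payment provider
```

A real system would likely match on structured error signatures rather than raw text; string similarity is used here only because it keeps the idea self-contained.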

However, the paper lacks empirical validation. No concrete mathematical formulation of transition probabilities, no case study, and no performance evaluation are provided. The scalability of the FSM—potentially exploding state space as the number of services grows—is acknowledged but not addressed with hierarchical or distributed techniques. Comparisons with existing QoS‑based selection algorithms or with Petri‑Net based verification are absent, leaving the practical benefits of the proposed model unquantified.
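To make the scalability concern concrete: if the FSM of a composition is built as the product of its $n$ component services' FSMs (a common construction for concurrently executing components; the paper does not say how composed FSMs are formed), with $S_i$ the state set of service $i$, the number of global states grows multiplicatively:

$$
|S_{\text{comp}}| \;\le\; \prod_{i=1}^{n} |S_i|, \qquad \text{so if } |S_i| = k \text{ for all } i, \quad |S_{\text{comp}}| \le k^{n}.
$$

For example, ten services with five states each already allow up to $5^{10} = 9{,}765{,}625$ global states, which is the motivation for the hierarchical or distributed techniques mentioned above.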

In conclusion, the work presents an interesting integration of business‑logic automation and reliability assessment via FSMs, highlighting the possibility of real‑time reliability updates upon logic changes. Future research is needed to formalize the probabilistic model, conduct large‑scale experiments, and explore optimization strategies to keep the FSM tractable in realistic service‑oriented environments.
