Understanding need of "Uncertainty Analysis" in the system Design process

Software projects require accurate cost and schedule estimates to succeed. Estimation is often called an “intricate brainteaser” because its inherent attributes are shaped by complexity and uncertainty. Yet it is generally not as difficult or puzzling as people think. Generating accurate estimates is straightforward once you understand the degree of uncertainty and the modules that contribute to it in the process. In everyday life, we refine our estimates based on past experience: which method solved which problem, under which conditions, and which circumstances allowed that method to produce a better result. So, instead of opaque treatises and inflexible modeling techniques, this guide highlights a proven set of procedures, understandable formulas, and heuristics. Individuals and entire teams can apply them to their projects to improve their estimation ability and to choose appropriate development approaches.

In the early stages of the software life cycle, project managers are often unable to estimate effort, schedule, and cost efficiently, or to choose a suitable development approach. This makes it hard for managers to bid effectively on software projects and leads them to choose an incorrect development approach. That choice directly affects productivity and increases the level of uncertainty, and it is a major cause of project failure. Knowing the level and sources of uncertainty in model design helps avoid such problems, because it can guide developers toward the accurate cost and schedule estimates that project success requires. This paper demonstrates the need for an uncertainty analysis module in the modeling process. Such a module helps recognize module-level uncertainty in the system development process and the role of uncertainty at different stages of modeling.

💡 Research Summary

The paper argues that uncertainty is a central factor that undermines accurate cost‑and‑schedule estimation in the early phases of software development, and that this uncertainty is a major cause of project failure. To address this, the authors propose embedding an “uncertainty analysis module” into the modeling process and provide a conceptual framework for identifying, classifying, and managing uncertainty at the module level.

The introduction contrasts two broad families of development methodologies: plan‑driven (traditional, predictive) and practice‑driven (Agile, adaptive). Plan‑driven approaches work well when requirements are stable and well understood, but they become fragile when requirements are volatile, because the underlying plan is then exposed to high uncertainty. Agile methods, by contrast, embrace change and rely on close collaboration with the customer, but they make long‑term cost and schedule predictions difficult, so explicit uncertainty management is essential.

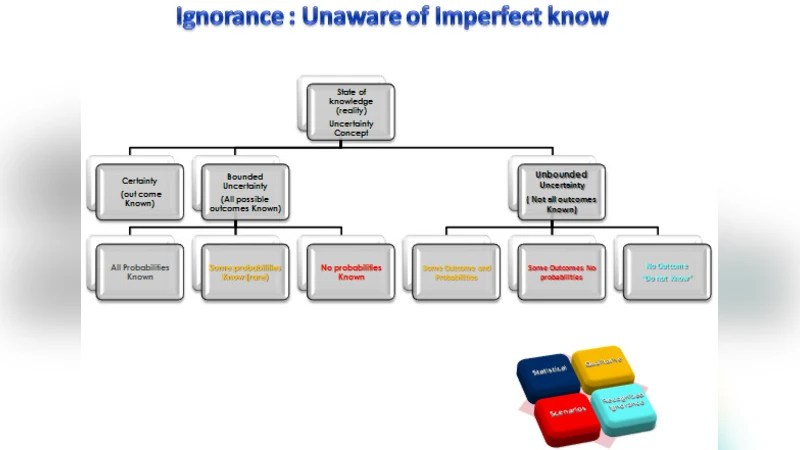

The core of the paper is a multi‑dimensional taxonomy of uncertainty. First, uncertainty is split into bounded (all possible outcomes are known) and unbounded (some outcomes are unknown). Second, each type is further divided into epistemic (knowledge‑based, reducible by study or expert advice) and stochastic (inherent variability, not reducible). This four‑fold classification mirrors risk‑management concepts but focuses directly on the nature of the unknown rather than on its consequences.
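
The two axes of the taxonomy can be captured as a small data model. What follows is a minimal Python sketch, not code from the paper. The names `Boundedness`, `Nature`, `UncertaintyType` and `ALL_TYPES` are illustrative assumptions. The sketch encodes the four-fold classification as the product of the two axes, and records the point that only epistemic uncertainty is reducible.

```python
from dataclasses import dataclass
from enum import Enum


class Boundedness(Enum):
    BOUNDED = "bounded"      # all possible outcomes are known
    UNBOUNDED = "unbounded"  # some outcomes are unknown


class Nature(Enum):
    EPISTEMIC = "epistemic"    # knowledge-based; reducible by study or expert advice
    STOCHASTIC = "stochastic"  # inherent variability; not reducible


@dataclass(frozen=True)
class UncertaintyType:
    boundedness: Boundedness
    nature: Nature

    @property
    def reducible(self) -> bool:
        # Only epistemic uncertainty shrinks when more knowledge is gathered.
        return self.nature is Nature.EPISTEMIC


# The four-fold classification is the Cartesian product of the two axes.
ALL_TYPES = [UncertaintyType(b, n) for b in Boundedness for n in Nature]

if __name__ == "__main__":
    for t in ALL_TYPES:
        label = "reducible" if t.reducible else "irreducible"
        print(f"{t.boundedness.value:<9} {t.nature.value:<10} {label}")
```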

Next, the authors identify four sources (or “locations”) where uncertainty manifests in a model‑based software project:

- Context – external forces such as technology trends, economic conditions, political or social factors that shape the problem space.

- Framing – the way the model’s structure and concepts are defined, reflecting partial or simplified understanding of reality.

- Parameter – uncertainty in input values, assumptions, or calibration data.

- Technical – uncertainties introduced by the implementation of the model itself (numerical approximations, discretisation, bugs).

These sources are organized into an “uncertainty matrix” that allows project teams to locate each module on the axes of source and type, thereby visualising which aspects of a system are most vulnerable to unknowns.
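
The matrix itself can be sketched as a table of cells keyed by module, source and type. This is a hypothetical Python illustration. The class name, the hyphenated type labels and the example modules are assumptions, not the authors' notation. Ranking modules by the raw count of recorded uncertainties is also our own crude proxy, because the paper defines no scoring rule.

```python
from collections import Counter

# Axes of the uncertainty matrix, as listed in the summary.
SOURCES = ("context", "framing", "parameter", "technical")
TYPES = ("bounded-epistemic", "bounded-stochastic",
         "unbounded-epistemic", "unbounded-stochastic")


class UncertaintyMatrix:
    """Records uncertainties per (module, source, type) cell."""

    def __init__(self) -> None:
        self.cells: dict = {}

    def record(self, module: str, source: str, utype: str, note: str) -> None:
        if source not in SOURCES or utype not in TYPES:
            raise ValueError(f"unknown source or type: {source!r}, {utype!r}")
        self.cells.setdefault((module, source, utype), []).append(note)

    def row(self, module: str) -> dict:
        # One module's slice of the matrix: count per (source, type) cell.
        return {(s, t): len(self.cells.get((module, s, t), []))
                for s in SOURCES for t in TYPES}

    def most_vulnerable(self, n: int = 3) -> list:
        # Rank modules by how many uncertainties have been recorded against them.
        totals = Counter()
        for (module, _source, _utype), notes in self.cells.items():
            totals[module] += len(notes)
        return totals.most_common(n)


# Hypothetical modules and notes, for illustration only.
m = UncertaintyMatrix()
m.record("payment-gateway", "context", "unbounded-epistemic", "pending regulation")
m.record("payment-gateway", "parameter", "bounded-stochastic", "variable transaction volume")
m.record("report-export", "technical", "bounded-epistemic", "untested PDF library")
print(m.most_vulnerable(1))  # [('payment-gateway', 2)]
```

A real implementation would likely weight cells differently. For example, unbounded stochastic entries usually deserve more attention than bounded epistemic ones, which can be resolved by investigation.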

The practical contribution is a set of guidelines for integrating this matrix into the creation of a Work Breakdown Structure (WBS). For each work package, the team assesses the level and type of uncertainty. It then uses that assessment to decide two things:

(a) which development methodology to apply: plan‑driven for modules whose uncertainty is low and largely bounded, Agile for modules whose uncertainty is high, unbounded, and stochastic;

(b) which personnel to assign: senior engineers for modules where precise specification is critical, cross‑functional Agile teams for modules that will evolve.

By aligning methodology and staffing with the assessed uncertainty, the authors claim that initial estimates become more reliable and that downstream risk mitigation is more targeted.
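
The guideline reads naturally as a decision rule per work package. The Python sketch below is one possible encoding, not the authors' procedure. The `WorkPackage` fields, the "low"/"high" scale and the example packages are all hypothetical. The fallback for mixed profiles is also an assumption, because the guideline only describes the two extremes.

```python
from dataclasses import dataclass


@dataclass
class WorkPackage:
    name: str
    level: str        # assessed uncertainty level; "low"/"high" is an assumed scale
    bounded: bool     # True if all possible outcomes are known
    stochastic: bool  # True if the dominant uncertainty is inherent variability


def choose_approach(wp: WorkPackage) -> tuple[str, str]:
    """Return (methodology, staffing) for one WBS work package."""
    if wp.level == "low" and wp.bounded:
        # Stable, well-understood package: an up-front plan is viable and
        # precise specification matters, so senior engineers are assigned.
        return ("plan-driven", "senior engineers")
    if wp.level == "high" and not wp.bounded and wp.stochastic:
        # Volatile package that will evolve: iterate with the customer.
        return ("agile", "cross-functional agile team")
    # Mixed profiles are not covered by the guideline; flag for manual review.
    return ("undecided", "manual review")


# Hypothetical WBS, for illustration only.
wbs = [
    WorkPackage("login", "low", True, False),
    WorkPackage("recommendation-engine", "high", False, True),
    WorkPackage("data-migration", "high", True, False),
]
for wp in wbs:
    print(wp.name, choose_approach(wp))
```

Returning an explicit "undecided" result, rather than forcing a choice, keeps the borderline packages visible to the project manager. Those packages are the ones the paper's conceptual framework leaves open.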

The paper outlines a five‑step modeling workflow: (1) model study plan, (2) data collection and conceptualisation, (3) model set‑up, (4) calibration and validation, (5) simulation and evaluation. At each stage the uncertainty matrix is updated, and re‑calibration is performed when new information reduces epistemic uncertainty.
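
The update-and-recalibrate loop can be illustrated with a toy model. Here the epistemic part of an estimate is represented as an interval width that later stages may narrow. This is a hypothetical sketch; the paper gives no such formula. The width unit (person-days), the per-stage reduction fractions and the 10% re-calibration threshold are all assumptions. Only the epistemic component is tracked, because stochastic uncertainty is not reducible.

```python
# The five modeling stages named in the paper.
STAGES = (
    "model study plan",
    "data collection and conceptualisation",
    "model set-up",
    "calibration and validation",
    "simulation and evaluation",
)


def run_workflow(initial_width: float, new_info: dict, threshold: float = 0.1) -> list:
    """Walk the five stages, tracking epistemic uncertainty as an interval width.

    new_info maps a stage name to the fraction (0..1) of the remaining epistemic
    width that information gathered at that stage removes. Returns a log of
    (stage, width after the stage, whether re-calibration was triggered).
    """
    width = initial_width
    log = []
    for stage in STAGES:
        reduction = new_info.get(stage, 0.0)
        width *= 1.0 - reduction
        # Re-calibrate only when the new information is material (assumed rule).
        recalibrate = reduction >= threshold
        log.append((stage, width, recalibrate))
    return log


# Hypothetical effort range of 40 person-days, narrowed by later stages.
log = run_workflow(40.0, {
    "data collection and conceptualisation": 0.25,
    "calibration and validation": 0.5,
    "simulation and evaluation": 0.05,
})
for stage, width, recalibrated in log:
    print(f"{stage:<40} width={width:6.2f} recalibrate={recalibrated}")
```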

However, the manuscript suffers from several serious shortcomings. It provides no empirical validation—no case studies, no data sets, and no quantitative comparison with established estimation techniques such as COCOMO or Function Point analysis. The “understandable formulas” promised in the abstract are never presented, leaving readers without a concrete method to compute uncertainty scores. The discussion of the uncertainty matrix remains purely conceptual, lacking algorithms for scoring, weighting, or aggregating uncertainties across modules. Moreover, the writing is riddled with grammatical errors, redundant sentences, and typographical glitches, which obscure the intended contributions and reduce credibility.

In conclusion, the paper raises an important research direction: systematic, module‑level uncertainty analysis could indeed improve the alignment of development approaches with the nature of the problem, thereby reducing cost overruns and schedule slips. Future work should focus on (1) developing a mathematically rigorous uncertainty quantification method (e.g., Bayesian networks, Monte‑Carlo simulation), (2) integrating the matrix with existing estimation models to demonstrate added predictive power, (3) conducting real‑world case studies across different domains (e.g., embedded systems, web applications) to validate the approach, and (4) refining the presentation to meet academic standards. Only with such empirical grounding can the proposed framework move from a theoretical suggestion to a practical tool for software project managers.
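
The Monte‑Carlo direction suggested above can be illustrated briefly. The sketch below is not something the paper provides. It assumes each work package has a three-point (optimistic, most likely, pessimistic) effort estimate, modeled with a triangular distribution; that choice is common but is our assumption here. The package names and numbers are hypothetical. Summing random draws across packages yields a distribution of total effort, so percentiles such as P50 and P90 can be reported instead of a single point estimate.

```python
import math
import random


def simulate_total_effort(packages: dict, trials: int = 10_000, seed: int = 42) -> list:
    """Monte Carlo sum of per-package effort drawn from triangular distributions.

    packages maps a work-package name to (optimistic, most_likely, pessimistic).
    Returns the simulated project totals, sorted ascending.
    """
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    totals = []
    for _ in range(trials):
        # Note the argument order: random.triangular(low, high, mode).
        totals.append(sum(rng.triangular(low, high, mode)
                          for low, mode, high in packages.values()))
    totals.sort()
    return totals


def percentile(sorted_values: list, p: float) -> float:
    # Nearest-rank percentile of an already-sorted list (0 < p <= 100).
    rank = math.ceil(p * len(sorted_values) / 100)
    return sorted_values[max(rank, 1) - 1]


# Hypothetical three-point estimates in person-days, for illustration only.
packages = {
    "login": (3, 5, 10),
    "recommendation-engine": (10, 20, 45),
    "data-migration": (5, 8, 15),
}
totals = simulate_total_effort(packages)
print(f"P50 = {percentile(totals, 50):.1f}, P90 = {percentile(totals, 90):.1f} person-days")
```

A fuller treatment would keep epistemic ranges, which should narrow as data arrive, separate from stochastic variability, which does not.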
