Why does Deep Learning work? - A perspective from Group Theory

Why does Deep Learning work? What representations does it capture? How do higher-order representations emerge? We study these questions from the perspective of group theory, thereby opening a new approach towards a theory of Deep learning. One factor behind the recent resurgence of the subject is a key algorithmic step called pre-training: first search for a good generative model for the input samples, and repeat the process one layer at a time. We show deeper implications of this simple principle, by establishing a connection with the interplay of orbits and stabilizers of group actions. Although the neural networks themselves may not form groups, we show the existence of {\em shadow} groups whose elements serve as close approximations. Over the shadow groups, the pre-training step, originally introduced as a mechanism to better initialize a network, becomes equivalent to a search for features with minimal orbits. Intuitively, these features are in a way the {\em simplest}. Which explains why a deep learning network learns simple features first. Next, we show how the same principle, when repeated in the deeper layers, can capture higher order representations, and why representation complexity increases as the layers get deeper.

💡 Research Summary

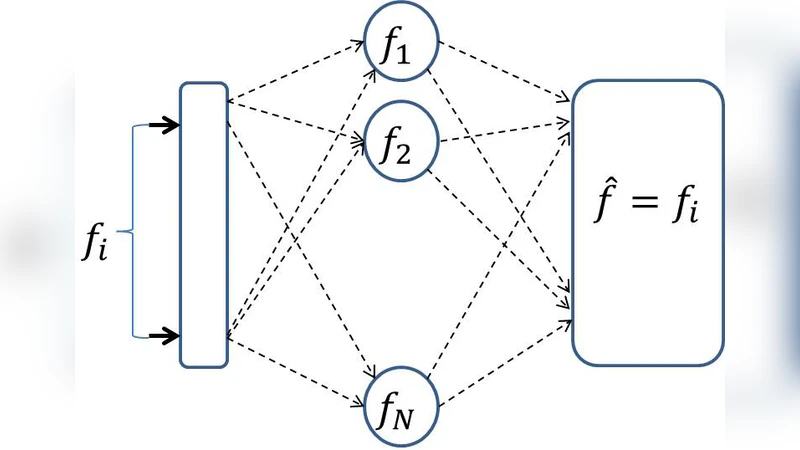

The paper “Why does Deep Learning work? – A perspective from Group Theory” attempts to explain the success of deep learning, especially layer‑wise pre‑training, through the lens of group theory. The authors begin by interpreting an auto‑encoder as a transformation that stabilizes its input: after training, feeding an input f into the network yields an output f′≈f, so the learned mapping can be seen as a stabilizer of f. In group theory, a group G acting on a set X partitions the orbit Oₓ (all points reachable from x) and the stabilizer Sₓ (all group elements that leave x unchanged). For finite groups the classic orbit‑stabilizer theorem states |Oₓ|·|Sₓ|=|G|; for continuous groups the relationship is expressed via dimensions or Haar measure, i.e., dim G − dim Sₓ = dim Oₓ.

Real neural networks, however, are not groups because they consist of highly non‑linear transformations. To bridge this gap the authors introduce the notion of “shadow groups”: approximate groups that capture the local linear structure of the network’s parameter space. By treating the learning dynamics as a random walk or a Markov‑Chain‑Monte‑Carlo (MCMC) process over the parameter space, they argue that the search will most likely encounter large stabilizers early, because a larger stabilizer corresponds to a smaller orbit (i.e., fewer distinct transformed states). Consequently, the network first discovers features whose stabilizers are large—features that are “simple” in the sense of having small orbits.

The paper illustrates this idea with concrete examples in the group GL(2,ℝ). An edge (a line through the origin) has a stabilizer isomorphic to SO(2)×ℝ⁺, giving it dimension 2, whereas a circle or an ellipse have one‑dimensional stabilizers (essentially the orthogonal group O(2)). Since the edge’s stabilizer occupies a larger volume in the group, a random walk in GL(2,ℝ) is far more likely to hit an edge‑stabilizer than a circle‑ or ellipse‑stabilizer. This mirrors empirical observations that the first layer of convolutional networks learns Gabor‑like edge detectors.

Extending the argument to deep networks, the authors propose that each hidden layer operates on the output of the previous layer, which defines a new “input space”. The same stabilizer‑orbit principle applies recursively: the first layer learns low‑dimensional, high‑stabilizer features (edges); the second layer treats combinations of edges as its inputs and therefore learns higher‑order structures such as corners or textures; deeper layers continue this hierarchy, eventually yielding abstract representations. The sigmoid non‑linearity is highlighted as a crucial element that preserves the continuity needed for the shadow‑group approximation while providing the necessary non‑linearity for expressive power.

Key contributions claimed are: (1) a formal link between auto‑encoders and stabilizers, showing that random‑walk‑style optimization preferentially discovers large stabilizers; (2) the construction of shadow groups that approximate the network’s transformation space, allowing group‑theoretic reasoning to be applied; (3) an explanation of hierarchical feature emergence across layers, with the sigmoid function playing a pivotal role.

Critically, the paper’s theoretical development remains largely heuristic. The definition of shadow groups is informal, and no quantitative bounds are provided on how closely they approximate the true network dynamics. Empirical validation is limited to simple 2‑D examples; there is no experimental evidence on modern architectures (e.g., CNNs with ReLU, batch normalization). Moreover, the analysis assumes a random‑walk search, whereas practical training uses stochastic gradient descent with momentum, adaptive learning rates, and regularization, which may alter the dynamics substantially. Nonetheless, the work offers a fresh perspective by connecting deep learning to symmetry, stabilizers, and orbits, and it opens avenues for more rigorous group‑theoretic analyses of representation learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment