Interpreting "altmetrics": viewing acts on social media through the lens of citation and social theories

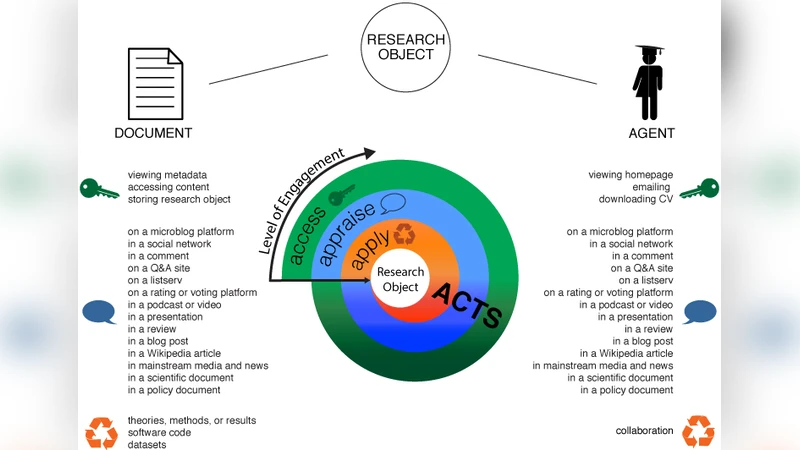

More than 30 years after Cronin’s seminal paper on “the need for a theory of citing” (Cronin, 1981), the metrics community is once again in need of a new theory, this time one for so-called “altmetrics”. Altmetrics, short for alternative (to citation) metrics – and as such a misnomer – refers to a new group of metrics based (largely) on social media events relating to scholarly communication. Current definitions of altmetrics are shaped and limited by active platforms, technical possibilities, and the business models of aggregators such as Altmetric.com, ImpactStory, PLOS, and Plum Analytics, and are therefore constantly changing; for this reason, this work refrains from defining an umbrella term for these very heterogeneous new metrics. Instead, a framework is presented that describes the acts leading to the (online) events on which the metrics are based. These acts occur in the context of social media, such as discussing on Twitter or saving to Mendeley, as well as downloading and citing. The framework groups various types of acts into three categories – accessing, appraising, and applying – and provides examples of actions that lead to visibility and traceability online. To improve the understanding of the acts that result in the online events from which metrics are collected, selected citation and social theories are used to interpret the phenomena being measured. Citation theories are used because the new metrics based on these events are supposed to replace or complement citations as indicators of impact. Social theories, on the other hand, are discussed because there is an inherent social aspect to the measurements.

💡 Research Summary

The paper argues that the field of scholarly metrics is once again in need of a robust theoretical foundation, this time for the rapidly expanding set of “altmetrics” – metrics derived from social-media events rather than traditional citations. Rather than attempting to coin a single, all-encompassing definition, the authors propose a behaviour-centric framework that categorises the online actions that generate measurable events into three sequential stages: Accessing, Appraising, and Applying.

Accessing includes any activity that brings a scholarly object into a user’s attention – downloads, page views, saves to reference managers such as Mendeley or Zotero, and similar “first‑look” interactions. These actions are analogous to the visibility‑building phase that precedes formal citation.

Appraising captures the social evaluation of a work: tweets, retweets, Facebook shares, blog comments, “likes”, and other public signals that reflect a community’s judgment, endorsement, or critique. This stage is where the social dimension of scholarly communication becomes most evident, and where reputation and network effects are generated.

Applying refers to the concrete incorporation of the work into subsequent research, policy documents, teaching materials, or other knowledge‑transfer activities – essentially the traditional citation event and its broader equivalents.
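The three categories can be read as a lookup from observed event types to acts. The following is a minimal Python sketch of that reading, not the authors' implementation: the event-type labels (`page_view`, `mendeley_save`, `policy_mention`, and so on) and the `unclassified` fallback are hypothetical names chosen for illustration, since each aggregator uses its own vocabulary.

```python
from collections import Counter

# Hypothetical event-type labels mapped to the framework's three categories.
# Real aggregators use their own vocabularies; this mapping is an assumption.
ACT_CATEGORY = {
    "download": "accessing",
    "page_view": "accessing",
    "mendeley_save": "accessing",
    "tweet": "appraising",
    "retweet": "appraising",
    "facebook_share": "appraising",
    "blog_comment": "appraising",
    "citation": "applying",
    "policy_mention": "applying",
}


def categorize(events):
    """Count events per category; unknown event types are kept as 'unclassified'."""
    counts = Counter()
    for event_type in events:
        counts[ACT_CATEGORY.get(event_type, "unclassified")] += 1
    return dict(counts)


sample = ["tweet", "download", "retweet", "citation", "mendeley_save", "podcast"]
print(categorize(sample))
```

Keeping an explicit `unclassified` bucket makes it visible when a platform emits an event type the mapping does not yet cover, rather than silently dropping it.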

To interpret these stages, the authors draw on two families of citation theory. The normative perspective (Merton) treats citations as mechanisms of trust, recognition, and reward; the authors map Accessing, Appraising, and Applying onto the pre‑, peri‑, and post‑citation phases respectively, suggesting that altmetrics can complement rather than replace citations. The social‑constructivist view sees citations as strategic communication tools; similarly, altmetric events may be motivated by self‑promotion, community building, or agenda setting, and thus require a nuanced reading.

Social theory is invoked to explain why and how these events spread. Bourdieu’s concept of social capital frames the accumulation of reputation and network resources through Appraising actions on open platforms. Granovetter’s weak-tie theory highlights that Twitter, Reddit, and similar media create connections across disciplinary and professional boundaries, accelerating the diffusion of ideas. Rogers’ diffusion-of-innovation model provides a temporal lens: an article first attracts awareness (knowledge), and then passes through persuasion (Appraising), decision (saving or bookmarking), implementation (citing or policy use), and finally confirmation (continued discussion).

A substantial portion of the paper is devoted to the methodological challenges posed by platform dependence. Aggregators (Altmetric.com, ImpactStory, Plum Analytics, PLOS) each define event types, weighting schemes, and API limits differently, leading to inconsistent scores for the same article across services. Moreover, automated bots, spam accounts, and algorithmic retweets introduce noise that can inflate Appraising metrics without reflecting genuine scholarly interest. The authors recommend a suite of data-cleaning strategies: authentication-based filtering, temporal-frequency analysis to detect bursts typical of bots, and sentiment or content analysis to distinguish substantive discussion from trivial amplification.
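As an illustration of the temporal-frequency idea, the sketch below flags accounts that post more than a threshold number of events within a short sliding window. It is a simplified heuristic under stated assumptions, not a method taken from the paper: the function name `flag_bursty_accounts`, the one-minute window, and the threshold of three events are all hypothetical and would need tuning against real data.

```python
from datetime import datetime, timedelta


def flag_bursty_accounts(events, window=timedelta(minutes=1), max_events=3):
    """Return the accounts with more than `max_events` events inside any `window`.

    `events` is an iterable of (account_id, timestamp) pairs. The default
    window and threshold are illustrative assumptions, not validated values.
    """
    by_account = {}
    for account, ts in events:
        by_account.setdefault(account, []).append(ts)

    flagged = set()
    for account, stamps in by_account.items():
        stamps.sort()
        start = 0
        for end in range(len(stamps)):
            # Advance the left edge until the span fits inside the window.
            while stamps[end] - stamps[start] > window:
                start += 1
            if end - start + 1 > max_events:
                flagged.add(account)
                break
    return flagged


t0 = datetime(2015, 1, 1, 12, 0, 0)
events = [("bot_a", t0 + timedelta(seconds=5 * i)) for i in range(6)]
events += [("reader_b", t0 + timedelta(hours=i)) for i in range(6)]
print(flag_bursty_accounts(events))  # {'bot_a'}
```

A burst alone does not prove automation (a conference live-tweeter can look similar), so a flag like this is better treated as a prompt for content or account-level review than as an automatic exclusion.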

Policy implications are explored in depth. If institutions seek to capture “societal impact” – public engagement, policy relevance, or rapid knowledge transfer – altmetrics provide a richer, multi‑dimensional picture than citation counts alone. However, the authors caution against treating altmetric scores as a direct proxy for quality; popularity can be driven by sensationalism, media hype, or coordinated campaigns, leading to a visibility bias that may disadvantage rigorous but less “shareable” research. Consequently, they advocate for mixed‑methods evaluation frameworks that combine quantitative altmetric indicators with qualitative peer review, field‑specific normalization, and transparent weighting that reflects the intended assessment goals.

In conclusion, the paper positions altmetrics not merely as a new set of numbers but as a reflection of distinct scholarly behaviours embedded in social‑media ecosystems. By linking these behaviours to established citation theories and to broader sociological concepts such as social capital and diffusion, the authors provide a conceptual scaffold that can guide both empirical research and responsible metric‑based evaluation practices.