Abstract Learning via Demodulation in a Deep Neural Network

Inspired by the brain, deep neural networks (DNN) are thought to learn abstract representations through their hierarchical architecture. However, at present, how this happens is not well understood. Here, we demonstrate that DNN learn abstract representations by a process of demodulation. We introduce a biased sigmoid activation function and use it to show that DNN learn and perform better when optimized for demodulation. Our findings constitute the first unambiguous evidence that DNN perform abstract learning in practical use. Our findings may also explain abstract learning in the human brain.

💡 Research Summary

The paper tackles a fundamental question in deep learning: how do deep neural networks (DNNs) acquire abstract representations through their layered architecture? While it is widely accepted that hierarchical feature extraction leads to increasingly abstract internal codes, the precise computational mechanism has remained vague. The authors propose that DNNs perform a process analogous to demodulation—a concept borrowed from communication theory—whereby high‑frequency variations in the input are transformed into low‑frequency amplitude envelopes that encode abstract information.
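For readers unfamiliar with the communication-theory term, the following is a minimal sketch of a classic envelope detector: a nonlinearity (half-wave rectification) followed by a crude low-pass filter. It is a textbook illustration, not the authors' method. The helper names (`am_signal`, `rectify`, `moving_average`, `pearson`) and the signal parameters are assumptions chosen for the example.

```python
import math


def am_signal(n, carrier_cycles, envelope_cycles):
    """Return (signal, envelope): a slowly varying envelope times a fast carrier."""
    signal, envelope = [], []
    for i in range(n):
        t = i / n
        env = 1.0 + 0.5 * math.sin(2 * math.pi * envelope_cycles * t)
        envelope.append(env)
        signal.append(env * math.sin(2 * math.pi * carrier_cycles * t))
    return signal, envelope


def rectify(signal):
    """Half-wave rectification: the nonlinearity of a classic envelope detector."""
    return [max(0.0, s) for s in signal]


def moving_average(signal, window):
    """Circular, centred moving average used as a crude low-pass filter."""
    n = len(signal)
    half = window // 2
    return [
        sum(signal[(i - half + k) % n] for k in range(window)) / window
        for i in range(n)
    ]


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


# Hypothetical toy signal: 50 carrier cycles modulated by 2 envelope cycles.
signal, envelope = am_signal(n=1000, carrier_cycles=50, envelope_cycles=2)
# The window spans one carrier period (1000 / 50 = 20 samples).
demodulated = moving_average(rectify(signal), window=20)

print("raw signal vs envelope: ", round(pearson(signal, envelope), 3))
print("demodulated vs envelope:", round(pearson(demodulated, envelope), 3))
```

The raw modulated signal is essentially uncorrelated with its own envelope, while the rectified and smoothed signal tracks it closely. That is the sense in which a nonlinearity followed by smoothing turns high-frequency variation into a low-frequency amplitude code.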

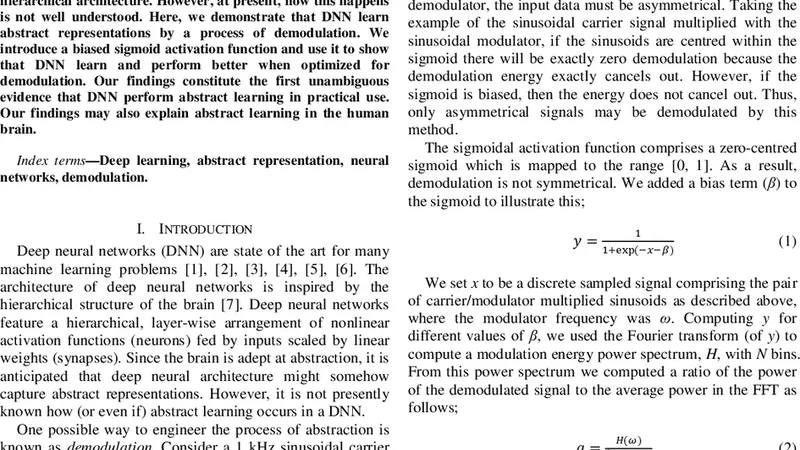

To operationalize this hypothesis, the authors introduce a “biased sigmoid” activation function. The classic sigmoid f(x) = 1/(1 + e^(−x)) is centered on zero: f(0) = 0.5, and the curve is point‑symmetric about that midpoint, so positive and negative inputs are treated as mirror images of each other. By adding a bias term b, the new function f_b(x) = 1/(1 + e^(−(x − b))) shifts the activation curve along the input axis, moving its midpoint to x = b and making the neuron more responsive in a chosen region of the input space. This shift effectively biases the non‑linear transformation so that it preferentially extracts low‑frequency amplitude components from the input signal, thereby enhancing demodulation. The bias can be set manually or learned jointly with the network weights.
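The definition above can be sketched in a few lines. This is an illustrative scalar implementation under the formula as summarized here, not the authors' code. The function names and the choice b = 2.0 are assumptions. `grad_wrt_bias` shows the derivative a learnable b would receive during backpropagation, which follows directly from the chain rule.

```python
import math


def sigmoid(x):
    """Logistic sigmoid 1 / (1 + e^(-x)), written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def biased_sigmoid(x, b):
    """Sigmoid shifted along the input axis by b: f_b(x) = 1 / (1 + e^(-(x - b)))."""
    return sigmoid(x - b)


def grad_wrt_bias(x, b):
    """Derivative of f_b(x) with respect to b: -f_b(x) * (1 - f_b(x))."""
    y = biased_sigmoid(x, b)
    return -y * (1.0 - y)


# Compare the two curves at a few inputs (b = 2.0 is an arbitrary example value).
for x in (-2.0, 0.0, 2.0):
    print(x, round(sigmoid(x), 4), round(biased_sigmoid(x, b=2.0), 4))
```

With b = 2.0 the midpoint of the curve moves from x = 0 to x = 2, so inputs near zero now fall on the lower, asymmetric part of the sigmoid.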

Experimental validation is conducted on two benchmark image classification tasks: MNIST (handwritten digits) and CIFAR‑10 (color objects). The authors keep the network architecture constant (three convolutional layers followed by two fully‑connected layers) and vary only the activation function: standard sigmoid, ReLU, and the proposed biased sigmoid. All other hyper‑parameters (learning rate, batch size, optimizer) are identical across runs. Results show that networks equipped with the biased sigmoid converge faster—loss drops more steeply in the early epochs—and achieve higher final test accuracy (approximately 1.5 percentage points above standard sigmoid and 1 point above ReLU).

Beyond raw performance, the authors perform a spectral analysis of hidden‑layer activations. By applying a Fourier transform to the activation maps at each training stage, they observe that the biased‑sigmoid networks develop a spectrum dominated by low‑frequency components, while high‑frequency energy diminishes markedly. This pattern is consistent with the demodulation hypothesis: the non‑linear activation is converting fine‑grained, high‑frequency pixel variations into smoother, low‑frequency envelopes that carry abstracted information. In contrast, ReLU and unbiased sigmoid networks retain a relatively flat spectrum, indicating less efficient abstraction.
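One simple way to quantify "dominated by low‑frequency components" is the share of non-DC spectral power that falls below a cutoff. The sketch below computes that share for a 1-D sequence standing in for a row of an activation map. The summary does not specify the authors' exact procedure, so the metric, the function names, and the cutoff are assumptions made for illustration.

```python
import cmath
import math


def power_spectrum(signal):
    """One-sided power spectrum via a direct DFT (O(n^2), fine for short signals)."""
    n = len(signal)
    return [
        abs(sum(signal[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))) ** 2
        for k in range(n // 2 + 1)
    ]


def low_frequency_fraction(signal, cutoff_bin):
    """Share of non-DC power that lies in frequency bins 1..cutoff_bin."""
    power = power_spectrum(signal)[1:]  # drop the DC bin
    total = sum(power)
    return sum(power[:cutoff_bin]) / total if total > 0 else 0.0


# Hypothetical stand-ins for one row of an activation map.
n = 64
smooth = [math.sin(2 * math.pi * 2 * t / n) for t in range(n)]   # 2 cycles
rough = [math.sin(2 * math.pi * 20 * t / n) for t in range(n)]   # 20 cycles

print("smooth:", round(low_frequency_fraction(smooth, cutoff_bin=4), 3))
print("rough: ", round(low_frequency_fraction(rough, cutoff_bin=4), 3))
```

Tracking such a fraction per layer over training epochs would give a curve comparable in spirit to the spectral trend described above.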

The paper also draws a parallel with neurophysiological findings in the auditory cortex. In the brain, complex acoustic waveforms are demodulated into envelope representations that support speech and music perception. The authors argue that DNNs may be performing a similar operation on visual data: extracting envelope‑like features that summarize the essential structure of an image while discarding high‑frequency noise. This analogy provides a plausible biological grounding for the observed computational advantage of demodulation‑oriented activations.

From a methodological standpoint, the work suggests a new design principle for activation functions: rather than focusing solely on non‑linearity strength or computational simplicity, researchers should consider how an activation shapes the signal spectrum of internal representations. The biased sigmoid exemplifies how a simple shift can dramatically alter the network’s information‑processing dynamics, leading to more efficient abstract learning.
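A toy calculation makes the spectral effect of the shift concrete. An unbiased sigmoid is an odd function about its midpoint, so it produces only odd-order distortion of a zero-mean modulated carrier and leaves essentially no energy at the envelope frequency. Shifting the curve introduces even-order terms, and the squared carrier contains the envelope at baseband. The sketch below measures the envelope-frequency component of the output for b = 0 and b = 1. The signal, the value of b, and the helper names are assumptions, and this is not the authors' experiment.

```python
import cmath
import math


def biased_sigmoid(x, b):
    """f_b(x) = 1 / (1 + e^(-(x - b))); inputs here are small, so no overflow guard."""
    return 1.0 / (1.0 + math.exp(-(x - b)))


def bin_magnitude(signal, k):
    """Magnitude of DFT bin k, normalised by the signal length."""
    n = len(signal)
    total = sum(s * cmath.exp(-2j * cmath.pi * k * t / n) for t, s in enumerate(signal))
    return abs(total) / n


# Hypothetical input: 50 carrier cycles, amplitude-modulated by 2 envelope cycles.
n, carrier_cycles, envelope_cycles = 1000, 50, 2
modulated = [
    (1.0 + 0.5 * math.sin(2 * math.pi * envelope_cycles * i / n))
    * math.sin(2 * math.pi * carrier_cycles * i / n)
    for i in range(n)
]


def envelope_component(b):
    """Strength of the envelope frequency in the activation output for bias b."""
    activated = [biased_sigmoid(s, b) for s in modulated]
    return bin_magnitude(activated, envelope_cycles)


unbiased = envelope_component(0.0)
biased = envelope_component(1.0)
print("envelope component, b = 0:", unbiased)
print("envelope component, b = 1:", biased)
```

In this toy setting the biased activation carries a clear component at the envelope frequency, while the unbiased one carries almost none, which is consistent with the design principle stated above.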

The authors conclude by outlining future directions. They propose systematic comparisons with other parametric activations (e.g., parametric ReLU, Swish), extensions to non‑visual domains such as speech and natural language, and investigations into whether biologically inspired demodulation mechanisms can improve brain‑computer interface decoding. Overall, the paper provides compelling empirical evidence that demodulation is a key driver of abstract representation learning in deep networks and opens a promising avenue for both theoretical understanding and practical network design.
