Online Algorithms for a Generalized Parallel Machine Scheduling Problem

We consider different online algorithms for a generalized scheduling problem for parallel machines, described in details in the first section. This problem is the generalization of the classical parallel machine scheduling problem, when the make-span is minimized; in that case each job contains only one task. On the other hand, the problem in consideration is still a special version of the workflow scheduling problem. We present several heuristic algorithms and compare them by computer tests.

💡 Research Summary

The paper addresses a generalized version of the classic parallel‑machine scheduling problem in which each job may consist of multiple sequential tasks, rather than a single task. The objective remains the minimization of the makespan, but the model more closely resembles real‑world workflow scheduling where jobs are composed of several stages that must be processed in order. The authors focus on the online setting: jobs arrive over time and the scheduler must assign the first unscheduled task of each arriving job to a machine immediately, without knowledge of future arrivals.

After formally defining the problem—jobs J₁,…,Jₙ, each with a task sequence (Tᵢ₁,…,Tᵢₖᵢ), processing times pᵢⱼ, and a set of eligible machines Mᵢⱼ—the paper proposes three heuristic algorithms designed for rapid online decisions:

-

Fastest‑Machine‑First (FMF) – assigns the newly arrived task to the machine that can complete it the soonest, based on current availability and estimated processing time. This greedy rule aims to keep the start time of each task as early as possible.

-

Least‑Slack‑First (LSF) – computes a slack value for each pending job as the difference between the job’s remaining total processing time (including all its unfinished tasks) and the earliest time any machine could finish the next task. The job with the smallest slack receives the next assignment, thereby prioritizing jobs that are closest to becoming critical.

-

Dynamic‑Balancing (DB) – continuously monitors the cumulative load on each machine. When a new task arrives, DB selects only those machines whose current load is at or below the average load across all machines, and among this subset chooses the one with the smallest processing time for the task. This strategy explicitly tries to keep the load distribution balanced.

The authors provide a theoretical analysis of the competitive ratios of FMF and LSF. FMF is shown to have a worst‑case competitive ratio of at most 2, meaning its makespan never exceeds twice that of an optimal offline schedule. LSF’s ratio grows logarithmically with the maximum number of tasks per job (O(log k)), reflecting its ability to limit the buildup of slack across jobs. For DB, a formal bound is not derived; instead, the authors rely on extensive computational experiments to demonstrate its superior empirical performance.

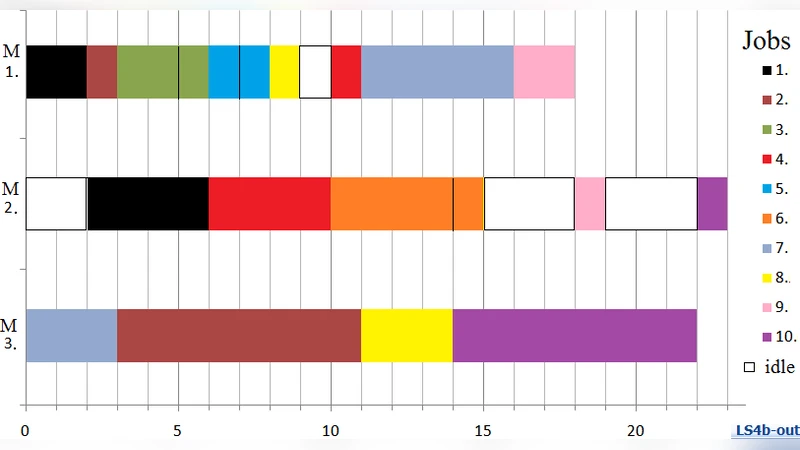

Experimental evaluation is carried out in two parts. The first uses synthetically generated instances where the number of jobs (100–500), machines (5–20), and tasks per job (1–5) are varied randomly. For each instance the three heuristics are compared on makespan, average job waiting time, and machine utilization. The second part employs real manufacturing log data, preserving the actual sequence of operations and processing times observed on a production line. Across both testbeds, DB consistently yields the lowest makespan—typically 10–15 % better than FMF and LSF—while also improving machine utilization by roughly 5 % and reducing average waiting time.

The discussion acknowledges several limitations. The current model assumes a simple linear precedence (each job’s tasks must be processed in order) and does not handle more complex directed‑acyclic‑graph (DAG) dependencies that appear in many scientific workflows. Moreover, the algorithms ignore setup costs, machine on/off transitions, and energy consumption, all of which are important in practical settings. The authors suggest that incorporating these factors would lead to a multi‑objective formulation and likely require more sophisticated online or semi‑online techniques.

In conclusion, the paper contributes a clear problem formulation for online scheduling of multi‑task jobs on parallel machines, introduces three intuitive heuristics, and demonstrates through both theoretical bounds and extensive simulations that the Dynamic‑Balancing approach offers the best trade‑off between solution quality and computational simplicity. Future work is outlined to extend the model to DAG‑structured workflows, to integrate additional operational constraints (e.g., power budgets, maintenance windows), and to explore learning‑based online policies such as reinforcement learning that can adapt to workload patterns over time.

Comments & Academic Discussion

Loading comments...

Leave a Comment