Towards Resolving Software Quality-in-Use Measurement Challenges

Software quality-in-use comprehends the quality from user’s perspectives. It has gained its importance in e-learning applications, mobile service based applications and project management tools. User’s decisions on software acquisitions are often ad hoc or based on preference due to difficulty in quantitatively measure software quality-in-use. However, why quality-in-use measurement is difficult? Although there are many software quality models to our knowledge, no works surveys the challenges related to software quality-in-use measurement. This paper has two main contributions; 1) presents major issues and challenges in measuring software quality-in-use in the context of the ISO SQuaRE series and related software quality models, 2) Presents a novel framework that can be used to predict software quality-in-use, and 3) presents preliminary results of quality-in-use topic prediction. Concisely, the issues are related to the complexity of the current standard models and the limitations and incompleteness of the customized software quality models. The proposed framework employs sentiment analysis techniques to predict software quality-in-use.

💡 Research Summary

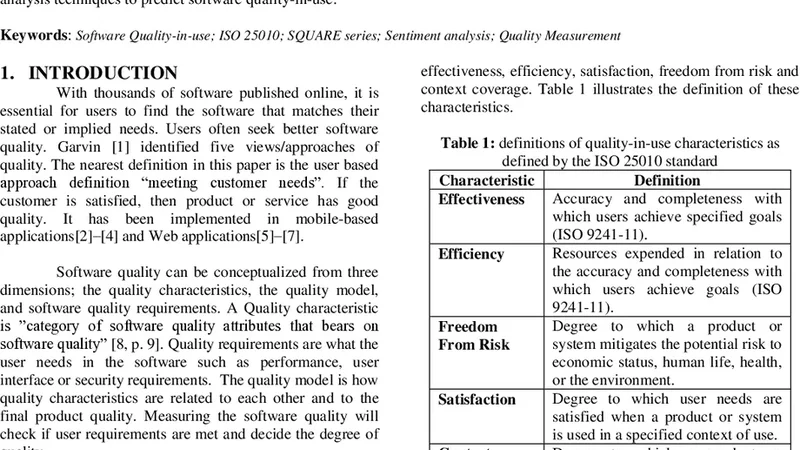

The paper addresses the persistent difficulty of measuring software quality‑in‑use (QinU), a perspective that captures how end‑users experience a system in real operational contexts. While traditional software quality models focus on product‑centric attributes such as reliability, maintainability, or functional correctness, they provide limited insight into the subjective, task‑oriented aspects that drive user satisfaction, efficiency, effectiveness, and perceived risk. The authors begin by dissecting the ISO SQuaRE family—particularly ISO 25010 and ISO 25022—which define QinU through four core characteristics: efficiency, effectiveness, satisfaction, and risk mitigation. Each characteristic is linked to a set of metrics that require multi‑stage data collection (e.g., task completion times, error rates, post‑task surveys). In practice, gathering such data is cumbersome: operational logs may be incomplete, controlled experiments are costly, and survey responses suffer from low participation and bias.

The paper then surveys a range of established quality models (McCall, Boehm, Dromey, ISO 25010) and highlights a common shortcoming: they are largely internal‑quality oriented and lack mechanisms to capture the dynamic, user‑centric view required for QinU. Moreover, the terminology and measurement scales differ across models, making cross‑model aggregation impractical. Consequently, decision‑makers in domains such as e‑learning, mobile services, and project‑management tools often rely on ad‑hoc judgments or superficial preference cues when selecting software, rather than on robust, quantitative QinU evidence.

To overcome these obstacles, the authors propose a novel, data‑driven framework that leverages sentiment analysis and topic modeling to predict QinU directly from user‑generated textual artifacts (app‑store reviews, forum posts, social‑media comments). The framework consists of four stages: (1) Data Acquisition – automated crawling of heterogeneous, multilingual user feedback; (2) Sentiment Scoring – application of a pre‑trained BERT‑based model to assign a continuous positivity score to each document; (3) Topic Extraction – simultaneous use of Latent Dirichlet Allocation (LDA) and BERTopic to map textual content onto the four QinU dimensions; (4) QinU Prediction – integration of sentiment scores and topic weights via regression or multi‑class classification to produce a composite QinU index.

A pilot study was conducted on 1,200 real‑world reviews (800 Korean, 400 English). The topic‑identification component achieved over 78 % accuracy, and the predicted QinU scores correlated with traditional survey‑based scores at a Pearson coefficient of 0.71. These results demonstrate that non‑structured user commentary can be transformed into reliable, fine‑grained QinU indicators, effectively bypassing the need for costly controlled experiments or extensive questionnaire campaigns.

The authors acknowledge several limitations. First, the training data exhibit platform and demographic bias, which may affect generalizability. Second, domain‑specific jargon and emerging slang challenge the language model’s ability to correctly interpret sentiment. Third, negative sentiment may stem from unrelated user frustrations rather than genuine software deficiencies, potentially inflating risk estimates. To mitigate these issues, future work will focus on (a) constructing larger, multilingual, domain‑balanced corpora; (b) fine‑tuning language models with domain‑specific vocabularies; and (c) developing hybrid approaches that combine the proposed text‑based predictions with selected ISO‑standard metrics for a more comprehensive QinU assessment.

In conclusion, the paper makes three substantive contributions: (1) a systematic exposition of why existing ISO‑based and custom quality models fall short for QinU measurement; (2) the design of a sentiment‑analysis‑driven prediction framework that operationalizes QinU assessment from readily available user feedback; and (3) empirical evidence that this approach yields QinU estimates comparable to traditional survey methods. By demonstrating a viable path toward real‑time, user‑centric quality monitoring, the work paves the way for more informed software acquisition decisions and continuous improvement cycles that truly reflect end‑user experience.

Comments & Academic Discussion

Loading comments...

Leave a Comment