Pixel-wise Orthogonal Decomposition for Color Illumination Invariant and Shadow-free Image

In this paper, we propose a novel, effective and fast method to obtain a color illumination invariant and shadow-free image from a single outdoor image. Different from state-of-the-art methods for shadow-free image that either need shadow detection or statistical learning, we set up a linear equation set for each pixel value vector based on physically-based shadow invariants, deduce a pixel-wise orthogonal decomposition for its solutions, and then get an illumination invariant vector for each pixel value vector on an image. The illumination invariant vector is the unique particular solution of the linear equation set, which is orthogonal to its free solutions. With this illumination invariant vector and Lab color space, we propose an algorithm to generate a shadow-free image which well preserves the texture and color information of the original image. A series of experiments on a diverse set of outdoor images and the comparisons with the state-of-the-art methods validate our method.

💡 Research Summary

The paper presents a novel, fast method for generating a color illumination‑invariant, shadow‑free image from a single outdoor photograph, without requiring any shadow detection or learning stage. The authors start from a physically based illumination model that distinguishes direct sunlight from diffuse skylight. By measuring the spectral power distributions of daylight and skylight under various sun angles, they derive a proportional relationship between the RGB tristimulus values of a surface when illuminated by sunlight (non‑shadow) and when illuminated only by skylight (shadow). After adding a small constant (14) to each channel and applying a logarithmic transform, this relationship becomes linear:

log(v_R + 14) + log(v_G + 14) − β₁·log(v_B + 14) = constant

where β₁ is a pre‑computed scalar that depends only on the illumination spectra. Crucially, the expression is invariant to whether the pixel lies in shadow or not, providing a grayscale illumination‑invariant feature for every pixel.
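As a rough illustration of why this expression can cancel the illumination change, the sketch below evaluates it for one pixel in and out of shadow. It assumes a toy model in which the offset values scale by fixed per-channel ratios, (lit + 14) = K · (shadow + 14). The ratios `K`, the resulting `BETA1`, and the helper `light_up` are hypothetical and chosen only for illustration; they are not the paper's measured values.

```python
import math

OFFSET = 14.0  # additive constant quoted in the text


def log_invariant(rgb, beta1, offset=OFFSET):
    """Return log(R+c) + log(G+c) - beta1*log(B+c) for one pixel."""
    r, g, b = rgb
    return (math.log(r + offset) + math.log(g + offset)
            - beta1 * math.log(b + offset))


# Hypothetical per-channel lit/shadow ratios (illustrative, not from the paper).
K = (3.0, 2.5, 2.0)

# beta1 chosen so the ratios cancel: log K_R + log K_G - beta1 * log K_B = 0.
BETA1 = (math.log(K[0]) + math.log(K[1])) / math.log(K[2])


def light_up(shadow_rgb, ratios=K, offset=OFFSET):
    """Toy illumination model: (lit + c) = K * (shadow + c) per channel."""
    return tuple(k * (v + offset) - offset for k, v in zip(ratios, shadow_rgb))


shadow_pixel = (30.0, 40.0, 60.0)
lit_pixel = light_up(shadow_pixel)

# Under the toy model, both values agree up to floating-point error.
feature_in_shadow = log_invariant(shadow_pixel, BETA1)
feature_in_light = log_invariant(lit_pixel, BETA1)
```

Under this assumption the logarithm turns the per-channel ratios into additive terms, and β₁ is exactly the weight that makes those terms sum to zero.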

The authors then construct three such linear equations per pixel (using three different invariant combinations derived from the same physical model). The set of equations can be expressed in matrix form A·x = b, where x is the unknown vector of log‑transformed RGB values for that pixel. Because the system is under‑determined, its solutions form a line: one particular solution plus an arbitrary multiple of a one‑dimensional nullspace direction (the “free solution”). The free solution encodes the ratio of illumination components (i.e., it varies with the mixture of direct and diffuse light). The particular solution that is orthogonal to the free solution is unique and, by construction, does not change with illumination. The authors call this orthogonal component the “color illumination‑invariant vector”.
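The decomposition described above can be sketched in a few lines of plain Python. The sketch assumes the free-solution direction is already known; `N_FREE` and the sample vectors are hypothetical values for illustration, not the paper's. A pixel's vector is split into a component along the free direction and a remainder orthogonal to it, and that remainder plays the role of the particular solution.

```python
def dot(a, b):
    """Dot product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))


def decompose(u, n):
    """Split u into (particular, alpha) with u = particular + alpha * n.

    `particular` is orthogonal to the free direction n, so it is unchanged
    when any multiple of n is added to u.
    """
    alpha = dot(u, n) / dot(n, n)
    particular = tuple(x - alpha * y for x, y in zip(u, n))
    return particular, alpha


# Hypothetical free-solution direction (illustrative only).
N_FREE = (1.1, 0.9, 0.7)

# Hypothetical log-domain vector of a shadowed pixel.
u_shadow = (4.0, 4.2, 4.5)

# Toy assumption: lighting the pixel shifts it along the free direction.
u_lit = tuple(x + 0.8 * y for x, y in zip(u_shadow, N_FREE))

p_shadow, alpha_shadow = decompose(u_shadow, N_FREE)
p_lit, alpha_lit = decompose(u_lit, N_FREE)
```

Here `p_shadow` and `p_lit` agree up to rounding while `alpha_lit` exceeds `alpha_shadow` by 0.8, which is the sense in which the orthogonal component ignores illumination and the free component absorbs it.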

To obtain a visually pleasing result, the authors note that the particular solution alone may suffer from color distortion because the orthogonal projection removes some chromatic information. They therefore convert the image to the Lab color space. The L (lightness) channel is replaced by the grayscale illumination‑invariant image derived from the particular solution, while the a and b channels (chromaticity) are kept from the original image. This simple color‑restoration step preserves the original hue and saturation while eliminating shadow‑induced lightness variations.
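A minimal sketch of the channel swap, assuming the image has already been converted to Lab by some other tool and is available as a list of `(L, a, b)` tuples. The min-max rescaling of the invariant image to the 0 to 100 range of L is an assumption made here for illustration; the summary does not say how the paper maps the invariant values to lightness.

```python
def rescale_to_lightness(values, lo=0.0, hi=100.0):
    """Linearly map values to [lo, hi] (assumed normalization, not the paper's)."""
    vmin, vmax = min(values), max(values)
    if vmax == vmin:
        # Flat input: fall back to mid-range lightness.
        return [0.5 * (lo + hi) for _ in values]
    scale = (hi - lo) / (vmax - vmin)
    return [lo + (v - vmin) * scale for v in values]


def replace_lightness(lab_pixels, invariant):
    """Swap each pixel's L for the rescaled invariant value; keep a and b."""
    if len(lab_pixels) != len(invariant):
        raise ValueError("lab_pixels and invariant must have the same length")
    new_l = rescale_to_lightness(invariant)
    return [(l_new, a, b) for l_new, (_, a, b) in zip(new_l, lab_pixels)]


# Two pixels of the same surface (one shadowed, one lit) and a brighter one.
lab_image = [(20.0, 5.0, -3.0), (80.0, 5.0, -3.0), (50.0, -10.0, 12.0)]
invariant_gray = [2.0, 2.0, 4.0]  # hypothetical invariant values

shadow_free = replace_lightness(lab_image, invariant_gray)
```

The first two pixels differ only in L in the input; because their invariant values are equal, they come out identical, while every pixel keeps its original a and b.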

Algorithmically, the method requires only per‑pixel logarithmic operations, a few scalar multiplications, and a projection onto the orthogonal subspace—operations that are constant‑time per pixel. Consequently, the entire pipeline runs in real time on standard hardware (tens of milliseconds for a 1080p image) without any iterative optimization or large‑scale matrix solves.

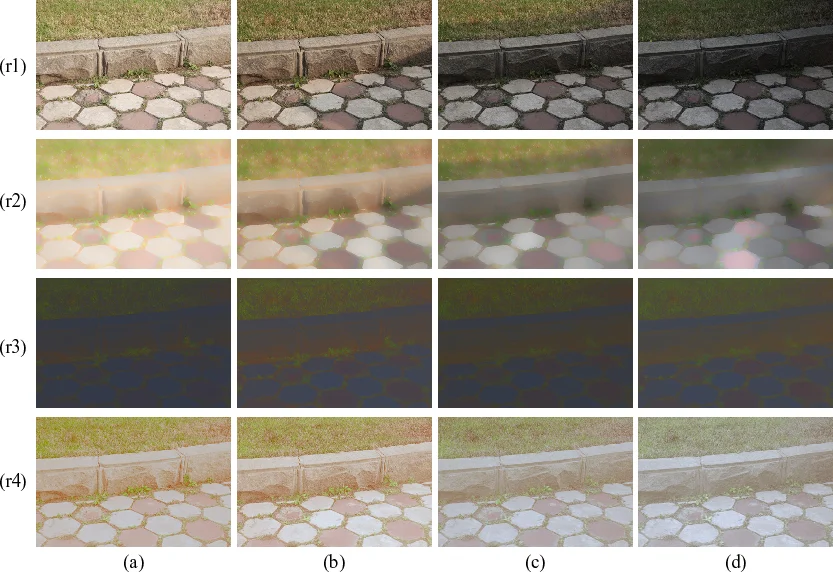

The experimental section evaluates the method on a diverse set of outdoor scenes containing hard shadows, soft shadows, and complex textures (e.g., foliage, building facades). Quantitative metrics include Structural Similarity Index (SSIM), CIE ΔE color difference, and processing time. Compared against state‑of‑the‑art approaches—shadow‑detection‑based methods (CRF, SVM, edge‑based Poisson reconstruction), statistical learning methods, grayscale illumination‑invariant techniques, and 2‑D chromaticity projection methods—the proposed technique consistently achieves higher SSIM, lower ΔE, and faster execution. Visual results demonstrate that fine texture details are retained and color fidelity is maintained, even in regions where shadows are soft or where shadow edges are ambiguous.

Key contributions are:

- Introduction of a pixel‑wise orthogonal decomposition grounded in a physically derived linear illumination model, enabling illumination‑invariant color extraction without any shadow detection.

- Demonstration that the particular solution, being orthogonal to the illumination‑dependent nullspace, provides a unique, illumination‑invariant color vector for each pixel.

- A straightforward Lab‑based color restoration that compensates for chromatic distortion while preserving original hue and saturation.

- Real‑time performance and robustness across a wide range of outdoor lighting conditions, surpassing existing methods in both quality and speed.

The authors suggest future work on extending the model to indoor or mixed lighting environments, handling non‑narrowband camera responses, and incorporating temporal consistency for video streams. Overall, the paper offers a compelling, physics‑driven alternative to data‑heavy or detection‑reliant shadow removal techniques, with clear practical implications for computer vision, photography, and augmented reality applications.
