Teaching Deep Convolutional Neural Networks to Play Go

Mastering the game of Go has remained a long-standing challenge to the field of AI. Modern computer Go systems rely on processing millions of possible future positions to play well, but intuitively a stronger and more ‘humanlike’ way to play the game would be to rely on pattern recognition abilities rather than brute-force computation. Following this sentiment, we train deep convolutional neural networks to play Go by training them to predict the moves made by expert Go players. To solve this problem we introduce a number of novel techniques, including a method of tying weights in the network to ‘hard code’ symmetries that are expected to exist in the target function, and demonstrate in an ablation study that they considerably improve performance. Our final networks are able to achieve move prediction accuracies of 41.1% and 44.4% on two different Go datasets, surpassing the previous state of the art on this task by significant margins. Additionally, while previous move prediction programs have not yielded strong Go-playing programs, we show that the networks trained in this work acquired high levels of skill. Our convolutional neural networks can consistently defeat the well-known Go program GNU Go, indicating that they are state of the art among programs that do not use Monte Carlo Tree Search. They are also able to win some games against the state-of-the-art Go-playing program Fuego while using a fraction of the play time. This success at playing Go indicates that high-level principles of the game were learned.

💡 Research Summary

The paper “Teaching Deep Convolutional Neural Networks to Play Go” investigates whether a deep learning approach can capture the pattern‑recognition abilities that human Go experts use, thereby reducing reliance on brute‑force search. The authors train deep convolutional neural networks (DCNNs) to predict the next move made by expert players, treating move prediction as a supervised classification problem over the 361 board intersections.

Two datasets are used: one of professional games and another of strong amateur games. The board state is encoded as a multi‑channel 19×19 image. The basic representation uses three binary channels (current player stones, opponent stones, and simple‑ko constraints). An alternative 7‑channel encoding further distinguishes stones by their number of liberties, providing richer tactical information. Pass moves are omitted because they are rare and not central to the task.
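The three-channel encoding can be sketched in a few lines of plain Python. This is an illustrative sketch only: the names `encode_board`, `EMPTY`, `BLACK`, `WHITE`, and the `ko_point` argument are assumptions made for the example, not identifiers from the paper.

```python
BOARD_SIZE = 19
EMPTY, BLACK, WHITE = 0, 1, 2  # hypothetical cell codes for this sketch


def encode_board(board, to_play, ko_point=None):
    """Return three 19x19 binary planes: own stones, opponent stones, ko point."""
    opponent = WHITE if to_play == BLACK else BLACK
    own = [[int(cell == to_play) for cell in row] for row in board]
    opp = [[int(cell == opponent) for cell in row] for row in board]
    # The ko plane marks the single point (if any) forbidden by the simple-ko rule.
    ko = [[0] * BOARD_SIZE for _ in range(BOARD_SIZE)]
    if ko_point is not None:
        row, col = ko_point
        ko[row][col] = 1
    return [own, opp, ko]


board = [[EMPTY] * BOARD_SIZE for _ in range(BOARD_SIZE)]
board[3][3] = BLACK
board[15][15] = WHITE
planes = encode_board(board, to_play=BLACK, ko_point=(4, 4))
```

Because the planes are defined relative to the player to move, the same position seen from White's side simply swaps the first two channels.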

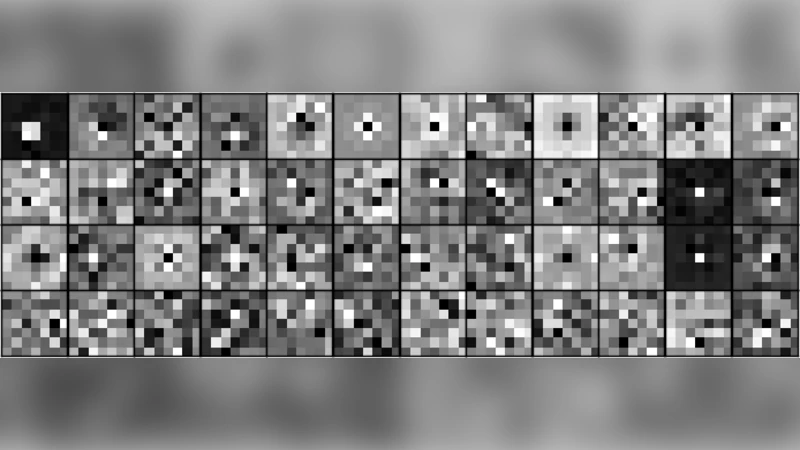

The network architecture consists of many convolutional layers (typically 12–20) with zero‑padding to preserve the 19×19 spatial dimensions, followed by a single fully‑connected layer that outputs a 361‑dimensional softmax distribution. ReLU activations and stochastic gradient descent with learning‑rate scheduling are employed. Experiments show that deeper networks with more filters consistently improve accuracy, and that using many small filters is more efficient than fewer large ones.
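To make the size-preserving padding concrete, the sketch below computes the padding needed for a stride-1 convolution and counts parameters for a small stack. The layer list (kernel sizes and filter counts) is hypothetical and chosen only for illustration, and one bias per output channel is assumed; neither is taken from the paper.

```python
def conv_output_size(size, kernel, padding):
    """Spatial size after a stride-1 convolution with symmetric zero-padding."""
    return size + 2 * padding - kernel + 1


def same_padding(kernel):
    """Zero-padding that preserves the input size for an odd kernel at stride 1."""
    if kernel % 2 == 0:
        raise ValueError("kernel must be odd")
    return (kernel - 1) // 2


def conv_params(in_channels, out_channels, kernel):
    """Filter weights plus one bias per output channel (an assumption of this sketch)."""
    return in_channels * out_channels * kernel * kernel + out_channels


# Hypothetical stack: (kernel size, number of filters) per convolutional layer.
layers = [(5, 64), (3, 64), (3, 64)]

size, channels, conv_total = 19, 3, 0
for kernel, filters in layers:
    size = conv_output_size(size, kernel, same_padding(kernel))
    conv_total += conv_params(channels, filters, kernel)
    channels = filters

# Single fully-connected layer mapping all feature maps to 361 move logits.
fc_params = size * size * channels * 361 + 361
```

With this padding the spatial size stays at 19 after every layer, so the final fully-connected layer sees one value per intersection per feature map.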

A key methodological contribution is weight tying for symmetries. Since Go is invariant under the eight rotations and reflections of the board, the authors enforce this invariance by sharing each convolutional filter across all eight symmetric transformations. This reduces the number of free parameters, speeds up convergence, and improves generalisation. An ablation study demonstrates that removing the symmetry‑tying reduces top‑1 move prediction accuracy by 2–3 percentage points.
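One way to picture such tying is to derive each filter from a free kernel by averaging it over the eight rotations and reflections, so that the resulting filter is unchanged by any of them. The sketch below is an assumed realization for illustration, not necessarily the authors' exact scheme; `tie_symmetric` and the other function names are hypothetical.

```python
def rotate90(kernel):
    """Rotate a square kernel 90 degrees clockwise."""
    return [list(row) for row in zip(*kernel[::-1])]


def flip(kernel):
    """Mirror a square kernel left to right."""
    return [row[::-1] for row in kernel]


def dihedral_transforms(kernel):
    """Return all eight rotations and reflections of a square kernel."""
    transforms = []
    current = [list(row) for row in kernel]
    for _ in range(4):
        transforms.append(current)
        transforms.append(flip(current))
        current = rotate90(current)
    return transforms


def tie_symmetric(kernel):
    """Average a kernel over the eight transforms; the result is invariant to all of them."""
    n = len(kernel)
    transforms = dihedral_transforms(kernel)
    return [
        [sum(t[r][c] for t in transforms) / len(transforms) for c in range(n)]
        for r in range(n)
    ]


free = [[1.0, 2.0, 0.0],
        [0.0, 3.0, 0.0],
        [0.0, 0.0, 0.0]]
tied = tie_symmetric(free)
```

Under this construction a 3×3 filter has only three independent values (corner, edge, centre) instead of nine, which illustrates where the parameter reduction comes from.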

Results: on the professional dataset the best model attains 41.1% top‑1 accuracy; on the amateur dataset it reaches 44.4%. Both figures surpass the previous state of the art on this task by a significant margin. To assess whether prediction quality translates into playing strength, the trained networks are used as stand‑alone Go agents without any Monte Carlo Tree Search (MCTS). Against GNU Go (a well‑known open‑source program) the DCNN agents win consistently, which the authors take as evidence that they are state of the art among non‑MCTS programs. Against Fuego, a strong MCTS‑based engine, the DCNNs win some games while using only a fraction of Fuego's play time, indicating that the learned patterns are useful for actual play.
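Using a move predictor as a stand‑alone agent amounts to playing the highest‑probability move the rules allow. The sketch below assumes a 361‑entry probability list in row‑major order and a legality mask supplied by separate rules code; both conventions, and the names `select_move` and `index_to_point`, are assumptions of the example, since the summary does not say how illegal moves are filtered.

```python
BOARD_SIZE = 19


def index_to_point(index):
    """Convert a class index in [0, 361) to (row, col), assuming row-major order."""
    return divmod(index, BOARD_SIZE)


def select_move(probs, legal):
    """Return (row, col) of the most probable legal move, or None if no move is legal."""
    best = None
    for i, p in enumerate(probs):
        if legal[i] and (best is None or p > probs[best]):
            best = i
    return None if best is None else index_to_point(best)


# Toy distribution: most of the mass on (3, 15), some on (3, 3).
probs = [0.0] * (BOARD_SIZE * BOARD_SIZE)
probs[3 * BOARD_SIZE + 15] = 0.6
probs[3 * BOARD_SIZE + 3] = 0.3

legal = [True] * len(probs)
legal[3 * BOARD_SIZE + 15] = False  # pretend the top choice is illegal here
move = select_move(probs, legal)
```

No search is involved: one forward pass of the network yields `probs`, and the agent plays the masked argmax.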

The paper discusses limitations: (1) passing is not modeled, which affects end‑game performance; (2) only simple‑ko constraints are encoded, ignoring rarer super‑ko rules; (3) scaling the networks further would demand substantial computational resources. The authors suggest future work integrating the policy network with a value network via reinforcement learning (as later done in AlphaGo), incorporating more sophisticated rule encodings, and exploring model compression for real‑time deployment.

In summary, the study demonstrates that deep convolutional networks, when equipped with symmetry‑aware weight sharing and rich board encodings, can learn complex, non‑smooth move‑selection functions in Go, achieve state‑of‑the‑art prediction accuracy, and translate that knowledge into competitive playing strength without relying on exhaustive search. This work laid important groundwork for later successes in deep reinforcement learning for board games.
