A Self-adaptive Auto-scaling Method for Scientific Applications on HPC Environments and Clouds

Compute-intensive applications can take days to months to finish a single execution. During this time, the available resources commonly vary, because such hardware is usually shared among several researchers and departments within an organization. High-performance clusters, however, can use cloud bursting techniques to run applications on cloud resources alongside on-premise ones. To meet deadlines, compute-intensive applications that are data and task parallel can use the Cloud to boost their performance. This article presents ongoing work on extending an HPC execution platform with Cloud resources. We propose a unified view of such heterogeneous environments and a method that monitors the application, predicts its execution time, and dynamically shifts part of the domain (previously running on local HPC hardware) to the Cloud in order to meet a specific deadline. The method is illustrated with a seismic application that, at runtime, adapts itself to move part of the processing to the Cloud (a movement called bursting) and auto-scales the moved part over cloud nodes. Our preliminary results show that moving the work and synchronizing results incurs an expected overhead, but they also demonstrate that the feature is important for meeting deadlines when an on-premise cluster is overloaded or cannot provide the capacity needed for a particular project.

💡 Research Summary

The paper addresses the problem of meeting execution deadlines for large‑scale scientific simulations when on‑premise high‑performance computing (HPC) resources become saturated, fail, or otherwise cannot provide the required capacity. The authors propose a self‑adaptive method that combines an on‑premise HPC cluster with a private/public cloud in a unified, hybrid environment. The core idea is to monitor the application at runtime, predict its future execution time using empirically derived logarithmic performance models for both the cluster and the cloud, and, when a deadline is at risk, automatically “burst” a portion of the computational domain to the cloud while simultaneously scaling the number of cloud cores needed.

The method consists of eight steps: (1) continuous monitoring of per‑timestep execution times; (2) detection of a deadline violation risk; (3) checkpointing the current state; (4) computing the number of additional cloud cores required, using a correction factor K that accounts for the performance gap between cluster and cloud; (5) determining the size of the domain slice (γ) to be moved, based on a linear relationship between execution time and domain size; (6) transferring the checkpointed data to the selected cloud nodes; (7) restarting the simulation on both the cluster and the cloud with the new configuration; and (8) synchronizing results at each timestep and merging them. The amount of data transferred is small (≈ 21 KB), and the monitoring and partitioning overhead is negligible.
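Steps (1), (2), and (5) can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names, the moving-average projection, and the `window` parameter are assumptions made for illustration. Only the linear relation between step time and domain size is taken from the summary, with hypothetical coefficients `a` and `b`.

```python
from statistics import mean


def projected_finish(elapsed_s, step_times_s, steps_remaining, window=10):
    """Project total runtime from the mean of the most recent timesteps.

    The moving-average projection is an assumption of this sketch.
    """
    recent = step_times_s[-window:]
    return elapsed_s + mean(recent) * steps_remaining


def deadline_at_risk(elapsed_s, step_times_s, steps_remaining, deadline_s):
    """Step 2: flag a risk when the projected runtime exceeds the deadline."""
    return projected_finish(elapsed_s, step_times_s, steps_remaining) > deadline_s


def slice_to_move(domain_size, a, b, target_step_s):
    """Step 5: with step time modelled as a * size + b (a, b hypothetical),
    return how much of the domain must leave the cluster so that the
    remaining part fits within target_step_s per timestep."""
    if a <= 0:
        raise ValueError("slope a must be positive")
    keep = (target_step_s - b) / a            # largest size the cluster can keep
    keep = max(0.0, min(domain_size, keep))   # clamp to the valid range
    return domain_size - keep
```

In this sketch the slice size depends only on the cluster-side linear model. A fuller version would also check that the cloud can process the moved slice within the same per-timestep budget.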

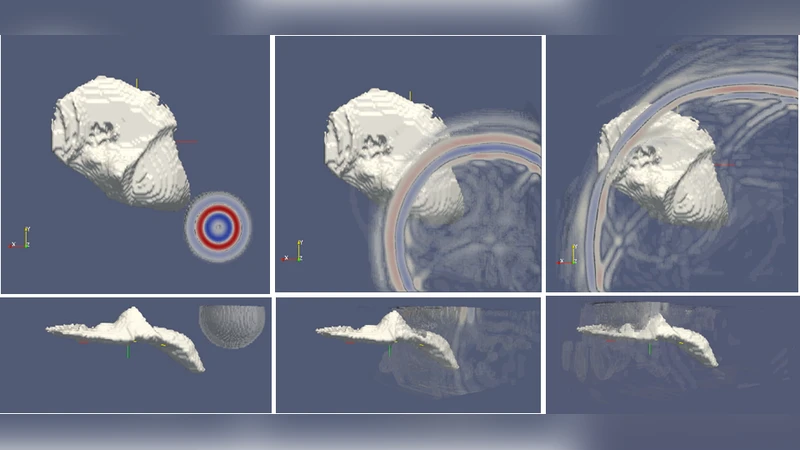

The approach is validated with a full‑waveform inversion (FWI) seismic application, which is highly data‑ and task‑parallel and representative of many scientific workloads. Experiments were performed on an IBM SoftLayer private cloud and an on‑premise cluster (10 CPUs per node). Empirical measurements yielded the performance models L_cloud(c) = -0.77 ln c + 7.1 and L_cluster(c) = -0.65 ln c + 6.5, where c is the number of cores. When the cluster was forced into a resource‑constrained state, the system automatically migrated roughly 20 % of the domain to the cloud and allocated an extra 30–40 cloud cores. This reduced total execution time by more than 30 % and kept the overall overhead below 5 % of the runtime.
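The two logarithmic models can be evaluated and inverted in a few lines of Python. The sketch below uses the coefficients quoted above. The helper names `model` and `cores_for` are hypothetical, and the unit of the model output is left unspecified because the summary does not state it.

```python
import math

# Coefficients (slope, intercept) as quoted in the summary.
CLOUD = (-0.77, 7.1)
CLUSTER = (-0.65, 6.5)


def model(coeffs, cores):
    """Evaluate L(c) = slope * ln(c) + intercept for a core count c >= 1."""
    slope, intercept = coeffs
    if cores < 1:
        raise ValueError("cores must be at least 1")
    return slope * math.log(cores) + intercept


def cores_for(coeffs, target):
    """Invert the model: smallest whole core count with L(c) <= target.

    Assumes a negative slope, i.e. the modelled value falls as cores are added.
    """
    slope, intercept = coeffs
    if slope >= 0:
        raise ValueError("slope must be negative")
    c = math.exp((target - intercept) / slope)
    return max(1, math.ceil(c))
```

For example, `cores_for(CLUSTER, 5.0)` asks how many cluster cores the fitted curve needs to reach a model value of 5.0. Because the curve is logarithmic, each further reduction of the target requires multiplying the core count rather than adding to it.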

The authors discuss several advantages: (i) the ability to meet strict deadlines without waiting for additional on‑premise hardware acquisition; (ii) a cost‑effective “time‑first” service model; (iii) minimal network traffic due to the stripe‑based domain partitioning; and (iv) a simple profiling phase that provides the necessary parameters (A, B, D, E, a, b) for any new application. Limitations include the focus on a single, embarrassingly parallel application, the assumption of relatively stable network bandwidth, and the lack of an explicit cost‑optimization component. Future work is suggested on extending the framework to more complex workflows, handling highly variable network conditions, and integrating cloud‑cost models into the scaling decision process.
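The profiling phase mentioned in (iv) amounts to fitting model coefficients from a handful of timed runs. As an illustration only, the following Python sketch fits a logarithmic model of the form slope · ln c + intercept by ordinary least squares. The function name is hypothetical, and the summary does not say which fitting procedure the authors used or how the symbols A, B, D, E, a, and b map onto the individual models.

```python
import math


def fit_log_model(cores, measurements):
    """Least-squares fit of y = slope * ln(c) + intercept.

    cores: core counts used in the profiling runs (each >= 1).
    measurements: the observed value for each run, in the same order.
    Returns (slope, intercept).
    """
    if len(cores) != len(measurements) or len(cores) < 2:
        raise ValueError("need at least two matching (cores, value) pairs")
    xs = [math.log(c) for c in cores]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(measurements) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("profiling runs must use at least two core counts")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, measurements))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x
```

The same routine covers the linear time-versus-domain-size relation if the logarithm is dropped, so a single profiling pass with a few core counts and a few domain sizes would be enough to populate both kinds of model.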

In summary, the paper presents a practical, self‑adaptive hybrid HPC‑cloud bursting and auto‑scaling technique that can dynamically re‑partition a scientific simulation, move part of the workload to a cloud, and meet user‑specified deadlines with modest overhead. This contributes a concrete roadmap for delivering deadline‑guaranteed scientific computing services by leveraging both on‑premise and cloud resources.
