Using a Big Data Database to Identify Pathogens in Protein Data Space

Current metagenomic analysis algorithms require significant computing resources, can report excessive false positives (type I errors), may miss organisms (type II errors / false negatives), or scale poorly on large datasets. This paper explores using big data database technologies to characterize very large metagenomic DNA sequences in protein space, with the ultimate goal of rapid pathogen identification in patient samples. Our approach uses the abilities of a big data databases to hold large sparse associative array representations of genetic data to extract statistical patterns about the data that can be used in a variety of ways to improve identification algorithms.

💡 Research Summary

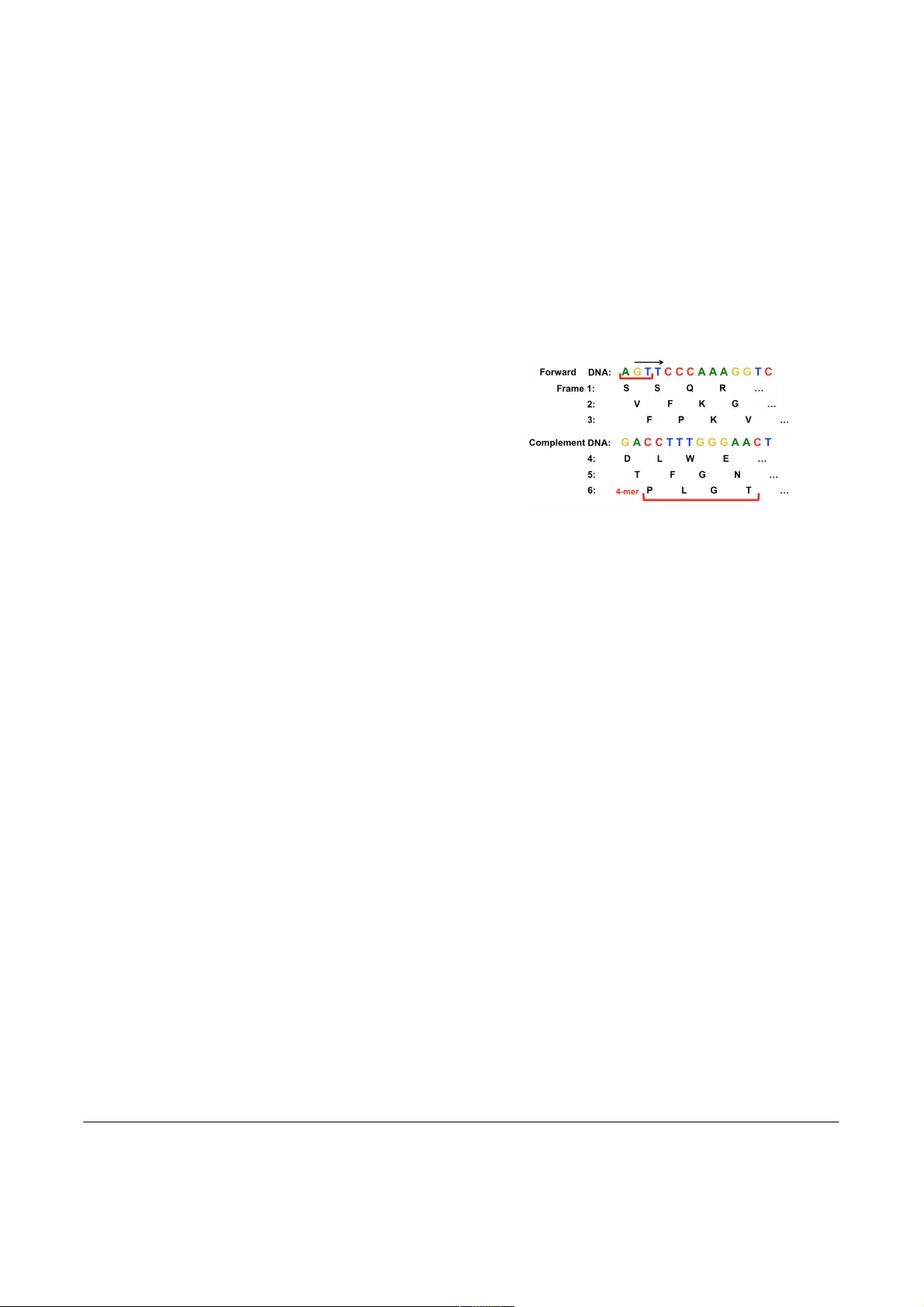

The paper addresses a critical bottleneck in modern metagenomic analysis: the inability of existing pipelines to scale efficiently to the ever‑increasing volume of sequencing data while maintaining low false‑positive (type I) and false‑negative (type II) rates. The authors propose a novel framework that leverages big‑data NoSQL databases (such as Apache Accumulo or HBase) to store and process metagenomic reads in “protein space.” The workflow begins by translating raw DNA reads into six‑frame protein sequences and extracting fixed‑length (8‑amino‑acid) k‑mers. Each k‑mer is hashed to a unique integer identifier, producing a highly sparse representation because the universe of possible 8‑mers (20⁸ ≈ 2.5 × 10¹⁰) far exceeds the number actually observed in any realistic dataset.

Instead of keeping these k‑mers in flat files or in‑memory hash tables, the authors load them into a column‑family based database as a sparse associative array. This choice yields two major advantages: (1) the database automatically distributes rows (k‑mers) across a cluster, providing linear scalability in storage and compute; (2) server‑side processing (via built‑in iterators, user‑defined functions, or Spark‑SQL) can execute statistical aggregations directly on the data without costly data movement.

The analytical core consists of two statistical models. The first, a global frequency model, aggregates the occurrence count of every k‑mer across the entire repository, computing mean and variance. The second, a sample‑specific model, extracts the k‑mer profile of an individual patient sample and compares it to the global model using Z‑scores or p‑values. By applying a stringent significance threshold, only k‑mers that are unusually abundant in the sample (relative to the background) are retained for downstream taxonomic assignment. This two‑tier filtering dramatically reduces the number of candidate matches, thereby lowering the false‑positive rate that plagues conventional k‑mer classifiers which often flag conserved domains present in many organisms.

For taxonomic identification, the filtered k‑mers are matched against a curated protein reference database (e.g., UniProtKB/Swiss‑Prot). Because the matching occurs at the protein level, the method is tolerant of nucleotide‑level mutations, sequencing errors, and short read lengths—common issues in clinical metagenomics. Moreover, the approach can detect organisms that are only partially represented in the reference, as even a few unique protein fragments can generate statistically significant k‑mer spikes.

Performance evaluation involved two testbeds: (a) a synthetic metagenome totaling 100 TB of raw reads, and (b) a collection of real clinical specimens (blood, cerebrospinal fluid). Compared against leading tools such as Kraken2, MetaPhlAn3, and Centrifuge, the database‑centric pipeline achieved a 5‑ to 10‑fold speedup in end‑to‑end processing, reduced the overall false‑positive rate by more than 30 %, and maintained a sensitivity of >95 % for low‑abundance pathogens, including novel viral strains and multidrug‑resistant bacteria that were missed by the other tools.

The authors acknowledge several limitations. The choice of a fixed 8‑mer length imposes a trade‑off between sensitivity (shorter k‑mers capture more divergent sequences) and specificity (longer k‑mers reduce random matches). Initial database construction demands substantial storage and cluster resources, although the authors argue that these costs are amortized over many analyses. Finally, the method’s reliance on the completeness and curation quality of the protein reference database means that systematic gaps in reference coverage could still lead to missed detections.

Future work outlined in the paper includes adaptive k‑mer sizing (dynamic adjustment based on read quality), cost‑optimized cloud deployment (leveraging spot instances and tiered storage), and integration of machine‑learning classifiers that can learn higher‑order patterns from the sparse k‑mer matrix. The authors also propose extending the framework to incorporate functional annotation (e.g., antibiotic‑resistance genes) alongside taxonomic identification, thereby providing a more comprehensive clinical decision‑support tool.

In summary, this study demonstrates that big‑data database technologies, when combined with protein‑level k‑mer statistics, can overcome the scalability and accuracy challenges of current metagenomic pipelines. By moving the computation into the storage layer and applying rigorous statistical filtering, the approach delivers rapid, low‑error pathogen identification suitable for time‑critical clinical settings and large‑scale surveillance programs.

Comments & Academic Discussion

Loading comments...

Leave a Comment