Iso-Quality of Service: Fairly Ranking Servers for Real-Time Data Analytics

We present a mathematically rigorous Quality-of-Service (QoS) metric that relates the achievable QoS of a real-time analytics service to the server energy cost of offering the service. Using a new iso-QoS evaluation methodology, we scale server resources to meet QoS targets and directly rank the servers in terms of their energy efficiency and, by extension, cost of ownership. Our metric and method are platform-independent and enable fair comparison of datacenter compute servers with significant architectural diversity, including micro-servers. We deploy our metric and methodology to compare three servers running financial option pricing workloads on real-life market data. We find that server ranking is sensitive to data inputs and desired QoS level, and that although scale-out micro-servers can be up to twice as energy-efficient as conventional heavyweight servers for the same target QoS, they are still six times less energy-efficient than high-performance computational accelerators.

💡 Research Summary

The paper introduces a rigorous Quality‑of‑Service (QoS) metric that directly links the achievable service quality of a real‑time analytics application to the energy cost of the server delivering that service. QoS is defined as the ratio of successfully computed option prices to the total number of requested evaluations. The authors model the arrival of market price updates as a Poisson process, so the time gaps between consecutive updates follow an exponential distribution, and they derive a cumulative frequency distribution (CFD) of those gaps. They identify a platform‑dependent threshold G, the minimum time required to price all options for a given update, and express QoS as the complement of the exponential CDF evaluated at this threshold, that is, the fraction of gaps that are longer than G.
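The relationship between the threshold G and QoS can be illustrated with a minimal Python sketch. It assumes one reading of the summary: an update is served successfully only if the gap to the next update is at least G, so under exponential gaps with rate λ the QoS is exp(−λG). The function names, the rate of 2 updates per second, and the synthetic gaps are illustrative assumptions, not values from the paper.

```python
import math
import random


def qos_exponential(rate_hz: float, threshold_s: float) -> float:
    """QoS under the assumed model: P(gap > G) = exp(-rate * G)."""
    if rate_hz <= 0 or threshold_s < 0:
        raise ValueError("rate must be positive and threshold non-negative")
    return math.exp(-rate_hz * threshold_s)


def qos_empirical(gaps_s, threshold_s: float) -> float:
    """Fraction of observed inter-update gaps that are at least threshold_s."""
    gaps = list(gaps_s)
    if not gaps:
        raise ValueError("need at least one gap")
    return sum(1 for g in gaps if g >= threshold_s) / len(gaps)


# Synthetic check: draw exponential gaps and compare model vs. empirical QoS.
rng = random.Random(0)
rate = 2.0  # hypothetical update rate, updates per second
gaps = [rng.expovariate(rate) for _ in range(10_000)]
for g_threshold in (0.05, 0.25, 1.0):
    model = qos_exponential(rate, g_threshold)
    observed = qos_empirical(gaps, g_threshold)
    print(f"G={g_threshold:.2f}s model={model:.3f} empirical={observed:.3f}")
```

A faster platform has a smaller G and therefore a higher QoS at the same update rate. With real tick data, the empirical function would be applied to measured gaps rather than synthetic ones.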

To evaluate the metric, three architecturally diverse platforms are used: (1) a conventional dual‑socket Intel Sandy Bridge server (2 × 8 cores at 2 GHz), (2) an Intel Xeon Phi Knights Corner many‑core accelerator (60 cores, 4‑way hyper‑threaded, 512‑bit vector units), and (3) a Calxeda‑based micro‑server rack (Viridis) comprising sixteen nodes each with four ARM Cortex‑A9 cores. All platforms run the same C code base, optimized for each architecture via hand‑written assembly, compiler intrinsics, or auto‑vectorization. Two financial option‑pricing kernels are examined: a Monte‑Carlo (MC) method and a Binomial Tree (BT) method, both applied to European vanilla options.

Power is measured at the pre‑VRM point (before the voltage regulator module) using RAPL counters on the Intel Sandy Bridge server, IPMI on the ARM micro‑server, and equivalent counters on the Xeon Phi, ensuring fair, platform‑independent energy accounting. The power profile during kernel execution is essentially a flat‑topped trapezoid, indicating near‑100 % CPU utilization and making average power a reliable proxy for total energy consumption.
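Why a flat power profile makes average power a good proxy for energy can be seen in a small sketch. It compares trapezoidal integration of sampled power with the shortcut of mean power times duration. The sample traces are invented for illustration and are not measurements from the paper.

```python
def energy_trapezoid(times_s, power_w):
    """Integrate sampled power over time with the trapezoidal rule (joules)."""
    total = 0.0
    for i in range(1, len(times_s)):
        dt = times_s[i] - times_s[i - 1]
        total += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return total


def energy_from_mean_power(times_s, power_w):
    """Approximate energy as mean sampled power times total duration (joules)."""
    duration = times_s[-1] - times_s[0]
    return sum(power_w) / len(power_w) * duration


times = [0, 1, 2, 3, 4]                 # seconds (hypothetical samples)
flat = [100, 100, 100, 100, 100]        # watts, fully flat profile
ramped = [50, 100, 100, 100, 50]        # watts, pronounced ramp-up and ramp-down

print(energy_trapezoid(times, flat), energy_from_mean_power(times, flat))
print(energy_trapezoid(times, ramped), energy_from_mean_power(times, ramped))
```

For the flat trace the two estimates agree exactly. For the ramped trace they diverge, which is why the shortcut is only safe when the ramps are a negligible part of the run.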

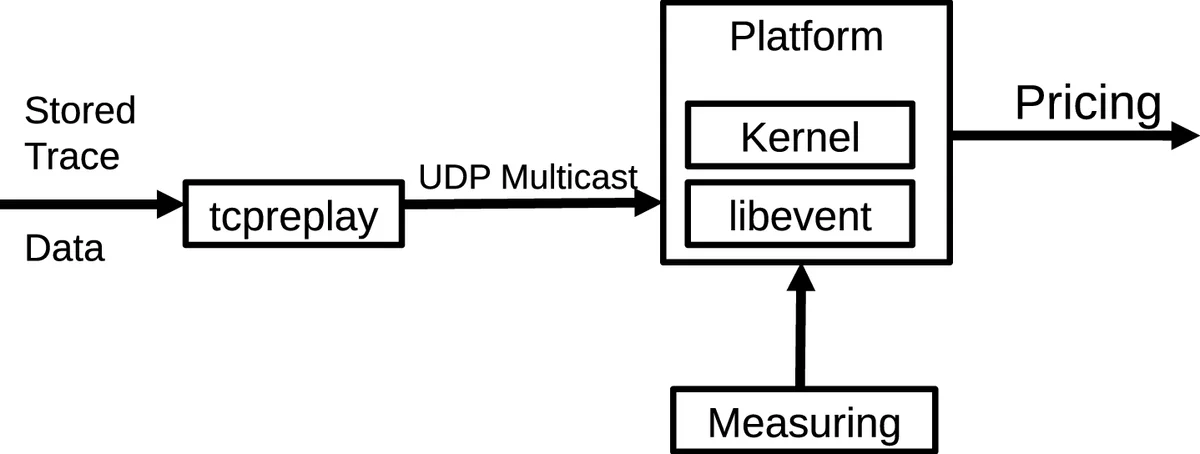

The experimental workload replays a full day of Facebook stock price ticks over UDP multicast to all nodes, triggering the pricing of 617 European options each time a price change occurs. From these runs the authors extract two key per‑option metrics: time per option (S_opt, seconds) and energy per option (J_opt, joules). By fixing a target QoS level (iso‑QoS) and using the derived CFD, they compute the required S_opt and J_opt for each platform and kernel, thereby ranking the platforms in terms of energy efficiency under identical service quality constraints.
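The iso‑QoS step can be sketched as follows. This is a simplified illustration, not the authors' procedure. It assumes exponential gaps (so the required threshold is G = −ln(QoS)/λ), ideal linear scaling when units are added, and energy per option equal to average power times time per option. The platform names and every number other than the 617 options are hypothetical placeholders.

```python
import math

N_OPTIONS = 617  # options priced per update, as stated in the summary


def required_threshold_s(target_qos: float, rate_hz: float) -> float:
    """Largest G that still meets target_qos under exponential gaps."""
    if not 0.0 < target_qos < 1.0 or rate_hz <= 0:
        raise ValueError("need 0 < target_qos < 1 and rate_hz > 0")
    return -math.log(target_qos) / rate_hz


def required_s_opt(target_qos: float, rate_hz: float, n_options: int) -> float:
    """Time budget per option so that all options finish within G."""
    return required_threshold_s(target_qos, rate_hz) / n_options


def units_needed(measured_s_opt: float, budget_s_opt: float) -> int:
    """Units required, assuming ideal linear scaling (an assumption)."""
    return max(1, math.ceil(measured_s_opt / budget_s_opt))


def energy_per_option_j(avg_power_w: float, s_opt: float) -> float:
    """J_opt approximated as average power times time per option."""
    return avg_power_w * s_opt


# Hypothetical single-unit measurements: (S_opt in seconds, average power in watts).
platforms = {"platform_a": (4e-4, 200.0), "platform_b": (2e-3, 20.0)}
budget = required_s_opt(target_qos=0.9, rate_hz=2.0, n_options=N_OPTIONS)

# Rank by energy per option; also report how many units meet the QoS target.
for name, (s_opt, power_w) in sorted(
    platforms.items(), key=lambda kv: energy_per_option_j(kv[1][1], kv[1][0])
):
    n = units_needed(s_opt, budget)
    print(name, "units:", n, "J_opt:", energy_per_option_j(power_w, s_opt))
```

Raising the target QoS or the update rate shrinks the per‑option time budget, so slower platforms need more units to stay on the same iso‑QoS line.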

Results show that the micro‑server platform can be up to twice as energy‑efficient as the conventional Intel server when delivering the same QoS, thanks to its low‑power cores and higher parallelism. However, the Xeon Phi accelerator outperforms both: at the same QoS it is roughly six times more energy‑efficient than the micro‑server, owing to its wide vector units and high memory bandwidth. The ranking is not static. The MC kernel, which is compute‑intensive and relies heavily on transcendental functions, favors the Xeon Phi, whereas the BT kernel, dominated by memory‑bound add‑multiply operations, narrows the gap between the micro‑server and the Intel server. Moreover, varying the number of options, the update frequency, or the target QoS shifts the threshold G and can invert the relative ordering of platforms.

The authors conclude that server selection for latency‑critical, event‑driven analytics should be driven by a joint consideration of QoS requirements and energy cost, rather than by peak performance or nominal power alone. Their iso‑QoS methodology provides a quantitative framework for datacenter operators to minimize operational expenditure while meeting Service Level Agreements. The paper also suggests future work on dynamic voltage‑frequency scaling, workload‑aware scheduling, and multi‑tenant QoS arbitration in cloud environments.
