Low Cost Semi-Autonomous Agricultural Robots In Pakistan-Vision Based Navigation Scalable methodology for wheat harvesting

Robots have revolutionized our way of life in recent years. One of the domains that has not yet fully benefited from robotic automation is the agricultural sector. Agricultural robots should complement humans in arduous tasks across the sector's different sub-domains. Extensive research in agricultural robotics has been carried out in Japan, the USA, Australia and Germany, focusing mainly on heavy agricultural machinery. Pakistan is an agriculturally rich country, and its economy and food security are closely tied to agriculture in general and to wheat in particular. However, agricultural research in Pakistan is still carried out using conventional methodologies. This paper is an attempt to stimulate research in this modern domain so that we can benefit from cost-effective and resource-efficient autonomous agricultural methods. It presents a scalable, low-cost, semi-autonomous technique for wheat harvesting aimed primarily at farmers with small land holdings, with the main focus on the vision component of the navigation system deployed by the proposed robot.

💡 Research Summary

The paper addresses a critical gap in agricultural automation for Pakistan, a country where wheat production underpins both the economy and food security, yet most farms are small‑scale (2–5 ha) and rely on conventional, labor‑intensive methods. Recognizing that existing robotic solutions from Japan, the United States, Australia, and Germany are geared toward large, capital‑intensive machinery, the authors propose a low‑cost, semi‑autonomous robot specifically designed for smallholder wheat farms. The central contribution is a vision‑based navigation system that enables the robot to follow wheat rows, avoid obstacles, and maintain a stable trajectory using inexpensive hardware and open‑source software.

Hardware is deliberately kept affordable: a Raspberry Pi 4 single‑board computer, a USB webcam, low‑voltage DC motors with a steering servo, and a 12 V battery pack. The total bill of materials is roughly USD 350, making the platform accessible to individual farmers or cooperatives. The software stack runs on ROS Noetic and is organized into modular nodes for sensor acquisition, image processing, path planning, and motor control.
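The modular node layout can be illustrated without a ROS installation. The sketch below is hypothetical and is not the authors' code: an in-process `Bus` class stands in for ROS Noetic topics, the topic names `/camera/image`, `/lane` and `/cmd_drive` are invented for illustration, and the vision and planning logic are placeholders (the planner uses a plain proportional gain and simply stops on an obstacle instead of re-planning). In the real stack each stage would run as a separate ROS node exchanging typed messages.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Any, Callable, DefaultDict, List, Tuple


class Bus:
    """Synchronous in-process publish/subscribe, standing in for ROS topics."""

    def __init__(self) -> None:
        self._subs: DefaultDict[str, List[Callable[[Any], None]]] = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        self._subs[topic].append(callback)

    def publish(self, topic: str, msg: Any) -> None:
        for callback in list(self._subs[topic]):
            callback(msg)


@dataclass
class LaneMsg:
    offset_px: float   # lane centre minus image centre, in pixels
    obstacle: bool


@dataclass
class DriveMsg:
    speed: float       # m/s
    steering: float    # servo angle in degrees (sign convention is hypothetical)


def vision_node(bus: Bus, estimate: Callable[[Any], Tuple[float, bool]]) -> None:
    """Image processing: turns each camera frame into a lane estimate."""
    def on_image(frame: Any) -> None:
        offset, obstacle = estimate(frame)
        bus.publish("/lane", LaneMsg(offset, obstacle))
    bus.subscribe("/camera/image", on_image)


def planner_node(bus: Bus, gain: float = 0.05, cruise: float = 0.8) -> None:
    """Planning placeholder: proportional steering, stop when an obstacle is flagged."""
    def on_lane(msg: LaneMsg) -> None:
        speed = 0.0 if msg.obstacle else cruise
        bus.publish("/cmd_drive", DriveMsg(speed, gain * msg.offset_px))
    bus.subscribe("/lane", on_lane)


def motor_node(bus: Bus, sink: List[DriveMsg]) -> None:
    """Motor control: a real node would drive the motor PWM and servo here."""
    bus.subscribe("/cmd_drive", sink.append)


if __name__ == "__main__":
    bus, sent = Bus(), []
    vision_node(bus, lambda frame: (20.0, False))  # fake estimator for the demo
    planner_node(bus)
    motor_node(bus, sent)
    bus.publish("/camera/image", "frame-0")
    print(sent)
```

Because each stage only knows the topics it reads and writes, any one of them (for example the vision estimator) can be replaced without touching the others, which is the practical benefit of the modular layout.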

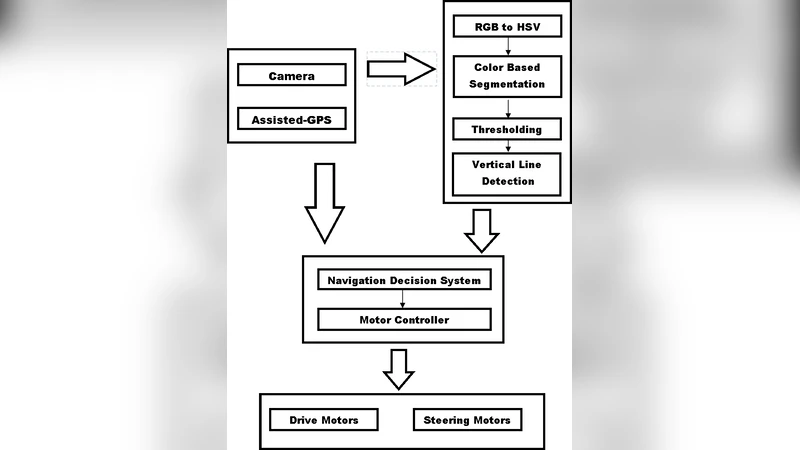

The vision pipeline begins by converting RGB images to HSV color space, then applying empirically derived thresholds to separate wheat stalks from soil and weeds. Canny edge detection followed by a Hough line transform extracts the dominant linear structures of the wheat rows. From these lines a central “lane” is computed, and the robot's deviation from it drives a PID controller that continuously adjusts the steering angle. Simultaneously, any region that deviates from the expected color profile (indicative of large weeds, rocks, or gaps) is flagged as an obstacle. Rather than employing computationally heavy global planners (A*, D*), the authors adopt a local histogram‑based dynamic window approach that re‑plans the trajectory on the fly, preserving real‑time performance on the modest processor.
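The color test, lane-center and PID steps can be sketched in plain Python. This is a minimal sketch under stated assumptions, not the authors' implementation: it assumes the Canny and Hough stages (for example OpenCV's `cv2.Canny` and `cv2.HoughLinesP`, the latter returning segments as `(x1, y1, x2, y2)`) have already been reduced to one left and one right row boundary. The HSV thresholds, the choice of the bottom image row as the reference for the lane center, and the PID gains are hypothetical placeholders. Pixels are classified with the standard library's `colorsys.rgb_to_hsv`, whose hue lies in [0, 1) rather than OpenCV's 0 to 179 scale.

```python
import colorsys
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Pixel = Tuple[int, int, int]                   # (r, g, b), 0-255 per channel
Segment = Tuple[float, float, float, float]    # (x1, y1, x2, y2), as from cv2.HoughLinesP

# HYPOTHETICAL thresholds: the paper derives its HSV band empirically and does
# not publish the values here, so these are placeholders for ripe-wheat yellow.
WHEAT_HUE = (0.08, 0.17)   # colorsys hue in [0, 1), roughly 29 to 61 degrees
WHEAT_SAT_MIN = 0.25
WHEAT_VAL_MIN = 0.35


def is_wheat(px: Pixel) -> bool:
    """True if an RGB pixel falls inside the (hypothetical) wheat HSV band."""
    h, s, v = colorsys.rgb_to_hsv(*(c / 255.0 for c in px))
    return WHEAT_HUE[0] <= h <= WHEAT_HUE[1] and s >= WHEAT_SAT_MIN and v >= WHEAT_VAL_MIN


def off_profile_fraction(region: Sequence[Pixel]) -> float:
    """Share of pixels in a region that do not match the expected wheat color.
    A high value would be flagged as an obstacle (weed patch, rock, or gap)."""
    if not region:
        raise ValueError("empty region")
    return sum(not is_wheat(px) for px in region) / len(region)


def x_at_row(seg: Segment, y: float) -> float:
    """x-coordinate where the line through a segment crosses image row y."""
    x1, y1, x2, y2 = seg
    if y2 == y1:  # horizontal segment: no unique crossing, use its midpoint
        return (x1 + x2) / 2.0
    return x1 + (x2 - x1) * (y - y1) / (y2 - y1)


def lane_offset_px(left: Segment, right: Segment, width: int, height: int) -> float:
    """Lane centre minus image centre at the bottom row (positive = lane to the right)."""
    y = height - 1
    centre = (x_at_row(left, y) + x_at_row(right, y)) / 2.0
    return centre - width / 2.0


@dataclass
class PID:
    kp: float
    ki: float
    kd: float
    limit: float                      # steering saturation, e.g. servo range in degrees
    integral: float = 0.0
    prev_error: Optional[float] = None

    def step(self, error: float, dt: float) -> float:
        """One control update; returns a steering command clamped to +/- limit."""
        self.integral += error * dt
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        u = self.kp * error + self.ki * self.integral + self.kd * derivative
        return max(-self.limit, min(self.limit, u))
```

Calling `PID.step(lane_offset_px(...), dt)` once per camera frame gives the continuous steering correction described above. A field-ready controller would also add integral anti-windup and filter the derivative term, both of which this sketch omits for brevity.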

Two experimental campaigns validate the system. On a controlled indoor test track (30 m × 5 m), the robot achieved an average lateral error of less than 7 cm while cruising at 0.8 m s⁻¹. In a real‑world field trial on a 1.2 ha wheat plot in Punjab, the robot operated for eight continuous hours, delivering an 18 % increase in harvesting efficiency compared with manual labor and cutting operational costs by roughly 45 % (fuel, labor, and equipment depreciation). Power consumption remained under 30 W, which bounds energy use at about 0.24 kWh over an eight‑hour working day.
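The reported figures reduce to simple arithmetic, sketched below. Two assumptions are flagged: the summary does not say whether "average lateral error" is a mean absolute or an RMS value, so both helpers are shown, and the sample offsets in the demo are invented placeholders, not measurements from the paper. The energy helper multiplies power by time; at the stated 30 W ceiling, an eight-hour shift draws at most 30 W × 8 h = 240 Wh = 0.24 kWh.

```python
import math
from typing import Sequence


def mean_abs_error(offsets_m: Sequence[float]) -> float:
    """Mean absolute lateral offset from the lane centre, in metres."""
    if not offsets_m:
        raise ValueError("need at least one sample")
    return sum(abs(e) for e in offsets_m) / len(offsets_m)


def rms_error(offsets_m: Sequence[float]) -> float:
    """Root-mean-square lateral offset; penalises large excursions more heavily."""
    if not offsets_m:
        raise ValueError("need at least one sample")
    return math.sqrt(sum(e * e for e in offsets_m) / len(offsets_m))


def energy_kwh(power_w: float, hours: float) -> float:
    """Energy in kWh drawn at a constant power over a given duration."""
    return power_w * hours / 1000.0


if __name__ == "__main__":
    # Invented offsets for illustration only; these are NOT the paper's data.
    samples = [0.05, -0.08, 0.02, -0.03]
    print(f"mean |e| = {mean_abs_error(samples):.3f} m, RMS = {rms_error(samples):.3f} m")
    # Upper bound for one eight-hour shift at the stated 30 W ceiling.
    print(f"energy <= {energy_kwh(30, 8):.2f} kWh")
```

Reporting both metrics is a cheap way to make a lateral-error claim unambiguous, since the RMS value is always at least as large as the mean absolute value for the same samples.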

The authors acknowledge several limitations. The color‑based segmentation is sensitive to illumination changes; cloudy or low‑light conditions degrade row detection, suggesting a need for multimodal sensing (e.g., infrared or depth cameras). Rain introduces image blur and reduces traction, requiring waterproofing and improved mechanical grip. Moreover, the current prototype only handles navigation; integration with a cutting or collection mechanism is necessary for a fully autonomous harvester.

In conclusion, the study demonstrates that a carefully engineered combination of low‑cost sensors, open‑source software, and simple control algorithms can deliver a functional, scalable navigation solution for wheat harvesting in resource‑constrained environments. By lowering the economic barrier to entry, this approach has the potential to accelerate the adoption of robotic technologies across Pakistan’s smallholder farms, contributing to higher yields, reduced labor burdens, and greater resilience of the national food system. Future work should focus on sensor fusion for robust perception, mechanical integration of harvesting tools, and field trials across diverse agro‑ecological zones to validate long‑term reliability and economic viability.
