An Experimental Analysis of the Echo State Network Initialization Using the Particle Swarm Optimization

This article introduces a robust hybrid method for solving supervised learning tasks, which combines the Echo State Network (ESN) model with the Particle Swarm Optimization (PSO) algorithm. An ESN is a Recurrent Neural Network whose hidden-hidden weights stay fixed during learning. The recurrent part of the network stores the input information in the internal states of the network. A second, memoryless structure is used as the supervised learning tool. The procedure used to initialize the recurrent structure of the ESN can affect model performance. PSO, in turn, has been shown to be a successful technique for finding optimal points in complex spaces. Here, we present an approach that uses PSO to find initial values for some of the hidden-hidden weights of the ESN model. We present empirical results that compare the canonical ESN model with this hybrid method on a wide range of benchmark problems.

💡 Research Summary

The paper proposes a hybrid method that combines Echo State Networks (ESNs) with Particle Swarm Optimization (PSO) to improve the initialization of the recurrent reservoir in ESNs. Traditional ESNs keep all hidden‑hidden (reservoir) weights fixed after random initialization and only train the read‑out layer via linear regression. However, the performance of an ESN is highly sensitive to reservoir parameters such as input scaling, size, spectral radius, and connectivity. Existing approaches either manually tune these parameters or use global meta‑heuristics to optimize the entire reservoir, which often requires costly spectral‑radius calculations and extensive computation.
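For orientation, the canonical setup described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: the `tanh` activation, the function names, the reading of the connectivity parameter as a connection density, the ridge constant, and the 100-step washout are all assumptions made for the example.

```python
import numpy as np

def init_reservoir(n_in, n_res, spectral_radius=0.9, density=0.3, seed=0):
    """Random input and reservoir weights; reservoir rescaled to a target spectral radius."""
    rng = np.random.default_rng(seed)
    w_in = rng.uniform(-1.0, 1.0, size=(n_res, n_in))
    w = rng.uniform(-1.0, 1.0, size=(n_res, n_res))
    w *= rng.random((n_res, n_res)) < density      # keep roughly `density` of the connections
    rho = np.max(np.abs(np.linalg.eigvals(w)))     # the eigenvalue step the hybrid method avoids
    return w_in, w * (spectral_radius / rho)

def run_reservoir(w_in, w, inputs):
    """Collect states x(t) = tanh(W_in u(t) + W x(t-1)) (tanh is an assumed activation)."""
    x = np.zeros(w.shape[0])
    states = np.empty((len(inputs), w.shape[0]))
    for t, u in enumerate(inputs):
        x = np.tanh(w_in @ u + w @ x)
        states[t] = x
    return states

def fit_readout(states, targets, ridge=1e-6):
    """Only the readout is trained: W_out = (S^T S + ridge * I)^-1 S^T Y."""
    a = states.T @ states + ridge * np.eye(states.shape[1])
    return np.linalg.solve(a, states.T @ targets)

# Toy usage: one-step-ahead prediction of a sine wave (not one of the paper's benchmarks).
series = np.sin(0.2 * np.arange(501))
u, y = series[:-1, None], series[1:, None]
w_in, w = init_reservoir(n_in=1, n_res=50)
states = run_reservoir(w_in, w, u)
w_out = fit_readout(states[100:], y[100:])         # discard an assumed 100-step washout
mse = float(np.mean((states[100:] @ w_out - y[100:]) ** 2))
```

The reservoir matrices are never updated after `init_reservoir`; all learning happens in the single linear solve inside `fit_readout`.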

In the presented approach, only a randomly selected subset of the reservoir weights (denoted Ω_h) is optimized with PSO, while the remaining reservoir weights stay fixed. The proportion of optimized weights is controlled by a parameter α (0 < α < 1), typically set between 0.1 and 0.3. PSO particles explore the M‑dimensional space of the selected weights, where M is the number of weights in Ω_h, updating positions and velocities according to the standard PSO equations and using the mean squared error (MSE) on the training set as the fitness function. After PSO converges, the read‑out weights are computed in a single step using ridge regression. Crucially, the method does not require explicit computation of the spectral radius, avoiding the associated numerical instability and computational burden.
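The partial-weight search can be sketched as follows. This is a hedged illustration under stated assumptions rather than the paper's implementation: the inertia-weight form of the PSO update, the coefficients `inertia`, `c1`, `c2`, the swarm size, the iteration count, the Gaussian spread used to seed the particles, and the helper names are all hypothetical choices. The fitness of a particle is the training MSE obtained after fitting a ridge readout on the reservoir that the particle defines.

```python
import numpy as np

def esn_train_mse(w_in, w, u, y, washout=50, ridge=1e-6):
    """Run the reservoir, fit a ridge readout, and return the training MSE."""
    x = np.zeros(w.shape[0])
    states = np.empty((len(u), w.shape[0]))
    for t in range(len(u)):
        x = np.tanh(w_in @ u[t] + w @ x)
        states[t] = x
    s, tgt = states[washout:], y[washout:]
    w_out = np.linalg.solve(s.T @ s + ridge * np.eye(s.shape[1]), s.T @ tgt)
    return float(np.mean((s @ w_out - tgt) ** 2))

def pso_partial_reservoir(w_in, w0, u, y, alpha=0.2, n_particles=10, n_iter=20,
                          inertia=0.7, c1=1.5, c2=1.5, seed=0):
    """Optimize a random fraction `alpha` of the reservoir weights; the rest stay fixed."""
    rng = np.random.default_rng(seed)
    n_sel = max(1, int(alpha * w0.size))                    # M, the search dimension
    idx = rng.choice(w0.size, size=n_sel, replace=False)    # randomly chosen subset
    start = w0.ravel()[idx]

    def fitness(values):
        w = w0.copy()
        w.ravel()[idx] = values          # ravel() is a view on the contiguous copy
        return esn_train_mse(w_in, w, u, y)

    # Particle 0 sits at the original weights, so the result is never worse than w0.
    pos = start + 0.1 * rng.standard_normal((n_particles, n_sel))
    pos[0] = start
    vel = np.zeros_like(pos)
    pbest, pbest_f = pos.copy(), np.array([fitness(p) for p in pos])
    g = int(np.argmin(pbest_f))
    gbest, gbest_f = pbest[g].copy(), float(pbest_f[g])

    for _ in range(n_iter):
        r1, r2 = rng.random(pos.shape), rng.random(pos.shape)
        vel = inertia * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = pos + vel
        f = np.array([fitness(p) for p in pos])
        better = f < pbest_f
        pbest[better], pbest_f[better] = pos[better], f[better]
        g = int(np.argmin(pbest_f))
        if pbest_f[g] < gbest_f:
            gbest, gbest_f = pbest[g].copy(), float(pbest_f[g])

    w_best = w0.copy()
    w_best.ravel()[idx] = gbest
    return w_best, gbest_f

# Toy usage on a sine wave; no spectral radius is computed anywhere.
rng = np.random.default_rng(1)
series = np.sin(0.2 * np.arange(301))
u, y = series[:-1, None], series[1:, None]
w_in = rng.uniform(-1.0, 1.0, (30, 1))
w0 = rng.uniform(-0.2, 0.2, (30, 30))
base_mse = esn_train_mse(w_in, w0, u, y)
w_best, best_mse = pso_partial_reservoir(w_in, w0, u, y, alpha=0.2)
```

Because every fitness evaluation needs a full reservoir run plus a readout fit, the cost grows with the swarm size times the number of iterations; searching only M = α · (number of reservoir weights) dimensions shrinks the search space, not the cost of a single evaluation.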

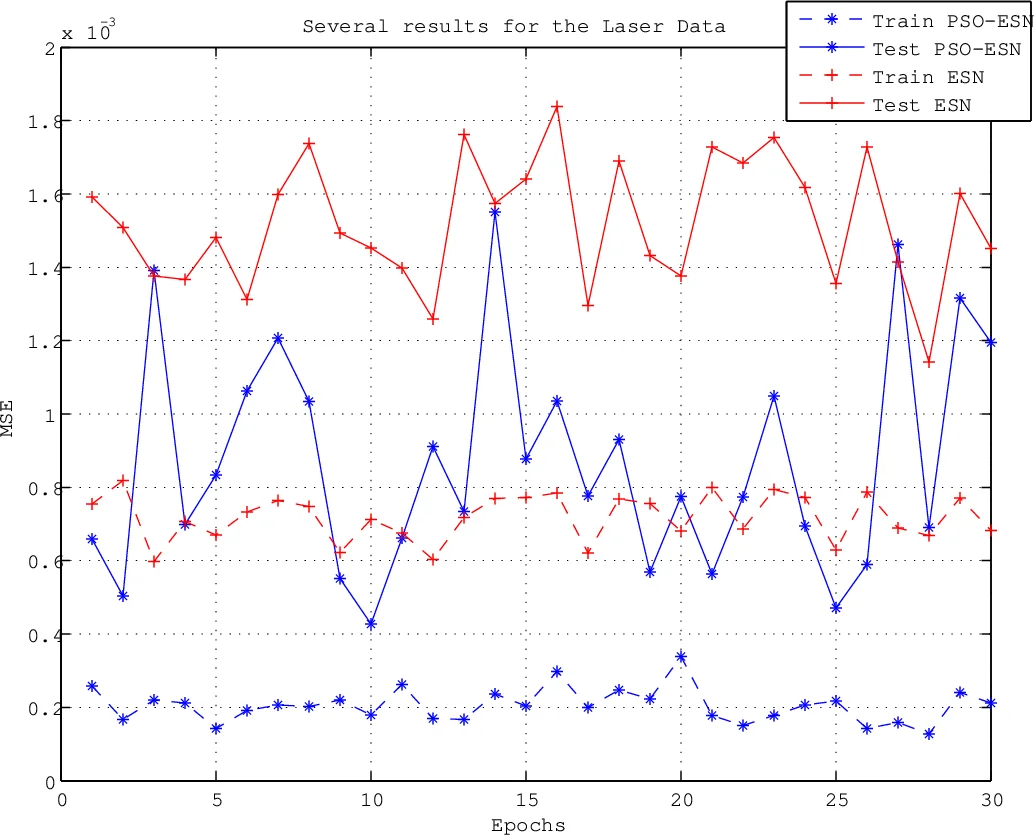

The authors evaluate the hybrid PSO‑ESN on four benchmark tasks, including the Santa Fe laser intensity time series and two synthetic NARMA series, each representing challenging chaotic and long‑range‑dependency dynamics. For each benchmark, 30 independent runs with different random seeds were performed for both the canonical ESN and the PSO‑ESN. The canonical ESN was initialized with uniformly distributed weights, the reservoir size fixed at 50 units, spectral radius set to 0.9, and sparsity to 0.3. The PSO‑ESN used the same base initialization but applied PSO to the selected subset of reservoir weights, optimizing directly for MSE. Results show that PSO‑ESN consistently achieves lower prediction error (often a substantial reduction in MSE) and shorter training times compared with the standard ESN. Statistical analysis using asymptotic confidence intervals confirms that the performance gains are significant.
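The summary does not give the exact form of the asymptotic confidence intervals. A common choice, assumed here, is the normal-approximation interval mean ± z·s/√n over the per-run errors, with z = 1.96 for a 95% level. The sketch below uses only the standard library; the function names and the sample values in the usage lines are placeholders, not results from the paper.

```python
import math
import statistics

def asymptotic_ci(samples, z=1.96):
    """Normal-approximation interval: mean +/- z * s / sqrt(n) (z=1.96 is an assumed 95% level)."""
    n = len(samples)
    mean = statistics.fmean(samples)
    half_width = z * statistics.stdev(samples) / math.sqrt(n)
    return mean - half_width, mean + half_width

def intervals_overlap(a, b):
    """True when two (low, high) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]

# Placeholder numbers for illustration only; in practice each list would
# hold the 30 per-run MSE values of one model.
ci_a = asymptotic_ci([1.0, 2.0, 3.0])
ci_b = asymptotic_ci([10.0, 11.0, 12.0])
separated = not intervals_overlap(ci_a, ci_b)
```

Non-overlapping intervals for the two models are one simple way to read "significant" here; overlapping intervals would call for a more careful paired comparison.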

The study demonstrates that adjusting only a small fraction of reservoir connections is sufficient to enhance the expressive power of the ESN, while keeping computational costs low. By bypassing the need for spectral‑radius scaling, the method simplifies the reservoir design process and reduces the reliance on expert manual tuning. The authors conclude that this PSO‑based partial‑weight optimization offers a practical, scalable avenue for improving Reservoir Computing models and suggest future work on adaptive α selection, larger‑scale reservoirs, and application to real‑world control and forecasting problems.
