Robust Spectral Compressed Sensing via Structured Matrix Completion

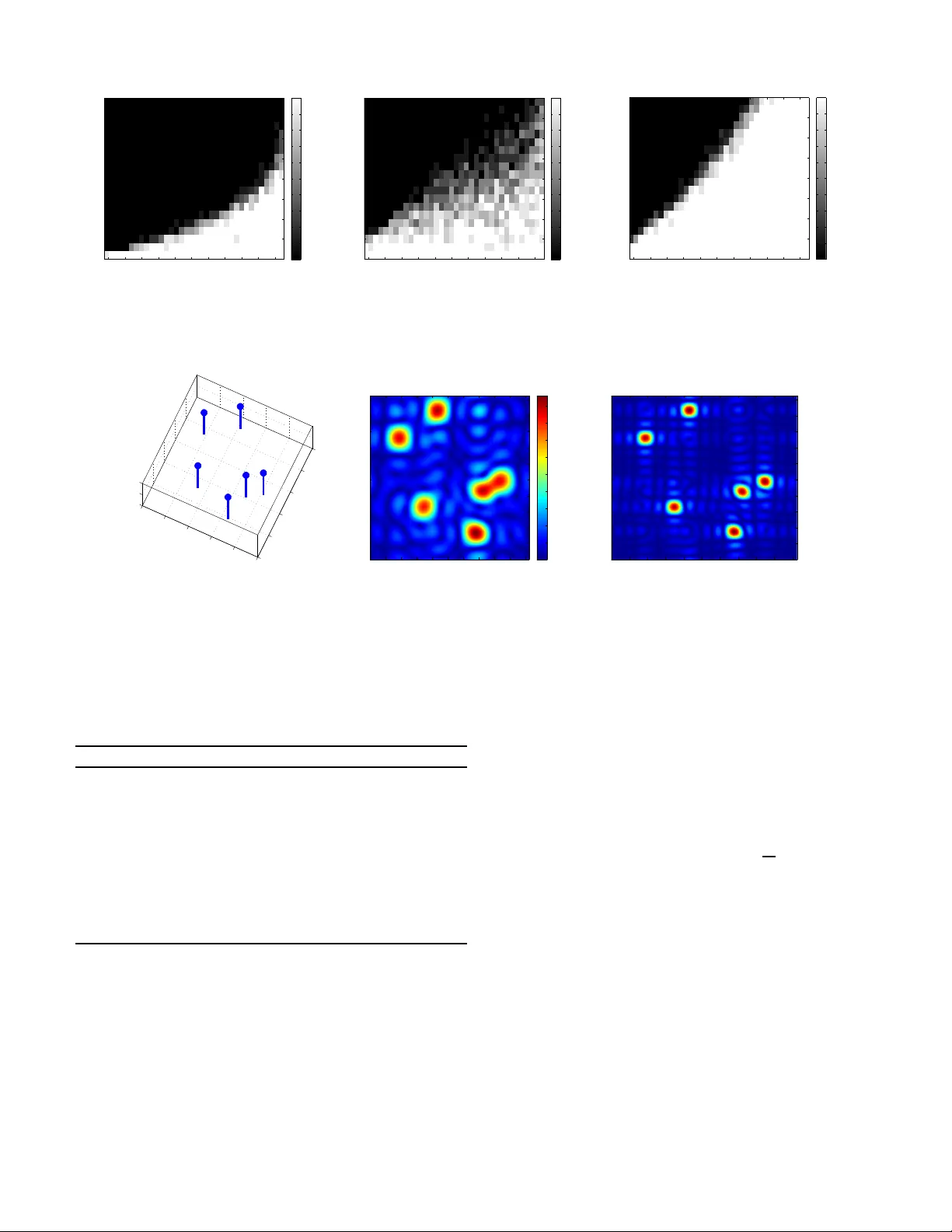

The paper explores the problem of *spectral compressed sensing*, which aims to recover a spectrally sparse signal from a small random subset of its $n$ time-domain samples. The signal of interest is assumed to be a superposition of $r$ multi-dim…
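The sketch below illustrates the signal model in its simplest form. It is a pure-Python example that assumes a 1-D, undamped signal $x_t = \sum_{k=1}^{r} a_k e^{2\pi i f_k t}$, even though the paper treats multi-dimensional sinusoids. It draws a random subset of the $n$ samples and checks a well-known fact: the Hankel matrix built from such a signal has rank at most $r$. Low-rank structure of this kind is what a structured matrix completion approach can exploit. All function names here (`spectrally_sparse_signal`, `hankel`, `numerical_rank`, `random_sample`) are hypothetical illustrations. This is not the authors' recovery algorithm, which the excerpt does not describe.

```python
import cmath
import random


def spectrally_sparse_signal(n, freqs, amps):
    """Hypothetical 1-D model: x[t] = sum_k amps[k] * exp(2j*pi*freqs[k]*t), t = 0..n-1."""
    return [
        sum(a * cmath.exp(2j * cmath.pi * f * t) for f, a in zip(freqs, amps))
        for t in range(n)
    ]


def hankel(x, rows):
    """Hankel matrix H[i][j] = x[i + j] with `rows` rows and len(x) - rows + 1 columns."""
    cols = len(x) - rows + 1
    return [[x[i + j] for j in range(cols)] for i in range(rows)]


def numerical_rank(mat, tol=1e-8):
    """Rank via Gaussian elimination with partial pivoting and a relative tolerance."""
    a = [row[:] for row in mat]  # work on a copy
    m, ncols = len(a), len(a[0])
    scale = max(abs(v) for row in a for v in row) or 1.0
    rank = 0
    for c in range(ncols):
        if rank == m:
            break
        # Choose the largest remaining entry in column c as the pivot.
        pivot = max(range(rank, m), key=lambda r: abs(a[r][c]))
        if abs(a[pivot][c]) <= tol * scale:
            continue  # column is (numerically) dependent on earlier pivots
        a[rank], a[pivot] = a[pivot], a[rank]
        for r in range(rank + 1, m):
            factor = a[r][c] / a[rank][c]
            for k in range(c, ncols):
                a[r][k] -= factor * a[rank][k]
        rank += 1
    return rank


def random_sample(n, m, seed=0):
    """Indices of m time-domain samples drawn uniformly without replacement."""
    return sorted(random.Random(seed).sample(range(n), m))
```

For example, with $r = 3$ frequencies and $n = 32$ samples, `numerical_rank(hankel(x, 16))` returns 3, even though the matrix is $16 \times 17$. Only the entries indexed by `random_sample(32, m)` would be observed in a compressed-sensing setting.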

Authors: Yuxin Chen, Yuejie Chi