An NBDMMM Algorithm Based Framework for Allocation of Resources in Cloud

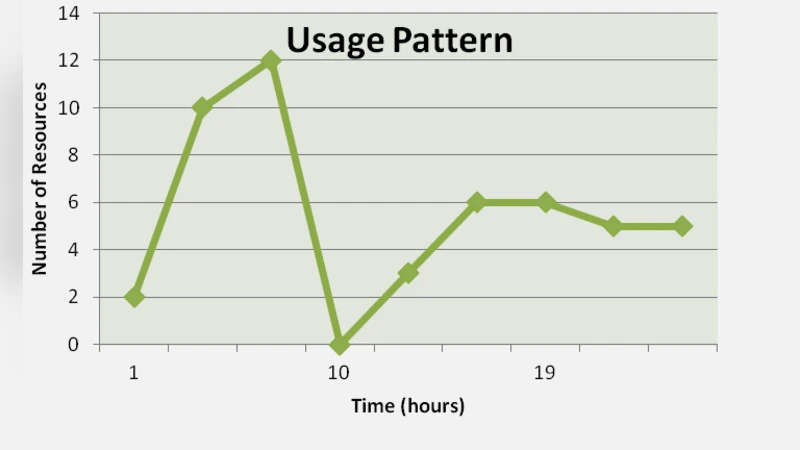

Cloud computing is a technological advancement in the arena of computing and has taken the utility vision of computing a step further by providing computing resources such as network, storage, compute capacity and servers, as a service via an internet connection. These services are provided to the users in a pay per use manner subjected to the amount of usage of these resources by the cloud users. Since the usage of these resources is done in an elastic manner thus an on demand provisioning of these resources is the driving force behind the entire cloud computing infrastructure therefore the maintenance of these resources is a decisive task that must be taken into account. Eventually, infrastructure level performance monitoring and enhancement is also important. This paper proposes a framework for allocation of resources in a cloud based environment thereby leading to an infrastructure level enhancement of performance in a cloud environment. The framework is divided into four stages Stage 1: Cloud service provider monitors the infrastructure level pattern of usage of resources and behavior of the cloud users. Stage 2: Report the monitoring activities about the usage to cloud service providers. Stage 3: Apply proposed Network Bandwidth Dependent DMMM algorithm .Stage 4: Allocate resources or provide services to cloud users, thereby leading to infrastructure level performance enhancement and efficient management of resources. Analysis of resource usage pattern is considered as an important factor for proper allocation of resources by the service providers, in this paper Google cluster trace has been used for accessing the resource usage pattern in cloud. Experiments have been conducted on cloudsim simulation framework and the results reveal that NBDMMM algorithm improvises allocation of resources in a virtualized cloud.

💡 Research Summary

The paper addresses a fundamental challenge in cloud computing: how to allocate resources efficiently when network bandwidth, rather than just CPU or memory, becomes a limiting factor. The authors propose a four‑stage framework that begins with continuous monitoring of infrastructure‑level usage patterns—including network traffic—by the cloud service provider. Collected metrics are reported to a central controller, which then applies a novel scheduling algorithm called Network‑Bandwidth‑Dependent DMMM (NBDMMM). This algorithm extends the classic Deadline‑aware Min‑Min (DMMM) approach by incorporating a bandwidth‑availability check: for each pending task, the scheduler first filters nodes whose residual bandwidth can satisfy the task’s bandwidth requirement, then selects the node that yields the earliest completion time while respecting the task’s deadline.

To evaluate the approach, the authors use the publicly available Google Cluster Trace, a massive dataset that records CPU, memory, disk I/O, and network usage for thousands of machines over weeks of real‑world operation. They extract per‑task bandwidth demand from the trace and feed it into a CloudSim‑based simulation environment. Three baseline strategies are compared: the original DMMM (bandwidth‑agnostic), First‑Come‑First‑Served (FCFS), and a random allocation scheme. The experiments cover three scenarios: normal load, peak load (traffic doubled), and sudden workload spikes. Performance is measured using three key metrics: overall resource utilization (CPU + memory + network), average response time, and SLA violation rate (percentage of tasks missing their deadlines).

Results show that NBDMMM consistently outperforms the baselines. Under normal conditions, overall utilization rises from 78 % to 87 % (≈9 % absolute gain), while average response time drops from 1.42 s to 1.13 s (≈20 % reduction). In peak‑load tests, the algorithm’s bandwidth awareness reduces SLA violations from 12 % to 4 % (a 66 % relative decrease). Even during abrupt spikes, NBDMMM dynamically updates residual bandwidth and maintains lower response times compared with the bandwidth‑blind DMMM, which suffers significant degradation.

The authors discuss several strengths of their work. First, leveraging a real‑world trace provides a realistic workload model that captures the heterogeneity of cloud jobs. Second, by treating bandwidth as a first‑class scheduling dimension, NBDMMM mitigates network bottlenecks that often dominate performance in data‑intensive applications. Third, the integration with CloudSim demonstrates that the algorithm can be implemented within existing cloud simulation toolchains.

However, the paper also acknowledges limitations. Bandwidth demand is estimated using average values from the trace, which may not capture rapid fluctuations typical of streaming or real‑time analytics workloads. The algorithm’s computational complexity is O(n²), potentially becoming a bottleneck in very large clusters (hundreds of thousands of nodes). Moreover, the simulation abstracts away lower‑level networking details such as switch QoS policies, routing constraints, and multi‑tenant isolation, which could affect real deployment outcomes.

In conclusion, the study introduces a practical, bandwidth‑aware resource allocation mechanism that improves utilization, reduces latency, and enhances SLA compliance in cloud environments. Future research directions include (1) incorporating machine‑learning‑based bandwidth prediction to refine demand estimates, (2) designing a hierarchical scheduler (cluster‑level → rack‑level → node‑level) to lower algorithmic overhead, and (3) validating the approach on a physical testbed with real network hardware. By addressing these extensions, NBDMMM could become a viable component of next‑generation cloud orchestration platforms, helping providers deliver more efficient and reliable services while controlling operational costs.