Students Behavioural Analysis in an Online Learning Environment Using Data Mining (ICIAfS)

This research used Educational Data Mining (EDM) techniques to conduct a quantitative analysis of students' interaction with an e-learning system in instructor-led non-graded and graded courses. The exercise is useful for establishing guidelines for a series of online short courses for these students. The access behaviour of a group of 412 students in an e-learning system was analysed, and the students were grouped into clusters with the K-Means clustering method according to their course access log records. The results showed that more than 40% of the student group are passive online learners in both graded and non-graded learning environments, and that a difference in the learning environment could change the online access behaviour of a student group. Clustering divided the student population into five access groups based on their course access behaviour. Among these groups, the least access group (NG 41%, G 42%) and the highest access group (NG 9%, G 5%) could be identified very clearly because their access patterns differ markedly from those of the other groups.

💡 Research Summary

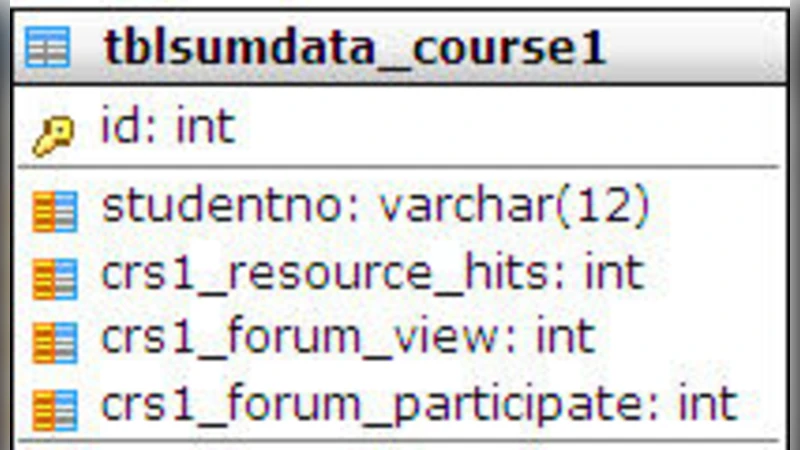

The paper presents a quantitative investigation of how university students interact with an e‑learning platform, employing Educational Data Mining (EDM) techniques to uncover distinct behavioral patterns. A total of 412 students participated in two parallel courses taught by the same instructor: a non‑graded (NG) version and a graded (G) version. For each student, detailed access logs were extracted from the Learning Management System (LMS), capturing timestamps, session durations, types of resources accessed (videos, PDFs, quizzes, etc.), and weekly visit frequencies. After cleaning the raw data, ten derived variables were created, normalized, and examined for multicollinearity.
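The normalization and multicollinearity check described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: the feature names, the toy values, and the 0.9 correlation threshold are all hypothetical, and z-score scaling is only one of several plausible normalizations.

```python
from math import sqrt
from statistics import mean, pstdev


def zscore(column):
    """Standardize one feature column to mean 0 and unit population std."""
    mu, sigma = mean(column), pstdev(column)
    if sigma == 0:
        return [0.0 for _ in column]  # constant column carries no signal
    return [(x - mu) / sigma for x in column]


def pearson(a, b):
    """Pearson correlation of two equal-length columns."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


def collinear_pairs(features, threshold=0.9):
    """List feature pairs whose absolute correlation reaches the threshold."""
    names = sorted(features)
    pairs = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            r = pearson(features[a], features[b])
            if abs(r) >= threshold:
                pairs.append((a, b, round(r, 3)))
    return pairs


# Hypothetical per-student features (one value per student).
features = {
    "weekly_visits": [1, 2, 3, 10, 12],
    "total_logins": [2, 4, 6, 20, 24],  # exactly 2x weekly_visits
    "video_views": [5, 1, 4, 2, 3],
}
scaled = {name: zscore(col) for name, col in features.items()}
flagged = collinear_pairs(scaled)
print(flagged)
```

In this toy data, `total_logins` duplicates the information in `weekly_visits`, so the pair is flagged; one of the two would typically be dropped before clustering so that it is not counted twice in the distance metric.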

The core analytical method was K‑Means clustering. The optimal number of clusters was determined jointly by the elbow method and silhouette scores, leading to the selection of five clusters (k = 5) for both NG and G datasets. Each cluster was characterized by its mean values across the derived variables, allowing the authors to label the groups according to their overall level of platform engagement.
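As a rough sketch of this model-selection step, the following standard-library Python runs Lloyd's K-Means for several values of k and records both the inertia (the quantity inspected in an elbow plot) and the mean silhouette score. The data are synthetic 2-D points, not the study's ten variables, and the restart count and helper names are assumptions made for illustration.

```python
import random
from math import dist


def kmeans(points, k, seed=0, max_iter=100):
    """Lloyd's algorithm; returns (labels, centroids, inertia)."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # random distinct points as initial centroids
    labels = None
    for _ in range(max_iter):
        new_labels = [min(range(k), key=lambda c: dist(p, centroids[c]))
                      for p in points]
        if new_labels == labels:  # assignments stable: converged
            break
        labels = new_labels
        for c in range(k):
            members = [p for p, l in zip(points, labels) if l == c]
            if members:  # an empty cluster keeps its previous centroid
                centroids[c] = tuple(sum(col) / len(members)
                                     for col in zip(*members))
    inertia = sum(dist(p, centroids[l]) ** 2 for p, l in zip(points, labels))
    return labels, centroids, inertia


def silhouette(points, labels):
    """Mean silhouette coefficient; points in singleton clusters score 0."""
    clusters = {}
    for p, l in zip(points, labels):
        clusters.setdefault(l, []).append(p)
    if len(clusters) < 2:
        raise ValueError("need at least two non-empty clusters")
    scores = []
    for p, l in zip(points, labels):
        own = clusters[l]
        if len(own) == 1:
            scores.append(0.0)
            continue
        # a: mean distance to the other members of the point's own cluster
        a = sum(dist(p, q) for q in own) / (len(own) - 1)
        # b: smallest mean distance to the members of any other cluster
        b = min(sum(dist(p, q) for q in other) / len(other)
                for m, other in clusters.items() if m != l)
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)


# Synthetic data: three well-separated blobs of 20 points each.
rng = random.Random(42)
centers = [(0, 0), (10, 0), (5, 9)]
points = [(cx + rng.uniform(-1, 1), cy + rng.uniform(-1, 1))
          for cx, cy in centers for _ in range(20)]

results = {}
for k in range(2, 7):
    # Several random restarts; keep the run with the lowest inertia.
    best = min((kmeans(points, k, seed=s) for s in range(50)),
               key=lambda run: run[2])
    results[k] = (best[2], silhouette(points, best[0]))

best_k = max(results, key=lambda k: results[k][1])
for k, (inertia, sil) in results.items():
    print(k, round(inertia, 1), round(sil, 3))
print("k with highest silhouette:", best_k)
```

The restarts address the sensitivity to initial centroid placement: a single run can settle in a poor local optimum, so the lowest-inertia run is kept for each k. Inertia always tends to fall as k grows, which is why the silhouette score is used alongside it rather than inertia alone.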

The five clusters can be summarized as follows:

- Low‑Access Group (NG 41 %, G 42 %) – Students in this segment exhibit the fewest log‑ins, shortest session times, and minimal resource usage. They are labeled “passive learners” and constitute over 40 % of the entire cohort.

- Low‑to‑Medium Access Group (NG 25 %, G 30 %) – These learners display average visit frequencies and a moderate mix of resource consumption, with varied assignment submission behavior.

- Medium Access Group (NG 15 %, G 12 %) – Characterized by slightly above‑average weekly visits and a higher proportion of video consumption.

- High‑Access Group (NG 9 %, G 5 %) – Students here log in more than twice as often as the overall mean, spend extended periods on the platform, and interact with all types of materials. They are identified as “active learners.”

- Irregular Access Group (NG 10 %, G 11 %) – This segment shows sporadic usage patterns, with bursts of activity concentrated in particular weeks.
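Percentages and labels of this kind can be derived from the cluster assignments by computing each cluster's share of students and its per-feature means. The sketch below assumes standardized features so that averaging across them is meaningful; the two features, the toy rows, and the function names are hypothetical.

```python
from collections import defaultdict


def cluster_profiles(rows, labels):
    """Return {cluster: (share_percent, per-feature means)}."""
    groups = defaultdict(list)
    for row, label in zip(rows, labels):
        groups[label].append(row)
    n = len(rows)
    profiles = {}
    for label, members in groups.items():
        means = tuple(sum(col) / len(members) for col in zip(*members))
        profiles[label] = (100 * len(members) / n, means)
    return profiles


def rank_by_engagement(profiles):
    """Order clusters from lowest to highest average of their feature means."""
    return sorted(profiles,
                  key=lambda c: sum(profiles[c][1]) / len(profiles[c][1]))


# Hypothetical rows: (weekly visits, minutes online) for five students.
rows = [(1, 5), (2, 4), (9, 40), (1, 6), (10, 50)]
labels = [0, 0, 1, 0, 1]  # cluster assignment per student

profiles = cluster_profiles(rows, labels)
order = rank_by_engagement(profiles)
print(profiles)
print("lowest to highest access:", order)
```

The first cluster in `order` would correspond to a "low-access" label and the last to a "high-access" label; an irregular group would need an additional feature, such as week-to-week variance, to be distinguished.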

A key finding is that the same individuals can shift between clusters depending on whether the course is graded. Some students who were passive in the NG environment moved to the high‑access cluster when grades were at stake, illustrating the motivational impact of assessment. Conversely, a subset remained passive across both contexts, suggesting deeper issues such as low intrinsic motivation, external time constraints, or competing responsibilities.
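One way to quantify such shifts is a transition table that counts students by their (NG cluster, G cluster) pair. The sketch below assumes each student has one cluster label per environment; the student IDs, the labels, and the helper names are hypothetical and do not come from the paper.

```python
from collections import Counter


def transition_table(ng_labels, g_labels):
    """Count students by (NG cluster, G cluster), for students present in both."""
    shared = ng_labels.keys() & g_labels.keys()
    return Counter((ng_labels[s], g_labels[s]) for s in shared)


def stay_rate(table, cluster):
    """Share of students in `cluster` under NG who remain in it under G."""
    total = sum(n for (src, _), n in table.items() if src == cluster)
    return table[(cluster, cluster)] / total if total else 0.0


# Hypothetical cluster labels per student in each environment.
ng = {"s1": "low", "s2": "low", "s3": "low", "s4": "high", "s5": "medium"}
g = {"s1": "low", "s2": "high", "s3": "low", "s4": "high", "s5": "low"}

table = transition_table(ng, g)
print(table[("low", "high")])   # students moving from low to high access
print(stay_rate(table, "low"))  # fraction staying passive in both settings
```

Off-diagonal entries such as `("low", "high")` capture students who became more active when grades were at stake, while the diagonal entry `("low", "low")` captures those who stayed passive in both contexts.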

Methodologically, the authors acknowledge the limitations of K‑Means, notably its assumption of spherical clusters and sensitivity to initial centroid placement. They recommend complementing the analysis with density‑based or hierarchical clustering techniques in future work. Moreover, while log data provide a rich picture of observable behavior, they lack direct insight into cognitive or affective states; integrating surveys, interviews, or performance metrics would yield a more holistic understanding.

The study’s implications for instructional design are substantial. By distinguishing passive from active learners, educators can tailor interventions: automated reminders, gamified incentives, or personalized nudges for the former, and enrichment resources or peer‑led activities for the latter. The authors propose extending the research to (1) correlate cluster membership with actual academic outcomes (e.g., exam scores, course completion rates) and (2) develop real‑time recommendation or reinforcement‑learning systems that adaptively promote engagement based on detected behavior patterns.

In conclusion, this work demonstrates that systematic mining of LMS access logs, coupled with robust clustering, can reveal actionable student segments in both graded and non‑graded online learning contexts. The findings provide a data‑driven foundation for designing differentiated support strategies aimed at increasing participation, reducing dropout, and ultimately improving learning outcomes in digital education environments.