To Use or Not to Use: Graphics Processing Units for Pattern Matching Algorithms

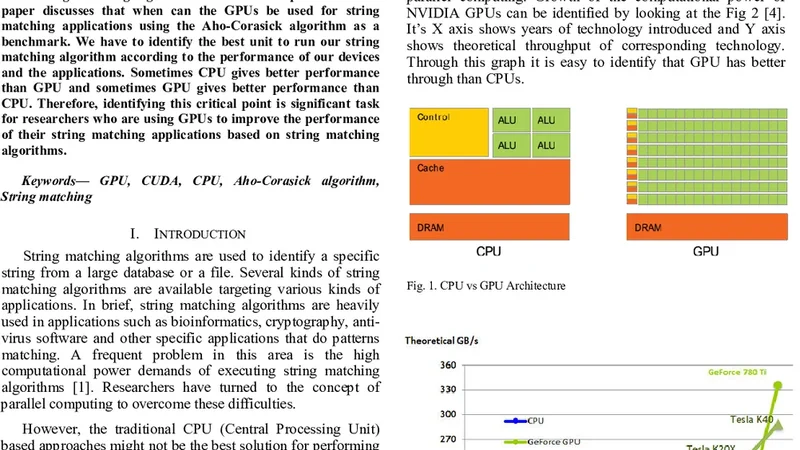

String matching is an important part of today’s computer applications, and the Aho-Corasick algorithm is one of the main string matching algorithms used for it. This paper discusses when GPUs should be used for string matching applications, using the Aho-Corasick algorithm as a benchmark. We have to identify the best processing unit on which to run our string matching algorithm according to the performance of our devices and the characteristics of the application: sometimes the CPU outperforms the GPU, and sometimes the GPU outperforms the CPU. Identifying this critical point is therefore a significant task for researchers who use GPUs to improve the performance of their string matching applications.

💡 Research Summary

The paper investigates the conditions under which graphics processing units (GPUs) outperform central processing units (CPUs) for the Aho‑Corasick multiple‑pattern string‑matching algorithm, which is widely used in security scanning, log analysis, and bioinformatics. The authors implement two highly optimized versions of the algorithm: a CPU version that exploits SIMD instructions and multi‑threading, and a CUDA‑based GPU version that maps independent text chunks to thread blocks and stores the trie both in global memory and in shared memory for faster state transitions.
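The automaton that both implementations accelerate fits in a few dozen lines. The Python below is a minimal, CPU-only sketch, not the authors' code: it builds the trie, computes failure links breadth-first, and then scans the text in a single pass. The `search_chunked` method is a hypothetical helper that imitates the GPU strategy of handing independent text chunks to separate workers; it assumes each chunk is extended by (longest pattern length minus 1) symbols so that matches crossing a chunk boundary are not lost, a detail the summary does not spell out.

```python
from collections import deque


class AhoCorasick:
    """Minimal CPU sketch of the Aho-Corasick automaton (not the authors' code).

    Patterns are assumed non-empty; str and bytes inputs both work.
    """

    def __init__(self, patterns):
        patterns = list(patterns)
        self.goto = [{}]   # goto[state][symbol] -> child state (trie edges)
        self.fail = [0]    # failure link of each state; the root is state 0
        self.out = [[]]    # patterns recognised when this state is entered
        self.max_len = max((len(p) for p in patterns), default=1)
        for pat in patterns:
            self._insert(pat)
        self._build_failure_links()

    def _insert(self, pat):
        state = 0
        for sym in pat:
            child = self.goto[state].get(sym)
            if child is None:
                child = len(self.goto)
                self.goto.append({})
                self.fail.append(0)
                self.out.append([])
                self.goto[state][sym] = child
            state = child
        self.out[state].append(pat)

    def _build_failure_links(self):
        # Breadth-first order guarantees that every shallower state already
        # has its final failure link and output list when we need them.
        queue = deque(self.goto[0].values())  # depth-1 states fail to the root
        while queue:
            state = queue.popleft()
            for sym, child in self.goto[state].items():
                queue.append(child)
                f = self.fail[state]
                while f and sym not in self.goto[f]:
                    f = self.fail[f]
                self.fail[child] = self.goto[f].get(sym, 0)
                # Also report patterns that are suffixes of this state's string.
                self.out[child] = self.out[child] + self.out[self.fail[child]]

    def search(self, text):
        """Return (start_index, pattern) for every occurrence, in one pass."""
        matches = []
        state = 0
        for i, sym in enumerate(text):
            while state and sym not in self.goto[state]:
                state = self.fail[state]
            state = self.goto[state].get(sym, 0)
            for pat in self.out[state]:
                matches.append((i - len(pat) + 1, pat))
        return matches

    def search_chunked(self, text, chunk_size):
        """Hypothetical emulation of independent per-chunk workers.

        Each chunk restarts at the root and reads max_len - 1 extra symbols,
        so boundary-straddling matches are found; a match is kept only by the
        chunk in which it starts, so nothing is reported twice.
        """
        overlap = self.max_len - 1
        matches = []
        for start in range(0, len(text), chunk_size):
            window = text[start:start + chunk_size + overlap]
            for pos, pat in self.search(window):
                if pos < chunk_size:
                    matches.append((start + pos, pat))
        return matches


print(AhoCorasick(["he", "she", "his", "hers"]).search("ushers"))
# [(1, 'she'), (2, 'he'), (2, 'hers')]
```

Because every chunk restarts from the root state, chunks carry no state dependency on one another, which is what allows them to be processed in parallel. A CUDA version would more likely store transitions in a flat array than in per-state dictionaries; the summary does not describe how the authors lay out the trie.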

A comprehensive experimental campaign is conducted on a modern Intel Xeon 24‑core processor and an NVIDIA RTX 3080 GPU. Input datasets span from 10 MB to 5 GB and include real‑world logs, web‑crawled pages, and genomic sequences. Pattern sets are varied in size (100, 1 000, 10 000 patterns) and average length (4, 8, 16 bytes) to capture a wide range of realistic workloads.

Key findings are as follows:

- Data‑size threshold – When the total text size exceeds roughly 500 MB, the GPU begins to show a clear advantage, achieving a 2.5‑3.8× speed‑up over the CPU for the largest (5 GB) inputs. Below this threshold, the CPU remains marginally faster because the overhead of transferring data to the GPU dominates.

- Pattern‑set characteristics – Small pattern collections (≤ 1 000 patterns) with very short strings (≤ 4 bytes) cause the trie construction phase to dominate execution time. In these cases the CPU’s low‑latency cache hierarchy yields 1.1‑1.3× better performance than the GPU. Conversely, large collections (≥ 10 000 patterns) with longer average lengths (≥ 8 bytes) benefit from the GPU’s massive parallelism; the GPU still achieves a net speed‑up even after accounting for trie‑building costs.

- Memory transfer overhead – Host‑to‑device transfers consume 15‑20 % of total runtime for the tested workloads. The authors find that batch sizes of 64‑256 MB minimize the number of transfers while keeping the GPU cores fully occupied. Excessively small batches degrade occupancy, whereas overly large batches exceed GPU memory and force additional transfers, eroding the performance gain.

- Shared‑memory caching – Caching frequently accessed trie nodes in shared memory improves throughput by an average of 12 %. However, if the trie exceeds the shared‑memory capacity (≈ 48 KB per thread block by default on the RTX 3080), cache spills cause a 5‑8 % slowdown, indicating that very large pattern sets require careful partitioning of the trie.
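The memory figures in these findings lend themselves to quick back-of-the-envelope checks. The sketch below assumes the trie is flattened into a dense transition table with one 4-byte entry per (state, byte value) pair over a 256-symbol alphabet, which the summary does not confirm; all helper names are hypothetical. It also splits the input into fixed-size host-to-device batches that overlap by (longest pattern length minus 1) bytes, so that no match is cut in half at a batch boundary.

```python
# Hypothetical sizing helpers. The dense-table layout (one 4-byte entry per
# state per byte value) is an assumption, not the paper's documented format.
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB


def max_trie_states(pattern_count, avg_len):
    """Upper bound on trie states: the root plus one state per pattern symbol.

    Shared prefixes make the real count smaller.
    """
    return 1 + pattern_count * avg_len


def dense_table_bytes(states, alphabet=256, entry_bytes=4):
    """Bytes needed for a flattened states x alphabet transition table."""
    return states * alphabet * entry_bytes


def states_fitting(capacity_bytes, alphabet=256, entry_bytes=4):
    """Number of complete table rows (states) that fit in a memory budget."""
    return capacity_bytes // (alphabet * entry_bytes)


def plan_batches(total_bytes, batch_bytes, max_pattern_len):
    """Split the input into (offset, length) host-to-device batches.

    Each batch carries max_pattern_len - 1 extra bytes from the next one, so a
    match crossing a batch boundary is still seen whole by one batch. Matches
    that start inside that overlap belong to the next batch and should be
    discarded by the current one to avoid double counting.
    """
    overlap = max_pattern_len - 1
    batches = []
    for start in range(0, total_bytes, batch_bytes):
        batches.append((start, min(batch_bytes + overlap, total_bytes - start)))
    return batches


print(states_fitting(48 * KIB))                               # 48
print(dense_table_bytes(max_trie_states(10_000, 8)) / MIB)    # 78.1259765625
print(len(plan_batches(5 * GIB, 256 * MIB, 16)))              # 20
```

Under these assumptions a 48 KB budget holds only 48 full table rows, while 10 000 patterns of 8 bytes can produce up to 80 001 states (about 78 MiB as a dense table). Only a small, frequently visited part of the automaton can therefore live in shared memory, which is consistent with the need to partition the trie for large pattern sets.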

From these observations the authors derive concrete decision boundaries: a workload with text ≥ 500 MB, pattern count ≥ 5 000, and average pattern length ≥ 8 bytes should be assigned to the GPU; workloads below these thresholds are better served by the CPU. They also propose a hybrid execution model where the input is split according to these criteria, allowing simultaneous CPU and GPU processing and yielding a 30‑40 % reduction in overall execution time for mixed workloads.
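The decision boundaries translate directly into a routing rule. The sketch below encodes the three reported thresholds (reading "500 MB" as 500 × 10^6 bytes, which is an assumption) and sends a job to the GPU only when all three are met; for a mixed workload the rule can be applied job by job. For the hybrid mode it uses a throughput-proportional split, a common load-balancing heuristic substituted here for illustration; it is not necessarily how the authors partition the input, and `cpu_rate` and `gpu_rate` are hypothetical measured throughputs.

```python
from dataclasses import dataclass

# Thresholds reported in the summary; "500 MB" is read as 500 * 10**6 bytes
# (an assumption, since the unit convention is not stated).
TEXT_BYTES_MIN = 500 * 10**6
PATTERN_COUNT_MIN = 5_000
AVG_PATTERN_LEN_MIN = 8


@dataclass
class Workload:
    text_bytes: int
    pattern_count: int
    avg_pattern_len: float


def choose_device(w: Workload) -> str:
    """Route to the GPU only when all three thresholds are met."""
    if (w.text_bytes >= TEXT_BYTES_MIN
            and w.pattern_count >= PATTERN_COUNT_MIN
            and w.avg_pattern_len >= AVG_PATTERN_LEN_MIN):
        return "gpu"
    return "cpu"


def hybrid_split(total_bytes: int, cpu_rate: float, gpu_rate: float) -> tuple[int, int]:
    """Throughput-proportional split (an assumed heuristic, not the paper's).

    cpu_rate and gpu_rate are hypothetical measured throughputs in bytes/s;
    giving each device a share proportional to its rate makes both finish at
    about the same time. Returns (cpu_bytes, gpu_bytes).
    """
    gpu_bytes = round(total_bytes * gpu_rate / (cpu_rate + gpu_rate))
    return total_bytes - gpu_bytes, gpu_bytes


print(choose_device(Workload(2 * 10**9, 10_000, 12)))    # gpu
print(choose_device(Workload(100 * 10**6, 10_000, 12)))  # cpu
print(hybrid_split(1000, 1.0, 3.0))                      # (250, 750)
```

For instance, a 2 GB scan against 10 000 patterns averaging 12 bytes is routed to the GPU, while the same pattern set over a 100 MB log stays on the CPU. With a GPU three times faster than the CPU, the proportional split gives the CPU a quarter of the input and the GPU the remaining three quarters.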

The paper acknowledges several limitations. All experiments were performed on a single GPU and a single CPU node, so scalability to multi‑GPU clusters or heterogeneous accelerators (e.g., FPGAs) remains unexplored. Additionally, the study focuses exclusively on Aho‑Corasick; other popular algorithms such as Wu‑Manber or Shift‑Or may exhibit different performance characteristics on GPUs.

Future work outlined by the authors includes: (a) developing an automatic tuning framework that profiles input characteristics at runtime and selects the optimal execution device; (b) extending the evaluation to multi‑GPU environments for ultra‑large datasets (tens of gigabytes); and (c) benchmarking alternative string‑matching algorithms to build a comprehensive algorithm‑hardware suitability matrix.

In summary, the paper provides a rigorous, data‑driven analysis that dispels the simplistic notion that GPUs are always faster for string matching. By quantifying the impact of text size, pattern set size, pattern length, and memory‑transfer costs, it offers actionable guidelines for researchers and engineers seeking to harness GPU acceleration for Aho‑Corasick‑based applications. The derived thresholds and hybrid execution strategies can be directly incorporated into production systems to achieve measurable performance improvements.
