Decomposition of Big Tensors With Low Multilinear Rank

Tensor decompositions are promising tools for big data analytics as they bring multiple modes and aspects of data to a unified framework, which allows us to discover complex internal structures and correlations of data. Unfortunately, most existing approaches are not designed to meet the major challenges posed by big data analytics. This paper attempts to improve the scalability of tensor decompositions and provides two contributions: a flexible and fast algorithm for the CP decomposition (FFCP) of tensors based on their Tucker compression, and a distributed randomized Tucker decomposition approach for arbitrarily big tensors with relatively low multilinear rank. These two algorithms can deal with huge tensors, even if they are dense. Extensive simulations provide empirical evidence of the validity and efficiency of the proposed algorithms.

💡 Research Summary

This paper addresses the scalability challenges of tensor decompositions for big data by exploiting the assumption that the target tensor has a relatively low multilinear rank. Two algorithms are introduced. The first, Flexible Fast CP (FFCP), performs a Tucker compression of the original tensor, Y ≈ ⟦G;U⁽¹⁾,…,U⁽ᴺ⁾⟧, and then carries out the CP decomposition directly on this compressed representation rather than on the small core tensor alone. The dominant cost in first‑order CP algorithms is the product Y₍ₙ₎B⁽ⁿ⁾, where Y₍ₙ₎ is the mode‑n unfolding of Y and B⁽ⁿ⁾ = ⊙_{p≠n}A⁽ᵖ⁾ is the Khatri–Rao product of the other CP factor matrices. Replacing Y by its Tucker approximation, this product becomes U⁽ⁿ⁾G₍ₙ₎(⊙_{p≠n}V⁽ᵖ⁾) with V⁽ᵖ⁾ = U⁽ᵖ⁾ᵀA⁽ᵖ⁾, which reduces the cost from O(R·∏ₚIₚ) to O(R(∑ₚIₚRₚ + ∏ₚRₚ)), where R is the CP rank and Rₙ the Tucker rank of mode n. This reduction lets first‑order CP algorithms (ALS, multiplicative updates, HALS) incorporate additional constraints such as non‑negativity and sparsity at virtually no extra cost.

The second contribution is a distributed randomized Tucker decomposition. Each mode is projected onto a random matrix Ω⁽ⁿ⁾ of size Iₙ×Kₙ, with Kₙ slightly larger than the target rank. By computing the n‑mode products Y×ₙΩ⁽ⁿ⁾ locally on distributed nodes, a much smaller “sketch” tensor S is formed. Performing HOSVD on S yields approximate factor matrices Ũ⁽ⁿ⁾, which are then used to obtain a compressed core G̃ = Y×₁Ũ⁽¹⁾ᵀ⋯×_NŨ⁽ᴺ⁾ᵀ. FFCP is then applied to ⟦G̃;Ũ⁽¹⁾,…,Ũ⁽ᴺ⁾⟧, completing the CP decomposition of the original large tensor.
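The identity behind FFCP's speed‑up can be checked numerically. The following is a minimal NumPy sketch, not the authors' implementation: `mttkrp_full` and `mttkrp_compressed` are hypothetical helper names, unfoldings follow NumPy's C order (the identity holds for any ordering as long as unfoldings and Khatri–Rao products agree), and the full tensor is formed only so the two results can be compared.

```python
import numpy as np
from functools import reduce


def unfold(T, mode):
    """Mode-n unfolding in C order: rows indexed by `mode`, columns by the other modes."""
    return np.moveaxis(T, mode, 0).reshape(T.shape[mode], -1)


def khatri_rao(mats):
    """Column-wise Kronecker product, first matrix varying slowest (matches `unfold`)."""
    return reduce(lambda X, Z: np.einsum("ir,jr->ijr", X, Z).reshape(-1, X.shape[1]), mats)


def mttkrp_full(Y, A, n):
    """Y_(n) B^(n), B^(n) = Khatri-Rao of A^(p) for p != n: about R * prod(I_p) flops."""
    return unfold(Y, n) @ khatri_rao([A[p] for p in range(Y.ndim) if p != n])


def mttkrp_compressed(G, U, A, n):
    """Same product from the Tucker model Y = [[G; U^(1), ..., U^(N)]]:
    U^(n) G_(n) (Khatri-Rao of V^(p)), with V^(p) = U^(p)^T A^(p) of size R_p x R."""
    V = [U[p].T @ A[p] for p in range(G.ndim) if p != n]
    return U[n] @ (unfold(G, n) @ khatri_rao(V))


rng = np.random.default_rng(0)
I, ranks, R = (40, 35, 30), (5, 4, 3), 6  # tensor size, Tucker ranks R_n, CP rank R
G = rng.standard_normal(ranks)
U = [np.linalg.qr(rng.standard_normal((i, r)))[0] for i, r in zip(I, ranks)]
Y = np.einsum("abc,ia,jb,kc->ijk", G, *U)  # full tensor, built only for the comparison
A = [rng.standard_normal((i, R)) for i in I]  # current CP factor estimates

for n in range(3):
    print(n, np.allclose(mttkrp_full(Y, A, n), mttkrp_compressed(G, U, A, n)))  # True
```

In an ALS sweep, this Iₙ×R result is then multiplied by the inverse of the R×R matrix ⊛_{p≠n}A⁽ᵖ⁾ᵀA⁽ᵖ⁾ (Hadamard product of Gram matrices), so no step of the update touches the full tensor.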

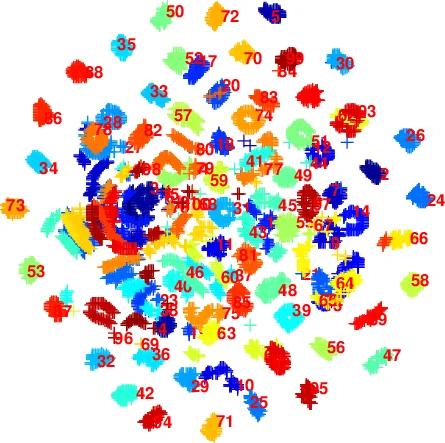

Both algorithms are validated on synthetic tensors of varying order, size, and noise level, as well as on real‑world datasets including color images, video clips, and multi‑channel EEG recordings. Compared with state‑of‑the‑art scalable methods such as ParCube, GigaTensor, and PARACOMP, FFCP achieves a 5–10× speed‑up while delivering comparable or higher fit values (often above 0.95). The randomized Tucker approach can process dense tensors that would otherwise exceed the memory of a single machine; it scales linearly with the number of compute nodes and requires only modest communication of the random projection matrices.
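The distributed randomized Tucker step can be illustrated with "nodes" simulated as slabs of the tensor along its first mode. This is a minimal NumPy sketch under stated assumptions, not the paper's implementation: the helper names are hypothetical, and the sketch for mode n multiplies every other mode p by Ω⁽ᵖ⁾ᵀ, with Gaussian Ω⁽ᵖ⁾ of size Iₚ×Kₚ and Kₚ = Rₚ + `oversample` (a Kronecker‑structured randomized range finder), which is one plausible reading of the sketching step described above.

```python
import numpy as np


def mode_product(T, M, mode):
    """n-mode product T x_mode M, with M of shape (J, T.shape[mode])."""
    out = np.tensordot(M, T, axes=(1, mode))  # new axis of size J comes first
    return np.moveaxis(out, 0, mode)          # move it back to position `mode`


def unfold(T, mode):
    """Mode-n unfolding: rows indexed by `mode`, columns by all other modes."""
    return np.moveaxis(T, mode, 0).reshape(T.shape[mode], -1)


def local_sketch(slab, start, omegas, n):
    """Sketch of one slab Y[start:start+len(slab)], as a single node would compute it."""
    S = slab
    for p, Om in enumerate(omegas):
        if p == n:
            continue  # mode n keeps its full size; its range is what we want
        if p == 0:
            Om = Om[start:start + slab.shape[0]]  # node needs only its rows of Omega for mode 0
        S = mode_product(S, Om.T, p)
    return S


def distributed_rand_tucker(slabs, ranks, oversample=5, seed=0):
    """Randomized Tucker of Y = concatenate(slabs, axis=0); each slab mimics a node."""
    rng = np.random.default_rng(seed)
    shape = (sum(s.shape[0] for s in slabs),) + slabs[0].shape[1:]
    # One Gaussian test matrix of size I_p x K_p per mode, K_p = R_p + oversample
    omegas = [rng.standard_normal((i, r + oversample)) for i, r in zip(shape, ranks)]
    starts = np.cumsum([0] + [s.shape[0] for s in slabs[:-1]])
    factors = []
    for n, r in enumerate(ranks):
        parts = [local_sketch(s, st, omegas, n) for s, st in zip(slabs, starts)]
        # Mode 0 is split across nodes, so its sketches stack; otherwise partial sums add up
        S = np.concatenate(parts, axis=0) if n == 0 else sum(parts)
        Uf, _, _ = np.linalg.svd(unfold(S, n), full_matrices=False)
        factors.append(Uf[:, :r])  # leading left singular vectors of the sketch
    # Core G = Y x_1 U1^T ... x_N UN^T, accumulated slab by slab over mode 0
    core = 0
    for s, st in zip(slabs, starts):
        Gs = mode_product(s, factors[0][st:st + s.shape[0]].T, 0)
        for p in range(1, len(shape)):
            Gs = mode_product(Gs, factors[p].T, p)
        core = core + Gs
    return core, factors


rng = np.random.default_rng(1)
shape, ranks = (30, 25, 20), (3, 4, 2)
Y = rng.standard_normal(ranks)  # random core ...
for p, (i, r) in enumerate(zip(shape, ranks)):
    Y = mode_product(Y, rng.standard_normal((i, r)), p)  # ... gives multilinear rank (3, 4, 2)
slabs = np.array_split(Y, 3, axis=0)  # three simulated nodes
core, factors = distributed_rand_tucker(slabs, ranks)
Y_hat = core
for p, Uf in enumerate(factors):
    Y_hat = mode_product(Y_hat, Uf, p)
print(np.linalg.norm(Y - Y_hat) / np.linalg.norm(Y))  # round-off level for this exact-rank Y
```

Each slab touches only its own first‑mode rows of Ω and Ũ, so per‑node work scales with the slab size; the oversampling of 5 is an arbitrary choice here, consistent with the point that selecting Kₙ remains heuristic.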

The paper also discusses practical aspects: the choice of compression ranks Rₙ and sketch dimensions Kₙ, the impact of approximation error on downstream CP accuracy, and the ease of incorporating constraints. Limitations are acknowledged: if the low‑multilinear‑rank assumption is violated, compression loss may degrade results; selecting Kₙ remains heuristic; and simultaneous non‑negativity and sparsity constraints can slow convergence.
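Because the compressed updates need only Y₍ₙ₎B⁽ⁿ⁾ and the small Gram matrices A⁽ᵖ⁾ᵀA⁽ᵖ⁾, a constrained method such as HALS can run entirely on the Tucker representation. The sketch below is a minimal NumPy illustration, not the authors' code: `ntf_hals_compressed` is a hypothetical name, the compression in the usage is a plain 3‑way truncated HOSVD with known ranks, and the optional `l1` term assumes a ½‖·‖² + l1·‖A⁽ⁿ⁾‖₁ objective as the sparsity penalty.

```python
import numpy as np
from functools import reduce


def unfold(T, mode):
    """Mode-n unfolding in C order (rows indexed by `mode`)."""
    return np.moveaxis(T, mode, 0).reshape(T.shape[mode], -1)


def khatri_rao(mats):
    """Column-wise Kronecker product, first matrix varying slowest (matches `unfold`)."""
    return reduce(lambda X, Z: np.einsum("ir,jr->ijr", X, Z).reshape(-1, X.shape[1]), mats)


def ntf_hals_compressed(G, U, R, n_iter=100, l1=0.0, eps=1e-12, seed=0):
    """Nonnegative CP of the Tucker tensor [[G; U]] by HALS, never forming the full tensor."""
    rng = np.random.default_rng(seed)
    N = G.ndim
    A = [rng.random((u.shape[0], R)) for u in U]  # positive random initialization
    for _ in range(n_iter):
        for n in range(N):
            others = [p for p in range(N) if p != n]
            V = [U[p].T @ A[p] for p in others]
            P = U[n] @ (unfold(G, n) @ khatri_rao(V))  # Y_(n) B^(n) from compressed data
            Q = reduce(np.multiply, [A[p].T @ A[p] for p in others])  # B^(n)T B^(n), R x R
            for r in range(R):
                # Exact minimizer for column r; l1 > 0 shifts it toward zero (sparsity)
                a = A[n][:, r] + (P[:, r] - A[n] @ Q[:, r] - l1) / Q[r, r]
                A[n][:, r] = np.maximum(eps, a)  # clip at eps: nonnegative, nonzero columns
    return A


def rel_err(Y, A):
    return np.linalg.norm(Y - np.einsum("ir,jr,kr->ijk", *A)) / np.linalg.norm(Y)


rng = np.random.default_rng(3)
A_true = [rng.random((i, 3)) for i in (20, 18, 16)]
Y = np.einsum("ir,jr,kr->ijk", *A_true)  # nonnegative, multilinear rank (3, 3, 3)
U = [np.linalg.svd(unfold(Y, n), full_matrices=False)[0][:, :3] for n in range(3)]
G = np.einsum("ijk,ia,jb,kc->abc", Y, *U)  # truncated HOSVD core (exact here)
for it in (1, 10, 100):
    A = ntf_hals_compressed(G, U, R=3, n_iter=it)
    print(it, rel_err(Y, A))  # non-increasing in the number of sweeps
```

Compared with the unconstrained update, the only additions are the per‑column clipping and the optional `l1` shift; the costly products are the same compressed ones.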

Overall, the work presents a coherent framework that couples Tucker compression with CP factorization, delivering a highly scalable, memory‑efficient solution for big‑tensor analytics. It opens avenues for further research on adaptive rank estimation, streaming tensor processing, and integration with deep learning pipelines.
