Does it matter if you answer slowly?

In this paper, we have analyzed item response times measured on a large-scale, unspeeded, low-stakes test for primary-school students. We have demonstrated a significant difference in response time between boys and girls, as well as a difference in response time between correct and incorrect answers on this test. We have also demonstrated the existence of a warm-up effect for this test. The results show that responses given by girls exhibit a much greater warm-up effect, and that this difference appears to be the most important cause of the difference at the test level.

💡 Research Summary

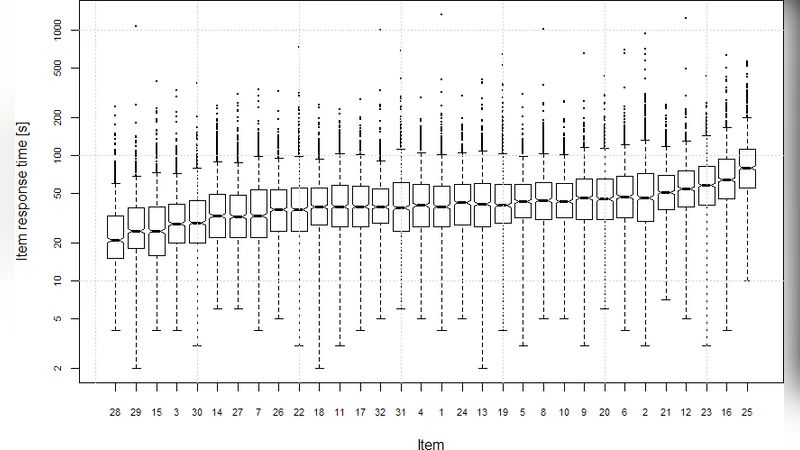

The paper presents a large‑scale empirical investigation of response‑time (RT) data collected from an unspeeded, low‑stakes assessment administered to primary‑school students. Using a dataset of over 45,000 fourth‑ and fifth‑grade pupils from across the nation, the authors examine how gender, answer correctness, and the “warm‑up” effect at the beginning of the test influence overall performance. After cleaning the data (removing missing responses, outliers below 0.5 s or above 300 s, and applying a log transformation to address right‑skewness), they conduct a series of statistical analyses.
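The cleaning step described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' pipeline: the function name `clean_response_times`, the use of `None` to mark a missing response, and the choice of the natural logarithm are assumptions. Only the 0.5 s and 300 s cut-offs come from the summary.

```python
import math

# Cut-offs quoted in the summary; values outside this range are treated as outliers.
MIN_RT_S = 0.5
MAX_RT_S = 300.0


def clean_response_times(rts):
    """Drop missing and out-of-range response times, then log-transform the rest.

    `rts` is a list of response times in seconds, with None marking a
    missing response (an assumed encoding).
    """
    cleaned = []
    for rt in rts:
        if rt is None:
            continue  # missing response
        if rt < MIN_RT_S or rt > MAX_RT_S:
            continue  # implausibly fast or slow
        # Natural log is assumed here; it compresses the long right tail.
        cleaned.append(math.log(rt))
    return cleaned
```

Applied to `[None, 0.2, 1.0, 400.0]`, the function keeps only the 1.0 s response and returns its logarithm.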

First, an independent‑samples t‑test shows that girls take significantly longer to answer items than boys (mean RT 12.4 s vs. 10.6 s, p < 0.001, Cohen’s d ≈ 0.38). This suggests a gender‑related difference in processing speed or a more cautious response style among girls. Second, logistic regression shows that each additional second of RT is associated with about 13 % lower odds of a correct answer (OR = 0.87, p < 0.01). On average, correct responses are 0.9 s faster than incorrect ones, indicating that higher accuracy is associated with more efficient cognition.
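The two effect sizes quoted above can be made concrete with a short sketch. It assumes the conventional pooled-standard-deviation form of Cohen's d and the usual reading of an odds ratio as a multiplicative change in odds per unit of the predictor; the summary does not state which variant the authors used or whether d was computed on raw or log-transformed times. The function names are hypothetical.

```python
import math
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / math.sqrt(pooled_var)


def odds_change_percent(odds_ratio, extra_seconds=1.0):
    """Percent change in the odds of a correct answer after `extra_seconds`.

    An odds ratio applies multiplicatively per unit, so k extra seconds
    scale the odds by odds_ratio ** k.
    """
    return (odds_ratio ** extra_seconds - 1.0) * 100.0
```

With the reported OR of 0.87, `odds_change_percent(0.87)` gives roughly -13, matching the 13 % figure in the text. Because the effect compounds, five extra seconds would correspond to about half the original odds under this model.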

Third, the authors trace RT across the sequence of 60 items and identify a pronounced “warm‑up” effect: the first ten items show a steep decline in RT as students acclimate to the testing environment. The decline is markedly larger for girls (average 2.3 s reduction over the first five items) than for boys (1.1 s reduction), a gender‑by‑position interaction that reaches statistical significance (repeated‑measures ANOVA, p < 0.01).
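One simple way to quantify a warm-up effect of this kind is to average RT at each item position and measure how far it falls over an initial window. The sketch below assumes that definition (mean RT at the first position minus mean RT at the last position of the window); the summary does not say exactly how the authors computed their reductions, and the function names and data layout are hypothetical.

```python
from statistics import mean


def mean_rt_by_position(responses):
    """Average RT at each item position.

    `responses` is a list of per-student RT lists, each ordered by the
    position of the item in the test (an assumed layout).
    """
    n_items = min(len(student) for student in responses)
    return [mean(student[i] for student in responses) for i in range(n_items)]


def warmup_reduction(position_means, window=5):
    """Drop in mean RT from the first item to the last item of the window."""
    return position_means[0] - position_means[window - 1]


def warmup_gap(group_a, group_b, window=5):
    """Difference in warm-up reduction between two groups of students."""
    return (
        warmup_reduction(mean_rt_by_position(group_a), window)
        - warmup_reduction(mean_rt_by_position(group_b), window)
    )
```

Under this definition, the reported values of 2.3 s for girls and 1.1 s for boys would give a gap of 1.2 s between the groups.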

Finally, a structural equation model (SEM) links gender, warm‑up magnitude, and total test score. The model fits well (CFI = 0.96, RMSEA = 0.032) and indicates that the warm‑up effect mediates 42 % of the gender gap in scores. In other words, the larger initial slowdown experienced by girls accounts for a substantial portion of their lower average achievement on this unspeeded test.
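A "proportion mediated" figure such as the 42 % above is commonly derived from path coefficients as the indirect effect divided by the total effect. The sketch below assumes that standard single-mediator formulation; the summary does not report the path coefficients or confirm that this is how the authors computed the value, so the function name and the example numbers are purely illustrative.

```python
def proportion_mediated(a, b, direct):
    """Share of the total effect that runs through the mediator.

    a:      path from predictor (gender) to mediator (warm-up magnitude)
    b:      path from mediator to outcome (total score)
    direct: remaining direct path from predictor to outcome
    """
    indirect = a * b
    total = indirect + direct
    if total == 0:
        raise ValueError("total effect is zero; proportion is undefined")
    return indirect / total


# Hypothetical coefficients, chosen only to illustrate the arithmetic.
example = proportion_mediated(a=0.5, b=0.4, direct=0.3)
```

With these made-up coefficients the indirect effect is 0.2 out of a total of 0.5, so 40 % of the effect would be mediated. The ratio is only easy to interpret when the indirect and direct effects have the same sign.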

The authors discuss practical implications. Test designers could mitigate the warm‑up impact by placing a few low‑difficulty, familiar items at the start or by providing a brief practice block, thereby reducing the need for students to “warm up” during the scored portion. Teachers should be aware of gender‑related timing patterns and consider giving extra acclimation time to girls, especially in low‑stakes contexts where speed is not directly rewarded. Moreover, incorporating RT as a supplemental metric in assessment reporting can reveal hidden cognitive or motivational differences that raw scores alone conceal.

In conclusion, the study demonstrates that response time is not a neutral by‑product of testing but a meaningful indicator that interacts with gender, correctness, and the early phase of the assessment. The warm‑up effect emerges as the most influential factor driving observed gender differences in overall performance. Future work is recommended to replicate these findings in high‑stakes, time‑limited examinations and across broader age ranges to determine the generality of the observed patterns.
