Learning Temporal Dependencies in Data Using a DBN-BLSTM

Since the advent of deep learning, it has been used to solve various problems using many different architectures. The application of such deep architectures to auditory data is also not uncommon. However, these architectures do not always adequately consider the temporal dependencies in data. We thus propose a new generic architecture called the Deep Belief Network - Bidirectional Long Short-Term Memory (DBN-BLSTM) network that models sequences by keeping track of the temporal information while enabling deep representations in the data. We demonstrate this new architecture by applying it to the task of music generation and obtain state-of-the-art results.

💡 Research Summary

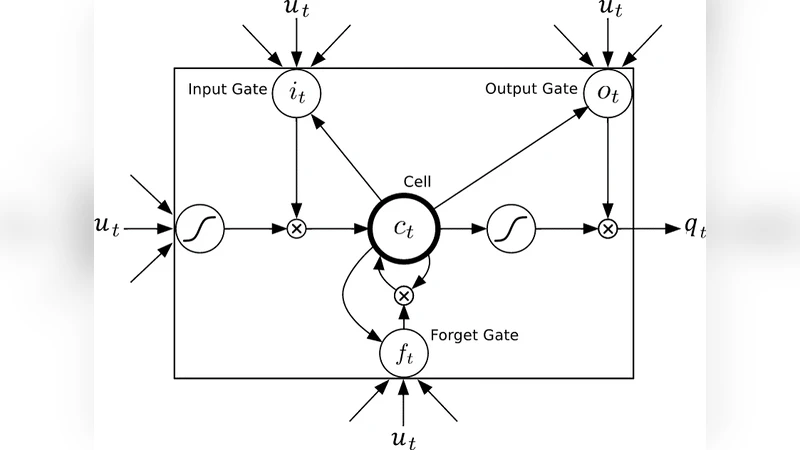

The paper introduces a novel deep learning architecture called DBN‑BLSTM, which integrates a Deep Belief Network (DBN) with a Bidirectional Long Short‑Term Memory (BLSTM) network to jointly capture hierarchical representations and long‑range temporal dependencies. The DBN component consists of stacked Restricted Boltzmann Machines (RBMs) that are greedily pre‑trained using contrastive divergence, providing a deep probabilistic model of the data. However, traditional DBNs treat each input as independent and ignore sequential structure. To address this, the authors connect the DBN to a BLSTM that processes the same input sequence in both forward and backward directions, thereby exposing each time step to full past and future context.
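The greedy pre-training step mentioned above can be illustrated with one contrastive-divergence (CD-1) update for a single binary RBM. This is a minimal sketch, not the authors' code: the function names, the learning rate, and the use of plain Python lists are all assumptions made for illustration. A DBN would apply this kind of update layer by layer, feeding each trained layer's hidden activations to the next RBM as its visible data.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def sample(probs, rng):
    # Draw independent Bernoulli samples from a list of probabilities.
    return [1 if rng.random() < p else 0 for p in probs]

def hidden_probs(v, W, b_h):
    # W[j][i] connects hidden unit j to visible unit i.
    return [sigmoid(b_h[j] + sum(W[j][i] * v[i] for i in range(len(v))))
            for j in range(len(b_h))]

def visible_probs(h, W, b_v):
    return [sigmoid(b_v[i] + sum(W[j][i] * h[j] for j in range(len(h))))
            for i in range(len(b_v))]

def cd1_update(v0, W, b_v, b_h, lr, rng):
    """One CD-1 step: updates W, b_v, b_h in place, returns the reconstruction."""
    ph0 = hidden_probs(v0, W, b_h)      # positive phase
    h0 = sample(ph0, rng)
    v1 = sample(visible_probs(h0, W, b_v), rng)  # one Gibbs step
    ph1 = hidden_probs(v1, W, b_h)      # negative phase
    for j in range(len(b_h)):
        for i in range(len(b_v)):
            W[j][i] += lr * (ph0[j] * v0[i] - ph1[j] * v1[i])
        b_h[j] += lr * (ph0[j] - ph1[j])
    for i in range(len(b_v)):
        b_v[i] += lr * (v0[i] - v1[i])
    return v1

# Toy usage: 4 visible units, 2 hidden units, all parameters start at zero.
rng = random.Random(0)
W = [[0.0] * 4 for _ in range(2)]
b_v = [0.0] * 4
b_h = [0.0] * 2
v0 = [1, 0, 1, 0]
v1 = cd1_update(v0, W, b_v, b_h, 0.1, rng)
```

The update uses hidden probabilities rather than sampled hidden states in the gradient terms, which is a common variance-reduction choice; the summary does not say which variant the paper uses.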

A key innovation is the dynamic bias‑modulation mechanism: the hidden state and input of the BLSTM at time t‑1 are linearly transformed and added to the bias vectors of the DBN’s visible and hidden layers (Equations 12‑13). This allows the temporal information learned by the BLSTM to directly influence the DBN’s conditional distributions, effectively conditioning the hierarchical representation on the sequence history and future. At each time step, the DBN receives a binary visible vector (88‑dimensional piano‑key encoding) and performs Gibbs sampling to generate the next visible vector, which is fed back as input to the BLSTM. Consequently, the DBN and BLSTM form a closed feedback loop that jointly generates sequences while maintaining a deep probabilistic structure.
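The bias modulation described above can be sketched as follows. The summary does not reproduce Equations 12 and 13, so the exact form used here (base bias plus one linear map of the previous recurrent state plus one linear map of the previous input) is an assumption, as are the names `dynamic_bias`, `W_state`, and `W_input`. The mapping of the 88 keys to MIDI note numbers 21 through 108 is likewise an assumption about the encoding, based on the standard piano range.

```python
N_KEYS = 88        # dimensionality of the visible vector, from the summary
LOWEST_PITCH = 21  # assumption: MIDI note number of the lowest piano key (A0)

def encode_frame(midi_pitches):
    """Binary 88-dimensional vector with a 1 for every sounding key."""
    frame = [0] * N_KEYS
    for pitch in midi_pitches:
        idx = pitch - LOWEST_PITCH
        if 0 <= idx < N_KEYS:  # ignore pitches outside the piano range
            frame[idx] = 1
    return frame

def matvec(W, x):
    # Matrix-vector product for a matrix stored as a list of rows.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def dynamic_bias(base_bias, W_state, W_input, state_prev, input_prev):
    """Assumed form: base_bias + W_state @ state_prev + W_input @ input_prev."""
    from_state = matvec(W_state, state_prev)
    from_input = matvec(W_input, input_prev)
    return [b + s + x for b, s, x in zip(base_bias, from_state, from_input)]

# Toy usage with hypothetical sizes: 2 bias units, 2 state units, 1 input unit.
frame = encode_frame([60, 64, 67])  # C major triad as MIDI pitches
shifted = dynamic_bias(
    base_bias=[0.0, 1.0],
    W_state=[[1.0, 2.0], [0.0, -1.0]],
    W_input=[[0.5], [0.5]],
    state_prev=[1.0, 1.0],
    input_prev=[2.0],
)
```

In a full model the same function would be called twice per time step, once for the visible biases and once for the hidden biases, before running Gibbs sampling with the shifted values.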

The architecture was evaluated on four polyphonic music datasets: JSB Chorales, MuseData, Nottingham, and Piano‑Midi.de. Using log‑likelihood (LL) as the quantitative metric, DBN‑BLSTM achieved scores of -3.47 (JSB Chorales), -3.91 (MuseData), -1.32 (Nottingham), and -4.63 (Piano‑Midi.de), substantially outperforming baselines such as Random, RBM, NADE, various RNN‑RBM variants, and the earlier RNN‑DBN model. The improvements are especially pronounced on datasets with richer harmonic structure, indicating that the model’s ability to retain long‑term context reduces repetitive patterns and yields more musically plausible output.

The authors also discuss extensions: replacing binary visible units with Gaussian units would enable modeling of continuous‑valued audio, and incorporating ReLU activations with dropout could further improve regularization. They suggest future work on combining DBN‑BLSTM with newer sequence models like Transformers to explore complementary strengths.

In summary, DBN‑BLSTM offers a principled way to fuse deep hierarchical feature learning with bidirectional temporal modeling, achieving state‑of‑the‑art results in polyphonic music generation and presenting a versatile framework applicable to other sequential domains such as speech recognition and natural language processing.
