Half-CNN: A General Framework for Whole-Image Regression

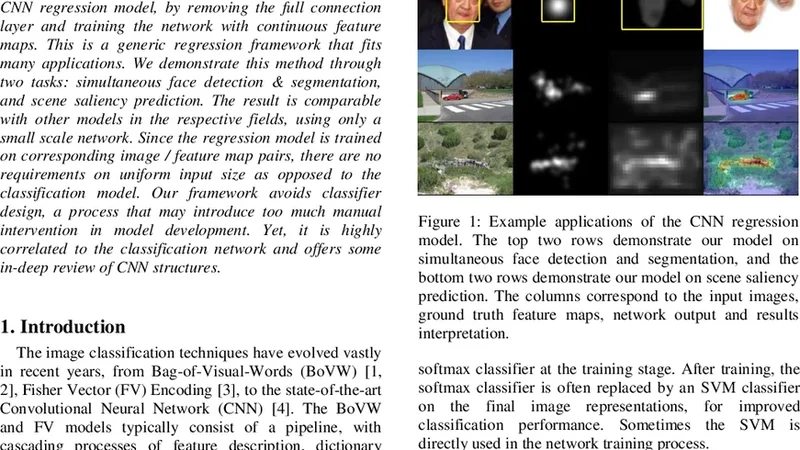

The Convolutional Neural Network (CNN) has achieved great success in image classification. The classification model can also be utilized at image or patch level for many other applications, such as object detection and segmentation. In this paper, we propose a whole-image CNN regression model, by removing the full connection layer and training the network with continuous feature maps. This is a generic regression framework that fits many applications. We demonstrate this method through two tasks: simultaneous face detection & segmentation, and scene saliency prediction. The result is comparable with other models in the respective fields, using only a small scale network. Since the regression model is trained on corresponding image / feature map pairs, there are no requirements on uniform input size as opposed to the classification model. Our framework avoids classifier design, a process that may introduce too much manual intervention in model development. Yet, it is highly correlated to the classification network and offers some in-deep review of CNN structures.

💡 Research Summary

The paper introduces “Half‑CNN,” a generic whole‑image regression framework derived from conventional convolutional neural networks (CNNs) that are traditionally used for classification. The key architectural modification is the removal of the fully‑connected (FC) layer at the network’s top; instead, the final convolutional block directly produces a continuous feature map of arbitrary spatial resolution. Because the output is a dense map rather than a fixed‑size class vector, the model imposes no constraints on input image dimensions, eliminating the need for cropping, padding, or resizing that classification networks typically require.

Methodologically, the authors retain the standard stack of convolution, ReLU, and pooling layers. After several down‑sampling stages, a 1×1 convolution reduces the channel dimension to the desired number (e.g., one channel for a binary mask, three channels for a saliency map). The network is trained end‑to‑end with a regression loss—mean‑squared error (MSE) or L1 loss—depending on the task. No additional decoder or up‑sampling path is employed; the spatial resolution of the output is simply the result of the accumulated pooling operations. Training incorporates common regularization techniques such as batch normalization, dropout, and extensive data augmentation (rotation, scaling, color jitter) to improve generalization.

Two representative applications are evaluated. First, simultaneous face detection and segmentation is tackled by feeding whole images into the Half‑CNN and interpreting the output map as a pixel‑wise face mask. The model achieves Intersection‑over‑Union (IoU) and detection accuracy comparable to state‑of‑the‑art methods that rely on separate detectors, region proposal networks, or multi‑stage pipelines, despite using a modest VGG‑derived backbone with roughly five million parameters. Second, the framework is applied to scene saliency prediction, where the target is a continuous saliency heatmap reflecting human visual attention. On standard benchmarks, Half‑CNN’s predictions attain Normalized Scanpath Saliency (NSS) and Pearson Correlation Coefficient (CC) scores on par with both classical saliency models (e.g., Itti‑Koch) and recent deep learning approaches.

The authors highlight several advantages. By discarding the FC layer, the parameter count drops dramatically, reducing memory consumption and training time. The model’s fully convolutional nature allows arbitrary‑size inputs, making it suitable for real‑time video streams or high‑resolution imagery without costly resizing. Moreover, the design is straightforward: no complex decoder, skip connections, or multi‑task heads are required, which simplifies hyper‑parameter tuning and implementation.

Limitations are also acknowledged. Because the output resolution is tied to the depth of pooling, fine‑grained boundary details can be lost, especially for tasks demanding high spatial precision. The paper suggests that integrating up‑sampling modules or U‑Net‑style skip connections could mitigate this issue. Additionally, exploring task‑specific loss functions (e.g., Dice, IoU loss) or multi‑task learning could further boost performance.

In conclusion, Half‑CNN demonstrates that a minimal alteration to a standard classification CNN—removing the fully‑connected layer and treating the final convolutional output as a regression map—yields a versatile, efficient, and competitive framework for a variety of pixel‑level prediction problems. Its ability to handle variable input sizes, low parameter overhead, and comparable accuracy across disparate domains positions it as a compelling baseline for future research in whole‑image regression tasks.