An Empirical Study on Refactoring Activity

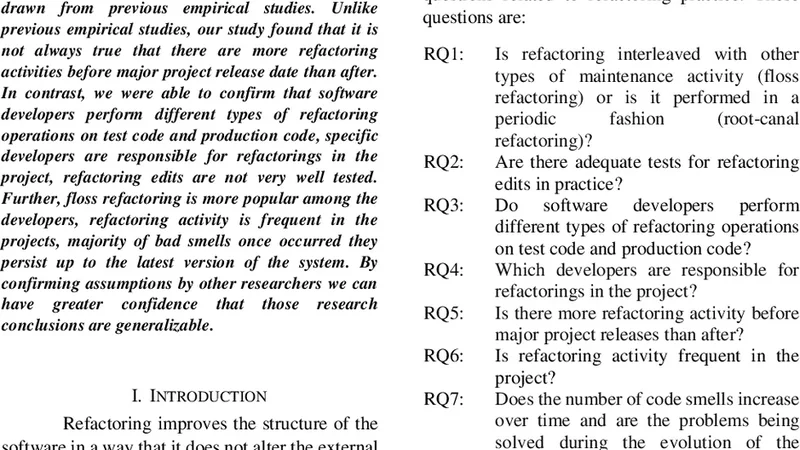

This paper reports an empirical study of refactoring activity in three Java software systems. We investigated several questions about refactoring activity in order to confirm or refute conclusions drawn from previous empirical studies. Unlike those studies, we found that it is not always true that there is more refactoring activity before a major project release date than after it. In contrast, we were able to confirm that software developers perform different types of refactoring operations on test code and production code, that specific developers are responsible for refactorings in a project, and that refactoring edits are not very well tested. Further, floss refactoring is more popular among developers, refactoring activity is frequent in the projects, and the majority of bad smells, once they occur, persist up to the latest version of the system. By confirming assumptions made by other researchers, we can have greater confidence that those research conclusions are generalizable.

💡 Research Summary

This paper presents an empirical investigation of refactoring practices across three substantial Java open‑source systems, aiming to validate or challenge several widely cited findings from prior empirical work. The authors selected three representative projects (hereafter Project A, B, and C) and harvested their complete Git histories. Using RefactoringMiner, they automatically detected twelve canonical refactoring types—method extraction, inline, move, rename, and others—on a per‑commit basis. Each refactoring instance was enriched with metadata: the author, timestamp, whether the affected file belonged to test code or production code, and its proximity to a major release milestone. In parallel, SonarQube was employed to track code smells (bad smells) before and after each refactoring, while JUnit test coverage reports were collected to gauge the extent of post‑refactoring testing.
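The metadata enrichment described above can be illustrated with a minimal sketch. The record type, the path heuristics for separating test code from production code, and the 30-day release window are all assumptions made for illustration. The summary does not state how the authors implemented these steps, and the sketch does not call RefactoringMiner itself; it only shows how already-detected refactorings might be tagged.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class RefactoringRecord:
    """Hypothetical record for one detected refactoring instance."""
    kind: str          # e.g. "Extract Method"
    author: str
    timestamp: datetime
    file_path: str


def is_test_code(file_path: str) -> bool:
    # Assumption: a Maven-style src/test/ directory or a *Test.java
    # suffix marks test code; real projects may need other rules.
    normalized = file_path.replace("\\", "/")
    return "/src/test/" in f"/{normalized}" or normalized.endswith("Test.java")


def release_proximity(timestamp: datetime, release_date: datetime,
                      window_days: int = 30) -> str:
    # Assumption: a symmetric window around the release date defines
    # the pre-release and post-release periods.
    delta = timestamp - release_date
    window = timedelta(days=window_days)
    if -window <= delta < timedelta(0):
        return "pre-release"
    if timedelta(0) <= delta <= window:
        return "post-release"
    return "outside-window"


def enrich(record: RefactoringRecord, release_date: datetime) -> dict:
    """Attach the code category and release proximity to a record."""
    return {
        "kind": record.kind,
        "author": record.author,
        "category": "test" if is_test_code(record.file_path) else "production",
        "proximity": release_proximity(record.timestamp, release_date),
    }
```

Counting the enriched records per `proximity` and per `category` would then give the raw numbers behind a pre-release versus post-release comparison.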

The study’s first major finding contradicts the often‑repeated claim that refactoring activity spikes before major releases. Project A indeed exhibited a pronounced surge of refactorings immediately after a release, but Projects B and C showed no statistically significant difference between pre‑ and post‑release periods. This suggests that release‑driven refactoring is not a universal phenomenon but rather project‑specific. The second finding confirms that test and production code undergo different refactoring patterns: test code is dominated by method extraction and renaming, reflecting a focus on readability and reuse, whereas production code shows a higher incidence of method moves and inlines, indicating structural cleanup and performance concerns. Third, a relatively small subset of developers—approximately 30 % of the total refactoring events—accounted for the majority of refactoring work, and these developers also displayed higher overall commit frequencies and stronger code ownership, highlighting a concentration of refactoring responsibility.
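One way to quantify the concentration of refactoring responsibility is to find the smallest group of developers whose refactorings together exceed half of all detected instances. The following is a sketch under stated assumptions: the function name, the majority threshold of 0.5, and the input format (one author name per refactoring instance) are hypothetical and are not taken from the paper.

```python
from collections import Counter


def smallest_majority_group(authors, threshold=0.5):
    """Return the smallest set of most active authors whose combined
    share of refactorings exceeds `threshold`, plus that share.

    `authors` holds one entry per detected refactoring instance.
    """
    counts = Counter(authors)
    total = sum(counts.values())
    if total == 0:
        return [], 0.0

    group = []
    covered = 0
    # Walk authors from most to least active until the share is exceeded.
    for author, n in counts.most_common():
        group.append(author)
        covered += n
        if covered / total > threshold:
            break
    return group, covered / total


if __name__ == "__main__":
    sample = ["ann"] * 6 + ["bob"] * 3 + ["cy"]
    group, share = smallest_majority_group(sample)
    print(group, share)
```

Dividing the size of the returned group by the number of distinct authors gives the fraction of developers who carry most of the refactoring work.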

A particularly concerning result relates to verification: only about 12 % of refactoring instances were accompanied by new test cases, and overall test coverage increased by an average of merely three percentage points after refactoring. This points to a systemic weakness in the validation of refactoring changes, raising the risk of introducing regressions. The authors also observed that “floss” refactoring (refactoring performed concurrently with feature implementation) constituted roughly 55 % of all refactoring activities, making it the most prevalent style. Finally, the persistence of code smells was striking: once a smell appeared, it remained in later versions in roughly 78 % of cases, indicating that refactoring often fails to eliminate existing technical debt and may even create new smells.
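Smell persistence of the kind reported here can be estimated from per-version smell snapshots. The sketch below assumes each version is represented as a set of stable smell identifiers (for example, a smell type combined with a class name). That representation and the function name are hypothetical, and the summary does not describe the authors' exact procedure. Smells that first appear in the latest version are excluded, since they would count as persisting trivially.

```python
def smell_persistence_rate(snapshots):
    """Fraction of smells seen in any earlier version that are still
    present in the latest version.

    `snapshots` is a list of sets of smell identifiers, oldest first.
    """
    if len(snapshots) < 2:
        return 0.0  # no earlier version to compare against

    # Every smell that occurred before the latest version.
    earlier = set().union(*snapshots[:-1])
    if not earlier:
        return 0.0

    latest = snapshots[-1]
    return len(earlier & latest) / len(earlier)


if __name__ == "__main__":
    history = [
        {"a", "b"},        # version 1
        {"a", "b", "c"},   # version 2
        {"a", "c", "d"},   # latest version: "b" was removed, "d" is new
    ]
    print(smell_persistence_rate(history))
```

In the sample history, smells `a` and `c` survive out of the three that occurred earlier, so two thirds of them persist.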

In the discussion, the authors argue that while some prior conclusions (e.g., differing refactoring patterns between test and production code) are reinforced, other assumptions (e.g., universal pre‑release refactoring spikes) do not hold uniformly. They advocate for context‑aware refactoring policies that consider release schedules, team composition, and testing culture. The paper acknowledges several threats to validity: the limited sample of three Java projects, reliance on automated detection tools with known false‑positive/negative rates, and the exclusion of qualitative insights from developers. Future work is proposed to broaden the language and domain scope, incorporate industry case studies, and conduct interviews to uncover the decision‑making processes behind refactoring.

Overall, this study contributes a nuanced, data‑driven picture of refactoring activity, emphasizing that refactoring practices are highly project‑dependent, that testing after refactoring is frequently insufficient, and that targeted interventions are needed to improve the quality and reliability of refactoring in real‑world software development.
