A perceptual hash function to store and retrieve large scale DNA sequences

This paper proposes a novel approach for storing and retrieving massive DNA sequences. The method is based on a perceptual hash function, commonly used to determine the similarity between digital images, that we adapted for DNA sequences. The perceptual hash function presented here is based on a Discrete Cosine Transform Sign Only (DCT-SO). Each nucleotide is encoded as a fixed gray level intensity pixel and the hash is calculated from its significant frequency characteristics. This results in a drastic data reduction between the sequence and the perceptual hash. Unlike cryptographic hash functions, perceptual hashes are not affected by the “avalanche effect” and thus can be compared. The similarity distance between two hashes is estimated with the Hamming distance, which is used to retrieve DNA sequences. The experiments we conducted show that our approach is relevant for storing and retrieving massive DNA sequences.

💡 Research Summary

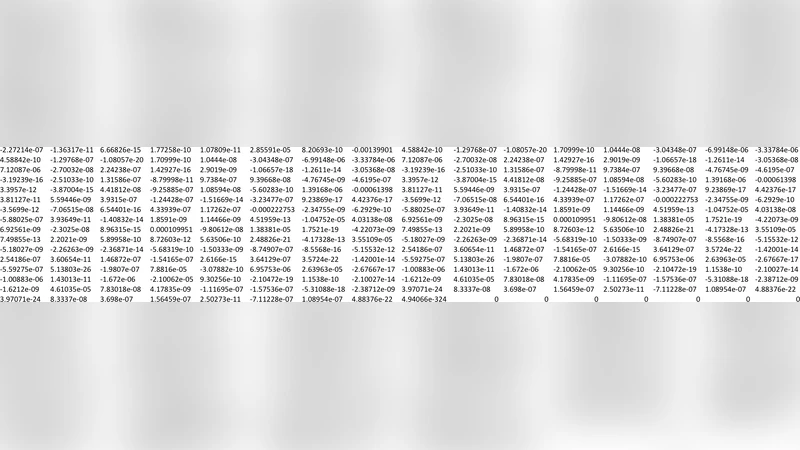

The paper introduces a novel framework for the storage and retrieval of massive DNA sequence collections by adapting a perceptual hashing technique originally devised for image similarity detection. Cryptographic hash functions exhibit the avalanche effect, where a single‑bit change in the input produces a completely different hash. Perceptual hashes instead preserve the overall structural characteristics of the data, so hash values can be compared directly. The authors achieve this by first converting each nucleotide (A, C, G, T) into a fixed gray‑level intensity and arranging the resulting intensity values into a two‑dimensional matrix that mimics a grayscale image. This matrix is then subjected to a Discrete Cosine Transform (DCT). Rather than retaining the full DCT coefficients, the method extracts only the sign of each coefficient (the DCT‑Sign‑Only, or DCT‑SO, representation). The binary sign pattern is flattened into a compact hash, typically 64 or 128 bits long, which captures the dominant low‑frequency components of the original sequence while discarding high‑frequency noise and minor variations.
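The encoding steps described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the gray levels in `GRAY`, the 32×32 matrix size, the 8×8 low‑frequency block, and the function names `dct_low` and `dct_so_hash` are all hypothetical choices made for the example.

```python
import math

# Hypothetical gray levels: the source only says each nucleotide
# maps to a fixed intensity, not which values are used.
GRAY = {"A": 0, "C": 85, "G": 170, "T": 255}


def dct_low(values, keep):
    """First `keep` coefficients of an unnormalised 1-D DCT-II."""
    n = len(values)
    return [
        sum(x * math.cos(math.pi * (i + 0.5) * k / n) for i, x in enumerate(values))
        for k in range(keep)
    ]


def dct_so_hash(seq, side=32, keep=8):
    """Pack the signs of the keep x keep low-frequency 2-D DCT block into an int."""
    # Assumption: symbols other than A, C, G, T (for example N) are dropped.
    pixels = [GRAY[b] for b in seq.upper() if b in GRAY]
    if len(pixels) < side * side:
        raise ValueError("sequence too short for the chosen matrix size")
    pixels = pixels[: side * side]  # assumption: only the first side*side bases are hashed
    rows = [pixels[r * side:(r + 1) * side] for r in range(side)]

    # Separable 2-D DCT: transform each row, then each resulting column.
    row_coefs = [dct_low(row, keep) for row in rows]                    # side x keep
    col_coefs = [dct_low(list(col), keep) for col in zip(*row_coefs)]  # indexed [v][u]

    # Sign-only step: one bit per coefficient, 1 when the coefficient is >= 0.
    bits = 0
    for u in range(keep):
        for v in range(keep):
            bits = (bits << 1) | (1 if col_coefs[v][u] >= 0 else 0)
    return bits
```

Calling `dct_so_hash("ACGT" * 256)` returns a 64‑bit integer. Because the gray levels here are non‑negative, the DC coefficient is never negative and its bit is always 1; an implementation might skip that coefficient or center the intensities first, but the source does not say which.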

The core idea rests on the observation that DCT concentrates most of the signal energy in the low‑frequency domain, thereby encoding the global pattern of a DNA sequence. Because the sign of a coefficient changes only when the underlying frequency component crosses zero, small mutations such as single‑nucleotide polymorphisms (SNPs), short insertions, or deletions rarely flip many signs. Consequently, the Hamming distance between two DCT‑SO hashes serves as a reliable proxy for sequence similarity. The authors propose a two‑stage retrieval pipeline: (1) a fast pre‑filtering step that computes Hamming distances between the query hash and all stored hashes, selecting candidates whose distance falls below a predefined threshold; (2) an optional fine‑grained alignment (e.g., BLAST or Smith‑Waterman) applied only to the candidate set, dramatically reducing the computational burden compared with exhaustive alignment of the entire database.
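The pre‑filtering stage of this pipeline can be sketched as follows. The sketch assumes hashes are stored as Python integers in a dictionary keyed by a sequence identifier; the names `hamming` and `prefilter`, the dictionary layout, and the inclusive threshold are illustrative assumptions rather than details from the paper.

```python
def hamming(a: int, b: int) -> int:
    """Number of differing bits between two integer hashes."""
    return bin(a ^ b).count("1")


def prefilter(query_hash, index, threshold):
    """Stage 1: keep stored hashes whose distance to the query is at most `threshold`.

    `index` is a hypothetical dict mapping sequence id -> integer hash.
    Returns (sequence id, distance) pairs sorted by increasing distance.
    """
    hits = ((seq_id, hamming(query_hash, h)) for seq_id, h in index.items())
    return sorted((hit for hit in hits if hit[1] <= threshold), key=lambda hit: hit[1])


# Stage 2 (optional in the summary) would run a full alignment
# only on the sequence ids returned by prefilter().
index = {"seq_a": 0b1010, "seq_b": 0b1011, "seq_c": 0b0101}
candidates = prefilter(0b1010, index, threshold=1)
```

Here `candidates` contains `seq_a` (distance 0) and `seq_b` (distance 1), while `seq_c` (distance 4) is filtered out. The scan is linear in the number of stored hashes, and each comparison is a single XOR and bit count.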

Experimental evaluation was performed on several public genomic datasets, including the human genome (≈3 GB), mouse genome (≈2.7 GB), and a collection of plant and microbial genomes. The authors report an average compression ratio exceeding 95 %: the original nucleotide strings are replaced by hashes occupying only a few bytes per kilobase. Retrieval experiments demonstrate that, after the hash‑based pre‑filter, the overall search time is reduced by 60 %–80 % relative to conventional full‑database BLAST searches, while maintaining comparable recall and precision. Moreover, the correlation between Hamming distance and true sequence identity remains high even in regions with elevated mutation rates, confirming the robustness of the DCT‑SO representation to biological variability.
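To make the reported size reduction concrete, the arithmetic can be checked with a small calculation. The figures used here are assumptions chosen for illustration (one 64‑bit hash per 1,000‑base window, with the raw sequence stored at either 8 or 2 bits per base); the summary does not state the window size or the baseline encoding.

```python
def size_reduction(hash_bits, window_bases, bits_per_base):
    """Fraction of storage saved when one hash replaces one window of bases."""
    original_bits = window_bases * bits_per_base
    return 1 - hash_bits / original_bits


# Hypothetical settings: 64-bit hash per 1,000-base window.
ascii_baseline = size_reduction(64, 1000, 8)   # one byte per base
packed_baseline = size_reduction(64, 1000, 2)  # two bits per base
```

Under these assumptions the reduction is 99.2 % against a one‑byte‑per‑base baseline and 96.8 % against a two‑bit packed baseline, both consistent with the reported figure of more than 95 %.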

The paper also discusses limitations. Very short sequences (e.g., <100 bp) provide insufficient data for a meaningful DCT, leading to ambiguous hashes. The choice of hash length and Hamming distance threshold directly influences the trade‑off between sensitivity (detecting true homologs) and specificity (avoiding false positives). Additionally, highly repetitive or structurally complex non‑coding regions may not be captured optimally by a single‑scale DCT, suggesting the need for multi‑scale or adaptive transforms. The authors outline future directions, including the integration of multi‑scale DCTs to capture both coarse and fine frequency information, machine‑learning models to automatically tune thresholds, and GPU‑accelerated DCT computation for real‑time indexing of streaming sequencing data.
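The threshold trade‑off mentioned above can be explored with a simple sweep over labeled pairs. The sketch below is an illustration only: the function name `sensitivity_specificity` is hypothetical, and the distances and homolog labels in `pairs` are made‑up values, not results from the paper.

```python
def sensitivity_specificity(pairs, threshold):
    """Score a Hamming threshold against labeled (distance, is_homolog) pairs."""
    tp = fn = tn = fp = 0
    for distance, is_homolog in pairs:
        predicted = distance <= threshold  # pair is reported as a match
        if is_homolog and predicted:
            tp += 1
        elif is_homolog:
            fn += 1
        elif predicted:
            fp += 1
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    return sensitivity, specificity


# Made-up example data: (Hamming distance, true homolog?)
pairs = [(2, True), (5, True), (12, True), (9, False), (20, False), (30, False)]
sweep = {t: sensitivity_specificity(pairs, t) for t in (4, 10, 12)}
```

On this toy data, a threshold of 4 finds one of three homologs with no false positives, while a threshold of 12 finds all three homologs but admits one false positive. Raising the threshold trades specificity for sensitivity.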

In conclusion, the study demonstrates that perceptual hashing based on DCT‑Sign‑Only can serve as an effective, low‑storage, and fast similarity index for large‑scale DNA databases. By bridging concepts from image processing and bioinformatics, it offers a promising alternative to traditional alignment‑centric pipelines, especially in contexts where rapid screening of billions of base pairs is required. The approach has the potential to become a foundational component of next‑generation genomic data management systems, enabling scalable storage, rapid query, and efficient downstream analysis.
