Are Clouds Ready to Accelerate Ad hoc Financial Simulations?

Applications employed in the financial services industry to capture and estimate a variety of risk metrics are underpinned by stochastic simulations that are data, memory and computationally intensive. Many of these simulations are routinely performed on production computing systems. Ad hoc simulations, in addition to routine simulations, are required to obtain up-to-date views of risk metrics. Such simulations are currently not performed because they cannot be accommodated on production clusters, which are typically overcommitted. Scalable, on-demand and pay-as-you-go Virtual Machines (VMs) offered by the cloud are a potential platform to satisfy the data, memory and computational constraints of the simulation. This raises the question: are clouds ready to accelerate ad hoc financial simulations? The research reported in this paper aims to answer this question experimentally by developing and deploying an important financial simulation, referred to as ‘Aggregate Risk Analysis’, on the cloud. Parallel techniques to improve the efficiency and performance of the simulations are explored. Challenges such as accommodating large input data on limited-memory VMs and rapidly processing data for real-time use are surmounted. The key result of this investigation is that Aggregate Risk Analysis can be accommodated on cloud VMs. Acceleration of up to 24x using multiple hardware accelerators over the implementation on a single accelerator, 6x over a multiple core implementation and approximately 60x over a baseline implementation was achieved on the cloud. However, computational time is wasted for every dollar spent on the cloud due to poor acceleration over multiple virtual cores. Interestingly, private VMs can offer better performance than public VMs on comparable underlying hardware.

💡 Research Summary

The paper investigates whether cloud computing platforms can effectively accelerate ad‑hoc financial risk simulations, focusing on a widely used application called Aggregate Risk Analysis (ARA). ARA is a Monte‑Carlo‑style simulation that processes up to a million trial years, each containing on the order of a thousand catastrophic event occurrences (e.g., earthquakes), and maps them to loss values using large Event‑Loss Tables (ELTs). The simulation also applies multiple layers of financial terms (occurrence retention/limit and aggregate retention/limit) to produce a Year Loss Table (YLT), from which risk metrics such as Probable Maximum Loss (PML) and Tail Value‑at‑Risk (TVaR) are derived.
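To make the last step concrete, here is a minimal sketch of how PML and TVaR might be read off a YLT. The summary does not give the paper's exact definitions, so the conventions below are assumptions: PML for a return period of T years is taken as the empirical (1 - 1/T) quantile of the yearly losses (nearest-rank method), and TVaR is taken as the mean of the losses at or above that quantile. The function names `var_at`, `pml`, and `tvar` are hypothetical.

```python
import math

def var_at(ylt, p):
    """Empirical p-quantile of the yearly losses (nearest-rank method)."""
    ordered = sorted(ylt)
    rank = max(1, math.ceil(p * len(ordered)))  # 1-based rank
    return ordered[rank - 1]

def pml(ylt, return_period_years):
    """Assumed convention: PML for a T-year return period is the (1 - 1/T) quantile."""
    return var_at(ylt, 1.0 - 1.0 / return_period_years)

def tvar(ylt, p):
    """Assumed convention: mean of the yearly losses at or above the p-quantile."""
    threshold = var_at(ylt, p)
    tail = [loss for loss in ylt if loss >= threshold]
    return sum(tail) / len(tail)

# Toy YLT with ten trial years (illustrative values only).
ylt = [0.0, 10.0, 20.0, 30.0, 40.0, 50.0, 60.0, 70.0, 80.0, 90.0]
print(pml(ylt, 4))     # 70.0
print(tvar(ylt, 0.75)) # 80.0
```

Because both metrics depend only on the sorted YLT, they are cheap compared with the simulation that produces the YLT.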

The authors first describe the data structures: a Year Event Table (YET) with up to one million trials, each trial holding 800‑1500 event‑time‑stamp pairs; ELTs containing tens of thousands to a few million event‑loss pairs; and a Portfolio metadata hierarchy (programs, layers, financial terms). The algorithm consists of a preprocessing stage (loading YET, ELTs, and portfolio data into memory) followed by a four‑step per‑trial computation: (1) lookup each event’s loss, (2) apply secondary uncertainty, (3) apply occurrence‑level financial terms, and (4) apply aggregate‑level financial terms. The result is a loss value per trial that feeds downstream enterprise risk management processes.
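The four per-trial steps can be sketched as follows. This is a simplified illustration rather than the authors' implementation: it uses a single ELT stored as a Python dictionary, treats secondary uncertainty as a placeholder multiplier (the summary does not say how it is modelled), and assumes the usual excess-of-loss form for financial terms, namely the part of a loss above the retention, capped at the limit. The names `apply_terms` and `trial_loss` are hypothetical.

```python
def apply_terms(loss, retention, limit):
    """Part of the loss above the retention, capped at the limit."""
    return min(max(loss - retention, 0.0), limit)

def trial_loss(events, elt, occ_ret, occ_lim, agg_ret, agg_lim, uncertainty=1.0):
    """Compute one trial's loss from its (event_id, timestamp) pairs."""
    total = 0.0
    for event_id, _timestamp in events:
        loss = elt.get(event_id, 0.0)                  # (1) look up the event's loss
        loss *= uncertainty                            # (2) placeholder for secondary uncertainty
        total += apply_terms(loss, occ_ret, occ_lim)   # (3) occurrence-level terms
    return apply_terms(total, agg_ret, agg_lim)        # (4) aggregate-level terms

# Toy data: event 4 is absent from the ELT and contributes no loss.
elt = {1: 100.0, 2: 250.0, 3: 40.0}
events = [(1, 10), (2, 45), (4, 200), (3, 300)]
print(trial_loss(events, elt, occ_ret=50.0, occ_lim=150.0, agg_ret=20.0, agg_lim=200.0))  # 180.0
```

Trials are independent of one another, so running `trial_loss` over all trials is the natural unit of parallel work; collecting the results gives the YLT.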

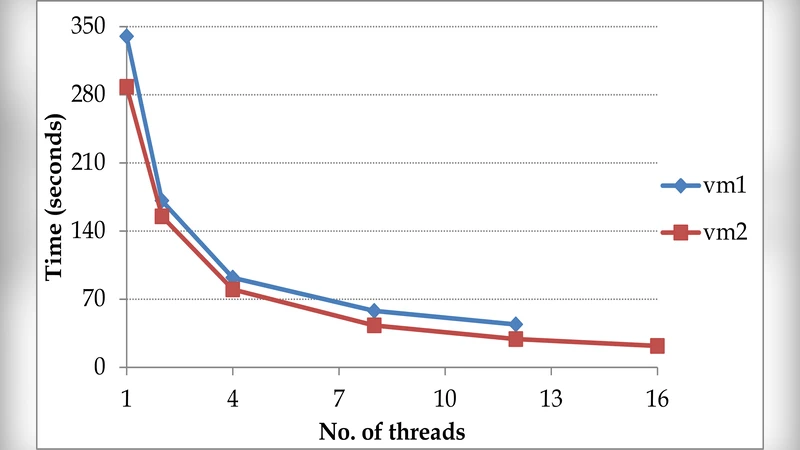

To assess cloud suitability, the authors implement three versions of ARA: a sequential C++ baseline, an OpenMP‑based multi‑core CPU version, and a CUDA‑based GPU version (both single‑GPU and multi‑GPU). They evaluate these on a range of Amazon EC2 instance types (general‑purpose m1/m3, memory‑optimized m2/cr1, compute‑optimized cc1/cc2, storage‑optimized hi1/hs1, and GPU cg1) as well as two private VMs (vm1, vm2) equipped with comparable CPUs and NVIDIA Tesla M2050 GPUs. All instances have at least 15 GB RAM; GPU instances provide 3 GB of device memory and 148 GB/s bandwidth.

Key technical challenges addressed include: (a) fitting the massive YET into limited VM memory – solved by chunked streaming of trials; (b) efficient event‑loss look‑ups on GPUs – solved by converting ELTs into hash‑based structures that fit into device memory; (c) minimizing host‑to‑device data transfers – achieved by overlapping computation with asynchronous transfers; and (d) handling hyper‑threaded virtual CPUs, which can degrade per‑core performance.
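Challenges (a) and (b) can be illustrated with a small sketch, assuming the trials arrive as an iterable (for example, read lazily from disk) and the ELT is held as a hash map. It keeps only one chunk of trials in memory at a time and omits financial terms for brevity. The helper names `chunks` and `stream_ylt` are hypothetical, and the paper's GPU data structures are not reproduced here.

```python
from itertools import islice

def chunks(trials, chunk_size):
    """Yield successive lists of at most chunk_size trials from any iterable."""
    it = iter(trials)
    while True:
        block = list(islice(it, chunk_size))
        if not block:
            return
        yield block

def stream_ylt(trials, elt, chunk_size):
    """Sum looked-up losses per trial while holding one chunk of trials at a time."""
    ylt = []
    for block in chunks(trials, chunk_size):
        for events in block:
            # Dictionary lookup stands in for the hash-based ELT structure.
            ylt.append(sum(elt.get(event_id, 0.0) for event_id, _ts in events))
    return ylt

elt = {1: 5.0, 2: 7.0, 3: 1.0}
trials = [[(1, 0)], [(2, 0), (3, 0)], [(9, 0)], [], [(1, 0), (1, 5)]]
print(stream_ylt(iter(trials), elt, chunk_size=2))
```

In a GPU setting, each chunk would be the unit copied to the device, which is also what makes it possible to overlap the transfer of one chunk with computation on the previous one.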

Experimental results show that cloud VMs can indeed host ARA. The multi‑GPU implementation achieved up to 24× speed‑up over the implementation on a single accelerator, 6× over the multi‑core CPU implementation, and approximately 60× over the sequential baseline. Acceleration across multiple virtual cores was poor, which leads to low cost‑efficiency (“computational time is wasted for every dollar spent”). Notably, private VMs can outperform public EC2 instances with comparable hardware, possibly because hypervisor overhead, shared network/storage, and noisy‑neighbor effects in public clouds reduce effective throughput.
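The cost‑efficiency point can be made concrete with a little arithmetic. The sketch below defines speed‑up, parallel efficiency (speed‑up divided by the number of workers), and the cost of a run. The timings, core count, and hourly price are made‑up values for illustration, not measurements from the paper, and pro‑rata billing by the second is an assumption.

```python
def speedup(baseline_seconds, parallel_seconds):
    """How many times faster the parallel run is than the baseline."""
    return baseline_seconds / parallel_seconds

def parallel_efficiency(baseline_seconds, parallel_seconds, workers):
    """Speed-up per worker; 1.0 means perfect scaling."""
    return speedup(baseline_seconds, parallel_seconds) / workers

def cost_of_run(seconds, price_per_hour):
    """Cost of a run, assuming pro-rata billing (an assumption)."""
    return seconds / 3600.0 * price_per_hour

# Hypothetical example: 120 s sequentially, 30 s on 16 virtual cores.
print(speedup(120.0, 30.0))                  # 4.0
print(parallel_efficiency(120.0, 30.0, 16))  # 0.25
```

An efficiency of 0.25 in this hypothetical case would mean that three quarters of the paid‑for core time does not translate into speed‑up, which is the sense in which time is "wasted for every dollar spent".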

The authors conclude that while the cloud provides on‑demand scalability and eliminates the need for dedicated ad‑hoc hardware, achieving cost‑effective acceleration requires careful orchestration of parallelism, memory management, and data movement. They also highlight that private or hybrid cloud deployments may be more suitable for latency‑sensitive financial analytics. Future work is suggested in the areas of advanced scheduling, data compression/streaming, and leveraging newer accelerators (e.g., GPUs with larger memory, TPUs, or FPGA‑based coprocessors) to further close the gap between performance and cost for real‑time risk assessment.
