Understanding Information Hiding in iOS

Apple's mobile operating system (iOS) has so far proved resistant to information-hiding techniques, which help attackers communicate covertly. However, Siri, a native iOS service that controls iPhones and iPads via voice commands, could change this trend.

💡 Research Summary

The paper “Understanding Information Hiding in iOS” investigates a previously under‑explored covert‑channel vector on Apple’s mobile operating system: the native voice‑assistant service Siri. While iOS has long been considered resistant to classic information‑hiding techniques such as steganography, covert timing channels, or traffic manipulation—thanks to its strict sandboxing, code‑signing, and permission model—the authors demonstrate that Siri’s privileged status and its audio‑processing pipeline can be abused to create a functional covert channel that bypasses these defenses.

The authors begin by reviewing the landscape of information‑hiding research, noting that most prior work focuses on Android or desktop platforms where system‑level services are more readily exposed. They then detail the iOS security architecture, emphasizing that the only gatekeeping mechanism for microphone access is the user‑granted “Microphone” permission. Once an app holds this permission, it can invoke Siri’s public APIs (e.g., SiriKit classes such as INInteraction) without any additional sandbox checks. Siri’s workflow is described as follows: raw audio from the microphone is captured, locally pre‑processed (noise suppression, voice‑activity detection), encoded, and then sent over a TLS connection to Apple’s cloud for speech‑to‑text conversion and command execution. Importantly, because the payload is encrypted in transit between the device and Apple’s servers, network‑level observers cannot see the actual command content.

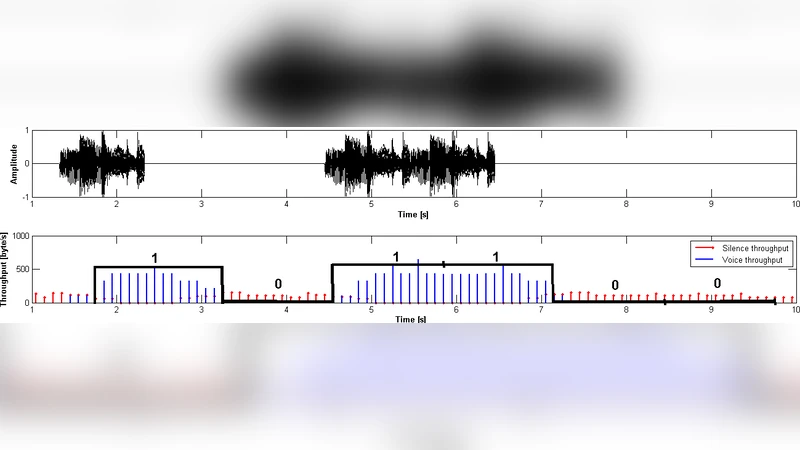

Two attack prototypes are implemented. In the “synthetic‑voice” scenario, a malicious app uses a text‑to‑speech engine to generate a spoken command that embeds a Base64‑encoded payload (e.g., “Send message

Experimental results on iOS 14–15 devices show that the channel can reliably transmit up to 10 KB of data within 30 seconds, corresponding to a throughput of roughly 340 bytes per second (about 2.7 kbit/s). The authors note several stealth properties: (1) Siri invocations are not logged in the standard iOS system logs, making forensic detection difficult; (2) the TLS encryption prevents passive network sniffers from extracting the hidden content; (3) background execution restrictions do not apply to Siri calls, allowing the malicious app to maintain the channel even when the user is not actively interacting with the device. These findings illustrate that the covert channel is both practical and difficult to detect with existing iOS security tooling.
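As a quick sanity check on the reported figures, throughput is simply payload size divided by transfer time. The sketch below assumes that "10 KB" means 10 × 1024 bytes; the function name `throughput` is illustrative and does not come from the paper.

```python
from typing import Tuple


def throughput(payload_bytes: int, seconds: float) -> Tuple[float, float]:
    """Return (bytes per second, bits per second) for a transfer."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    bytes_per_s = payload_bytes / seconds
    return bytes_per_s, bytes_per_s * 8


# Assumption: "10 KB" is read as 10 * 1024 bytes.
bytes_per_s, bits_per_s = throughput(10 * 1024, 30)
print(f"{bytes_per_s:.0f} B/s, {bits_per_s:.0f} bit/s")  # prints "341 B/s, 2731 bit/s"
```

Under that reading, 10 KB in 30 seconds works out to about 341 bytes per second, or roughly 2.7 kbit/s.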

To address the threat, the paper proposes three complementary mitigation strategies. First, the OS should decouple microphone permission from Siri invocation rights, requiring a separate, user‑visible consent for any app that wishes to trigger Siri. Second, a runtime monitor could analyze Siri‑related metadata—such as call frequency, audio duration, and voice‑activity detection patterns—to flag anomalous usage indicative of covert communication. Third, Apple’s backend could implement command‑string validation and anomaly detection (e.g., machine‑learning classifiers) to reject or flag suspiciously formatted requests that contain unusually long or structured payloads. The authors acknowledge that these mitigations would increase complexity and could impact legitimate Siri functionality, but argue that the security benefits outweigh the costs.
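To make the second and third mitigations more concrete, here is a minimal sketch of what such checks might look like. It is not the authors' implementation: the thresholds, the Base64 heuristic, and the function names are all assumptions chosen for illustration, and a real deployment would need tuning against legitimate Siri usage.

```python
import re
from typing import List

# Assumption: a run of 24+ Base64-alphabet characters is rare in the
# transcript of a natural spoken command.
BASE64_RUN = re.compile(r"[A-Za-z0-9+/]{24,}={0,2}")
MAX_COMMAND_CHARS = 200     # hypothetical length limit for one command
MAX_CALLS_PER_WINDOW = 10   # hypothetical per-app invocation limit


def is_suspicious_command(text: str) -> bool:
    """Flag commands that are unusually long or contain a Base64-like run."""
    return len(text) > MAX_COMMAND_CHARS or BASE64_RUN.search(text) is not None


def is_suspicious_rate(timestamps: List[float], window_s: float = 60.0) -> bool:
    """Flag an app if any window of window_s seconds holds too many calls."""
    ts = sorted(timestamps)
    start = 0
    for end, t in enumerate(ts):
        # Slide the window start forward until it spans at most window_s.
        while t - ts[start] > window_s:
            start += 1
        if end - start + 1 > MAX_CALLS_PER_WINDOW:
            return True
    return False
```

A monitor along these lines could call `is_suspicious_rate` with the invocation times recorded for one app, while a backend validator could apply `is_suspicious_command` to each transcribed request. Fixed thresholds like these are easy to evade by slowing the channel down, which is presumably why the paper also suggests learned classifiers.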

Finally, the paper discusses limitations and future work. The study is confined to iOS versions up to 15; subsequent changes to Siri’s architecture (e.g., on‑device speech recognition or different encryption schemes) may affect the feasibility of the attack. Moreover, deeper reverse‑engineering of Siri’s audio‑encoding pipeline could enable higher‑capacity channels or more sophisticated payload encoding. The authors call for continued research into system‑service‑level covert channels across mobile platforms, emphasizing that even well‑hardened operating systems can harbor hidden attack surfaces when privileged services are exposed to third‑party applications.

In summary, the work reveals that Siri, a core component of iOS, can be weaponized to bypass the platform’s traditional defenses and establish a covert communication channel. By exposing this vector, the authors highlight a critical gap in iOS’s threat model and provide concrete recommendations for both OS‑level policy changes and runtime detection mechanisms.
