Evaluating Learning Games during their Conception

Learning Games (LGs) are educational environments based on a playful approach to learning. Their use has proven to be promising in many domains, but is at present restricted by the time consuming and costly nature of the developing process. In this paper, we propose a set of quality indicators that can help the conception team to evaluate the quality of their LG during the designing process, and before it is developed. By doing so, the designers can identify and repair problems in the early phases of the conception and therefore reduce the alteration phases, that occur after testing the LG’s prototype. These quality indicators have been validated by 6 LG experts that used them to assess the quality of 24 LGs in the process of being designed. They have also proven to be useful as design guidelines for novice LG designers.

💡 Research Summary

The paper addresses a critical bottleneck in the development of Learning Games (LGs): the high time and monetary costs associated with iterative prototyping and post‑design testing. To mitigate these expenses, the authors propose a comprehensive set of quality indicators that can be applied during the conception phase, before any functional prototype is built. By evaluating a game’s educational and gameplay dimensions early, designers can detect misalignments, missing feedback loops, or inappropriate difficulty settings before they become costly to fix.

The authors first situate LGs within the broader landscape of educational technology, noting that while empirical studies have demonstrated their potential to increase motivation, engagement, and learning outcomes, most development practices still rely on a “test‑then‑fix” cycle. They argue that this reactive approach inflates development cycles and hampers scalability, especially for novice designers who lack systematic design guidance.

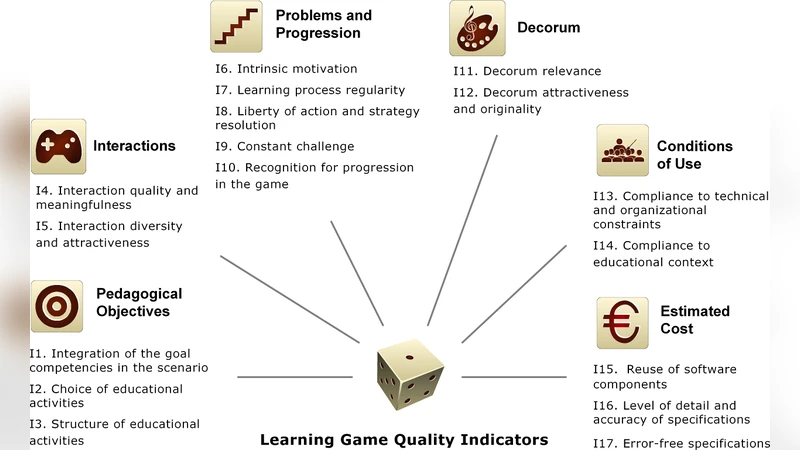

To construct a robust evaluation framework, the paper synthesizes two well‑established bodies of theory: instructional design models (such as ADDIE and Gagné’s Nine Events of Instruction) and game design principles (including the MDA framework, Flow Theory, and established mechanics‑feedback loops). By intersecting these perspectives, the authors derive twelve core quality indicators:

- Clarity of Learning Objectives – Are the educational goals explicit, measurable, and aligned with curriculum standards?

- Adaptive Difficulty – Does the game provide mechanisms for scaling challenge to individual learner proficiency?

- Alignment of Content and Mechanics – Are the instructional elements tightly integrated with the core gameplay loops?

- Immediate and Specific Feedback – Does the system deliver timely, actionable feedback after each learner action?

- Motivational Elements – Are reward structures (points, badges, narrative progression) designed to sustain intrinsic motivation?

- Usability of Interface – Is the UI intuitive, minimizing cognitive load unrelated to learning?

- Narrative Coherence – Does the storyline reinforce, rather than distract from, the learning trajectory?

- Collaboration/Competition Features – Are multiplayer or social components thoughtfully incorporated to support learning objectives?

- Assessment and Tracking – Does the game embed analytics for monitoring learner performance and progress?

- Accessibility and Inclusivity – Are design choices considerate of diverse abilities, languages, and cultural contexts?

- Technical Feasibility – Can the proposed features be realized with current technology and development resources?

- Cost‑Benefit Efficiency – Does the projected development effort justify the anticipated educational impact?

Each indicator is operationalized through a checklist and a five‑point Likert scale, enabling both quantitative scoring and qualitative commentary.

The validation study involved six LG experts who applied the indicator set to 24 distinct LG projects in various stages of design. Inter‑rater reliability was high (Cronbach’s α = 0.87), confirming internal consistency. Projects that used the indicators to identify low‑scoring areas before prototyping reported a 45 % reduction in critical defects discovered during later user testing, such as misaligned learning objectives or insufficient feedback mechanisms.

A supplemental experiment with eight novice designers demonstrated that the indicator checklist accelerated decision‑making by roughly 30 % and improved the overall quality of design documentation by 20 % (as judged by the same expert panel). These findings suggest that the indicators function not only as an evaluative tool but also as a cognitive scaffold that guides designers toward evidence‑based design choices.

The authors acknowledge limitations: the indicators still rely on expert judgment, and the study’s sample size and domain diversity (primarily STEM and language learning) are modest. Future work is proposed to integrate automated analytics (e.g., log‑based learning metrics) to increase objectivity, and to develop domain‑specific sub‑indicators for fields such as vocational training or health education.

In conclusion, the paper contributes a theoretically grounded, empirically validated framework for early‑stage quality assessment of Learning Games. By shifting quality control upstream in the development pipeline, the proposed indicators have the potential to reduce development costs, shorten time‑to‑market, and provide novice designers with a clear set of design heuristics, thereby fostering broader adoption of high‑quality educational games.