The Why and How of Nonnegative Matrix Factorization

Nonnegative matrix factorization (NMF) has become a widely used tool for the analysis of high-dimensional data as it automatically extracts sparse and meaningful features from a set of nonnegative data vectors. We first illustrate this property of NMF on three applications, in image processing, text mining and hyperspectral imaging –this is the why. Then we address the problem of solving NMF, which is NP-hard in general. We review some standard NMF algorithms, and also present a recent subclass of NMF problems, referred to as near-separable NMF, that can be solved efficiently (that is, in polynomial time), even in the presence of noise –this is the how. Finally, we briefly describe some problems in mathematics and computer science closely related to NMF via the nonnegative rank.

💡 Research Summary

The paper provides a comprehensive overview of Nonnegative Matrix Factorization (NMF), focusing on both the motivation for its widespread use (“why”) and the algorithmic strategies that make it practical (“how”). It begins by positioning NMF within the broader family of linear dimensionality reduction techniques, emphasizing that unlike the singular value decomposition (SVD) or principal component analysis (PCA), NMF imposes component‑wise nonnegativity on both the basis matrix W and the coefficient matrix H. This constraint forces the factors to be additive combinations of nonnegative parts, which naturally yields sparse, parts‑based representations that are easy to interpret.

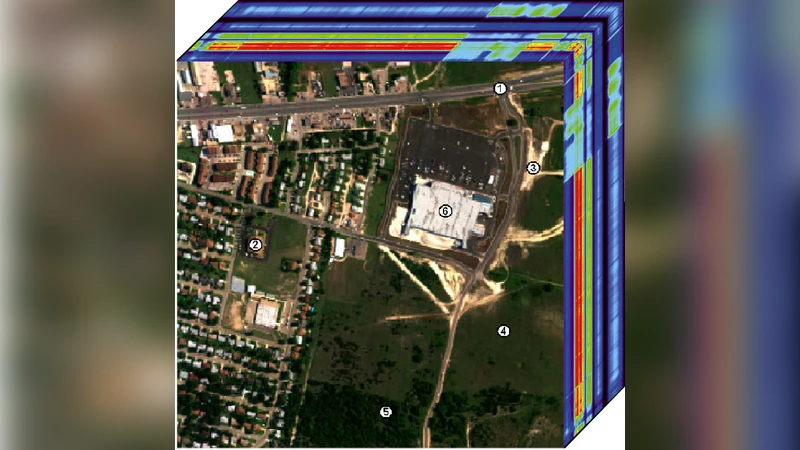

Three illustrative applications are presented. In face image analysis, each column of the data matrix corresponds to a gray‑scale face; NMF discovers basis images that look like eyes, noses, mouths, etc., and the coefficients indicate which facial parts appear in each image. Because the reconstruction uses only additive combinations, NMF is more robust to occlusions than PCA. In text mining, the document‑term matrix is factorized into topic vectors (columns of W) and document‑topic weights (rows of H). The nonnegativity ensures that topics are represented as genuine bags of words and that each document is expressed as a union of topics, mirroring probabilistic topic models but with a purely algebraic interpretation. In hyperspectral unmixing, each pixel’s spectral signature is a nonnegative mixture of a few endmember spectra; NMF recovers the endmember signatures (columns of W) and the abundance maps (rows of H), often with additional sparsity or total‑variation regularization to enforce realistic spatial smoothness.

From an algorithmic perspective, the paper stresses that the basic NMF optimization problem

min_{W≥0, H≥0} ‖X − WH‖_F²

is NP‑hard in general, so exact global solutions are infeasible for large data. Most practical methods therefore adopt a two‑block coordinate descent (alternating least squares) framework: fix H and solve a nonnegative least‑squares (NNLS) problem for W, then fix W and solve NNLS for H. Various NNLS solvers are discussed, including active‑set methods, multiplicative updates (Lee‑Seung), projected gradient, and block‑coordinate descent variants. While convergence to a stationary point is typically guaranteed, global optimality is not; nevertheless, these heuristics work well in many real‑world scenarios.

A major contribution of the paper is the discussion of a special subclass of matrices—near‑separable matrices—where NMF can be solved in polynomial time. In a near‑separable matrix, the columns of X are (up to small noise) nonnegative combinations of a subset of its own columns, which serve as the true basis. This property enables algorithms such as the Successive Projection Algorithm (SPA), XRAY, and fast conical hull methods to identify the basis columns directly, achieving provable robustness to bounded noise and a runtime that scales as O(poly(p, n, r)). The paper outlines the theoretical guarantees (e.g., error bounds proportional to the noise level) and notes that these methods bypass the need for sophisticated initialization.

The authors also touch on related theoretical topics, notably the nonnegative rank of a matrix, which measures the smallest r for which an exact nonnegative factorization exists. The nonnegative rank can exceed the usual rank and is linked to communication complexity, extended formulations in combinatorial optimization, and hardness results for matrix factorization.

Practical considerations are highlighted throughout. The choice of factorization rank r is nontrivial; common strategies include inspecting the singular value decay, cross‑validation, or leveraging domain expertise (e.g., the expected number of endmembers in a hyperspectral scene). Regularization (ℓ₁ sparsity, total variation, graph Laplacian penalties) is often added to promote desirable structure and to mitigate the inherent non‑uniqueness of NMF factorizations. Initialization schemes such as NNDSVD can improve convergence speed and solution quality.

In summary, the paper delivers a balanced treatment of NMF: it explains why the method yields meaningful, sparse parts‑based representations across diverse domains, and it surveys the algorithmic landscape—from generic alternating‑minimization heuristics to the more recent, provably efficient near‑separable approaches. By coupling theoretical insights with practical guidelines, the work serves as a valuable entry point for researchers and practitioners seeking to understand and apply NMF to high‑dimensional nonnegative data.

Comments & Academic Discussion

Loading comments...

Leave a Comment