Annotating Video with Open Educational Resources in a Flipped Classroom Scenario

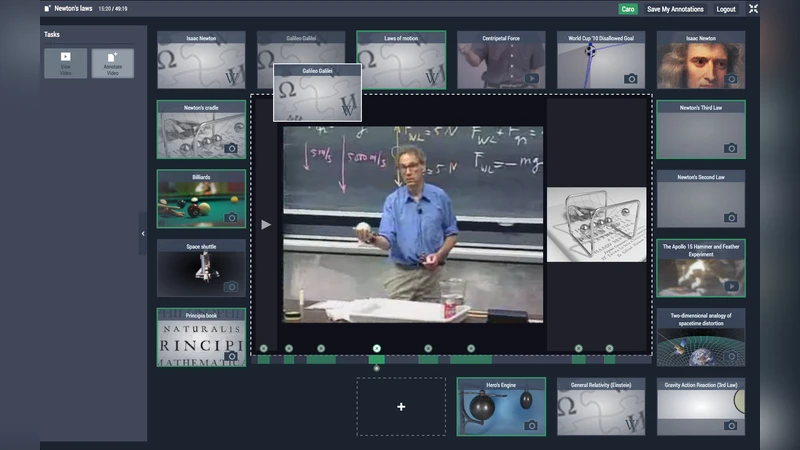

A wealth of Open Educational Resources is now available. Beyond the first and evident problem of finding them, the issue of articulating a set of resources arises. With audiovisual resources, one way to articulate them is to annotate a video with additional resources linked to specific fragments. Annotating a video is a complex task, and in a pedagogical context, intermediary activities should be proposed to mitigate this complexity. In this paper, we describe a tool dedicated to supporting video annotation activities. It aims to improve learner engagement by making students more active when watching videos. To this end, it offers a progressive annotation process, first guided through predefined resources, then freer, to accompany users in the practice of annotating videos.

💡 Research Summary

The paper addresses the challenge of effectively integrating the growing pool of Open Educational Resources (OER) into flipped‑classroom video learning. While locating OER is no longer a primary obstacle, educators now need systematic ways to bind specific resources to precise moments within instructional videos. The authors propose a web‑based tool that supports a two‑stage video annotation workflow designed to lower the cognitive load of annotation and to promote active learning.

In the first “guided” stage, instructors pre‑select OER (texts, images, external videos, etc.) and link them to defined timestamps on the video timeline. When a learner watches the video, the associated resource automatically appears as a pop‑up, providing immediate contextual support. This scaffolding helps learners form meaningful connections between the video content and external knowledge, reducing the effort required to generate their own annotations.
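The guided stage can be pictured as a lookup from playback time to the resources linked to that moment. The following is a minimal sketch, not the authors' implementation: the `GuidedLink` shape, the `activeLinks` function, and the example URLs are all assumptions made for illustration.

```typescript
// Hypothetical shape of an instructor-defined link between an OER and a video fragment.
interface GuidedLink {
  resourceUrl: string; // location of the resource (illustrative URL)
  title: string;       // label shown in the pop-up
  start: number;       // fragment start, in seconds
  end: number;         // fragment end, in seconds (exclusive)
}

// Return every link whose fragment contains the current playback time.
function activeLinks(links: GuidedLink[], currentTime: number): GuidedLink[] {
  return links.filter((l) => currentTime >= l.start && currentTime < l.end);
}

// Made-up example data: two overlapping fragments.
const links: GuidedLink[] = [
  { resourceUrl: "https://example.org/oer/intro", title: "Intro reading", start: 0, end: 30 },
  { resourceUrl: "https://example.org/oer/diagram", title: "Diagram", start: 20, end: 45 },
];

// At 25 seconds both fragments are active.
const shown = activeLinks(links, 25).map((l) => l.title);
```

In a browser, a function like this could be called from the HTML5 video element's `timeupdate` event with `video.currentTime` as the second argument; how the pop-up itself is rendered is left open here.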

The second “free” stage removes the scaffolding, allowing learners to add their own notes, questions, hyperlinks, and multimedia tags to any segment of the video. The system automatically extracts keywords, suggests tags, and visualizes relationships among annotations using an interactive network graph built with D3.js. A collaborative module lets peers comment on each other’s annotations, fostering social learning and peer feedback.
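One plausible way to derive the relationships shown in such a graph is to connect annotations that share at least one tag. The sketch below assumes this rule; the summary does not say how the tool actually computes relationships, and `TaggedAnnotation`, `GraphLink`, and `buildLinks` are hypothetical names.

```typescript
// Hypothetical minimal annotation record: an identifier plus its tags.
interface TaggedAnnotation {
  id: string;
  tags: string[];
}

// An edge between two annotations, carrying the tags they have in common.
interface GraphLink {
  source: string;
  target: string;
  sharedTags: string[];
}

// Connect every pair of annotations that shares at least one tag.
function buildLinks(annotations: TaggedAnnotation[]): GraphLink[] {
  const out: GraphLink[] = [];
  for (let i = 0; i < annotations.length; i++) {
    for (let j = i + 1; j < annotations.length; j++) {
      const a = annotations[i];
      const b = annotations[j];
      const tagsOfB = new Set(b.tags);
      const shared = a.tags.filter((t) => tagsOfB.has(t));
      if (shared.length > 0) {
        out.push({ source: a.id, target: b.id, sharedTags: shared });
      }
    }
  }
  return out;
}

// Made-up example: a1 and a2 share the tag "energy"; a3 is unrelated.
const graphLinks = buildLinks([
  { id: "a1", tags: ["energy", "heat"] },
  { id: "a2", tags: ["energy"] },
  { id: "a3", tags: ["optics"] },
]);
```

The `source`/`target` naming follows the convention used by D3's force-layout links, so a structure like this could be handed to `d3.forceLink` with an `id` accessor. The pairwise comparison is quadratic in the number of annotations, which is acceptable for the annotations of a single video.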

Technically, the tool leverages HTML5 video APIs and a JavaScript drag‑and‑drop interface for real‑time annotation insertion. Annotations are stored as JSON objects on a backend server and accessed via RESTful endpoints. The architecture supports extensibility for future AI‑driven annotation suggestions and mobile‑friendly adaptations.
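To make the storage format concrete, here is a sketch of what one annotation might look like as a JSON object, with a small validating parser. The field names are assumptions chosen for illustration; the summary does not give the tool's actual schema or endpoint paths.

```typescript
// Hypothetical annotation record; every field name here is an assumption.
interface Annotation {
  id: string;
  videoId: string;
  start: number; // fragment start, in seconds
  end: number;   // fragment end, in seconds
  body: string;  // note text, question, or URL
  author: string;
}

// Parse a JSON string and check that it has the expected fields and a sane time range.
function parseAnnotation(json: string): Annotation {
  const raw = JSON.parse(json) as Record<string, unknown>;
  for (const key of ["id", "videoId", "body", "author"]) {
    if (typeof raw[key] !== "string") {
      throw new Error(`Missing or invalid field: ${key}`);
    }
  }
  const start = raw["start"];
  const end = raw["end"];
  if (typeof start !== "number" || typeof end !== "number" || start > end) {
    throw new Error("Invalid time range");
  }
  return raw as unknown as Annotation;
}

// Made-up example record, serialized and parsed back.
const sample: Annotation = {
  id: "ann-1",
  videoId: "lecture-03",
  start: 12.5,
  end: 40,
  body: "See the linked reading on this definition.",
  author: "student-17",
};
const roundTripped = parseAnnotation(JSON.stringify(sample));
```

A client could send `JSON.stringify(sample)` as the body of a `POST` request to the backend and run `parseAnnotation` on the responses it reads back; validating at the boundary keeps malformed records out of the timeline view.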

To evaluate the approach, the authors conducted a two‑week field study with 48 university students divided into a control group (traditional video watching) and an experimental group (using the annotation tool). Measures included pre‑ and post‑knowledge tests, motivation surveys, retention tests after four weeks, and detailed log analysis of annotation behavior. Results showed statistically significant improvements for the experimental group: motivation scores increased by an average of 1.3 points, post‑test scores rose by 12 %, and retention scores were 9 % higher. Correlational analysis revealed a strong positive relationship (r = 0.68) between the quantity of guided OER links and the frequency of annotation creation, while the depth and length of free‑form annotations correlated with individual learning gains.
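The reported r is presumably a Pearson correlation coefficient, although the summary does not name the statistic. As a reminder of how it is computed, here is a minimal sketch; the `pearson` function and the numbers in the example are illustrative and are not the study's data.

```typescript
// Pearson correlation coefficient between two equally long samples.
function pearson(xs: number[], ys: number[]): number {
  if (xs.length !== ys.length || xs.length < 2) {
    throw new Error("Samples must have the same length (at least 2)");
  }
  const n = xs.length;
  const meanX = xs.reduce((s, x) => s + x, 0) / n;
  const meanY = ys.reduce((s, y) => s + y, 0) / n;

  let cov = 0;  // sum of products of deviations
  let varX = 0; // sum of squared deviations of xs
  let varY = 0; // sum of squared deviations of ys
  for (let i = 0; i < n; i++) {
    const dx = xs[i] - meanX;
    const dy = ys[i] - meanY;
    cov += dx * dy;
    varX += dx * dx;
    varY += dy * dy;
  }
  return cov / Math.sqrt(varX * varY);
}

// Made-up values: guided links offered vs. annotations created, per learner.
const guidedLinksOffered = [1, 2, 3, 4, 5];
const annotationsCreated = [2, 4, 5, 4, 7];
const r = pearson(guidedLinksOffered, annotationsCreated);
```

A value near 1 indicates that the two quantities rise together, a value near -1 that one falls as the other rises. Note that the function returns `NaN` if either sample is constant, since its variance is then zero.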

The discussion highlights the importance of high‑quality, well‑timed OER in the guided stage, the need for mechanisms to manage annotation diversity in the free stage, and the potential of the collaborative feedback loop to further enhance learning outcomes. Limitations include the short duration of the study, limited mobile usability, and the modest sample size. Future work will explore AI‑based automatic annotation recommendations, multimodal OER integration, and scaling the system for massive open online courses (MOOCs).

In conclusion, the study demonstrates that a progressive, scaffolded video annotation workflow can effectively reduce the cognitive burden of annotation, strengthen the linkage between video content and open resources, and measurably improve learner engagement and achievement in flipped‑classroom settings.
