How to use Big Data technologies to optimize operations in the Upstream Petroleum Industry

“Big Data is the oil of the new economy” has been the most famous quotation of the last three years; the World Economic Forum even adopted it in 2011. In fact, Big Data is like crude: it is valuable, but unrefined it cannot be used. It must be broken down and analyzed before it yields value. But what about the Big Data generated by the petroleum industry, and particularly by its upstream segment? Upstream is no stranger to Big Data. Understanding and leveraging data in the upstream segment enables firms to remain competitive throughout planning, exploration, delineation, and field development. Oil & Gas companies run advanced geophysical modeling and simulation to support operations, and 2D, 3D, and 4D seismic surveys generate significant volumes of data during the exploration phases. Companies also closely monitor the performance of their operational assets, using thousands of sensors in subsurface wells and surface facilities to provide continuous, real-time monitoring of assets and environmental conditions. Unfortunately, this information comes in varied and increasingly complex forms, which makes it challenging to collect, interpret, and leverage the disparate data. Big Data technologies integrate common and disparate data sets to deliver the right information at the right time to the right decision-maker. These capabilities help firms act on large volumes of data, shifting decision-making from reactive to proactive and optimizing all phases of exploration, development, and production. Furthermore, Big Data offers multiple opportunities to ensure safer, more responsible operations. Another invaluable benefit is shared learning. The aim of this paper is to explain how to use Big Data technologies to optimize operations: how can Big Data help experts make decisions that lead to the desired outcomes?

💡 Research Summary

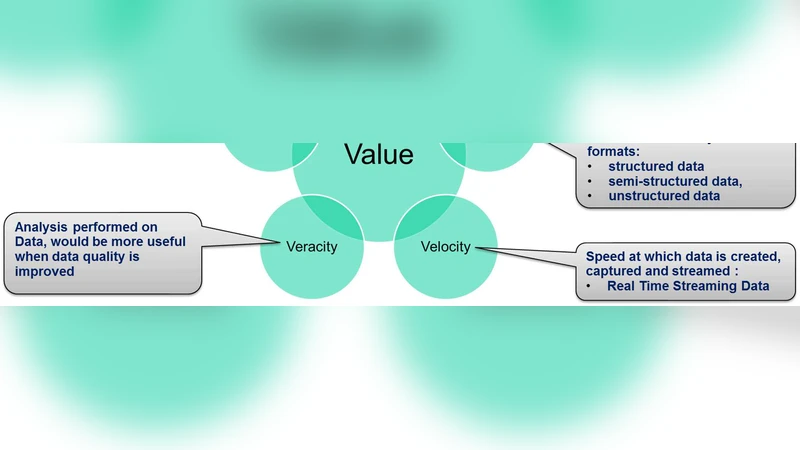

The paper presents a comprehensive framework for leveraging Big Data technologies to optimize operations throughout the upstream segment of the petroleum industry, covering exploration, drilling, and production phases. It begins by framing data as the “oil of the new economy,” emphasizing that raw data must be refined before it can generate value. The authors note that upstream activities already generate massive, heterogeneous data streams (2‑D, 3‑D, and 4‑D seismic surveys, well logs, real‑time pressure and temperature sensor readings, satellite imagery, and environmental monitoring data), yet traditional relational databases and siloed workflows cannot handle the volume, velocity, and variety of these inputs.
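To make the integration problem concrete, the sketch below maps pressure readings from two differently shaped feeds into one record type with a single canonical unit. The feed layouts (`from_scada`, `from_downhole_gauge`), the field names, and the choice of kPa as the canonical unit are hypothetical. Only the conversion factors are standard.

```python
"""Sketch: harmonize pressure readings from disparate feeds into one record shape.

Hypothetical: feed layouts, field names, and kPa as the canonical unit.
"""
from dataclasses import dataclass

# Conversion factors to kilopascals: 1 bar = 100 kPa exactly; 1 psi ~ 6.894757293168 kPa.
TO_KPA = {"kPa": 1.0, "bar": 100.0, "psi": 6.894757293168}


@dataclass(frozen=True)
class PressureReading:
    well_id: str
    timestamp: str  # ISO 8601 string
    pressure_kpa: float


def to_kpa(value: float, unit: str) -> float:
    """Convert a pressure value to kPa; refuse unknown units instead of guessing."""
    try:
        return value * TO_KPA[unit]
    except KeyError:
        raise ValueError(f"unsupported pressure unit: {unit!r}") from None


def from_scada(row: dict) -> PressureReading:
    # Hypothetical SCADA export: {"tag", "ts", "val", "eng_unit"}
    return PressureReading(row["tag"], row["ts"], to_kpa(row["val"], row["eng_unit"]))


def from_downhole_gauge(row: dict) -> PressureReading:
    # Hypothetical downhole gauge feed that always reports psi.
    return PressureReading(row["well"], row["time"], to_kpa(row["p_psi"], "psi"))


if __name__ == "__main__":
    mixed = [
        from_scada({"tag": "W-01", "ts": "2024-01-01T00:00:00Z", "val": 250.0, "eng_unit": "bar"}),
        from_downhole_gauge({"well": "W-02", "time": "2024-01-01T00:00:00Z", "p_psi": 3000.0}),
    ]
    for reading in mixed:
        print(reading)
```

Once every feed lands in the same record type, downstream joins and analytics no longer need to know which source a reading came from.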

The proposed architecture is built around a modern data‑lake paradigm. Data ingestion is performed with Apache NiFi and Kafka, providing reliable, lossless streaming from field devices to the cloud. Schema enforcement and data quality checks are managed through Avro and a Schema Registry, while Apache Atlas records metadata and lineage to support governance and compliance. Raw data are stored cost‑effectively in object storage (e.g., AWS S3, Azure Blob) or HDFS, whereas curated data are persisted in columnar formats such as Parquet/ORC and in NoSQL stores (HBase, Cassandra) for low‑latency access.
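The schema enforcement step can be sketched in plain Python. In the described architecture, Avro and a Schema Registry perform this role. Here a dictionary stands in for the schema, and `SENSOR_SCHEMA`, its fields, and its bounds are all hypothetical. Records that fail validation go to a dead-letter list along with the reasons, rather than being silently dropped.

```python
"""Sketch: schema and range checks at ingestion, with dead-letter routing.

A plain dict stands in for an Avro schema; SENSOR_SCHEMA is hypothetical.
"""
from __future__ import annotations

from typing import Any

# Hypothetical schema: field -> (accepted types, (min, max) bounds or None)
SENSOR_SCHEMA = {
    "well_id": ((str,), None),
    "timestamp": ((str,), None),
    "pressure_kpa": ((int, float), (0.0, 200_000.0)),
    "temperature_c": ((int, float), (-60.0, 400.0)),
}


def validate(record: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the record is accepted."""
    problems: list[str] = []
    for field, (types, bounds) in SENSOR_SCHEMA.items():
        if field not in record:
            problems.append(f"missing field {field}")
            continue
        value = record[field]
        # bool is a subclass of int, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, types):
            problems.append(f"{field}: unexpected type {type(value).__name__}")
            continue
        if bounds is not None and not bounds[0] <= value <= bounds[1]:
            problems.append(f"{field}: {value} outside {bounds}")
    return problems


def route(records: list[dict[str, Any]]):
    """Split a batch into curated records and (record, reasons) dead-letter entries."""
    curated, dead_letter = [], []
    for rec in records:
        issues = validate(rec)
        if issues:
            dead_letter.append((rec, issues))
        else:
            curated.append(rec)
    return curated, dead_letter
```

Keeping the rejection reasons with each dead-lettered record lets data engineers fix the upstream source rather than guess why the data went missing.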

For analytics, the authors combine batch processing (Apache Spark) with real‑time stream processing (Apache Flink, Spark Structured Streaming). Seismic image interpretation and geological structure prediction are accelerated using GPU‑enabled Spark MLlib and deep‑learning frameworks (TensorFlow, PyTorch). Predictive models address drilling risk, production forecasting, and equipment failure detection. Model serving is containerized on Kubernetes using KFServing or Seldon Core, exposing REST/gRPC endpoints for downstream applications and supporting A/B testing and automated rollback.
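Below is a minimal sketch of the kind of windowed logic a Flink or Spark Structured Streaming job might apply to one well's pressure stream. It uses a rolling z-score rule. This rule is an illustrative stand-in, not the paper's predictive model, and the window size and threshold are assumptions.

```python
"""Sketch: sliding-window anomaly flagging on a single pressure stream.

The rolling z-score rule, window size, and threshold are illustrative assumptions.
"""
from collections import deque
from statistics import mean, pstdev
from typing import Iterable, Iterator, Tuple


def flag_anomalies(
    readings: Iterable[float], window: int = 20, threshold: float = 3.0
) -> Iterator[Tuple[int, float, float]]:
    """Yield (index, value, z) for readings more than `threshold` standard deviations
    from the mean of the previous `window` readings; the first `window` readings
    only fill the window."""
    history: deque = deque(maxlen=window)
    for i, value in enumerate(readings):
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            # A perfectly flat window has no spread, so no z-score is defined.
            if sigma > 0:
                z = (value - mu) / sigma
                if abs(z) > threshold:
                    yield i, value, z
        # Flagged values also enter the window in this simple version.
        history.append(value)
```

In a streaming engine, the same logic would run once per key (for example per well identifier) over keyed state. A production rule might also keep flagged values out of the window so that one spike does not inflate the baseline.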

Decision support is delivered through integrated Business Intelligence tools (Tableau, Power BI) and custom dashboards that surface key performance indicators, geological models, and equipment health in real time. Alerts are automatically routed to collaboration and IT service management platforms (Slack, Microsoft Teams, ServiceNow) to enable rapid response. Scenario planning and what‑if analysis are incorporated via simulation engines, allowing operators to evaluate the impact of alternative drilling strategies or production schedules.
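A very simple forecasting model is enough to illustrate what-if analysis. The sketch below compares two hypothetical production schedules using an exponential (Arps) decline curve, q(t) = q_i · exp(-D·t). For this curve, cumulative production to time t is (q_i / D)(1 - exp(-D·t)). This is a swapped-in textbook model, not the simulation engine the paper refers to, and all rates and decline constants are made up.

```python
"""Sketch: what-if comparison of production schedules with exponential (Arps) decline.

Rates and decline constants are hypothetical; the model is a textbook stand-in.
"""
import math


def rate(qi: float, d: float, t: float) -> float:
    """Rate at time t (years) for initial rate qi and nominal decline d (1/year)."""
    return qi * math.exp(-d * t)


def cumulative(qi: float, d: float, t: float) -> float:
    """Cumulative production from 0 to t: the integral of qi * exp(-d * s) ds."""
    if d <= 0:
        raise ValueError("decline constant d must be positive")
    return qi / d * (1.0 - math.exp(-d * t))


def compare(scenarios: dict, horizon_years: float) -> dict:
    """Cumulative production per scenario over a common horizon."""
    return {name: cumulative(qi, d, horizon_years) for name, (qi, d) in scenarios.items()}


if __name__ == "__main__":
    # Hypothetical scenarios: (initial rate in bbl/year, nominal decline per year)
    scenarios = {
        "aggressive": (365_000.0, 0.35),  # higher initial rate, faster decline
        "managed": (300_000.0, 0.20),     # choked back, slower decline
    }
    for name, total in compare(scenarios, horizon_years=5.0).items():
        print(f"{name}: {total:,.0f} bbl over 5 years")
```

Even this toy model shows why scenarios must be compared over a stated horizon. A higher initial rate does not guarantee more cumulative production if it comes with a steeper decline.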

Security, governance, and cost optimization are addressed in depth. Data in transit and at rest are encrypted with TLS and server‑side encryption, respectively; fine‑grained access control is enforced through IAM and RBAC policies. Audit logs and lineage information help satisfy GDPR, CCPA, and industry‑specific regulations. Cost efficiency is achieved by exploiting cloud spot instances, serverless functions (AWS Lambda, Azure Functions), and auto‑scaling clusters, while data compression, partitioning, and caching further reduce storage and compute expenses.
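The following sketch covers partitioning and compression. The helper builds Hive-style partition keys (`well_id=.../date=.../`), which let query engines skip any file a filter excludes. gzip stands in for the codecs (such as Snappy or ZSTD) that Parquet or ORC would apply internally. The bucket name and partition columns are hypothetical.

```python
"""Sketch: Hive-style partition paths and compression for curated sensor data.

Bucket name and partition columns are hypothetical; gzip stands in for columnar codecs.
"""
import csv
import gzip
import io
from datetime import datetime


def partition_path(base: str, well_id: str, ts: datetime) -> str:
    """Build a key such as base/well_id=W-01/date=2024-01-01/ for one record."""
    return f"{base.rstrip('/')}/well_id={well_id}/date={ts:%Y-%m-%d}/"


def compress_csv(rows: list) -> tuple:
    """Serialize rows to CSV, gzip them, and return (raw_size, compressed_size) in bytes."""
    buf = io.StringIO()
    csv.writer(buf).writerows(rows)
    raw = buf.getvalue().encode("utf-8")
    return len(raw), len(gzip.compress(raw))


if __name__ == "__main__":
    print(partition_path("s3://hypothetical-bucket/curated", "W-01", datetime(2024, 1, 1, 6, 30)))
    print(compress_csv([["W-01", "2024-01-01T00:00:00Z", 25000.0, 85.0]] * 1000))
```

With this layout, a query filtered on one well and one day reads only that partition's files. Repetitive sensor data usually compresses well, which lowers both storage and scan costs.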

The paper quantifies the benefits of this integrated approach. In exploration, seismic processing time is reduced by more than 30 %, accelerating decision cycles. During drilling, early detection of abnormal pressure or temperature patterns cuts incident risk by roughly 40 %. In production, predictive maintenance and real‑time monitoring raise equipment availability by over 5 % and lower operating expenditures by at least 10 % annually. Moreover, the platform fosters a culture of shared learning and data‑driven decision‑making across the organization, contributing to safer, more responsible, and environmentally sustainable operations.
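Availability gains of this kind are usually reasoned about with the steady-state formula A = MTBF / (MTBF + MTTR), where MTBF is the mean time between failures and MTTR is the mean time to repair. The sketch below applies the formula to hypothetical figures. It shows that availability can rise through fewer failures (longer MTBF), faster repairs (shorter MTTR), or both. The summary does not say whether its 5 % figure is absolute or relative, so the example reports the change in percentage points.

```python
"""Sketch: steady-state availability from MTBF and MTTR (hypothetical figures)."""


def availability(mtbf_h: float, mttr_h: float) -> float:
    """Fraction of time the equipment is available, between 0 and 1."""
    if mtbf_h <= 0 or mttr_h < 0:
        raise ValueError("MTBF must be positive and MTTR non-negative")
    return mtbf_h / (mtbf_h + mttr_h)


if __name__ == "__main__":
    before = availability(mtbf_h=900.0, mttr_h=100.0)  # hypothetical baseline
    after = availability(mtbf_h=1200.0, mttr_h=60.0)   # hypothetical, with predictive maintenance
    print(f"before {before:.1%}, after {after:.1%}, change {100 * (after - before):.1f} points")
```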

Finally, the authors outline future directions, including tighter integration of AI‑driven autonomous decision loops, digital‑twin simulations of reservoirs and facilities, and edge‑computing deployments that process sensor data directly at the wellhead. These advancements promise to further accelerate the digital transformation of upstream petroleum activities, turning raw data into refined insight and competitive advantage.
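Edge processing at the wellhead often starts with report-by-exception filtering. The sketch below forwards a reading only when it moves more than a deadband away from the last transmitted value, or when a heartbeat interval has elapsed, so that quiet periods still produce periodic updates. The deadband and heartbeat values are assumptions to be tuned per sensor.

```python
"""Sketch: report-by-exception (deadband) filtering on an edge device.

The deadband and heartbeat values are assumptions, to be tuned per sensor.
"""
from typing import Iterable, Iterator, Tuple


def deadband(
    samples: Iterable[Tuple[float, float]], band: float, heartbeat_s: float
) -> Iterator[Tuple[float, float]]:
    """Yield the (timestamp_s, value) samples worth transmitting upstream."""
    last_t = last_v = None
    for t, v in samples:
        if (
            last_v is None                # always send the first sample
            or abs(v - last_v) > band     # value moved outside the deadband
            or t - last_t >= heartbeat_s  # periodic keep-alive update
        ):
            last_t, last_v = t, v
            yield t, v


if __name__ == "__main__":
    stream = [(0, 100.0), (1, 100.2), (2, 100.4), (3, 101.5), (4, 101.6), (70, 101.6)]
    print(list(deadband(stream, band=1.0, heartbeat_s=60.0)))
```

Only significant changes and heartbeats cross the uplink. The wellhead device still sees every sample, so it can act locally on fast events.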