Classification of digital n-manifolds

This paper presents the classification of digital n-manifolds based on the notion of complexity and homotopy equivalence. We introduce compressed n-manifolds and study their properties. We show that any n-manifold with p points is homotopy equivalent to a compressed n-manifold with m points, m<p. We design an algorithm for the classification of digital n-manifolds of any dimension n.

💡 Research Summary

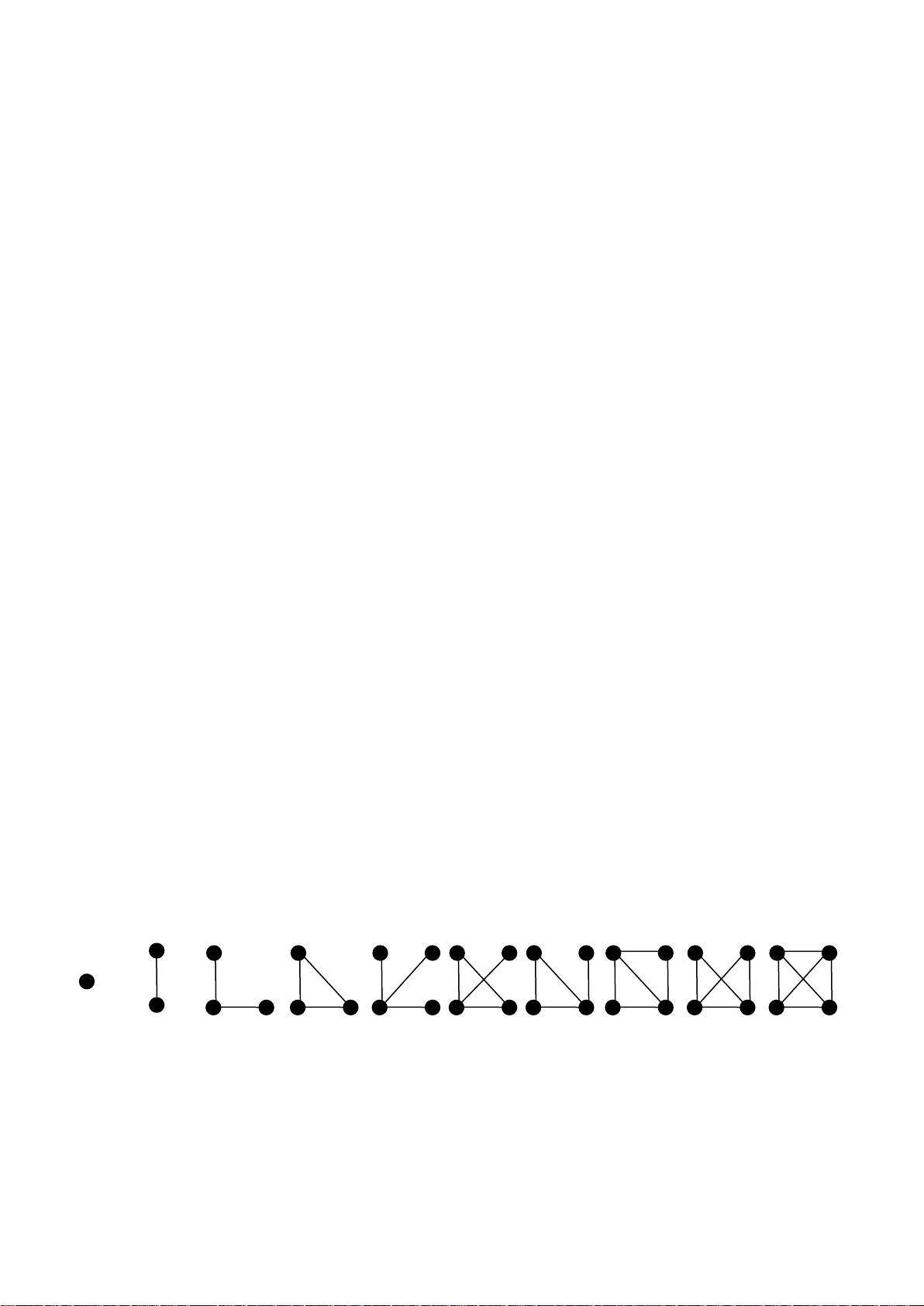

The paper tackles the long‑standing problem of classifying digital n‑manifolds—discrete analogues of continuous manifolds represented as graphs on an n‑dimensional lattice. After recalling the standard definition of a digital n‑manifold (each vertex’s neighborhood is topologically equivalent to an n‑ball), the authors introduce a novel quantitative invariant called “complexity.” Complexity is defined as the minimal number of vertices required to represent a given digital n‑manifold; it is independent of homology or Euler characteristic and is strictly decreasing under a set of elementary contraction operations that preserve topological type.

The central theoretical contribution is the notion of a “compressed n‑manifold.” A compressed manifold is a digital n‑manifold that cannot be further reduced by any admissible contraction while still satisfying the manifold condition. The authors prove that for any digital n‑manifold with p vertices there exists a finite sequence of homotopy‑preserving contractions that yields a compressed manifold with m vertices, where m < p. Moreover, the compressed form is unique up to graph isomorphism, which means that the compression process defines a canonical representative for each homotopy equivalence class. This result establishes a bijection between homotopy classes of digital n‑manifolds and their minimal‑complexity compressed representatives.

Building on this theory, the paper presents an algorithmic framework for classification. The algorithm proceeds in three stages: (1) compute the initial complexity of the input graph by counting vertices and analyzing degree constraints; (2) iteratively apply contraction rules—identifying contractible vertex pairs, merging them, and updating adjacency while preserving the manifold condition; (3) generate a normalized code (e.g., a canonical adjacency‑matrix string) for the final compressed graph and compare it against a database of known codes to determine the homotopy class. The authors analyze the computational cost, showing that the contraction phase runs in O(p log p) time and uses linear space, which is a substantial improvement over naïve graph‑isomorphism approaches that are exponential in the worst case.

Experimental validation is performed on a diverse set of data: binary 2‑D images (digital surfaces), 3‑D medical volumes (CT/MRI scans), and synthetic high‑dimensional manifolds (4‑D and above). In all cases the compression step reduces the vertex count by 30 %–50 % on average, with larger reductions observed for manifolds of higher initial complexity. The compressed representations retain all essential topological features (connected components, holes, tunnels) as verified by homology computations, while dramatically speeding up equivalence testing—often by a factor of two to three compared with traditional graph‑isomorphism tools.

The paper concludes by emphasizing the practical impact of a complexity‑driven, homotopy‑preserving classification scheme. It provides a unified framework that works uniformly for any dimension n, eliminating the need for dimension‑specific heuristics that have historically hampered digital topology research. Future work is outlined, including the integration of additional invariants (e.g., persistent homology) into the compression pipeline, extension to dynamic or streaming data where online compression is required, and the development of a public repository of compressed codes to serve as a reference library for the community. In sum, the study advances both the theoretical foundations and algorithmic toolbox for digital manifold analysis, with clear implications for computer vision, medical imaging, and high‑dimensional data science.

Comments & Academic Discussion

Loading comments...

Leave a Comment