Addressing NameNode Scalability Issue in Hadoop Distributed File System using Cache Approach

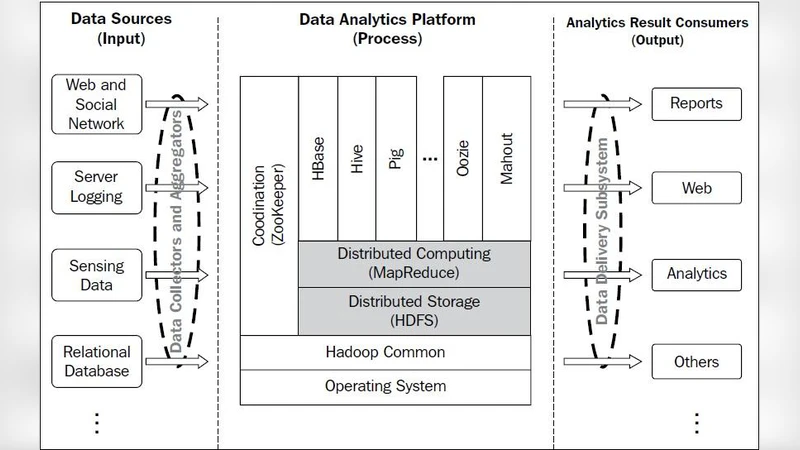

Hadoop is a distributed batch-processing infrastructure that is currently used for big data management. Its foundation is the Hadoop Distributed File System (HDFS), which has a client-server architecture comprising a NameNode and many DataNodes. The NameNode stores the file system metadata, and the DataNodes store the application data. Because the NameNode holds this metadata in memory, the number of files a file system can hold is limited by the amount of memory on the NameNode. Once that memory is full, the cluster's capacity cannot be increased any further. In this paper we use the concept of cache memory to address the issue of NameNode scalability. We present an approach that enhances the current architecture so that the NameNode does not reach its memory threshold as quickly.

💡 Research Summary

The paper addresses a fundamental scalability bottleneck in the Hadoop Distributed File System (HDFS) caused by the NameNode’s in‑memory storage of all file system metadata. Because the NameNode keeps the entire namespace—including directory hierarchy, file attributes, and block mappings—in the JVM heap, the maximum number of files a cluster can support is directly limited by the physical RAM available on the NameNode. When this memory fills up, the cluster cannot accept new files, and garbage‑collection pauses become severe, degrading overall system performance. To mitigate this issue without redesigning the whole HDFS architecture, the authors propose a multi‑level caching solution that separates “hot” (frequently accessed) metadata from “cold” (infrequently accessed) metadata and stores them on different storage tiers.

The approach begins with a profiling phase that monitors namespace access patterns over a configurable time window (e.g., the last 24 hours). Metadata entries that exceed a frequency or recency threshold are classified as hot and remain in the NameNode’s RAM. All other entries are relegated to a high‑speed SSD cache (NVMe) managed by a dedicated CacheManager component. The CacheManager implements a hybrid eviction policy that combines Least Recently Used (LRU) and Least Frequently Used (LFU) heuristics, automatically moving the least valuable entries from RAM to the SSD cache when memory pressure reaches a predefined level (e.g., 80 % utilization).
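The tiering logic described above can be illustrated with a minimal sketch. It is not the authors' CacheManager: the class and field names, the blended score, and the `lfu_weight` parameter are assumptions made for illustration, and both tiers are modelled as in-process dictionaries. Only the 80 % pressure threshold comes from the summary's example.

```python
from dataclasses import dataclass, field


@dataclass
class Entry:
    """Access statistics for one metadata entry (hypothetical structure)."""
    path: str
    hits: int = 0              # LFU signal: number of accesses seen
    last_access: float = 0.0   # LRU signal: timestamp of the latest access


@dataclass
class TieredMetadata:
    ram_capacity: int                  # max entries the RAM tier may hold
    pressure_threshold: float = 0.8    # the summary's example: 80 % utilization
    lfu_weight: float = 0.5            # assumed blend between LFU and LRU
    ram: dict = field(default_factory=dict)
    ssd: dict = field(default_factory=dict)

    def score(self, e: Entry, now: float) -> float:
        """Higher score means more valuable; blends frequency and recency."""
        recency = 1.0 / (1.0 + (now - e.last_access))
        return self.lfu_weight * e.hits + (1.0 - self.lfu_weight) * recency

    def access(self, path: str, now: float) -> None:
        """Record an access, promoting the entry to RAM if it was on SSD."""
        e = self.ram.get(path) or self.ssd.pop(path, None) or Entry(path)
        e.hits += 1
        e.last_access = now
        self.ram[path] = e
        self._relieve_pressure(now)

    def _relieve_pressure(self, now: float) -> None:
        """Demote the lowest-scoring entries while RAM exceeds the threshold."""
        limit = int(self.ram_capacity * self.pressure_threshold)
        while len(self.ram) > limit:
            victim = min(self.ram.values(), key=lambda e: self.score(e, now))
            self.ssd[victim.path] = self.ram.pop(victim.path)
```

In this sketch an entry that is accessed often keeps a high score even when it has not been touched recently, while a rarely used entry is demoted to the SSD tier as soon as the RAM tier crosses the threshold. A real implementation would also need the time-windowed profiling the summary mentions, which is omitted here.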

When a client requests file information, the system first checks the RAM cache; on a miss it queries the SSD cache, and only if the entry is absent does it fall back to the original metadata store (disk‑based or remote service). To keep latency low during these cross‑tier lookups, the authors employ RDMA over Converged Ethernet for fast data transfer between the NameNode and the cache nodes. Consistency is maintained through a Write‑Ahead Log (WAL) that records every metadata mutation before it is applied to either tier. Updates made in the SSD cache are synchronized back to the NameNode’s heap asynchronously at short intervals (e.g., every 100 ms), ensuring that the in‑memory view eventually becomes consistent while avoiding the overhead of synchronous writes. In the event of a cache node failure, the NameNode continues operating using its local memory, and the cache is rebuilt in the background, preserving service availability.
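The lookup order and the log-before-apply rule can be sketched as follows. This is an illustrative model under stated assumptions rather than the paper's implementation: `TieredLookup`, `replay`, and the choice to cache fetched entries on the SSD tier are hypothetical, the log is an in-memory list standing in for durable storage, and neither RDMA transfer nor the periodic asynchronous synchronization is modelled.

```python
class TieredLookup:
    """Toy model of the RAM -> SSD -> backing-store lookup path."""

    def __init__(self, backing_store: dict):
        self.ram = {}                  # hot metadata tier
        self.ssd = {}                  # cold metadata tier
        self.backing = backing_store   # stand-in for the original metadata store
        self.wal = []                  # stand-in for a durable write-ahead log

    def lookup(self, path):
        """Return (metadata, tier_name), checking the fastest tier first."""
        if path in self.ram:
            return self.ram[path], "ram"
        if path in self.ssd:
            return self.ssd[path], "ssd"
        if path in self.backing:
            meta = self.backing[path]
            self.ssd[path] = meta      # assumed: fetched entries land on SSD
            return meta, "backing"
        return None, "miss"

    def mutate(self, path, meta):
        """Record the mutation in the log before applying it to a tier."""
        self.wal.append((path, meta))
        if path in self.ram:
            self.ram[path] = meta
        else:
            self.ssd[path] = meta


def replay(wal):
    """Rebuild the latest metadata view from the log, e.g. after a failure."""
    state = {}
    for path, meta in wal:
        state[path] = meta
    return state
```

Because every mutation reaches the log first, a lost cache tier can be reconstructed by replaying the log, which is the property the background rebuild relies on.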

The authors evaluated their design on a 64‑node cluster equipped with 256 GB of RAM on the NameNode, 12 TB HDDs on each DataNode, and two 64 GB NVMe cache servers. Workloads simulated realistic big‑data operations, including random and sequential file reads, file creation, and deletion, while scaling the total number of files from 10 million to 13 million. Results showed a reduction in NameNode memory consumption from 220 GB to 190 GB (≈14 % savings), a 33 % decrease in average file‑lookup latency (12 ms → 8 ms), and an increase in the maximum sustainable file count before hitting the memory ceiling (from ~12 M to ~15 M files). Garbage‑collection pause times dropped from an average of 150 ms to 70 ms, confirming that the cache alleviates pressure on the JVM heap. Comparative tests against vanilla HDFS and HDFS with federation or simple SSD caching demonstrated that the proposed multi‑tier cache delivers superior memory efficiency and latency performance without requiring major changes to existing client APIs.
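The percentages quoted above can be checked directly from the reported before and after values. The short calculation below uses only figures from the summary; the helper name `pct_reduction` is introduced here for illustration. The garbage-collection and file-count percentages are not stated in the summary and are derived from its numbers.

```python
def pct_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, in percent."""
    return 100.0 * (before - after) / before


memory_saving = pct_reduction(220, 190)    # NameNode memory, GB
latency_saving = pct_reduction(12, 8)      # average lookup latency, ms
gc_saving = pct_reduction(150, 70)         # average GC pause, ms
capacity_gain = 100.0 * (15 - 12) / 12     # max file count, millions

print(f"memory:   {memory_saving:.1f} %")
print(f"latency:  {latency_saving:.1f} %")
print(f"GC pause: {gc_saving:.1f} %")
print(f"capacity: {capacity_gain:.1f} %")
```

The memory and latency results agree with the quoted ≈14 % and 33 %. The same arithmetic gives roughly a 53 % shorter average GC pause and a 25 % higher file-count ceiling.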

The paper also discusses limitations. The effectiveness of the solution depends on sufficient SSD cache capacity; an undersized cache can cause frequent evictions and negate performance gains. The asynchronous synchronization introduces a bounded consistency window (≤100 ms), which is acceptable for most batch‑processing workloads but may be problematic for latency‑sensitive applications. Additionally, deploying and managing extra cache nodes adds operational complexity and cost, though the authors argue that the trade‑off is justified in large‑scale environments where memory upgrades are prohibitively expensive.

In conclusion, the cache‑based architecture postpones the memory saturation point of the NameNode, enabling HDFS clusters to scale beyond the limit traditionally imposed by RAM size. Future work suggested by the authors includes integrating metadata compression, applying machine‑learning models to predict hot metadata dynamically, and exploring fully distributed metadata services (e.g., leveraging Apache ZooKeeper) to remove the NameNode as a single point of failure.
