Inequality and cumulative advantage in science careers: a case study of high-impact journals

Analyzing a large data set of publications drawn from the most competitive journals in the natural and social sciences, we show that research careers exhibit the broad distributions of individual achievement characteristic of systems in which cumulative advantage plays a key role. While most researchers are personally aware of the competition implicit in the publication process, little is known about the extent of inequality at the level of individual researchers. We analyzed both productivity and impact measures for a large set of researchers publishing in high-impact journals. For each researcher cohort we calculated Gini inequality coefficients, with average Gini values around 0.48 for total publications and 0.73 for total citations. For perspective, these values are well in excess of the inequality levels observed for personal income in developing countries. Investigating possible sources of this inequality, we identify two potential mechanisms, acting at the level of the individual, that may play defining roles in the emergence of the broad productivity and impact distributions found in science. First, we show that the average time interval between a researcher’s successive publications in top journals decreases with each subsequent publication. Second, after controlling for the time-dependent features of citation distributions, we compare the citation impact of subsequent publications within a researcher’s publication record. We find that as researchers continue to publish in top journals, their relative citation impact is more likely to show a decreasing trend with each subsequent publication. This pattern highlights the difficulty of repeatedly publishing high-impact research and the intriguing possibility that confirmation bias plays a role in the evaluation of scientific careers.

💡 Research Summary

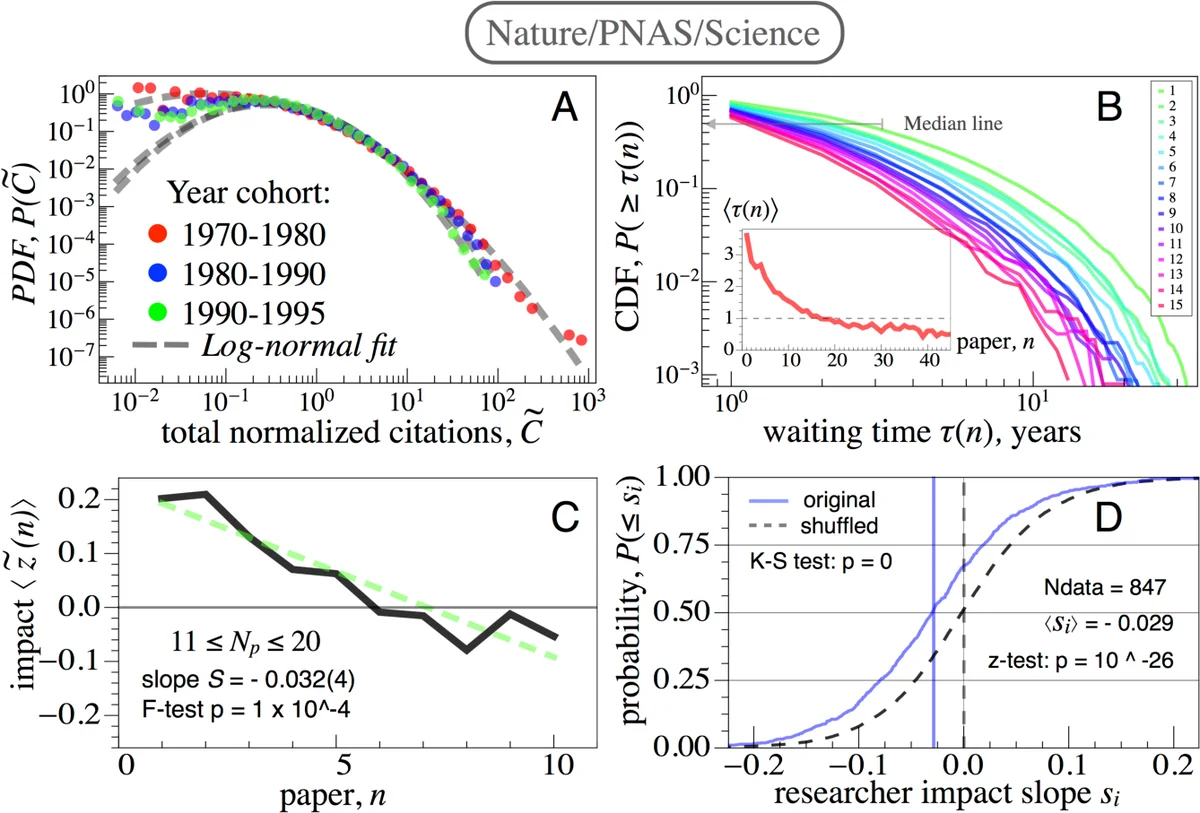

The paper investigates the extent and origins of inequality in scientific careers by focusing on researchers who publish in a set of high‑impact journals. Using a comprehensive dataset drawn from 23 top‑tier journals (including Nature, PNAS and Science; a suite of economics journals; and leading management science journals), the authors extracted 412,498 articles authored by 258,626 individual scientists spanning the period 1970‑2009. To avoid censoring and cohort biases, researchers were grouped into non‑overlapping cohorts based on the year of their first publication in the selected journal set, allowing longitudinal comparisons across comparable career stages.
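
The cohort construction can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' pipeline: the `(author_id, year)` record layout, the function name `assign_cohorts`, and the ten‑year cohort width starting in 1970 are all hypothetical choices made for the example.

```python
from collections import defaultdict


def assign_cohorts(records, start=1970, width=10):
    """Group authors into non-overlapping cohorts by first-publication year.

    records: iterable of (author_id, year) pairs, one per authored paper
             (hypothetical layout).
    start, width: hypothetical cohort boundaries, e.g. 1970-1979, 1980-1989.
    Returns {cohort start year: sorted list of author ids}.
    """
    first_year = {}
    for author, year in records:
        # Keep the earliest year in which each author appears in the journal set.
        if author not in first_year or year < first_year[author]:
            first_year[author] = year

    cohorts = defaultdict(list)
    for author, year in first_year.items():
        # Floor division maps every year to exactly one fixed-width window.
        cohort_start = start + ((year - start) // width) * width
        cohorts[cohort_start].append(author)
    return {k: sorted(v) for k, v in sorted(cohorts.items())}


records = [("a", 1972), ("a", 1985), ("b", 1981), ("c", 1979), ("c", 1975)]
print(assign_cohorts(records))  # {1970: ['a', 'c'], 1980: ['b']}
```

Because each author is placed by their first appearance only, later papers never move an author between cohorts, which is what keeps the cohorts non‑overlapping.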

The authors first quantified inequality in two key dimensions: productivity (total number of publications) and impact (total citations). Gini coefficients were calculated for each cohort, revealing average values of approximately 0.48 for publications and 0.73 for citations. These figures are markedly higher than income inequality measures observed in many developing economies. For the earliest cohort (first publication 1970‑1980), the Gini coefficient reached 0.83 in economics and 0.74 in the natural‑science set, with the top 1 % of scientists accounting for roughly 22‑26 % of all citations. Although a modest trend toward greater equality over time was noted, the overall distributions remained heavily skewed, indicating that a small elite captures a disproportionate share of scientific credit.
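
A minimal sketch of the two inequality measures follows, assuming a list of per‑author totals (publications or citations) for a single cohort. It uses the standard rank‑based formula for the Gini coefficient of sorted values; whether the authors applied any small‑sample correction is not stated here, and the helper names `gini` and `top_share` are hypothetical.

```python
def gini(values):
    """Gini coefficient of non-negative values (0 = perfect equality)."""
    x = sorted(values)
    n = len(x)
    total = sum(x)
    if n == 0 or total == 0:
        raise ValueError("need at least one positive value")
    # Rank-based formula on ascending values x_1 <= ... <= x_n:
    # G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n
    weighted = sum(rank * v for rank, v in enumerate(x, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


def top_share(values, percent=1):
    """Fraction of the total held by the top `percent` % of individuals."""
    x = sorted(values, reverse=True)
    n = len(x)
    k = max(1, -(-n * percent // 100))  # ceil(n * percent / 100), at least one person
    return sum(x[:k]) / sum(x)


citations = [0, 0, 0, 1]  # hypothetical per-author citation totals
print(gini(citations), top_share(citations))  # 0.75 1.0
```

In the usage example one author holds every citation, so `gini` returns 0.75, the largest value (n − 1)/n this estimator can produce for four people; equal totals give 0.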

Next, the study examined the temporal dynamics of publishing. For each author i, the waiting time τ_i(n) between the n‑th and (n + 1)-th paper in the high‑impact set was computed. The longest interval typically occurred between the first and second paper (average ≈3–4 years, depending on the journal family). As authors accrued more publications, the mean waiting time ⟨τ(n)⟩ declined sharply, reaching roughly half of the initial value by the tenth paper. This pattern suggests a cumulative‑advantage mechanism: early success appears to accelerate subsequent output, perhaps through increased visibility, better access to resources, or stronger collaborations.
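
The waiting‑time statistic can be computed as below. The input layout (a dict mapping a hypothetical author id to that author's publication times in years) and the function names are assumptions made for illustration.

```python
from collections import defaultdict


def waiting_times(pub_times):
    """[tau(1), tau(2), ...] with tau(n) = t(n + 1) - t(n) for one author."""
    t = sorted(pub_times)
    return [t[n] - t[n - 1] for n in range(1, len(t))]


def mean_waiting_times(authors):
    """<tau(n)> for each n, averaged over authors who have an (n + 1)-th paper.

    authors: {author_id: [publication times in years]} (hypothetical layout).
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    for times in authors.values():
        for n, tau in enumerate(waiting_times(times), start=1):
            sums[n] += tau
            counts[n] += 1
    return {n: sums[n] / counts[n] for n in sorted(sums)}


authors = {"a": [2000, 2004, 2006, 2007], "b": [1990, 1993, 1995]}
print(mean_waiting_times(authors))  # {1: 3.5, 2: 2.0, 3: 1.0}
```

Only authors with at least n + 1 papers contribute to ⟨τ(n)⟩, so the average at large n is taken over an increasingly select group of prolific authors; the sketch does not correct for this selection.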

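The third analytical strand addressed citation impact over the career trajectory. To make papers from different years comparable, raw citation counts were log‑transformed and centered by subtracting the mean log‑citation count of papers published in the same year, giving a year‑normalized z‑score:

z_i(n) = ln c_i(n) − ⟨ln c⟩_year,

where c_i(n) is the number of citations received by author i's n‑th paper in the set.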
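
The normalization step can be sketched as follows, following the formula z_i(n) = ln c_i(n) − ⟨ln c⟩_year and assuming papers arrive as hypothetical `(paper_id, year, citations)` tuples, with ⟨ln c⟩_year averaged over all papers in the data set from that year. Because ln 0 is undefined, uncited papers need special handling; the `offset` parameter (default 0, which reproduces the formula exactly) is an illustrative workaround, and `offset=1`, i.e. ln(c + 1), is a common convention rather than something stated here.

```python
import math
from collections import defaultdict


def year_normalized_scores(papers, offset=0.0):
    """z = ln(c + offset) - <ln(c + offset)>_year for every paper.

    papers: list of (paper_id, year, citations) tuples (hypothetical layout).
    offset=0 reproduces ln c exactly but raises ValueError for uncited papers;
    offset=1 gives the ln(c + 1) variant (an assumption, not from the summary).
    """
    logs_by_year = defaultdict(list)
    for _, year, c in papers:
        logs_by_year[year].append(math.log(c + offset))
    # Mean log-citation count of all papers published in the same year.
    mean_log = {y: sum(v) / len(v) for y, v in logs_by_year.items()}
    return {pid: math.log(c + offset) - mean_log[year] for pid, year, c in papers}


papers = [("p1", 2000, 10), ("p2", 2000, 1000), ("p3", 2001, 5)]
print(year_normalized_scores(papers))  # p1 ≈ -ln 10, p2 ≈ +ln 10, p3 = 0.0
```

Within each publication year the scores sum to zero, so a paper's z measures how far above or below a typical paper of its own year it sits on a log scale.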

To isolate within‑author trends, each author's mean z‑score across all of their papers was subtracted, yielding a relative impact trajectory z̃_i(n). The authors then estimated a slope s_i for each scientist by regressing z̃_i(n) on publication order n. Averaged across the cohort, z̃(n) displayed a clear negative trend with increasing n, and the distribution of slopes was shifted toward negative values. A random‑shuffling control, which randomizes the order of papers within each publication record and so destroys any temporal ordering, produced a slope distribution centered at zero, and a Kolmogorov‑Smirnov test confirmed that the empirical and shuffled distributions differ significantly. In other words, as researchers continue to publish in the same elite journals, the relative citation performance of their later papers tends to decline.
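
The within‑author trend test can be sketched in plain Python as below. Everything here is illustrative: the function names, the `min_papers` threshold, the fixed random seed, and the example trajectories are assumptions, and `ks_statistic` returns only the two‑sample KS distance D; a p‑value would come from a library routine such as `scipy.stats.ks_2samp`.

```python
import random
from bisect import bisect_right


def relative_trajectory(z):
    """z~(n) = z(n) minus the author's mean z, keeping publication order."""
    mean = sum(z) / len(z)
    return [v - mean for v in z]


def ols_slope(y):
    """Least-squares slope of y regressed on publication order n = 1..len(y)."""
    n = len(y)
    x_mean = (n + 1) / 2
    y_mean = sum(y) / n
    num = sum((x - x_mean) * (v - y_mean) for x, v in enumerate(y, start=1))
    den = sum((x - x_mean) ** 2 for x in range(1, n + 1))
    return num / den


def author_slopes(trajectories, shuffle=False, min_papers=2, seed=0):
    """Slope s_i per author; shuffle=True randomizes each author's paper order."""
    rng = random.Random(seed)
    slopes = []
    for z in trajectories:
        if len(z) < min_papers:
            continue  # a slope needs at least two papers (threshold is hypothetical)
        z = list(z)
        if shuffle:
            rng.shuffle(z)  # null model: same scores, temporal order destroyed
        slopes.append(ols_slope(relative_trajectory(z)))
    return slopes


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov distance: max gap between empirical CDFs."""
    a, b = sorted(a), sorted(b)
    return max(
        abs(bisect_right(a, v) / len(a) - bisect_right(b, v) / len(b))
        for v in a + b
    )


# Hypothetical z-score trajectories for three authors.
trajectories = [[1.2, 0.4, -0.1, -0.6], [0.8, 0.9, -0.3], [2.0, 1.1, 0.7, 0.2, -0.5]]
observed = author_slopes(trajectories)
shuffled = author_slopes(trajectories, shuffle=True)
print(observed, ks_statistic(observed, shuffled))
```

Subtracting the author's mean does not change the fitted slope, since adding a constant to every z value leaves a least‑squares slope unchanged; the centering matters when z̃(n) is averaged across authors, where it removes differences in each author's overall level of impact.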

The paper interprets these findings as evidence of two interacting processes. First, cumulative advantage speeds up the rate of publication after an initial breakthrough, reinforcing the elite status of early high‑impact authors. Second, the declining relative citation impact suggests that the prestige system yields diminishing returns: it becomes harder for each successive paper in the same journals to match the relative impact of the author's earlier work, possibly because novelty wanes, editorial bias favors established names, or confirmation bias rewards earlier successes. The authors argue that while cumulative advantage can open doors, it may also create a counter‑productive feedback loop in which early success is over‑valued as a predictor of future scientific contribution.

Policy implications are discussed. The pronounced inequality and the observed dynamics call for more nuanced evaluation metrics that account for career stage, field‑specific citation norms, and the stochastic nature of breakthrough research. Relying heavily on high‑impact journal counts may perpetuate a self‑reinforcing elite and obscure valuable contributions that appear in less‑prestigious venues. The authors suggest that institutions should consider mechanisms to mitigate confirmation bias, such as blind review processes, diversified citation benchmarks, and incentives for sustained, high‑quality work beyond the “first‑paper” effect.

In sum, the study provides a rigorous, data‑driven portrait of how scientific careers evolve within the most competitive publishing arenas, quantifying the magnitude of inequality, documenting the acceleration of publishing frequency, and revealing a systematic decline in relative citation impact. These insights deepen our understanding of the structural forces shaping scientific success and highlight the need for reforms that promote fairness and long‑term excellence in research evaluation.
