Metric recovery from directed unweighted graphs

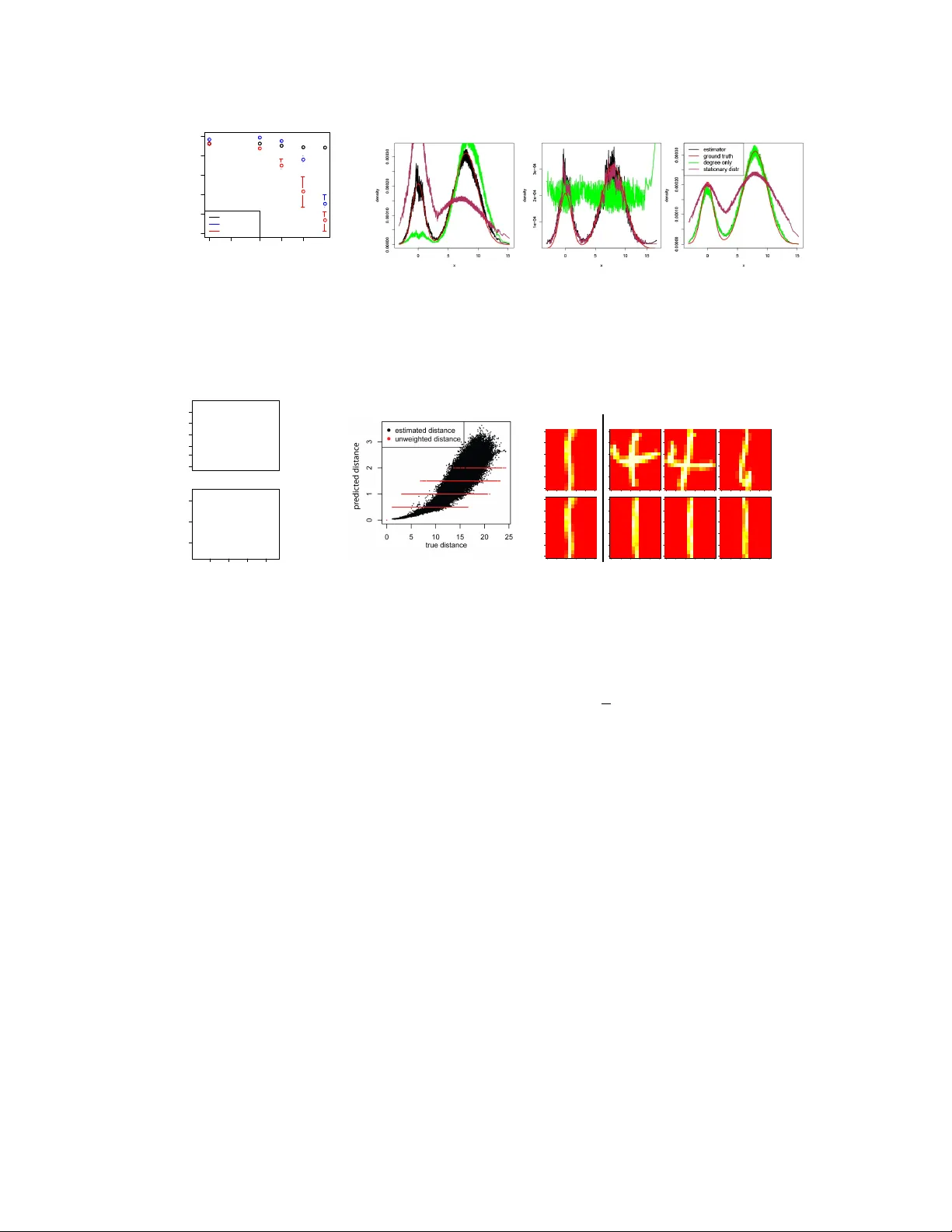

We analyze directed, unweighted graphs obtained from $x_i\in \mathbb{R}^d$ by connecting vertex $i$ to $j$ iff $|x_i - x_j| < \epsilon(x_i)$. Examples of such graphs include $k$-nearest neighbor graphs, where $\epsilon(x_i)$ varies from point to poin…

Authors: Tatsunori B. Hashimoto, Yi Sun, Tommi S. Jaakkola
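
The graph construction described in the abstract can be illustrated with a minimal sketch: vertex $i$ is connected to $j$ iff $|x_i - x_j| < \epsilon(x_i)$, so the edge set is generally not symmetric. The function names `epsilon_graph` and `knn_radii` are hypothetical and not from the paper. Taking $\epsilon(x_i)$ to be the distance from $x_i$ to its $(k+1)$-th nearest neighbor is an assumption made here so that the strict inequality yields exactly $k$ out-neighbors when there are no distance ties.

```python
import math


def epsilon_graph(points, radii):
    """Return the directed edge set {(i, j)} with |x_i - x_j| < radii[i].

    Illustrative helper (not from the paper). `points` is a sequence of
    equal-length coordinate tuples and `radii[i]` plays the role of epsilon(x_i).
    """
    n = len(points)
    edges = set()
    for i in range(n):
        for j in range(n):
            # Edge direction depends only on the radius at the source vertex i.
            if i != j and math.dist(points[i], points[j]) < radii[i]:
                edges.add((i, j))
    return edges


def knn_radii(points, k):
    """Per-point radii that reproduce a k-nearest-neighbor graph.

    Assumption: epsilon(x_i) is set to the distance to the (k+1)-th nearest
    neighbor, so the strict inequality keeps exactly the k closest points
    (provided there are no ties in the distances).
    """
    if len(points) < k + 2:
        raise ValueError("need at least k + 2 points")
    radii = []
    for i, p in enumerate(points):
        # Distances to all other points, nearest first.
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        radii.append(dists[k])  # index k is the (k+1)-th nearest neighbor
    return radii


# Four points on a line; with k = 1 each point links to its single nearest neighbor.
points = [(0.0,), (1.0,), (3.0,), (7.0,)]
radii = knn_radii(points, k=1)
edges = epsilon_graph(points, radii)
```

In this example the edge $(2, 1)$ is present while $(1, 2)$ is not, since the radius at $x_1$ is smaller than the radius at $x_2$. This is the sense in which a varying $\epsilon(x_i)$ makes the graph directed.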